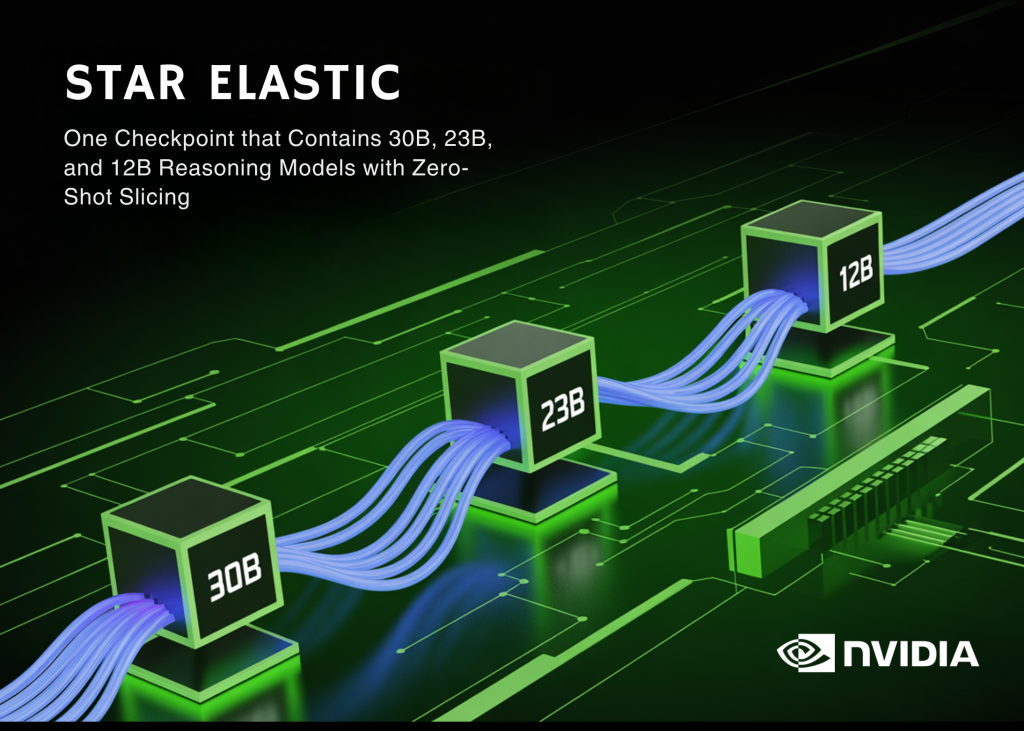

Training a family of large language models (LLMs) has always come with a painful multiplier: every model variant in the family, whether 8B, 30B, or 70B, typically requires its own full training run, its own storage, and its own deployment stack. For a dev team running inference at scale, this means multiplying compute costs by the number of model sizes they want to support. NVIDIA researchers are now proposing a different approach called Star Elastic.

Star Elastic is a post-training method that embeds multiple nested submodels, at different parameter budgets, inside a single parent reasoning model, using a single training run. Applied to Nemotron Nano v3 (a hybrid Mamba–Transformer–MoE model with 30B total parameters and 3.6B active parameters), Star Elastic produces 23B (2.8B active) and 12B (2.0B active) nested variants trained with roughly 160B tokens. All three variants live in a single checkpoint and can be extracted without any additional fine-tuning.

What Does “Nested” Actually Mean Here

If you haven’t encountered elastic or nested architectures before, the idea is this: instead of training three separate 30B, 23B, and 12B models, you train one model that contains the smaller ones as subsets of itself. The smaller submodels reuse the most important weights from the parent, identified through a process called importance estimation.

Star Elastic scores each model component (embedding channels, attention heads, Mamba SSM heads, MoE experts, and FFN channels) by how much it contributes to model accuracy. Components are then ranked and sorted, so smaller-budget submodels always use the highest-ranked contiguous subset of components from the larger model. This property is called nested weight-sharing.
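To make the nesting property concrete, here is a minimal Python sketch, assuming each component already has a scalar importance score. The function name `nested_subsets` and the scores are hypothetical and only illustrate the "sort once, take a prefix" idea; they are not taken from the paper.

```python
def nested_subsets(importance, budgets):
    """Return, for each budget, the indices of the top-`budget` components.

    Components are sorted once by descending importance, so every smaller
    budget selects a prefix of the ranking used by the larger budgets.
    """
    ranked = sorted(range(len(importance)), key=lambda i: importance[i], reverse=True)
    return {b: ranked[:b] for b in budgets}


# Hypothetical importance scores for 8 attention heads (illustrative values only).
scores = [0.12, 0.90, 0.33, 0.05, 0.71, 0.48, 0.27, 0.64]
subsets = nested_subsets(scores, budgets=[2, 4, 8])

print(subsets[2])  # heads kept by the smallest submodel
print(subsets[4])  # a superset of the line above
```

Because every budget reads from the same ranking, the heads kept at budget 2 are always contained in those kept at budget 4, which is what lets the smaller models share weights with the parent.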

The method supports nesting along multiple axes: the SSM (State Space Model) dimension, embedding channels, attention heads, Mamba heads and head channels, MoE expert count, and FFN intermediate dimension. For MoE layers specifically, Star Elastic uses Router-Weighted Expert Activation Pruning (REAP), which ranks experts by both routing gate values and expert output magnitudes. This is a more principled signal than naive frequency-based pruning, which ignores how much each expert actually contributes to the layer output.
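The difference between the two signals can be sketched in a few lines of Python. This is one plausible reading of "gate value times output magnitude", averaged over the tokens routed to each expert; the function names and the toy records are assumptions, not the paper's exact formulation.

```python
import math
from collections import defaultdict


def reap_style_scores(records):
    """Average of gate_value * ||expert_output||_2 per expert.

    `records` is an iterable of (expert_id, gate_value, output_vector),
    one entry per token routed to that expert.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for expert_id, gate, output in records:
        norm = math.sqrt(sum(v * v for v in output))
        totals[expert_id] += gate * norm
        counts[expert_id] += 1
    return {e: totals[e] / counts[e] for e in totals}


def frequency_scores(records):
    """Naive baseline: how many tokens were routed to each expert."""
    counts = defaultdict(int)
    for expert_id, _gate, _output in records:
        counts[expert_id] += 1
    return dict(counts)


# Toy data: expert 0 is routed often but contributes little;
# expert 1 is routed once but with a large gate and a large output.
records = [(0, 0.1, [0.3, 0.4])] * 3 + [(1, 0.8, [3.0, 4.0])]

print(frequency_scores(records))   # favors expert 0
print(reap_style_scores(records))  # favors expert 1
```

In this toy case a frequency-based ranking would keep expert 0, while the router-weighted score keeps expert 1, the one that actually moves the layer output.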

A Learnable Router, Not a Fixed Compression Recipe

A key difference from prior compression methods like Minitron is that Star Elastic uses an end-to-end trainable router to determine the nested submodel architectures. The router takes a target budget (e.g., “give me a 2.8B active parameter model”) as a one-hot input and outputs differentiable masks that select which components are active at that budget level. These masks are trained jointly with the model through Gumbel-Softmax, which allows gradient flow through discrete architectural decisions.
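For readers who have not met Gumbel-Softmax, the sketch below shows the forward sampling step in plain Python. The logits and candidate expert counts are hypothetical, and a real router would run this inside an autograd framework so gradients can flow through the soft sample; this version only illustrates the sampling itself.

```python
import math
import random


def gumbel_softmax(logits, temperature, rng):
    """Draw one relaxed (soft) one-hot sample over len(logits) choices."""
    noisy = []
    for logit in logits:
        # Clamp the uniform draw away from 0 and 1 so both logs are finite.
        u = min(max(rng.random(), 1e-12), 1.0 - 1e-12)
        gumbel = -math.log(-math.log(u))  # Gumbel(0, 1) noise
        noisy.append((logit + gumbel) / temperature)
    # Numerically stable softmax over the perturbed, temperature-scaled logits.
    peak = max(noisy)
    exps = [math.exp(v - peak) for v in noisy]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical router logits over three candidate expert counts for one budget.
candidate_expert_counts = [128, 96, 64]
router_logits = [2.0, 0.5, -1.0]

rng = random.Random(0)
soft_mask = gumbel_softmax(router_logits, temperature=0.5, rng=rng)
choice = candidate_expert_counts[soft_mask.index(max(soft_mask))]
print(soft_mask, choice)
```

Lower temperatures push the sample closer to a hard one-hot choice, while higher temperatures keep it smooth, which is the usual trade-off between faithful discrete decisions and well-behaved gradients.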

The loss function combines knowledge distillation (KD), where the non-elastified parent model acts as the teacher, with a router loss that penalizes deviation from the target resource budget (parameter count, memory, or latency). This means the router learns to make architecture choices that actually improve accuracy under KD, rather than just minimizing a proxy metric.
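One way to write such an objective is shown below. All symbols are assumptions introduced for illustration: θ are model weights, φ are router weights, b is the sampled budget, m_φ(b) is the mask the router emits, C(·) is the resource cost of a mask, and λ balances the two terms. The absolute-value penalty is an assumed form, not necessarily the one used in the paper.

```latex
\mathcal{L}(\theta, \phi)
  = \mathbb{E}_{b \sim p(b)} \Big[
      \mathcal{L}_{\mathrm{KD}}\big(f_{\mathrm{teacher}},\, f_{\theta,\, m_\phi(b)}\big)
      + \lambda \,\big|\, C\big(m_\phi(b)\big) - C_b^{\mathrm{target}} \,\big|
    \Big]
```

The first term rewards masks that keep the student close to the teacher, and the second keeps each mask near its target budget, so the router cannot improve accuracy simply by keeping every component active.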

Training uses a two-stage curriculum: a short-context phase (sequence length 8,192 tokens) with uniform budget sampling, followed by an extended-context phase (sequence length 49,152 tokens) with non-uniform sampling that prioritizes the full 30B model (p(30B)=0.5, p(23B)=0.3, p(12B)=0.2). The extended-context phase is critical for reasoning performance. The research team’s ablations on Nano v2, explicitly reproduced as the empirical basis for the same curriculum choice on Nano v3, show gains of up to 19.8% on AIME-2025 for the 6B variant and 4.0 percentage points for the 12B variant from Stage 2 alone, motivating its use here.
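The budget sampling schedule is simple enough to sketch directly. The probabilities and sequence lengths come from the description above; the names `STAGE_WEIGHTS`, `STAGE_SEQ_LEN`, and `sample_budget` are hypothetical.

```python
import random

BUDGETS = ["30B", "23B", "12B"]

# Stage 1 samples budgets uniformly; Stage 2 favors the full 30B model.
STAGE_WEIGHTS = {
    "stage1": [1 / 3, 1 / 3, 1 / 3],
    "stage2": [0.5, 0.3, 0.2],
}
STAGE_SEQ_LEN = {"stage1": 8192, "stage2": 49152}


def sample_budget(stage, rng):
    """Pick which nested submodel a training step should optimize."""
    return rng.choices(BUDGETS, weights=STAGE_WEIGHTS[stage], k=1)[0]


rng = random.Random(0)
draws = [sample_budget("stage2", rng) for _ in range(10_000)]
for budget in BUDGETS:
    print(budget, draws.count(budget) / len(draws))
```

Over many steps the empirical frequencies settle near 0.5, 0.3, and 0.2, so the parent model sees the most long-context updates while the smaller variants are still trained regularly.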

Elastic Budget Control: Different Models for Different Reasoning Phases

Existing budget control in reasoning models, including Nemotron Nano v3’s own default behavior, works by capping the number of tokens generated during the thinking phase before forcing a final answer. This approach uses the same model throughout. Star Elastic unlocks a different strategy: using different nested submodels for the thinking phase versus the answering phase.

The researchers evaluated four configurations. The optimal one, called ℳS → ℳL (small model for thinking, large model for answering), allocates a cheaper model to generate extended reasoning traces and reserves the full-capacity model for synthesizing the final answer. The 23B → 30B configuration specifically advances the accuracy–latency Pareto frontier, achieving up to 16% higher accuracy and 1.9× lower latency compared to default Nemotron Nano v3 budget control. The intuition: reasoning tokens are high-volume but tolerant of some capacity reduction; the final answer requires higher precision.
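The control flow of the small-for-thinking, large-for-answering setup can be sketched as follows. Everything here is hypothetical: `elastic_generate`, the stub models, and the `</think>` delimiter stand in for whatever serving stack and chat template are actually used, and cache handling between the two submodels is not covered.

```python
from typing import Callable, List, Tuple

# A "model" here is any callable: (context_tokens, max_new_tokens) -> new tokens.
Generator = Callable[[List[str], int], List[str]]


def elastic_generate(
    prompt: List[str],
    think_model: Generator,
    answer_model: Generator,
    think_budget: int,
    answer_budget: int,
) -> Tuple[List[str], List[str]]:
    """Generate the reasoning trace with one submodel and the answer with another."""
    thinking = think_model(prompt, think_budget)
    # Close the thinking phase, then hand the full context to the larger model.
    context = prompt + thinking + ["</think>"]
    answer = answer_model(context, answer_budget)
    return thinking, answer


# Stub generators standing in for the 23B and 30B nested variants.
def small_stub(tokens: List[str], max_new: int) -> List[str]:
    return [f"s{i}" for i in range(max_new)]


def large_stub(tokens: List[str], max_new: int) -> List[str]:
    return [f"L{i}" for i in range(max_new)]


thinking, answer = elastic_generate(["Q"], small_stub, large_stub, 4, 2)
print(thinking, answer)
```

Most of the generated tokens come from the cheaper callable, which is where the latency savings would come from, while the final tokens the user sees are produced by the full-capacity model.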

Quantization Without Breaking the Nested Structure

A naive approach to deploying a quantized elastic model would be to quantize each variant separately after slicing. That breaks the nested weight-sharing property and requires a separate quantization pass per size. Instead, Star Elastic applies Quantization-Aware Distillation (QAD) directly on the elastic checkpoint, preserving the nested mask hierarchy throughout.

For FP8 (E4M3 format), post-training quantization (PTQ) is sufficient, recovering 98.69% of BF16 accuracy on the 30B variant. For NVFP4 (NVIDIA’s 4-bit floating-point format), PTQ alone causes a 4.12% average accuracy drop, so a short nested QAD phase (~5B tokens at 48K context) is used to bring recovery back to 97.79% for the 30B variant. In both cases, zero-shot slicing of the 23B and 12B variants from the single quantized checkpoint is preserved.

The memory implications are significant. Storing separate 12B, 23B, and 30B BF16 checkpoints requires 126.1 GB; the single elastic checkpoint requires 58.9 GB. The 30B NVFP4 elastic checkpoint fits in 18.7 GB, enabling the 12B NVFP4 variant to run on an RTX 5080 where every BF16 configuration runs out of memory. On an RTX Pro 6000, the 12B NVFP4 variant reaches 7,426 tokens/s, a 3.4× throughput improvement over the 30B BF16 baseline.
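A quick back-of-the-envelope check puts these figures in relative terms. The inputs are the numbers quoted above; the derived values are simple arithmetic rather than reported results, and the implied baseline throughput assumes the 3.4× comparison was measured on the same RTX Pro 6000.

```python
# Figures quoted in the article.
separate_bf16_gb = 126.1   # three standalone BF16 checkpoints
elastic_bf16_gb = 58.9     # one elastic BF16 checkpoint
elastic_nvfp4_gb = 18.7    # one elastic NVFP4 checkpoint
nvfp4_12b_tps = 7426       # tokens/s, 12B NVFP4 variant
speedup_vs_30b_bf16 = 3.4

# Derived values (arithmetic only, not reported in the source).
storage_saving = 1 - elastic_bf16_gb / separate_bf16_gb
nvfp4_vs_bf16 = elastic_bf16_gb / elastic_nvfp4_gb
implied_baseline_tps = nvfp4_12b_tps / speedup_vs_30b_bf16

print(f"Elastic BF16 storage saving: {storage_saving:.1%}")
print(f"BF16 to NVFP4 size ratio: {nvfp4_vs_bf16:.2f}x")
print(f"Implied 30B BF16 throughput: {implied_baseline_tps:.0f} tokens/s")
```

In other words, the elastic checkpoint needs a little under half the storage of three separate BF16 checkpoints, and NVFP4 shrinks it by roughly another factor of three.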

Depth vs. Width: Why Star Elastic Compresses Width

One design choice worth calling out explicitly: the research team compared two compression strategies, removing layers entirely (depth compression) versus reducing internal dimensions like hidden size, expert count, and head count (width compression). With a 15% parameter reduction and 25B tokens of knowledge distillation, width compression recovered 98.1% of baseline performance while depth compression recovered only 95.2%, with noticeable degradation on HumanEval and MMLU-Pro. As a result, Star Elastic prioritizes width-based elasticity for its main results, though depth compression (layer skipping) remains available as a mechanism for extreme latency-constrained scenarios.

On the evaluation suite (AIME-2025, GPQA, LiveCodeBench v5, MMLU-Pro, IFBench, and Tau Bench), the Elastic-30B variant matches its parent Nemotron Nano v3 30B on most benchmarks, while the Elastic-23B and Elastic-12B variants remain competitive against independently trained models of similar sizes. The Elastic-23B notably scores 85.63 on AIME-2025 versus Qwen3-30B-A3B’s 80.00, despite having fewer active parameters.

On training cost, the research team reports a 360× token reduction compared to pretraining each variant from scratch, and a 7× reduction over prior state-of-the-art compression methods that require sequential distillation runs per model size. The 12B variant runs at 2.4× the throughput of the 30B parent on an H100 GPU at bfloat16 with the same input/output sequence lengths.

Key Takeaways

- Star Elastic trains 30B, 23B, and 12B nested reasoning models from a single 160B-token post-training run, achieving a 360× token reduction over pretraining from scratch.

- Elastic budget control (23B for thinking, 30B for answering) advances the accuracy–latency Pareto frontier, with up to 16% higher accuracy and 1.9× lower latency.

- A learnable router with Gumbel-Softmax enables end-to-end trainable architecture selection, eliminating the need for separate compression runs per model size.

- Quantizing the elastic checkpoint directly (PTQ for FP8, nested QAD for NVFP4) preserves zero-shot slicing and reduces the 30B elastic checkpoint to 18.7 GB in NVFP4.

- All three precision variants (BF16, FP8, NVFP4) are publicly available on Hugging Face under nvidia/NVIDIA-Nemotron-Labs-3-Elastic-30B-A3B.

Check out the Paper and the Elastic Models on Hugging Face (BF16, FP8, and NVFP4).