Tabular data (structured information stored in rows and columns) is at the heart of most real-world machine learning problems, from healthcare records to financial transactions. Over the years, models based on decision trees, such as Random Forest, XGBoost, and CatBoost, have become the default choice for these tasks. Their strength lies in handling mixed data types, capturing complex feature interactions, and delivering strong performance without heavy preprocessing. While deep learning has transformed areas like computer vision and natural language processing, it has historically struggled to consistently outperform these tree-based approaches on tabular datasets.

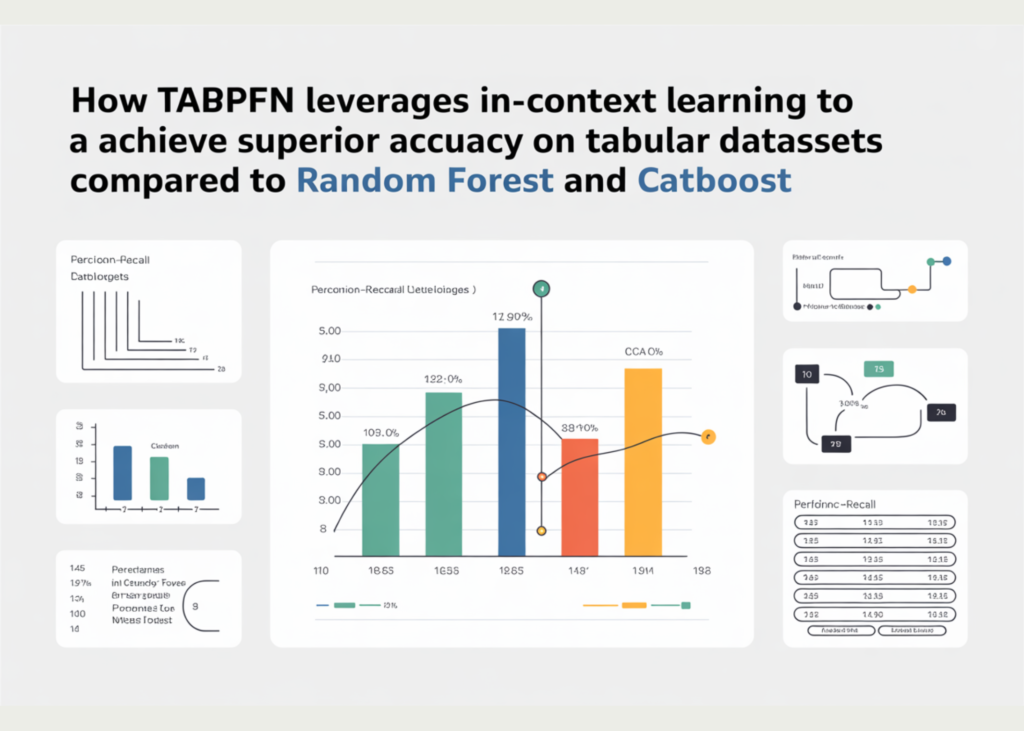

That long-standing trend is now being questioned. A newer approach, TabPFN, introduces a different way of tackling tabular problems, one that avoids traditional dataset-specific training altogether. Instead of learning from scratch each time, it relies on a pretrained model to make predictions directly, effectively shifting much of the learning process to inference time. In this article, we take a closer look at this idea and put it to the test by comparing TabPFN with established tree-based models like Random Forest and CatBoost on a sample dataset, evaluating their performance in terms of accuracy, training time, and inference speed.

TabPFN is a tabular foundation model designed to handle structured data in a completely different way from traditional machine learning. Instead of training a new model for every dataset, TabPFN is pretrained on millions of synthetic tabular tasks generated from causal processes. This allows it to learn a general strategy for solving supervised learning problems. When you give it your dataset, it doesn't go through iterative training like tree-based models; instead, it makes predictions directly by leveraging what it has already learned. In essence, it applies a form of in-context learning to tabular data, similar to how large language models work for text.
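To make the "no training, just condition on the data" idea concrete, here is a toy analogy in plain NumPy. It is not TabPFN's architecture: `ToyInContextClassifier` is a hypothetical class written for this illustration, and it replaces the pretrained transformer with a fixed softmax-attention rule over the training rows. What it does share with the description above is the division of labour: `fit()` only stores the context, and all computation happens in `predict()`.

```python
import numpy as np


class ToyInContextClassifier:
    """Toy analogy only: fit() stores the context, predict() does all the work."""

    def __init__(self, temperature=1.0):
        self.temperature = temperature

    def fit(self, X, y):
        # No parameters are updated: the training set is simply kept as context.
        self.X_ctx = np.asarray(X, dtype=float)
        self.y_ctx = np.asarray(y)
        self.classes_ = np.unique(self.y_ctx)
        return self

    def predict_proba(self, X):
        X = np.asarray(X, dtype=float)
        # Squared distance between every query row and every context row.
        d2 = ((X[:, None, :] - self.X_ctx[None, :, :]) ** 2).sum(axis=2)
        # Softmax over context rows turns distances into attention weights.
        logits = -d2 / self.temperature
        logits -= logits.max(axis=1, keepdims=True)
        weights = np.exp(logits)
        weights /= weights.sum(axis=1, keepdims=True)
        # Class probability = total attention placed on context rows of that class.
        onehot = (self.y_ctx[:, None] == self.classes_[None, :]).astype(float)
        return weights @ onehot

    def predict(self, X):
        return self.classes_[self.predict_proba(X).argmax(axis=1)]


X_ctx = np.array([[0.0, 0.0], [0.1, 0.2], [3.0, 3.0], [3.2, 2.9]])
y_ctx = np.array([0, 0, 1, 1])
clf = ToyInContextClassifier().fit(X_ctx, y_ctx)
toy_preds = clf.predict(np.array([[0.05, 0.1], [3.1, 3.0]]))
print(toy_preds)
```

Because every prediction attends over all stored rows, the cost of `predict()` grows with the size of the training set, which is the same kind of trade-off the timing results later in the article point to.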

The latest version, TabPFN-2.5, significantly expands this idea by supporting larger and more complex datasets while also improving performance. It is reported to outperform tuned tree-based models like XGBoost and CatBoost on standard benchmarks and even to match strong ensemble systems like AutoGluon. At the same time, it reduces the need for hyperparameter tuning and manual effort. To make it practical for real-world deployment, TabPFN also introduces a distillation approach, where its predictions can be converted into smaller models like neural networks or tree ensembles, retaining most of the accuracy while enabling much faster inference.

Setting up the dependencies

pip install tabpfn-client scikit-learn catboost

import time
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.metrics import accuracy_score

# Models
from sklearn.ensemble import RandomForestClassifier
from catboost import CatBoostClassifier
from tabpfn_client import TabPFNClassifier

To run the model, you need a TabPFN API key, which you can get from https://ux.priorlabs.ai/home

import os
from getpass import getpass

os.environ['TABPFN_TOKEN'] = getpass('Enter TABPFN Token: ')

Creating the dataset

For our experiment, we generate a synthetic binary classification dataset using make_classification from scikit-learn. The dataset contains 5,000 samples and 20 features, of which 10 are informative (they actually contribute to predicting the target), 5 are redundant (derived from the informative ones), and the remaining 5 are pure noise. This setup helps simulate a realistic tabular scenario where not all features are equally useful, and some introduce noise or correlation.
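The difference between informative, redundant, and noise features can be checked directly. The sketch below generates a variant of the dataset with `shuffle=False`, which (per the scikit-learn documentation) keeps the columns in generation order: informative first, then redundant, then noise. The helper `relative_residual` and the `*_demo` names are introduced here for illustration only.

```python
import numpy as np
from sklearn.datasets import make_classification

# shuffle=False keeps columns in generation order:
# 10 informative, then 5 redundant, then 5 noise features.
X_demo, y_demo = make_classification(
    n_samples=5000, n_features=20, n_informative=10, n_redundant=5,
    shuffle=False, random_state=42,
)
informative = X_demo[:, :10]
redundant = X_demo[:, 10:15]
noise = X_demo[:, 15:]


def relative_residual(A, B):
    """Fraction of B's norm left unexplained by a least-squares fit on A."""
    coef, *_ = np.linalg.lstsq(A, B, rcond=None)
    return np.linalg.norm(B - A @ coef) / np.linalg.norm(B)


redundant_residual = relative_residual(informative, redundant)
noise_residual = relative_residual(informative, noise)
print(f"redundant: {redundant_residual:.2e}, noise: {noise_residual:.2f}")
```

The redundant columns are reproduced almost exactly from the informative ones because they are linear combinations of them, while the noise columns are essentially unexplained.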

We then split the data into training (80%) and testing (20%) sets to evaluate model performance on unseen data. Using a synthetic dataset gives us full control over the data characteristics while ensuring a fair and reproducible comparison between TabPFN and traditional tree-based models.

X, y = make_classification(
    n_samples=5000,
    n_features=20,
    n_informative=10,
    n_redundant=5,
    random_state=42
)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

Testing Random Forest

We start with a Random Forest classifier as a baseline, using 200 trees. Random Forest is a robust ensemble method that builds multiple decision trees and aggregates their predictions, making it a strong and reliable choice for tabular data without requiring heavy tuning.
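The aggregation step can be made visible with a small sketch. It uses a smaller, separate dataset and forest (the `X_small`, `forest`, and `manual_proba` names are illustrative, not part of the experiment) and checks that averaging the class probabilities of the individual trees in `estimators_` reproduces what scikit-learn's `predict_proba` returns for the whole forest.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

X_small, y_small = make_classification(n_samples=500, n_features=20, random_state=0)
forest = RandomForestClassifier(n_estimators=25, random_state=0).fit(X_small, y_small)

# Collect the class probabilities predicted by each individual tree.
per_tree = np.stack([tree.predict_proba(X_small) for tree in forest.estimators_])

# Averaging over trees should match the forest's own probability estimate.
manual_proba = per_tree.mean(axis=0)
forest_proba = forest.predict_proba(X_small)
max_gap = np.abs(manual_proba - forest_proba).max()
print(f"largest difference: {max_gap:.2e}")
```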

After training on the dataset, the model achieves an accuracy of 95.5%, which is a solid result given the synthetic nature of the data. However, this comes with a training time of 9.56 seconds, reflecting the cost of building hundreds of trees. On the positive side, inference is relatively fast at 0.0627 seconds, since predictions only involve passing data through the already built trees. This result serves as a strong baseline to compare against more advanced methods like CatBoost and TabPFN.
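Since the same start/stop timing pattern is repeated for every model, it can be wrapped in a small helper. This is an optional sketch, not the code used to produce the numbers in this article: `timed` is a hypothetical name, and it uses `time.perf_counter()`, which is generally better suited to measuring short intervals than `time.time()`.

```python
import time


def timed(fn, *args, **kwargs):
    """Run fn(*args, **kwargs) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


# Stand-in workload so the snippet runs on its own.
total, elapsed = timed(sum, range(1_000_000))
print(f"sum took {elapsed:.4f}s")
```

With the models in this article, a call would look like `_, rf_train_time = timed(rf.fit, X_train, y_train)`.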

rf = RandomForestClassifier(n_estimators=200)

# Time the training step
start = time.time()
rf.fit(X_train, y_train)
rf_train_time = time.time() - start

# Time prediction on the held-out test set
start = time.time()
rf_preds = rf.predict(X_test)
rf_infer_time = time.time() - start

rf_acc = accuracy_score(y_test, rf_preds)
print(f"RandomForest → Acc: {rf_acc:.4f}, Train: {rf_train_time:.2f}s, Infer: {rf_infer_time:.4f}s")

Testing CatBoost

Next, we train a CatBoost classifier, a gradient boosting model specifically designed for tabular data. It builds trees sequentially, where each new tree corrects the errors of the previous ones. Compared to Random Forest, CatBoost is typically more accurate because of this boosting approach and its ability to model complex patterns more effectively.
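The "each new tree corrects the errors of the previous ones" idea can be sketched in a few lines. This is plain squared-loss gradient boosting on a toy regression problem using scikit-learn's `DecisionTreeRegressor`, not CatBoost's actual algorithm; the data, `learning_rate`, and number of rounds are arbitrary choices made for illustration.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)
X_toy = rng.uniform(-3, 3, size=(300, 1))
y_toy = np.sin(X_toy[:, 0]) + rng.normal(scale=0.1, size=300)

learning_rate = 0.1
prediction = np.full_like(y_toy, y_toy.mean())  # start from a constant model
mse_history = [np.mean((y_toy - prediction) ** 2)]

for _ in range(50):
    residuals = y_toy - prediction  # errors of the current ensemble
    tree = DecisionTreeRegressor(max_depth=2, random_state=0).fit(X_toy, residuals)
    prediction += learning_rate * tree.predict(X_toy)  # small corrective step
    mse_history.append(np.mean((y_toy - prediction) ** 2))

print(f"training MSE: {mse_history[0]:.4f} -> {mse_history[-1]:.4f}")
```

Each round fits a shallow tree to what the ensemble still gets wrong, so the training error shrinks step by step. Random Forest, by contrast, trains its trees independently and averages them.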

On our dataset, CatBoost achieves an accuracy of 96.7%, outperforming Random Forest and demonstrating its strength as a state-of-the-art tree-based method. It also trains slightly faster, taking 8.15 seconds, despite using 500 boosting iterations. One of its biggest advantages is inference speed: predictions are extremely fast at just 0.0119 seconds, making it well suited to production scenarios where low latency is critical. This makes CatBoost a strong benchmark before comparing against newer approaches like TabPFN.

cat = CatBoostClassifier(
    iterations=500,
    depth=6,
    learning_rate=0.1,
    verbose=0
)

# Time the training step
start = time.time()
cat.fit(X_train, y_train)
cat_train_time = time.time() - start

# Time prediction on the held-out test set
start = time.time()
cat_preds = cat.predict(X_test)
cat_infer_time = time.time() - start

cat_acc = accuracy_score(y_test, cat_preds)
print(f"CatBoost → Acc: {cat_acc:.4f}, Train: {cat_train_time:.2f}s, Infer: {cat_infer_time:.4f}s")

Testing TabPFN

Finally, we evaluate TabPFN, which takes a fundamentally different approach compared to traditional models. Instead of learning from scratch on the dataset, it leverages a pretrained model and simply conditions on the training data during inference. The .fit() step primarily involves loading the pretrained weights, which is why it is extremely fast.

On our dataset, TabPFN achieves the highest accuracy of 98.8%, outperforming both Random Forest and CatBoost. The fit time is just 0.47 seconds, significantly faster than the tree-based models since no actual training is performed. However, this shift comes with a trade-off: inference takes 2.21 seconds, which is much slower than CatBoost and Random Forest. This is because TabPFN processes both the training and test data together during prediction, effectively performing the "learning" step at inference time.

Overall, TabPFN demonstrates a strong advantage in accuracy and setup speed, while highlighting a different computational trade-off compared to traditional tabular models.

tabpfn = TabPFNClassifier()

# Time the fit step
start = time.time()
tabpfn.fit(X_train, y_train)  # loads pretrained model
tabpfn_train_time = time.time() - start

# Time prediction on the held-out test set
start = time.time()
tabpfn_preds = tabpfn.predict(X_test)
tabpfn_infer_time = time.time() - start

tabpfn_acc = accuracy_score(y_test, tabpfn_preds)
print(f"TabPFN → Acc: {tabpfn_acc:.4f}, Fit: {tabpfn_train_time:.2f}s, Infer: {tabpfn_infer_time:.4f}s")

Across our experiments, TabPFN delivers the strongest overall performance, achieving the highest accuracy (98.8%) while requiring virtually no training time (0.47s) compared to Random Forest (9.56s) and CatBoost (8.15s). This highlights its key advantage: eliminating dataset-specific training and hyperparameter tuning while still outperforming well-established tree-based methods. However, this benefit comes with a trade-off: inference latency is significantly higher (2.21s), as the model processes both training and test data together during prediction. In contrast, CatBoost and Random Forest offer much faster inference, making them more suitable for real-time applications.
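For a side-by-side view, the snippet below tabulates the figures reported in this article and adds a combined fit-plus-inference column. The numbers are hard-coded from the text rather than re-measured, and the `results` dictionary is introduced here for illustration only.

```python
# Figures as reported in this article: accuracy, fit/train seconds, inference seconds.
results = {
    "Random Forest": (0.955, 9.56, 0.0627),
    "CatBoost": (0.967, 8.15, 0.0119),
    "TabPFN": (0.988, 0.47, 2.21),
}

print(f"{'Model':<14}{'Acc':>7}{'Fit (s)':>10}{'Infer (s)':>11}{'Total (s)':>11}")
for name, (acc, fit_s, infer_s) in results.items():
    print(f"{name:<14}{acc:>7.3f}{fit_s:>10.2f}{infer_s:>11.4f}{fit_s + infer_s:>11.2f}")

best_accuracy = max(results, key=lambda m: results[m][0])
fastest_inference = min(results, key=lambda m: results[m][2])
fastest_total = min(results, key=lambda m: results[m][1] + results[m][2])
```

For a single fit followed by one pass over the test set, TabPFN's combined time (about 2.68s) is still the lowest of the three, against roughly 8.16s for CatBoost and 9.62s for Random Forest. The picture changes when a fitted model serves many prediction requests, because the tree-based models pay their training cost once while TabPFN pays its inference cost on every call.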

From a practical standpoint, TabPFN is highly effective for small-to-medium tabular tasks, rapid experimentation, and scenarios where minimizing development time is critical. For production environments, especially those requiring low-latency predictions or handling very large datasets, newer developments such as TabPFN's distillation engine help bridge this gap by converting the model into compact neural networks or tree ensembles, retaining most of its accuracy while drastically improving inference speed. Additionally, support for scaling to millions of rows makes it increasingly viable for enterprise use cases. Overall, TabPFN represents a shift in tabular machine learning, trading traditional training effort for a more flexible, inference-driven approach.
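The general idea behind distillation can be sketched with scikit-learn alone. This is not TabPFN's distillation engine, whose interface is not shown in this article. A Random Forest stands in as the slow "teacher" so the example runs without an API token, and a single shallow decision tree plays the compact "student". The student never sees true labels; it is trained only on the teacher's predictions for an unlabeled transfer set. All names and sizes here are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X_d, y_d = make_classification(n_samples=3000, n_features=20, n_informative=10,
                               n_redundant=5, random_state=0)
# Labeled data for the teacher, an unlabeled transfer pool, and a test set.
X_rest, X_te, y_rest, y_te = train_test_split(X_d, y_d, test_size=500, random_state=0)
X_tr, X_transfer, y_tr, _ = train_test_split(X_rest, y_rest, test_size=1500, random_state=0)

# Teacher: stand-in for the large model that is slow at inference time.
teacher = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_tr, y_tr)

# Student: a small tree fitted to the teacher's predictions on the transfer pool.
student = DecisionTreeClassifier(max_depth=8, random_state=0)
student.fit(X_transfer, teacher.predict(X_transfer))

teacher_acc = accuracy_score(y_te, teacher.predict(X_te))
student_acc = accuracy_score(y_te, student.predict(X_te))
fidelity = accuracy_score(teacher.predict(X_te), student.predict(X_te))
print(f"teacher={teacher_acc:.3f} student={student_acc:.3f} fidelity={fidelity:.3f}")
```

The `fidelity` score measures how often the student agrees with the teacher on unseen rows, which is the quantity a distillation step tries to keep high while shrinking the model.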


I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, with a keen interest in Data Science, especially Neural Networks and their application in various areas.