Physical Intelligence, the two-year-old, San Francisco-based robotics startup that has quietly become one of the most closely watched AI companies in the Bay Area, published new research Thursday showing that its latest model can direct robots to perform tasks they were never explicitly trained on, a capability the company’s own researchers say caught them off guard.

The new model, called π0.7, represents what the company describes as an early but meaningful step toward the long-sought goal of a general-purpose robot brain: one that can be pointed at an unfamiliar task, coached through it in plain language, and actually pull it off. If the findings hold up to scrutiny, they suggest that robotic AI may be approaching an inflection point similar to what the field saw with large language models, where capabilities begin compounding in ways that outpace what the underlying data would seem to predict.

But first: The core claim in the paper is compositional generalization, the ability to combine skills learned in different contexts to solve problems the model has never encountered. Until now, the standard approach to robot training has been essentially rote memorization: collect data on a specific task, train a specialist model on that data, then repeat for every new task. π0.7, Physical Intelligence says, breaks that pattern.

“Once it crosses that threshold where it goes from only doing exactly the stuff that you collect the data for to actually remixing things in new ways,” says Sergey Levine, a co-founder of Physical Intelligence and a UC Berkeley professor focused on AI for robotics, “the capabilities are going up more than linearly with the amount of data. That much more favorable scaling property is something we’ve seen in other domains, like language and vision.”

The paper’s most striking demonstration involves an air fryer the model had essentially never seen in training. When the research team investigated, they found only two relevant episodes in the entire training dataset: one where a different robot merely pushed the air fryer closed, and one from an open source dataset where yet another robot placed a plastic bottle inside one on someone’s instructions. The model had somehow synthesized those fragments, plus broader web-based pretraining data, into a functional understanding of how the appliance works.

“It’s very hard to track down where the knowledge is coming from, or where it will succeed or fail,” says Lucy Shi, a Physical Intelligence researcher and Stanford computer science Ph.D. student. Still, with zero coaching, the model made a passable attempt at using the appliance to cook a sweet potato. With step-by-step verbal instructions (essentially, a human walking the robot through the task the way you might explain something to a new employee), it performed successfully.

That coaching capability matters because it suggests robots could be deployed in new environments and improved in real time without additional data collection or model retraining.

So what does it all mean? The researchers aren’t shy about the model’s limitations and are careful not to get ahead of themselves. In at least one case, they point the finger squarely at their own team.

“Sometimes the failure mode is not on the robot or on the model,” says Shi. “It’s on us. Not being good at prompt engineering.” She describes an early air fryer experiment that produced a 5% success rate. After the team spent about half an hour refining how the task was explained to the model, the success rate jumped to 95%, she says.

The model also isn’t yet capable of executing complex multi-step tasks autonomously from a single high-level command. “You can’t tell it, ‘Hey, go make me some toast’,” Levine says. “But if you walk it through, ‘for the toaster, open this part, push that button, do this,’ then it actually tends to work pretty well.”

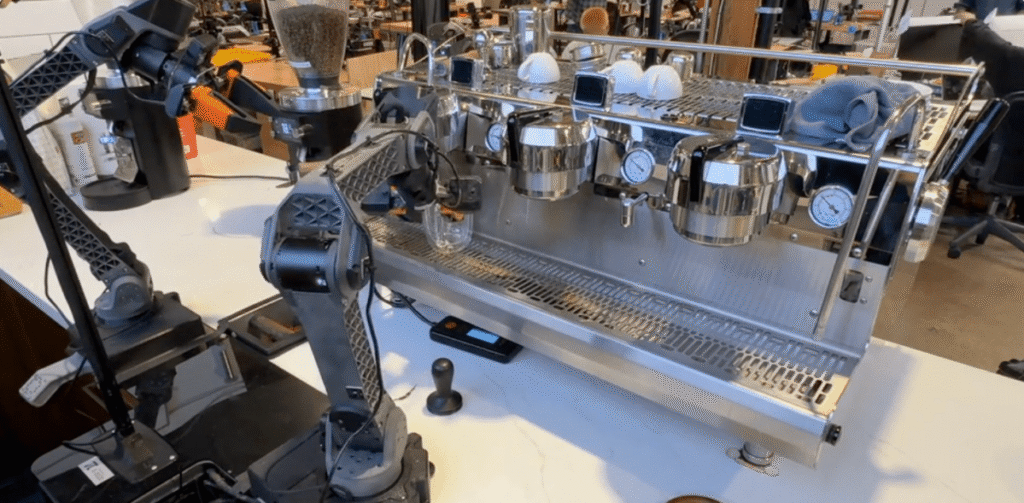

The team also acknowledged that standardized benchmarks for robotics don’t really exist, which makes external validation of their claims difficult. Instead, the company measured π0.7 against its own previous specialist models (purpose-built systems trained on individual tasks) and found that the generalist model matched their performance across a range of complex work, including making coffee, folding laundry, and assembling boxes.

What may be most notable about the research, if you take the researchers at their word, isn’t any single demo but the degree to which the results surprised them. These are people whose job it is to know exactly what’s in the training data and therefore what the model should and shouldn’t be able to do.

“My experience has always been that when I deeply know what’s in the data, I can kind of just guess what the model will be able to do,” says Ashwin Balakrishna, a research scientist at Physical Intelligence. “I’m rarely surprised. But the last few months have been the first time where I’m genuinely surprised. I just bought a gear set randomly and asked the robot, ‘Hey, can you rotate this gear?’ And it just worked.”

Levine recalled the moment researchers first encountered GPT-2 generating a story about unicorns in the Andes. “Where the heck did it learn about unicorns in Peru?” he says. “That’s such a weird combination. And I think that seeing that in robotics is really special.”

Naturally, critics will point to an uncomfortable asymmetry here: Language models had the entire internet to learn from. Robots don’t, and no amount of clever prompting fully closes that gap. But when asked where he expects the skepticism, Levine points elsewhere entirely.

“The criticism that can always be leveled at any robotic generalization demo is that the tasks are kind of boring,” he says. “The robot is not doing a backflip.” He pushes back on that framing, arguing that the distinction between an impressive robot demo and a robot system that actually generalizes is precisely the point. Generalization, he suggests, will always look less dramatic than a carefully choreographed stunt, but it’s considerably more useful.

The paper itself uses careful hedging language throughout, describing π0.7 as showing “early signs” of generalization and “initial demonstrations” of new capabilities. These are research results, not a deployed product.

When asked directly when a system based on these findings might be ready for real-world deployment, Levine declines to speculate. “I think there’s good reason to be optimistic, and certainly it’s progressing faster than I expected a couple of years ago,” he says. “But it’s very hard for me to answer that question.”

Physical Intelligence has raised over $1 billion so far and was most recently valued at $5.6 billion. A significant part of the investor enthusiasm around the company traces to Lachy Groom, a co-founder who spent years as one of Silicon Valley’s most well-regarded angel investors (backing Figma, Notion, and Ramp, among others) before deciding that Physical Intelligence was the company he’d been looking for. That pedigree has helped the startup attract serious institutional money even as it has refused to offer investors a commercialization timeline.

The company is now said to be in discussions for a new round that would nearly double that valuation figure to $11 billion. The team declined to comment.