**The Softmax Trap: Why You Shouldn’t Implement Softmax Manually**

Classification models don’t just pick a class; they also need to express how confident they are in that choice. That’s where the Softmax activation function comes in. Softmax takes the raw, unbounded scores (logits) produced by a neural network and transforms them into a well-defined probability distribution, so each output can be interpreted as the probability of a specific class.

But have you ever wondered why implementing Softmax by hand can cause severe numerical stability problems? In this article, we’ll look at how the naive version fails, how to compute the loss in a stable way, and why these implementation details matter more than they initially seem.

**The Naive Implementation of Softmax**

The first step is to understand the naive implementation of Softmax. It’s straightforward: exponentiate each logit, then divide by the sum of all exponentiated values across classes, which yields a probability distribution for each input sample. However, this implementation is numerically unstable and should be avoided in real-world training pipelines.

Here’s an example of the naive Softmax implementation in Python:

```python
import torch

def softmax_naive(logits):
    exp_logits = torch.exp(logits)
    return exp_logits / exp_logits.sum(dim=1, keepdim=True)
```

This implementation is mathematically correct, but it’s prone to overflow and underflow, which can cause invalid operations during normalization and produce NaN values.
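To get a feel for how little headroom there is, here is a small sketch of where `torch.exp` stops being representable in float32. The specific probe values (88, 89, and -110) are chosen for illustration and are not from the original example.

```python
import torch

# float32 can represent values up to about 3.4e38, so exp(x) overflows
# once x is slightly above 88.7.
probe = torch.tensor([88.0, 89.0, -110.0], dtype=torch.float32)
exp_probe = torch.exp(probe)

print(torch.finfo(torch.float32).max)  # largest finite float32 value
print(exp_probe)  # finite, then inf (overflow), then 0 (underflow)
```

In other words, logits do not need to be anywhere near 1000 for the naive version to break; in float32, a value around 89 is already enough to overflow.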

**Why Naive Softmax Implementation Fails**

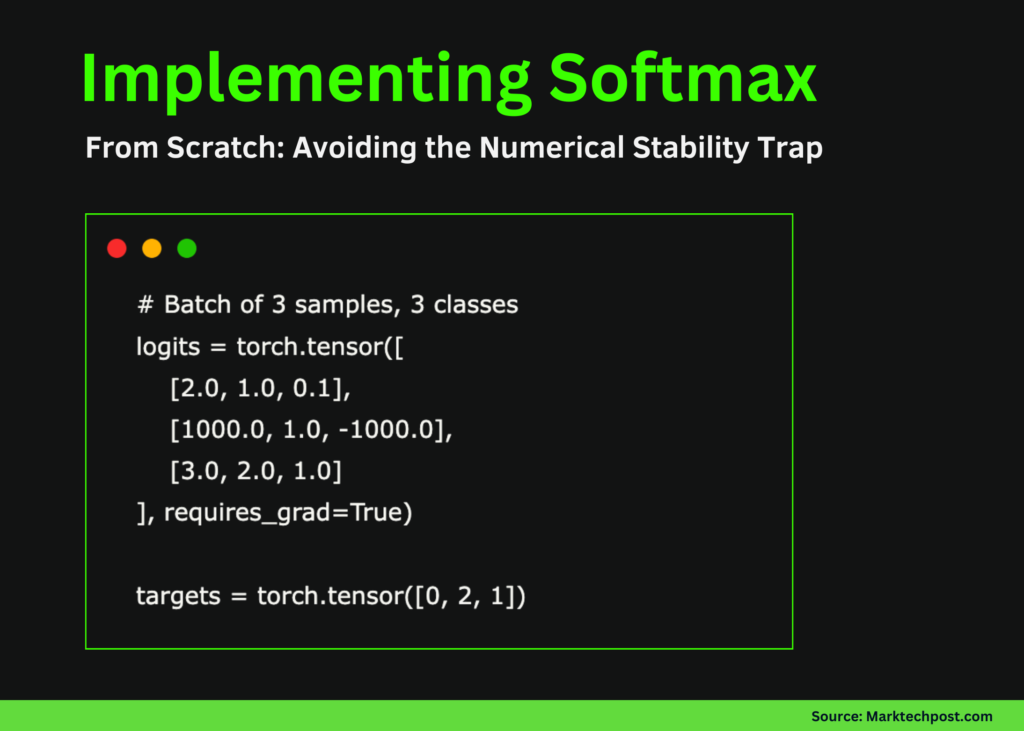

To demonstrate the numerical instability of naive Softmax, let’s consider an example with three samples and three classes. The first and third samples have reasonable logit values and behave as expected during Softmax computation. The second sample, however, deliberately contains extreme values (1000 and -1000) to trigger the failure.

The targets tensor specifies the correct class index for each sample and will be used to compute the classification loss and observe how instability propagates during backpropagation.
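A setup along these lines might look like the sketch below. Only the extreme values 1000 and -1000 come from the description above; the remaining logits and the target indices are assumed for illustration.

```python
import torch

# Three samples (rows) and three classes (columns).
# Only the 1000 / -1000 extremes in the second row come from the text;
# every other number here is an illustrative assumption.
logits = torch.tensor([
    [2.0, 1.0, 0.1],         # reasonable values
    [1000.0, 1.0, -1000.0],  # extreme values that break naive Softmax
    [3.0, 2.0, 1.0],         # reasonable values
])

# Correct class index for each sample (assumed values).
targets = torch.tensor([0, 2, 1])
```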

**The Consequences of Naive Softmax Implementation**

During the forward pass, the naive Softmax operation is applied to the logits to produce class probabilities. For regular logit values (first and third samples), the output is a valid probability distribution where values lie between 0 and 1 and sum to 1. However, the second sample clearly exposes the numerical problem: exponentiating 1000 overflows to infinity, while exponentiating -1000 underflows to zero. Normalization then divides infinity by infinity, which is an invalid operation and produces NaN values.
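The failure can be reproduced with a short sketch like the one below. The logits and targets are illustrative assumptions (only the 1000 and -1000 extremes come from the text), and the hand-written negative log-likelihood is one plausible way to compute the loss, not necessarily the original code.

```python
import torch

def softmax_naive(logits):
    exp_logits = torch.exp(logits)
    return exp_logits / exp_logits.sum(dim=1, keepdim=True)

# Assumed example data; only the 1000 / -1000 extremes come from the text.
logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],
    [3.0, 2.0, 1.0],
], requires_grad=True)
targets = torch.tensor([0, 2, 1])

# Forward pass: rows 0 and 2 are valid distributions, row 1 contains NaN.
probs = softmax_naive(logits)
print(probs)

# Negative log-likelihood of the correct class, averaged over samples.
picked = probs[torch.arange(probs.shape[0]), targets]
loss = -torch.log(picked).mean()
print(loss)  # not a finite number

# Backward pass: the bad values spread into the gradient of the second sample.
loss.backward()
print(logits.grad)
```

Note that the well-behaved samples still get finite gradients; the damage comes from the single extreme row, which is enough to make the averaged loss unusable.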

**The Importance of Implementing Softmax Correctly**

Implementing Softmax correctly is crucial for numerical stability. In the next section, we’ll see how the LogSumExp trick lets us compute the loss directly from logits and avoid the trap that a hand-written Softmax falls into.

**Implementing Stable Cross-Entropy Loss Using LogSumExp**

A stable implementation computes cross-entropy loss directly from raw logits without explicitly calculating Softmax probabilities. To maintain numerical stability, the logits are first shifted by subtracting the maximum value per sample, so the largest exponent is always zero and the exponentials remain within a safe range.

The LogSumExp trick is then used to compute the normalization term, and the original (unshifted) target logit is subtracted to obtain the correct loss. This strategy avoids overflow, underflow, and NaN gradients, mirroring how cross-entropy is implemented in production-grade deep learning frameworks.
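The steps described above can be sketched as follows. The function name `stable_cross_entropy` and the example data are assumptions made for illustration; the comparison against `torch.nn.functional.cross_entropy` is just a sanity check, not a claim about how PyTorch implements it internally.

```python
import torch
import torch.nn.functional as F

def stable_cross_entropy(logits, targets):
    # Hypothetical helper name; a sketch of the approach described above.
    # Shift by the per-sample maximum so the largest exponent is exp(0) = 1.
    max_logits = logits.max(dim=1, keepdim=True).values
    shifted = logits - max_logits

    # LogSumExp: log(sum(exp(z))) = max + log(sum(exp(z - max)))
    log_sum_exp = max_logits.squeeze(1) + torch.log(torch.exp(shifted).sum(dim=1))

    # Subtract the original (unshifted) logit of the correct class.
    target_logits = logits[torch.arange(logits.shape[0]), targets]
    return (log_sum_exp - target_logits).mean()

# Assumed example data; only the 1000 / -1000 extremes come from the text.
logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],
    [3.0, 2.0, 1.0],
], requires_grad=True)
targets = torch.tensor([0, 2, 1])

loss = stable_cross_entropy(logits, targets)
loss.backward()

reference = F.cross_entropy(logits.detach(), targets)
print(loss, reference)  # both finite and in close agreement
print(logits.grad)      # finite gradients for every sample
```

In practice, calling `F.cross_entropy` (or `torch.logsumexp` for the normalization term) on raw logits is usually preferable to maintaining a hand-written version.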

**Conclusion**

In this article, we’ve seen how the naive implementation of Softmax can overflow, underflow, and produce NaN values. We’ve also discussed how computing cross-entropy directly from logits with the LogSumExp trick avoids the problem. By sidestepping this numerical stability trap, you can keep losses and gradients finite throughout training.
