Building a Federated Learning-based Fraud Detection System with OpenAI and PyTorch

Hey there, fellow data enthusiasts! Today, we’re going to dive into the world of Federated Learning and build a privacy-preserving fraud detection system from scratch. Don’t worry if you’re new to the topic – we’ll take it one step at a time.

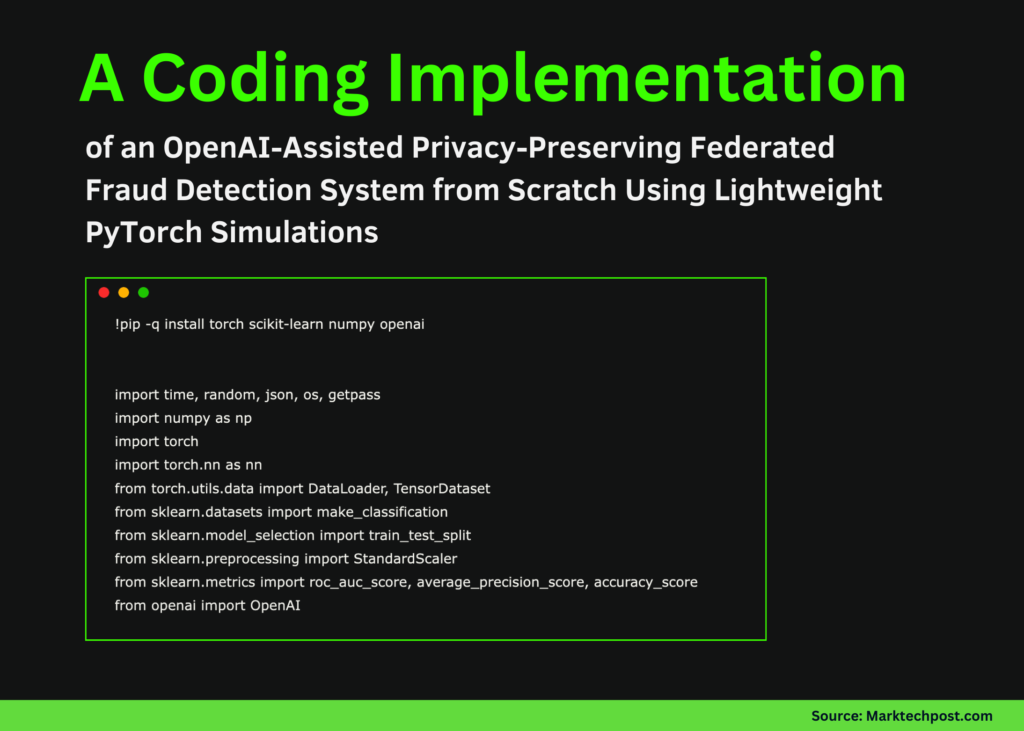

To get started, we’ll set up our execution environment and import all the required libraries for data generation, modeling, analysis, and reporting. We’ll also make sure to fix our random seeds and machine configuration so that our simulation is deterministic and reproducible on CPU.
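As a rough illustration of the seed-fixing step, here’s a minimal sketch. The helper name `set_seed` and the default seed value are our own assumptions, not taken from the original notebook, and PyTorch is seeded only if it happens to be installed.

```python
import random

import numpy as np


def set_seed(seed: int = 42) -> None:
    """Seed the random number generators a CPU-only simulation typically uses."""
    random.seed(seed)      # Python's built-in RNG
    np.random.seed(seed)   # NumPy's legacy global RNG
    try:
        import torch       # optional: only seeded when PyTorch is available
    except ImportError:
        return
    torch.manual_seed(seed)


set_seed(42)
```

Calling `set_seed` once at the top of the notebook, before any data is generated, is what makes repeated runs comparable.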

Next, we’ll generate a highly imbalanced, credit-card-like fraud dataset and split it into training and test sets. We’ll standardize our server-side data and prepare a global test loader that allows us to consistently evaluate our aggregated model after each Federated round.
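To make the data step concrete, here’s a small NumPy-only sketch of generating an imbalanced dataset, splitting it with stratification, and standardizing it. Everything specific here is an assumption for illustration: the function names, the 1% fraud rate, the feature count, and the simple mean shift that separates the two classes. The original notebook may well use a different generator.

```python
import numpy as np


def make_fraud_data(n_samples=20000, n_features=10, fraud_rate=0.01, seed=0):
    """Synthetic data where roughly `fraud_rate` of the rows are labeled fraud (1)."""
    rng = np.random.default_rng(seed)
    y = (rng.random(n_samples) < fraud_rate).astype(np.int64)
    X = rng.normal(size=(n_samples, n_features))
    X[y == 1] += 1.5  # shift fraud rows so the classes overlap but are learnable
    return X, y


def stratified_split(X, y, test_frac=0.2, seed=0):
    """Hold out `test_frac` of each class so the rare class appears in both splits."""
    rng = np.random.default_rng(seed)
    test_mask = np.zeros(len(y), dtype=bool)
    for cls in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == cls))
        n_test = int(round(test_frac * len(idx)))
        test_mask[idx[:n_test]] = True
    return X[~test_mask], y[~test_mask], X[test_mask], y[test_mask]


def standardize(train, test):
    """Scale both splits using statistics computed on the training split only."""
    mean = train.mean(axis=0)
    std = train.std(axis=0) + 1e-8  # avoid division by zero for constant columns
    return (train - mean) / std, (test - mean) / std


X, y = make_fraud_data()
X_train, y_train, X_test, y_test = stratified_split(X, y)
X_train_s, X_test_s = standardize(X_train, X_test)
```

Fitting the scaler on the training split alone keeps test-set statistics from leaking into the features the model sees.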

To make things interesting, we’ll partition our training data across ten clients using a Dirichlet distribution, which produces uneven, non-IID shards rather than identical ones. Each simulated bank then operates on its own locally scaled data, mimicking real-world scenarios.
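One common way to do a Dirichlet split is per label: for each class, draw client proportions from a Dirichlet distribution and hand out that class’s rows accordingly. The sketch below assumes that label-based variant. The function name `dirichlet_partition` and the concentration value `alpha=0.5` are our own choices, since the text doesn’t pin them down.

```python
import numpy as np


def dirichlet_partition(labels, n_clients=10, alpha=0.5, seed=0):
    """Split sample indices across clients with Dirichlet-distributed class shares.

    Smaller `alpha` gives more skewed (more heterogeneous) clients.
    """
    rng = np.random.default_rng(seed)
    client_indices = [[] for _ in range(n_clients)]
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        proportions = rng.dirichlet(alpha * np.ones(n_clients))
        # Turn cumulative proportions into cut points within this class's indices.
        cuts = (np.cumsum(proportions)[:-1] * len(idx)).astype(int)
        for client_id, chunk in enumerate(np.split(idx, cuts)):
            client_indices[client_id].extend(chunk.tolist())
    return [np.array(sorted(ix), dtype=np.int64) for ix in client_indices]


labels = np.array([0] * 950 + [1] * 50)  # toy labels with 5% positives
parts = dirichlet_partition(labels, n_clients=10, alpha=0.5, seed=0)
```

With a small `alpha`, some clients can end up with very few (or zero) fraud cases, which is exactly the kind of skew that makes federated training harder.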

Now, let’s take a look at the neural network we’ll use for fraud detection. We’ll outline the utility functions for training, analysis, and weight updates, and implement lightweight local optimization and metric computation to keep client-side updates efficient and easy to understand.
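The text doesn’t say which metrics get computed, so treat this as one plausible sketch of the metric side. On a highly imbalanced dataset plain accuracy is uninformative (predicting “not fraud” everywhere already scores very high), so we assume precision, recall, and F1 on the fraud class. The helper name `binary_metrics` and the 0.5 threshold are hypothetical.

```python
import numpy as np


def binary_metrics(y_true, y_prob, threshold=0.5):
    """Precision, recall, and F1 for the positive (fraud) class."""
    y_true = np.asarray(y_true).astype(int)
    y_pred = (np.asarray(y_prob) >= threshold).astype(int)
    tp = int(np.sum((y_pred == 1) & (y_true == 1)))  # fraud correctly flagged
    fp = int(np.sum((y_pred == 1) & (y_true == 0)))  # false alarms
    fn = int(np.sum((y_pred == 0) & (y_true == 1)))  # missed fraud
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


metrics = binary_metrics([0, 0, 1, 1], [0.1, 0.6, 0.7, 0.2])
```

In the toy call there is one true positive, one false alarm, and one missed fraud case, so all three metrics come out to 0.5.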

In the next section, we’ll orchestrate the Federated Learning process by iteratively training local client models and aggregating their parameters using FedAvg (Federated Averaging). We’ll evaluate our global model after each round to monitor convergence and understand how collective learning improves fraud detection performance.
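Here’s a minimal sketch of the aggregation step. It assumes each client’s parameters arrive as a dict of NumPy arrays (a stand-in for a PyTorch `state_dict`) and that clients are weighted by their local sample counts, as in the standard FedAvg formulation. Whether the original notebook weights by sample count or averages uniformly isn’t stated, and the names `fedavg`, `client_weights`, and `client_sizes` are ours.

```python
import numpy as np


def fedavg(client_weights, client_sizes):
    """Sample-count-weighted average of per-client parameter dicts."""
    total = float(sum(client_sizes))
    averaged = {}
    for name in client_weights[0]:
        # Each client's contribution is scaled by its share of the total data.
        averaged[name] = sum(
            weights[name] * (size / total)
            for weights, size in zip(client_weights, client_sizes)
        )
    return averaged


client_a = {"w": np.array([1.0, 3.0])}  # client with 1 local sample
client_b = {"w": np.array([3.0, 5.0])}  # client with 3 local samples
global_weights = fedavg([client_a, client_b], [1, 3])
```

In the toy example the second client holds three quarters of the data, so the result, `[2.5, 4.5]`, sits three quarters of the way toward its parameters.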

Finally, we’ll use an external language model to transform our technical results into a concise analytical report. We’ll securely accept our API key through keyboard input and generate decision-oriented insights that summarize performance, risks, and recommended next steps.
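The reporting step might look roughly like the sketch below. `getpass.getpass` reads the key from the keyboard without echoing it, and the OpenAI call uses the chat completions interface of the `openai` Python package (version 1.x). The helper names, the prompt wording, and the shape of `history` are our assumptions. The model name is left as a required argument because the text doesn’t name one.

```python
import getpass
import json


def read_api_key() -> str:
    """Prompt for the API key on the keyboard without echoing it to the screen."""
    return getpass.getpass("OpenAI API key: ")


def build_report_prompt(history) -> str:
    """Turn per-round metrics (a list of dicts) into a prompt for the language model."""
    return (
        "You are a fraud-risk analyst. Based on the federated learning results "
        "below, summarize performance, risks, and recommended next steps.\n\n"
        + json.dumps(history, indent=2)
    )


def generate_report(prompt: str, api_key: str, model: str) -> str:
    """Send the prompt to the chat completions endpoint and return the reply text."""
    from openai import OpenAI  # imported lazily so the helpers above work without it

    client = OpenAI(api_key=api_key)
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


prompt = build_report_prompt([{"round": 1, "f1": 0.5}])
```

In a notebook you would then call `generate_report(prompt, read_api_key(), model=...)` with whichever model you have access to, so the key never appears in the notebook’s source or output.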

**Conclusion**

In this tutorial, we’ve shown how to implement Federated Learning from first principles in a Colab notebook while prioritizing security, interpretability, and practicality. We’ve seen how high data heterogeneity across clients influences convergence and why careful aggregation and analysis are crucial in fraud detection settings. We’ve also extended our workflow by generating an automated risk report, illustrating how analytical results can be translated into decision-ready insights.
