In this tutorial, we explore how to build a compact but highly effective Enterprise AI assistant that runs smoothly on Colab. We begin by implementing retrieval-augmented generation (RAG), using FAISS for document retrieval and FLAN-T5 for text generation. Both are fully open-source and free. As we progress, we embed enterprise policies such as data redaction, access control, and PII protection directly into the workflow, so that our system is both intelligent and compliant. Check out the FULL CODES here.

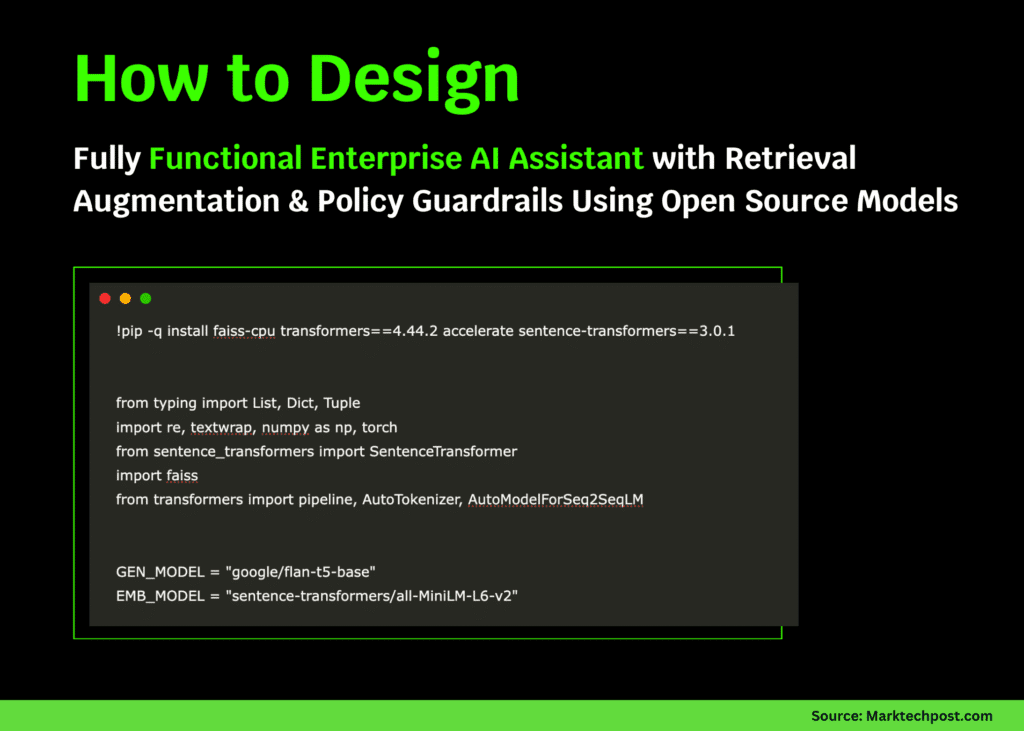

```python
!pip -q install faiss-cpu transformers==4.44.2 accelerate sentence-transformers==3.0.1

from typing import List, Dict, Tuple
import re, textwrap, numpy as np, torch
from sentence_transformers import SentenceTransformer
import faiss
from transformers import pipeline, AutoTokenizer, AutoModelForSeq2SeqLM

GEN_MODEL = "google/flan-t5-base"
EMB_MODEL = "sentence-transformers/all-MiniLM-L6-v2"

# FLAN-T5 generator; device_map="auto" (via accelerate) places it on the GPU when one is available
gen_tok = AutoTokenizer.from_pretrained(GEN_MODEL)
gen_model = AutoModelForSeq2SeqLM.from_pretrained(GEN_MODEL, device_map="auto")
generate = pipeline("text2text-generation", model=gen_model, tokenizer=gen_tok)

# MiniLM sentence embedder on the same device type
emb_device = "cuda" if torch.cuda.is_available() else "cpu"
emb_model = SentenceTransformer(EMB_MODEL, device=emb_device)
```

We start by setting up our environment and loading the required models. We initialize FLAN-T5 for text generation and MiniLM for embeddings. Both models are configured to use the GPU automatically when one is available, so the pipeline runs efficiently.

```python
DOCS = [
    {"id":"policy_sec_001","title":"Data Security Policy",
     "text":"All customer data must be encrypted at rest (AES-256) and in transit (TLS 1.2+). Access is role-based (RBAC). Secrets are stored in a managed vault. Backups run nightly with 35-day retention. PII includes name, email, phone, address, PAN/Aadhaar."},
    {"id":"policy_ai_002","title":"Responsible AI Guidelines",
     "text":"Use internal models for confidential data. Retrieval sources must be logged. No customer decisioning without human-in-the-loop. Redact PII in prompts and outputs. All model prompts and outputs are stored for audit for 180 days."},
    {"id":"runbook_inc_003","title":"Incident Response Runbook",
     "text":"If a suspected breach occurs, page on-call SecOps. Rotate keys, isolate affected services, perform forensic capture, notify DPO within regulatory SLA. Communicate via the incident room only."},
    {"id":"sop_sales_004","title":"Sales SOP - Enterprise Deals",
     "text":"For RFPs, use the approved security questionnaire responses. Claims must match policy_sec_001. Custom clauses need Legal sign-off. Keep records in CRM with deal room links."}
]

def chunk(text: str, chunk_size=600, overlap=80):
    # Split on whitespace and emit word windows that overlap by `overlap` words
    w = text.split()
    if len(w) <= chunk_size: return [text]
    out = []; i = 0
    while i < len(w):
        j = min(i + chunk_size, len(w)); out.append(" ".join(w[i:j]))
        if j == len(w): break
        i = j - overlap

    return out

CORPUS = []
for d in DOCS:
    for i, c in enumerate(chunk(d["text"])):
        CORPUS.append({"doc_id": d["id"], "title": d["title"], "chunk_id": i, "text": c})
```

We create a small, enterprise-style document set to simulate internal policies and procedures. We then split the texts into overlapping word-based chunks so they can be embedded and retrieved effectively. With the default window of 600 words, each of these short sample documents fits in a single chunk (chunk_id 0). On a real corpus, however, chunking lets the assistant retrieve context with greater precision.
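To see how the overlap works, here is a standalone copy of the same sliding-window logic. It runs with deliberately small, hypothetical settings (chunk_size=5, overlap=2) on a 12-word string. The sketch adds one assumption of its own: an explicit check that overlap is smaller than chunk_size. Without it, `i = j - overlap` would never move forward and the loop would not terminate.

```python
def chunk(text: str, chunk_size: int = 600, overlap: int = 80) -> list[str]:
    """Split text into windows of chunk_size words; consecutive windows share `overlap` words."""
    # Added guard (not in the tutorial): overlap >= chunk_size would stop i from advancing.
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    words = text.split()
    if len(words) <= chunk_size:
        return [text]
    out, i = [], 0
    while i < len(words):
        j = min(i + chunk_size, len(words))
        out.append(" ".join(words[i:j]))
        if j == len(words):
            break
        i = j - overlap  # step back so the next window repeats the last `overlap` words
    return out

sample = " ".join(f"w{n}" for n in range(12))  # "w0 w1 ... w11"
for c in chunk(sample, chunk_size=5, overlap=2):
    print(c)
# w0 w1 w2 w3 w4
# w3 w4 w5 w6 w7
# w6 w7 w8 w9 w10
# w9 w10 w11
```

Each window starts `chunk_size - overlap` = 3 words after the previous one. The final window is shorter because it stops at the end of the text.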

```python
def build_index(chunks: List[Dict]) -> Tuple[faiss.IndexFlatIP, np.ndarray]:
    # Normalized embeddings make the inner product equal to cosine similarity
    vecs = emb_model.encode([c["text"] for c in chunks], normalize_embeddings=True, convert_to_numpy=True)
    index = faiss.IndexFlatIP(vecs.shape[1]); index.add(vecs); return index, vecs

INDEX, VECS = build_index(CORPUS)

# PII patterns and their replacement tokens (token names are illustrative placeholders)
PII_PATTERNS = [
    (re.compile(r"\b\d{10}\b"), "[REDACTED_PHONE]"),                                      # 10-digit phone
    (re.compile(r"\b[A-Z0-9._%+-]+@[A-Z0-9.-]+\.[A-Z]{2,}\b", re.I), "[REDACTED_EMAIL]"),  # email
    (re.compile(r"\b\d{12}\b"), "[REDACTED_AADHAAR]"),                                    # 12-digit Aadhaar
    (re.compile(r"\b[A-Z]{5}\d{4}[A-Z]\b"), "[REDACTED_PAN]")                             # PAN
]

def redact(t: str) -> str:
    for p, r in PII_PATTERNS: t = p.sub(r, t)
    return t

# Requests matching any of these patterns are refused before retrieval
POLICY_DISALLOWED = [
    re.compile(r"\b(share|exfiltrate)\b.*\b(raw|all)\b.*\bdata\b", re.I),
    re.compile(r"\bdisable\b.*\bencryption\b", re.I),
]

def policy_check(q: str):
    for r in POLICY_DISALLOWED:
        if r.search(q): return False, "Request violates security policy (data exfiltration/encryption tampering)."
    return True, ""
```

We embed all chunks using Sentence Transformers and store them in a FAISS index for fast retrieval. Because the embeddings are normalized, the inner-product index ranks chunks by cosine similarity. We then introduce PII redaction rules and policy checks to prevent misuse of data. Together, these help the assistant adhere to enterprise security and compliance guidelines.
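The redaction and policy rules are plain regular expressions, so they can be exercised without loading any model. The sketch below restates the four PII patterns and the two policy patterns with their backslashes intact. The replacement tokens (such as `[REDACTED_PHONE]`) and the sample strings are illustrative assumptions, not values taken from the tutorial.

```python
import re

# Replacement tokens are illustrative placeholders
PII_PATTERNS = [
    (re.compile(r"\b\d{10}\b"), "[REDACTED_PHONE]"),            # unformatted 10-digit phone number
    (re.compile(r"\b[A-Z0-9._%+-]+@[A-Z0-9.-]+\.[A-Z]{2,}\b", re.I), "[REDACTED_EMAIL]"),
    (re.compile(r"\b\d{12}\b"), "[REDACTED_AADHAAR]"),          # unformatted 12-digit Aadhaar number
    (re.compile(r"\b[A-Z]{5}\d{4}[A-Z]\b"), "[REDACTED_PAN]"),  # PAN: 5 letters, 4 digits, 1 letter
]

POLICY_DISALLOWED = [
    re.compile(r"\b(share|exfiltrate)\b.*\b(raw|all)\b.*\bdata\b", re.I),
    re.compile(r"\bdisable\b.*\bencryption\b", re.I),
]

def redact(t: str) -> str:
    # Apply each pattern in order, replacing every match with its token
    for pattern, token in PII_PATTERNS:
        t = pattern.sub(token, t)
    return t

def policy_check(q: str) -> tuple[bool, str]:
    # Refuse the request if any disallowed pattern matches anywhere in it
    for rule in POLICY_DISALLOWED:
        if rule.search(q):
            return False, "Request violates security policy (data exfiltration/encryption tampering)."
    return True, ""

print(redact("Reach me at 9876543210 or jane.doe@example.com, PAN ABCDE1234F."))
# Reach me at [REDACTED_PHONE] or [REDACTED_EMAIL], PAN [REDACTED_PAN].
print(policy_check("Is it allowed to share all raw customer data externally for testing?")[0])  # False
print(policy_check("How do we disable encryption on backups?")[0])                                # False
print(policy_check("What is our backup retention period?")[0])                                   # True
```

The phone and Aadhaar patterns only catch unbroken runs of digits. Numbers written with spaces or dashes pass through unredacted. Likewise, the policy rules are keyword heuristics rather than a semantic classifier.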

```python
def retrieve(query: str, k=4) -> List[Dict]:
    qv = emb_model.encode([query], normalize_embeddings=True, convert_to_numpy=True)
    scores, idxs = INDEX.search(qv, k)
    # FAISS pads results with id -1 when k exceeds the number of indexed chunks
    return [{**CORPUS[i], "score": float(s)} for s, i in zip(scores[0], idxs[0]) if i != -1]

SYSTEM = ("You are an enterprise AI assistant.\n"
          "- Answer strictly from the provided CONTEXT.\n"
          "- If information is missing, say what is unknown and suggest the right policy/runbook.\n"
          "- Keep it concise and cite titles + doc_ids inline like [Title (doc_id:chunk)].")

def build_prompt(user_q: str, ctx_blocks: List[Dict]) -> str:
    ctx = "\n\n".join(f"[{i+1}] {b['title']} (doc:{b['doc_id']}:{b['chunk_id']})\n{b['text']}" for i, b in enumerate(ctx_blocks))
    uq = redact(user_q)  # strip PII from the question before it enters the prompt
    return f"SYSTEM:\n{SYSTEM}\n\nCONTEXT:\n{ctx}\n\nUSER QUESTION:\n{uq}\n\nINSTRUCTIONS:\n- Cite sources inline.\n- Keep to 5-8 sentences.\n- Preserve redactions."

def answer(user_q: str, k=4, max_new_tokens=220) -> Dict:
    ok, msg = policy_check(user_q)
    if not ok: return {"answer": f"❌ {msg}", "ctx": []}
    ctx = retrieve(user_q, k=k); prompt = build_prompt(user_q, ctx)
    out = generate(prompt, max_new_tokens=max_new_tokens, do_sample=False)[0]["generated_text"].strip()
    return {"answer": out, "ctx": ctx}
```

We design the retrieval function to fetch the most relevant document chunks for each user query. We then construct a structured prompt that combines the retrieved context with the redacted question, so FLAN-T5 can generate precise answers. Because `policy_check` runs before retrieval, disallowed requests are refused without ever reaching the model. The system instructions also keep the answers grounded in the retrieved policies.
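`faiss.IndexFlatIP` performs an exhaustive search: it scores every stored vector by inner product with the query. Because the embeddings are normalized, that score is cosine similarity. The NumPy sketch below reproduces this search on hand-made 3-dimensional vectors, which are hypothetical stand-ins for MiniLM's 384-dimensional embeddings, so the ranking can be checked by eye. The helper names `normalize` and `flat_ip_search` are illustrative, not FAISS API.

```python
import numpy as np

def normalize(x: np.ndarray) -> np.ndarray:
    # Scale each row to unit L2 norm so that a dot product equals cosine similarity
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def flat_ip_search(index_vecs: np.ndarray, query_vecs: np.ndarray, k: int):
    """Brute-force inner-product search returning (scores, ids), like IndexFlatIP.search."""
    k = min(k, index_vecs.shape[0])         # never return more results than stored vectors
    sims = query_vecs @ index_vecs.T        # (n_queries, n_index) similarity matrix
    ids = np.argsort(-sims, axis=1)[:, :k]  # highest similarity first
    scores = np.take_along_axis(sims, ids, axis=1)
    return scores, ids

# Hypothetical toy embeddings, one per document chunk
docs = normalize(np.array([[1.0, 0.0, 0.0],    # "security policy"
                           [0.0, 1.0, 0.0],    # "incident runbook"
                           [0.7, 0.7, 0.0]]))  # "sales SOP" (mixes both topics)
query = normalize(np.array([[0.9, 0.1, 0.0]]))

scores, ids = flat_ip_search(docs, query, k=4)
print(ids[0])  # [0 2 1]
print(scores[0])
```

The query leans toward the first topic, so the "security policy" vector ranks first. The mixed "sales SOP" vector comes second. Unlike this sketch, FAISS keeps all k slots and fills the missing ones with id -1, which is why the tutorial's `retrieve` needs to skip that id.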

```python
def eval_query(user_q: str, ctx: List[Dict]) -> Dict:
    # Fraction of query words (4+ letters) that appear somewhere in the retrieved text
    terms = [w.lower() for w in re.findall(r"[a-zA-Z]{4,}", user_q)]
    ctx_text = " ".join(c["text"].lower() for c in ctx)
    hits = sum(t in ctx_text for t in terms)
    return {"terms": len(terms), "hits": hits, "hit_rate": round(hits / max(1, len(terms)), 2)}

QUERIES = [
    "What encryption and backup rules do we follow for customer data?",
    "Can we auto-answer RFP security questionnaires? What should we cite?",
    "If there is a suspected breach, what are the first three steps?",
    "Is it allowed to share all raw customer data externally for testing?"
]

for q in QUERIES:
    res = answer(q, k=3)
    print("\n" + "=" * 100); print("Q:", q); print("\nA:", res["answer"])
    if res["ctx"]:
        ev = eval_query(q, res["ctx"]); print("\nRetrieved Context (top 3):")
        for r in res["ctx"]: print(f"- {r['title']} [{r['doc_id']}:{r['chunk_id']}] score={r['score']:.3f}")
        print("Eval:", ev)
```

We evaluate the system with sample enterprise queries that test encryption, RFPs, and incident procedures. We also include one request that the policy check should block. For each query, we display the answer, the retrieved documents with their similarity scores, and a simple hit-rate score to check relevance. Through this demo, we observe our Enterprise AI assistant performing retrieval-augmented reasoning securely and accurately.
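The hit rate is a crude lexical check. It counts how many query words of four or more letters appear as substrings anywhere in the retrieved text. The worked example below scores the first sample query against the Data Security Policy text alone. This is a simplification, since the evaluation loop scores each query against all retrieved chunks. For brevity, this variant of `eval_query` also takes plain strings rather than chunk dictionaries.

```python
import re

def eval_query(user_q: str, ctx_texts: list[str]) -> dict:
    # Query terms: alphabetic tokens of 4+ letters, lowercased
    terms = [w.lower() for w in re.findall(r"[a-zA-Z]{4,}", user_q)]
    ctx_text = " ".join(t.lower() for t in ctx_texts)
    # A term counts as a hit if it occurs anywhere in the context as a substring
    hits = sum(t in ctx_text for t in terms)
    return {"terms": len(terms), "hits": hits, "hit_rate": round(hits / max(1, len(terms)), 2)}

q = "What encryption and backup rules do we follow for customer data?"
ctx = ["All customer data must be encrypted at rest (AES-256) and in transit (TLS 1.2+). "
       "Access is role-based (RBAC). Secrets are stored in a managed vault. "
       "Backups run nightly with 35-day retention. PII includes name, email, phone, address, PAN/Aadhaar."]
print(eval_query(q, ctx))  # {'terms': 7, 'hits': 3, 'hit_rate': 0.43}
```

The seven terms are what, encryption, backup, rules, follow, customer, and data. Only backup (inside "backups"), customer, and data are found, giving 3/7 ≈ 0.43. "encryption" misses even though the text says "encrypted", which shows how exact substring matching underrates relevant context.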

In conclusion, we created a self-contained enterprise AI system that retrieves, analyzes, and responds to enterprise queries while maintaining strong guardrails. We saw how seamlessly FAISS for retrieval, Sentence Transformers for embeddings, and FLAN-T5 for generation combine to simulate an internal enterprise knowledge engine. This simple Colab-based implementation can serve as a blueprint for scalable, auditable, and compliant enterprise deployments.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence media platform, Marktechpost. It stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views, illustrating its popularity among audiences.