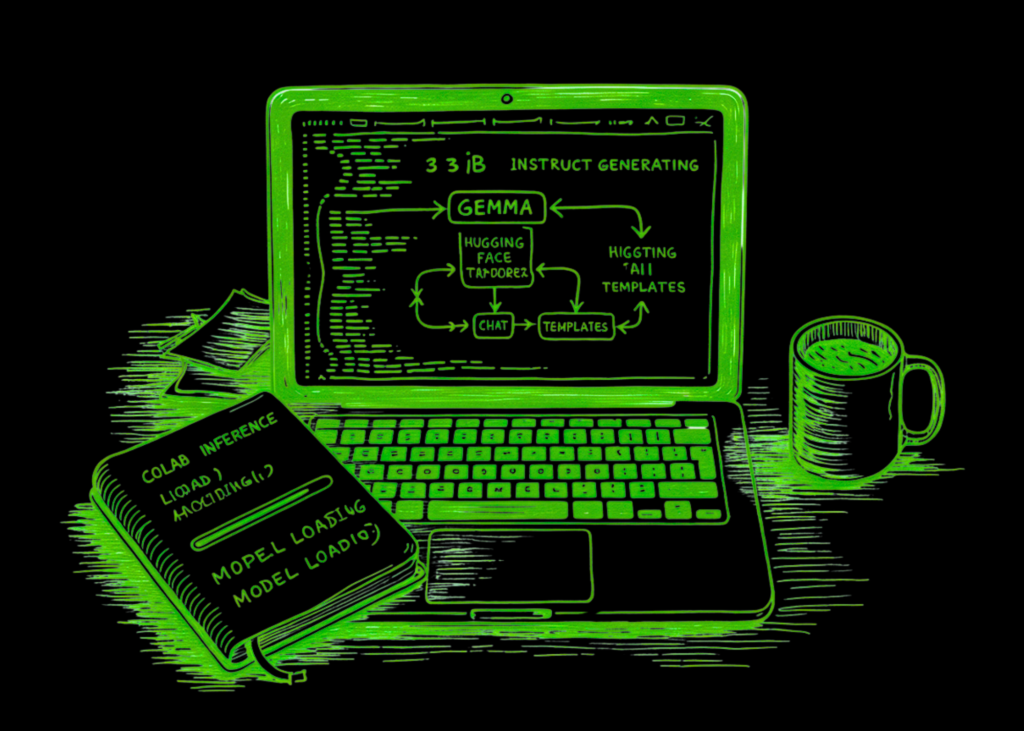

In this tutorial, we build and run a Colab workflow for Gemma 3 1B Instruct using Hugging Face Transformers and a Hugging Face access token, in a practical, reproducible, and easy-to-follow step-by-step manner. We start by installing the required libraries, securely authenticating with our Hugging Face token, and loading the tokenizer and model onto the available device with the correct precision settings. From there, we create reusable generation utilities, format prompts in a chat-style structure, and test the model across several realistic tasks such as basic generation, structured JSON-style responses, prompt chaining, benchmarking, and deterministic summarization, so we don't just load Gemma but actually work with it in a meaningful way.

    import os
    import sys
    import time
    import json
    import getpass
    import subprocess
    import warnings

    warnings.filterwarnings("ignore")

    def pip_install(*pkgs):
        # Install packages into the interpreter that is running the notebook.
        subprocess.check_call([sys.executable, "-m", "pip", "install", "-q", *pkgs])

    pip_install(
        "transformers>=4.51.0",
        "accelerate",
        "sentencepiece",
        "safetensors",
        "pandas",
    )

    import torch
    import pandas as pd
    from huggingface_hub import login
    from transformers import AutoTokenizer, AutoModelForCausalLM

    print("=" * 100)
    print("STEP 1: Hugging Face authentication")
    print("=" * 100)

    hf_token = None
    try:
        # Prefer a token stored in Colab secrets under the name HF_TOKEN.
        from google.colab import userdata
        try:
            hf_token = userdata.get("HF_TOKEN")
        except Exception:
            hf_token = None
    except Exception:
        # Not running in Colab; fall through to the interactive prompt.
        pass

    if not hf_token:
        hf_token = getpass.getpass("Enter your Hugging Face token: ").strip()

    login(token=hf_token)
    os.environ["HF_TOKEN"] = hf_token
    print("HF login successful.")

We set up the environment needed to run the tutorial smoothly in Google Colab. We install the required libraries, import all the core dependencies, and securely authenticate with Hugging Face using our token. By the end of this part, the notebook is ready to access the Gemma model and continue the workflow without manual setup issues.
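The token lookup above tries Colab secrets first and falls back to an interactive prompt. A minimal sketch of that fallback order as a single testable function is shown here. The helper name `resolve_hf_token` and its parameters are hypothetical, and the environment-variable step is an assumption added for illustration; the tutorial code does not include it.

```python
import os
from typing import Callable, Mapping, Optional


def resolve_hf_token(
    secret_getter: Optional[Callable[[str], Optional[str]]] = None,
    env: Mapping[str, str] = os.environ,
    prompt_fn: Optional[Callable[[str], str]] = None,
    name: str = "HF_TOKEN",
) -> str:
    """Return the first non-empty token from: secret store, environment, prompt."""
    # 1. Secret store (in Colab this could be userdata.get); failures are ignored.
    if secret_getter is not None:
        try:
            value = secret_getter(name)
            if value and value.strip():
                return value.strip()
        except Exception:
            pass
    # 2. Environment variable (an assumption added for this sketch).
    value = env.get(name, "")
    if value.strip():
        return value.strip()
    # 3. Interactive prompt, for example getpass.getpass.
    if prompt_fn is not None:
        value = prompt_fn(f"Enter your {name}: ").strip()
        if value:
            return value
    raise ValueError(f"No value found for {name}")
```

In a notebook this could be called as `resolve_hf_token(secret_getter=userdata.get, prompt_fn=getpass.getpass)`. Passing the lookups in as arguments keeps the function usable outside Colab and easy to test with stand-ins.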

    print("=" * 100)
    print("STEP 2: Device setup")
    print("=" * 100)

    # Use bfloat16 on GPU to halve weight memory; stay in float32 on CPU.
    device = "cuda" if torch.cuda.is_available() else "cpu"
    dtype = torch.bfloat16 if torch.cuda.is_available() else torch.float32
    print("device:", device)
    print("dtype:", dtype)

    model_id = "google/gemma-3-1b-it"
    print("model_id:", model_id)

    print("=" * 100)
    print("STEP 3: Load tokenizer and model")
    print("=" * 100)

    tokenizer = AutoTokenizer.from_pretrained(
        model_id,
        token=hf_token,
    )

    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        token=hf_token,
        torch_dtype=dtype,
        device_map="auto",
    )
    model.eval()  # inference mode: disables dropout
    print("Tokenizer and model loaded successfully.")

We configure the runtime by detecting whether we are using a GPU or a CPU and selecting the appropriate precision to load the model efficiently. We then define the Gemma 3 1B Instruct model path and load both the tokenizer and the model from Hugging Face. At this stage, we complete the core model initialization, making the notebook ready to generate text.
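To see why the precision choice matters, here is a rough back-of-the-envelope estimate of weight memory per dtype. It is a sketch under stated assumptions: the parameter count is a round 1 billion rather than the model's exact size, and the figure covers weights only, not activations or the KV cache. The function name `estimate_weight_memory_gib` is hypothetical.

```python
# Bytes needed to store one parameter in each precision.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "float16": 2}


def estimate_weight_memory_gib(num_params: int, dtype_name: str) -> float:
    """Approximate memory for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype_name] / (1024 ** 3)


approx_params = 1_000_000_000  # assumption: round figure, not the exact count
for name in ("bfloat16", "float32"):
    gib = estimate_weight_memory_gib(approx_params, name)
    print(f"{name}: ~{gib:.2f} GiB")
```

Under these assumptions the weights take about 1.86 GiB in bfloat16 and about 3.73 GiB in float32, which is why the GPU path loads the model in half precision.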

    def build_chat_prompt(user_prompt: str):
        messages = [
            {"role": "user", "content": user_prompt}
        ]
        try:
            # Let the tokenizer apply the model's own chat template.
            text = tokenizer.apply_chat_template(
                messages,
                tokenize=False,
                add_generation_prompt=True
            )
        except Exception:
            # Fallback: write the Gemma turn markers by hand.
            text = f"<start_of_turn>user\n{user_prompt}<end_of_turn>\n<start_of_turn>model\n"
        return text

    def generate_text(prompt, max_new_tokens=256, temperature=0.7, do_sample=True):
        chat_text = build_chat_prompt(prompt)
        inputs = tokenizer(chat_text, return_tensors="pt").to(model.device)
        with torch.no_grad():
            outputs = model.generate(
                **inputs,
                max_new_tokens=max_new_tokens,
                do_sample=do_sample,
                # Sampling parameters only apply when do_sample is True.
                temperature=temperature if do_sample else None,
                top_p=0.95 if do_sample else None,
                eos_token_id=tokenizer.eos_token_id,
                pad_token_id=tokenizer.eos_token_id,
            )
        # Keep only the newly generated tokens, dropping the echoed prompt.
        generated = outputs[0][inputs["input_ids"].shape[-1]:]
        return tokenizer.decode(generated, skip_special_tokens=True).strip()

    print("=" * 100)
    print("STEP 4: Basic generation")
    print("=" * 100)

    prompt1 = """Explain Gemma 3 in plain English.
    Then give:
    1. one practical use case
    2. one limitation
    3. one Colab tip
    Keep it concise."""

    resp1 = generate_text(prompt1, max_new_tokens=220, temperature=0.7, do_sample=True)
    print(resp1)

We build the reusable functions that format prompts into the expected chat structure and handle text generation from the model. We make the inference pipeline modular so we can reuse the same function across different tasks in the notebook. After that, we run a first practical generation example to confirm that the model is working correctly and producing meaningful output.
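To make the chat structure concrete, the following sketch builds a Gemma-style prompt string by hand for a list of messages. It is an illustration only: the function name `format_gemma_turns` is hypothetical, and the tokenizer's own chat template remains the authoritative source (it also handles details this sketch leaves out, such as the beginning-of-sequence token and system messages).

```python
from typing import Dict, List


def format_gemma_turns(
    messages: List[Dict[str, str]],
    add_generation_prompt: bool = True,
) -> str:
    """Render messages with Gemma-style turn markers (simplified sketch)."""
    # Gemma labels the assistant side of the conversation as "model".
    role_map = {"user": "user", "assistant": "model"}
    parts = []
    for msg in messages:
        role = role_map[msg["role"]]
        content = msg["content"].strip()
        parts.append(f"<start_of_turn>{role}\n{content}<end_of_turn>\n")
    if add_generation_prompt:
        # Open a model turn so generation continues as the assistant.
        parts.append("<start_of_turn>model\n")
    return "".join(parts)


example = format_gemma_turns([{"role": "user", "content": "Hi"}])
print(example)
```

The trailing open model turn is what `add_generation_prompt=True` contributes: without it, the model has no cue that it is its turn to answer.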

    print("=" * 100)
    print("STEP 5: Structured output")
    print("=" * 100)

    prompt2 = """
    Compare local open-weight model usage vs API-hosted model usage.
    Return JSON with this schema:
    {
      "local": {
        "pros": ["", "", ""],
        "cons": ["", "", ""]
      },
      "api": {
        "pros": ["", "", ""],
        "cons": ["", "", ""]
      },
      "best_for": {
        "local": "",
        "api": ""
      }
    }
    Only output JSON.
    """

    # A low temperature keeps the output close to the requested format.
    resp2 = generate_text(prompt2, max_new_tokens=300, temperature=0.2, do_sample=True)
    print(resp2)

    print("=" * 100)
    print("STEP 6: Prompt chaining")
    print("=" * 100)

    task = "Draft a 5-step checklist for evaluating whether Gemma fits an internal enterprise prototype."
    resp3 = generate_text(task, max_new_tokens=250, temperature=0.6, do_sample=True)
    print(resp3)

    # Feed the first response back in as context for a second instruction.
    followup = f"""
    Here is an initial checklist:
    {resp3}
    Now rewrite it for a product manager audience.
    """

    resp4 = generate_text(followup, max_new_tokens=250, temperature=0.6, do_sample=True)
    print(resp4)

We push the model beyond simple prompting by testing structured output generation and prompt chaining. We ask Gemma to return a response in a defined JSON-like format and then use a follow-up instruction to transform an earlier response for a different audience. This helps us see how the model handles formatting constraints and multi-step refinement in a practical workflow.
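A small model will not always return clean JSON: it may wrap the object in a Markdown code fence or add a sentence before it. The sketch below shows one way to recover and parse the object from such a response. The helper name `extract_json` is hypothetical, and the approach (strip a fence, then take the text between the first `{` and the last `}`) is a simple heuristic rather than a robust parser.

```python
import json
import re
from typing import Any, Optional

# Built this way so the sketch itself contains no literal fence marker.
FENCE = "`" * 3
FENCED_BLOCK = re.compile(
    re.escape(FENCE) + r"(?:json)?\s*(.*?)" + re.escape(FENCE),
    re.DOTALL,
)


def extract_json(text: str) -> Optional[Any]:
    """Return the parsed JSON object found in text, or None if parsing fails."""
    # Prefer the contents of a fenced block when the model produced one.
    fenced = FENCED_BLOCK.search(text)
    candidate = fenced.group(1) if fenced else text
    # Keep only the span from the first "{" to the last "}".
    start = candidate.find("{")
    end = candidate.rfind("}")
    if start == -1 or end <= start:
        return None
    try:
        return json.loads(candidate[start:end + 1])
    except json.JSONDecodeError:
        return None


sample = 'Sure!\n' + FENCE + 'json\n{"local": {"pros": ["private"]}}\n' + FENCE
print(extract_json(sample))
```

Returning `None` instead of raising lets the notebook decide what to do with a malformed response, for example retrying the prompt or storing the raw text.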

    print("=" * 100)
    print("STEP 7: Mini benchmark")
    print("=" * 100)

    prompts = [
        "Explain tokenization in two lines.",
        "Give three use cases for local LLMs.",
        "What is one downside of small local models?",
        "Explain instruction tuning in one paragraph."
    ]

    rows = []
    for p in prompts:
        # Wall-clock time for one full generation call.
        t0 = time.time()
        out = generate_text(p, max_new_tokens=140, temperature=0.3, do_sample=True)
        dt = time.time() - t0
        rows.append({
            "prompt": p,
            "latency_sec": round(dt, 2),
            "chars": len(out),
            "preview": out[:160].replace("\n", " ")
        })

    df = pd.DataFrame(rows)
    print(df)

    print("=" * 100)
    print("STEP 8: Deterministic summarization")
    print("=" * 100)

    long_text = """
    In practical usage, teams often evaluate
    trade-offs among local deployment cost, latency, privacy, controllability, and raw capability.
    Smaller models can be easier to deploy, but they may struggle more on complex reasoning or domain-specific tasks.
    """

    summary_prompt = f"""
    Summarize the following in exactly 4 bullet points:
    {long_text}
    """

    # do_sample=False selects greedy decoding, so repeated runs give the same text.
    summary = generate_text(summary_prompt, max_new_tokens=180, do_sample=False)
    print(summary)

    print("=" * 100)
    print("STEP 9: Save outputs")
    print("=" * 100)

    report = {
        "model_id": model_id,
        "device": str(model.device),
        "basic_generation": resp1,
        "structured_output": resp2,
        "chain_step_1": resp3,
        "chain_step_2": resp4,
        "summary": summary,
        "benchmark": rows,
    }

    with open("gemma3_1b_text_tutorial_report.json", "w", encoding="utf-8") as f:
        json.dump(report, f, indent=2, ensure_ascii=False)

    print("Saved gemma3_1b_text_tutorial_report.json")
    print("Tutorial complete.")

We evaluate the model across a small benchmark of prompts to observe response behavior, latency, and output length in a compact experiment. We then perform a deterministic summarization task, using greedy decoding (`do_sample=False`), to see how the model behaves when sampling randomness is removed. Finally, we save all the major outputs to a report file, turning the notebook into a reusable experimental setup rather than just a temporary demo.
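A single timed call per prompt can be noisy, since the first generation often includes one-off warm-up costs. As a hedged variation on the benchmark loop, the sketch below repeats each prompt and reports the median latency. The names `benchmark` and `fake_generate` are hypothetical, and `fake_generate` is a stand-in so the example runs without a model; in the notebook, a function such as `generate_text` would be passed instead.

```python
import statistics
import time
from typing import Callable, Dict, List


def benchmark(
    generate: Callable[[str], str],
    prompts: List[str],
    repeats: int = 3,
) -> List[Dict[str, object]]:
    """Time generate() on each prompt and report the median latency."""
    rows = []
    for prompt in prompts:
        latencies = []
        output = ""
        for _ in range(repeats):
            # perf_counter is a monotonic clock intended for interval timing.
            t0 = time.perf_counter()
            output = generate(prompt)
            latencies.append(time.perf_counter() - t0)
        median = statistics.median(latencies)
        rows.append({
            "prompt": prompt,
            "median_latency_sec": round(median, 4),
            "chars": len(output),  # length of the last output only
            "chars_per_sec": round(len(output) / max(median, 1e-9), 1),
        })
    return rows


def fake_generate(prompt: str) -> str:
    """Stand-in for a model call: sleeps briefly and echoes the prompt."""
    time.sleep(0.01)
    return prompt.upper()


for row in benchmark(fake_generate, ["Explain tokenization.", "Hi"], repeats=2):
    print(row)
```

With sampling enabled, output length varies between runs, so characters per second is only a rough throughput indicator; counting generated tokens would be more precise.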

In conclusion, we have a complete text-generation pipeline that shows how Gemma 3 1B can be used in Colab for practical experimentation and lightweight prototyping. We generated direct responses, compared outputs across different prompting styles, measured simple latency behavior, and saved the results to a report file for later inspection. In doing so, we turned the notebook into more than a one-off demo: we made it a reusable foundation for testing prompts, evaluating outputs, and integrating Gemma into larger workflows.
