**Breaking Down the Bottleneck: NVIDIA AI’s KVZAP for Transformer Caches**

If you work with Transformers, you know that as context lengths grow, the key-value (KV) cache becomes a significant deployment bottleneck: it limits batch size and increases time to first token. But what if there were a way to prune the KV cache at near-lossless compression ratios of 2x-4x? Enter KVZAP, a recently open-sourced method from NVIDIA AI.

**Embracing Sequence-Axis Compression**

Existing approaches to shrinking the KV cache, such as Grouped Query Attention (GQA) and the Multi-head Latent Attention used in DeepSeek V2, have already gained traction. These methods compress the cache along other axes, for example by sharing keys and values across heads or by projecting them into a smaller latent space. What they leave untouched is the sequence axis: every token still gets its own cache entry. For long-context serving, that is a major limitation.
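To make the axes concrete, here is a rough back-of-the-envelope calculation of KV cache size. The model configuration (32 layers, head dimension 128, a 131,072-token context, 16-bit values) is a hypothetical example chosen for illustration, not a figure from the KVZAP release, and `kv_cache_bytes` is just a helper name for this sketch.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int = 1,
                   bytes_per_value: int = 2) -> int:
    """Approximate KV cache size for a decoder-only Transformer.

    The leading factor of 2 accounts for storing both a key and a value
    vector per token, per KV head, per layer.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


GIB = 2 ** 30

# Hypothetical configuration, for illustration only.
full_heads = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128, seq_len=131072)
grouped = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=131072)
pruned = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=131072 // 4)

print(full_heads / GIB)  # 64.0 GiB with one KV head per query head
print(grouped / GIB)     # 16.0 GiB after sharing KV heads (head axis)
print(pruned / GIB)      # 4.0 GiB after also keeping 1 in 4 tokens (sequence axis)
```

Head sharing shrinks the `num_kv_heads` term, while sequence-axis pruning shrinks `seq_len`, so the two savings multiply rather than compete.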

**Meet the Surrogate Model: KVZAP**

NVIDIA AI’s KVZAP takes a different tack, relying on a small surrogate model that operates on hidden states. The surrogate stands in for the oracle scoring mechanism: it learns to regress from the hidden state to the log KVzip+ score, trained on prompts from the Nemotron Pretraining Dataset. The results are promising, with the MLP variant showing a Pearson correlation of 0.63-0.77 with the oracle scores.
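The regression setup can be illustrated with a toy example. The sketch below is not the KVZAP training code: it fits a plain linear model (a simpler stand-in for the MLP variant) on synthetic data, where the "hidden states" are random 4-dimensional vectors and the "oracle log scores" are a made-up linear function plus noise. All names and numbers are assumptions for illustration, so the resulting correlation says nothing about the reported 0.63-0.77.

```python
import math
import random
from typing import List, Tuple


def predict(w: List[float], b: float, h: List[float]) -> float:
    """Linear surrogate: predicted log score for one hidden state."""
    return sum(wi * hi for wi, hi in zip(w, h)) + b


def fit_linear_surrogate(H: List[List[float]], y: List[float],
                         lr: float = 0.1, epochs: int = 300) -> Tuple[List[float], float]:
    """Fit weights and bias by full-batch gradient descent on squared error."""
    dim, n = len(H[0]), len(H)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for h, target in zip(H, y):
            err = predict(w, b, h) - target
            for j in range(dim):
                grad_w[j] += err * h[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def pearson(a: List[float], b: List[float]) -> float:
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (t - mb) for x, t in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((t - mb) ** 2 for t in b)
    return cov / math.sqrt(va * vb)


# Synthetic stand-ins for hidden states and oracle log scores (assumed data).
rng = random.Random(0)
true_w = [1.5, -2.0, 0.5, 0.0]
H = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(200)]
y = [predict(true_w, -5.0, h) + rng.gauss(0.0, 0.5) for h in H]

w, b = fit_linear_surrogate(H, y)
r = pearson([predict(w, b, h) for h in H], y)
print(f"Pearson correlation on the toy data: {r:.3f}")
```

The point of the sketch is the shape of the problem: one cheap forward pass over a hidden state yields a score, and the quality of the surrogate is judged by how well those scores track the oracle.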

**How KVZAP Works**

At inference time, the KVZAP model reads the hidden states and produces a score for each cache entry. Entries whose score falls below a set threshold are pruned, with one exception: a sliding window of the 128 most recent tokens is always kept. The goal is efficient compression with minimal overhead, making the method suitable for production use.
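The pruning rule described above can be sketched in a few lines. This is a minimal illustration, assuming one score per cached token position; the function names, the example scores, and the threshold value are hypothetical, and the real implementation operates on tensors per layer and head rather than Python lists.

```python
from typing import List


def keep_mask(scores: List[float], threshold: float, window: int = 128) -> List[bool]:
    """Return True for each cache position that should be kept.

    A position is kept if it lies inside the sliding window of the most
    recent `window` tokens, or if its score is at or above the threshold.
    """
    recent_start = max(0, len(scores) - window)
    return [i >= recent_start or s >= threshold for i, s in enumerate(scores)]


def compression_ratio(mask: List[bool]) -> float:
    """Original cache length divided by the number of entries kept."""
    kept = sum(mask)
    return len(mask) / kept if kept else float("inf")


# Toy example with a window of 2 so the effect is visible on a short sequence.
scores = [-9.0, -1.0, -7.5, -0.5, -8.0, -6.0]
mask = keep_mask(scores, threshold=-4.0, window=2)
print(mask)                     # [False, True, False, True, True, True]
print(compression_ratio(mask))  # 1.5
```

Note that the last two positions survive even though their scores are below the threshold, because the sliding window protects the most recent tokens. Raising the threshold prunes more aggressively and increases the compression ratio.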

**Real-World Performance**

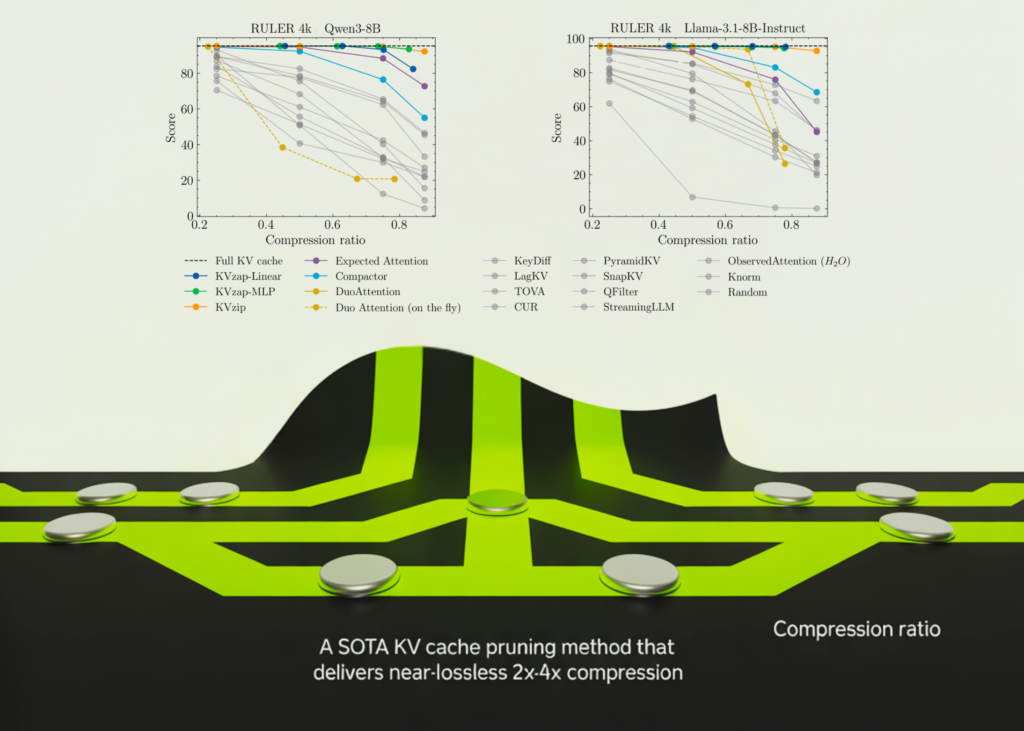

KVZAP has been tested on several benchmarks, including RULER, LongBench, and AIME25. Across them, it stays close to the full-cache baseline while trimming a substantial portion of the cache. On RULER, KVZAP delivers compression ratios of 2-4x with accuracy near the full-cache baseline. On LongBench, it stays close to the baseline up to a compression ratio of 2-3x.

**Takeaways and Next Steps**

**KVZAP** prunes the KV cache along the sequence axis, using a small surrogate model that learns to predict oracle scores from hidden states.

**KVZAP** achieves near-lossless compression ratios of 2x-4x, with accuracy close to the full-cache baseline.

**The code is open-sourced on GitHub for you to try.**
