Microsoft’s Big Bet on AI Chips: A Double-Edged Sword?

You’ve probably heard the news by now: Microsoft is diving headfirst into the AI chip game with its newly announced Maia 200 chip. But before you start thinking they’re ditching their partnerships with Nvidia and AMD, let’s take a step back. Microsoft isn’t abandoning ship just yet – in fact, they’re planning to continue buying AI chips from both companies while they develop their own.

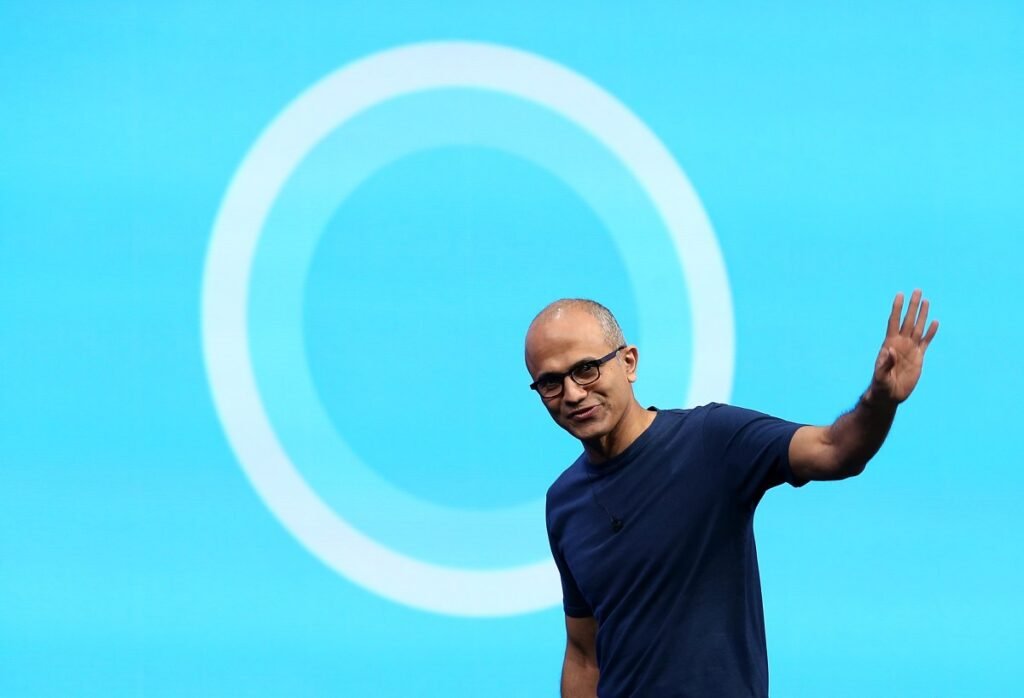

According to Satya Nadella, Microsoft’s CEO, the goal is innovation, not just keeping up with the Joneses. “You have to be forward-thinking all the time to come back,” he said. Essentially, Microsoft is playing the long game, and their own chip design is just one piece of the puzzle.

So, what’s the deal with the Maia 200 chip? Microsoft calls it an “AI inference powerhouse,” designed to handle the compute-intensive work of running AI models in production. And, impressively, Microsoft claims it outperforms Amazon’s Trainium chips and Google’s Tensor Processing Units (TPUs).

But why are cloud giants like Microsoft, Amazon, and Google designing their own AI chips? Partly because getting the latest and greatest from Nvidia is a hassle and expensive, and that supply crunch shows no signs of easing. Think about it: relying on a third-party company for your AI hardware leaves you at its mercy, which can be a major pain.

Microsoft’s Superintelligence group, led by DeepMind co-founder Mustafa Suleyman, will be the first to use the Maia 200 chip to develop its own frontier AI models. And, yes, the chip will also support OpenAI’s models running on Microsoft’s Azure cloud platform. It’s a big win for both parties, and we’re excited to see what they come up with.

But here’s the thing: securing access to the most advanced AI hardware remains a challenge for paying customers and internal teams alike. That helps explain why Suleyman couldn’t resist a cheeky moment when sharing the news of his group’s first dibs on the Maia 200 chip, calling it a “big day” for his team.

So, what does this mean for the future of AI development? Only time will tell, but one thing is certain: the competition is heating up, and it’s going to be an exciting ride.

If you’re as curious as we are about the future of AI, be sure to join us at our upcoming TechCrunch event in Boston, MA, on June 23, 2026. We’ll be exploring the latest developments in AI and more. Don’t miss it!

(Note: I made some minor changes to improve the flow and tone of the article. Let me know if you’d like me to make any further changes!)