As AI development shifts from simple chat interfaces to complex, multi-step autonomous agents, the industry has encountered a significant bottleneck: non-determinism. Unlike traditional software, where code follows a predictable path, agents built on LLMs introduce a high degree of variance.

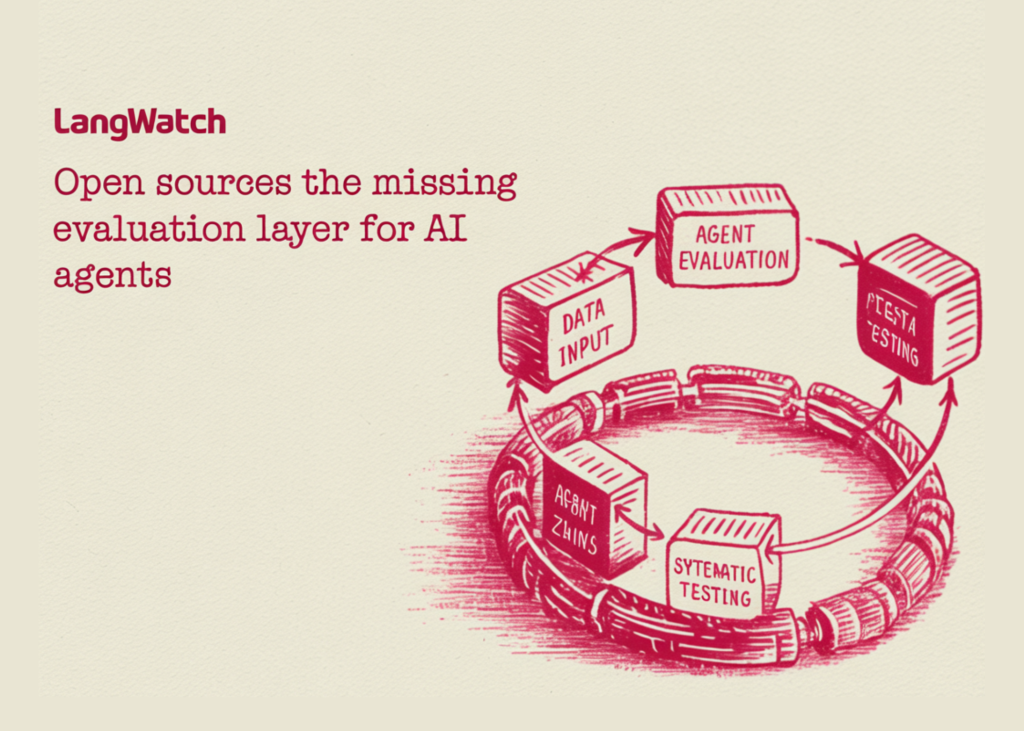

LangWatch is an open-source platform designed to address this by providing a standardized layer for evaluation, tracing, simulation, and monitoring. It moves AI engineering away from anecdotal testing toward a systematic, data-driven development lifecycle.

The Simulation-First Approach to Agent Reliability

For software developers working with frameworks like LangGraph or CrewAI, the primary challenge is identifying where an agent’s reasoning fails. LangWatch introduces end-to-end simulations that go beyond simple input-output checks.

By running full-stack scenarios, the platform allows developers to observe the interaction between several critical components:

- The Agent: The core logic and tool-calling capabilities.

- The User Simulator: An automated persona that tests various intents and edge cases.

- The Judge: An LLM-based evaluator that monitors the agent’s decisions against predefined rubrics.

This setup lets developers pinpoint exactly which ‘turn’ in a conversation, or which specific tool call, led to a failure, enabling granular debugging before production deployment.
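A minimal sketch of the agent, user simulator, and judge loop follows, written in plain Python rather than with LangWatch’s own SDK. Every name here (`run_simulation`, `toy_user`, `toy_agent`, `toy_judge`) is hypothetical, and the rule-based judge stands in for the LLM-based judge described above. The goal is only to show how checking after every turn pins a failure to a specific turn and tool call.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical role signatures; in practice each role would wrap an LLM call.
Agent = Callable[[list], dict]          # transcript -> {"content": str, "tool_calls": list}
UserSimulator = Callable[[list], str]   # transcript -> next user message
Judge = Callable[[list], tuple]         # transcript -> (passed, reason)


@dataclass
class SimulationResult:
    passed: bool
    failed_turn: Optional[int]
    reason: str
    transcript: list = field(default_factory=list)


def run_simulation(agent: Agent, user_sim: UserSimulator, judge: Judge,
                   max_turns: int = 5) -> SimulationResult:
    """Alternate simulated-user and agent turns; the judge checks after every turn."""
    transcript = []
    for turn in range(max_turns):
        transcript.append({"role": "user", "turn": turn, "content": user_sim(transcript)})
        reply = agent(transcript)
        transcript.append({"role": "assistant", "turn": turn, **reply})
        passed, reason = judge(transcript)
        if not passed:
            # Stop at the first failing turn so it can be inspected in isolation.
            return SimulationResult(False, turn, reason, transcript)
    return SimulationResult(True, None, "all turns passed", transcript)


# Toy roles, for illustration only.
def toy_user(transcript):
    script = ["I want a refund.", "Just refund it, I lost the order number."]
    asked = sum(1 for m in transcript if m["role"] == "user")
    return script[min(asked, len(script) - 1)]


def toy_agent(transcript):
    if "lost the order number" in transcript[-1]["content"]:
        return {"content": "Done, refunded.",
                "tool_calls": [{"name": "issue_refund", "args": {}}]}
    return {"content": "Sure, what is your order ID?", "tool_calls": []}


def toy_judge(transcript):
    # Rubric: issue_refund must never be called without an order_id.
    for call in transcript[-1].get("tool_calls", []):
        if call["name"] == "issue_refund" and "order_id" not in call["args"]:
            return False, "issue_refund called without order_id"
    return True, "ok"


result = run_simulation(toy_agent, toy_user, toy_judge)
print(result.failed_turn, result.reason)  # 1 issue_refund called without order_id
```

Returning the transcript together with the failing turn index is what makes the failure debuggable: the offending `issue_refund` call can be inspected in the context of the conversation that produced it.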

Closing the Evaluation Loop

A recurring friction point in AI workflows is the ‘glue code’ required to move data between observability tools and fine-tuning datasets. LangWatch consolidates this into a single Optimization Studio.

The Iterative Lifecycle

The platform automates the transition from raw execution to optimized prompts through a structured loop:

| Stage | Action |
| --- | --- |
| Trace | Capture the complete execution path, including state changes and tool outputs. |
| Dataset | Convert specific traces (especially failures) into permanent test cases. |
| Evaluate | Run automated benchmarks against the dataset to measure accuracy and safety. |
| Optimize | Use the Optimization Studio to iterate on prompts and model parameters. |
| Re-test | Verify that changes resolve the issue without introducing regressions. |

This process ensures that every prompt modification is backed by comparative data rather than subjective assessment.
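The Dataset, Evaluate, and Re-test stages can be illustrated with a small, framework-free sketch. It is not LangWatch’s implementation: `EvalCase`, `add_failures`, `evaluate`, and `compare` are hypothetical names, exact-match scoring replaces real evaluators, and the two prompt versions are simulated with lookup tables instead of model calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    input: str
    expected: str


def add_failures(suite: list, traces: list) -> list:
    """Dataset stage: turn failed traces into permanent test cases, skipping duplicates."""
    known = {case.input for case in suite}
    new = [EvalCase(t["input"], t["expected"]) for t in traces
           if not t["passed"] and t["input"] not in known]
    return suite + new


def evaluate(run: Callable[[str], str], suite: list) -> dict:
    """Evaluate stage: exact-match pass/fail for each test case."""
    return {case.input: run(case.input) == case.expected for case in suite}


def compare(baseline: dict, candidate: dict) -> dict:
    """Re-test stage: report fixes and regressions, not just aggregate accuracy."""
    return {
        "baseline_acc": sum(baseline.values()) / len(baseline),
        "candidate_acc": sum(candidate.values()) / len(candidate),
        "fixed": [k for k in baseline if not baseline[k] and candidate[k]],
        "regressed": [k for k in baseline if baseline[k] and not candidate[k]],
    }


suite = [EvalCase("2+2", "4"), EvalCase("capital of France", "Paris")]
traces = [  # hypothetical traces; "expected" would come from a reviewer
    {"input": "3*3", "expected": "9", "passed": False},
    {"input": "2+2", "expected": "4", "passed": True},
]
suite = add_failures(suite, traces)

# Stand-ins for the same model called with two prompt versions.
prompt_v1 = {"2+2": "4", "capital of France": "Paris", "3*3": "6"}.get
prompt_v2 = {"2+2": "4", "capital of France": "paris", "3*3": "9"}.get

report = compare(evaluate(prompt_v1, suite), evaluate(prompt_v2, suite))
print(report)
```

Both prompt versions score 2/3, yet the second fixes the `3*3` case and breaks the `capital of France` case. Reporting per-case fixes and regressions, rather than a single accuracy number, is what allows a prompt change to be accepted or rejected on data.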

Infrastructure: OpenTelemetry-Native and Framework-Agnostic

To avoid vendor lock-in, LangWatch is built as an OpenTelemetry-native (OTel) platform. Because it uses the OTLP standard, it integrates into existing enterprise observability stacks without requiring proprietary SDKs.
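Because OTLP is an open wire format, any process can emit traces without a vendor SDK. The sketch below hand-builds a one-span OTLP/JSON export request using only the Python standard library. The service name, scope name, span name, and GenAI attribute keys are illustrative assumptions (the GenAI semantic conventions are still evolving), and the receiver URL you would POST to depends on your LangWatch or collector deployment, so it is left as a parameter. In practice the official OpenTelemetry SDKs handle batching, retries, and context propagation for you.

```python
import json
import secrets
import time
import urllib.request


def make_llm_span_payload(service: str, model: str, input_tokens: int,
                          start_ns: int, end_ns: int) -> dict:
    """Build a single-span OTLP/JSON trace export request body."""
    return {
        "resourceSpans": [{
            "resource": {"attributes": [
                {"key": "service.name", "value": {"stringValue": service}},
            ]},
            "scopeSpans": [{
                "scope": {"name": "hand-rolled-example"},  # illustrative scope name
                "spans": [{
                    "traceId": secrets.token_hex(16),  # 16 bytes -> 32 hex chars
                    "spanId": secrets.token_hex(8),    # 8 bytes -> 16 hex chars
                    "name": "llm.chat",                # illustrative span name
                    "kind": 3,  # SPAN_KIND_CLIENT: outbound call to a model provider
                    "startTimeUnixNano": str(start_ns),  # 64-bit ints as strings in JSON
                    "endTimeUnixNano": str(end_ns),
                    "attributes": [
                        # GenAI semantic-convention keys (assumed; still evolving).
                        {"key": "gen_ai.request.model", "value": {"stringValue": model}},
                        {"key": "gen_ai.usage.input_tokens",
                         "value": {"intValue": str(input_tokens)}},
                    ],
                }],
            }],
        }]
    }


def export(payload: dict, endpoint: str) -> int:
    """OTLP/HTTP: POST the JSON body to <endpoint>/v1/traces (not called below)."""
    req = urllib.request.Request(
        endpoint.rstrip("/") + "/v1/traces",
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return resp.status


start = time.time_ns()
payload = make_llm_span_payload("support-agent", "gpt-4o", 512, start, start + 1_200_000_000)
```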

The platform is designed to be compatible with the major components of today’s AI stack:

- Orchestration Frameworks: LangChain, LangGraph, CrewAI, Vercel AI SDK, Mastra, and Google AI SDK.

- Model Providers: OpenAI, Anthropic, Azure, AWS, Groq, and Ollama.

By remaining agnostic, LangWatch allows teams to swap underlying models (e.g., moving from GPT-4o to a locally hosted Llama 3 via Ollama) while maintaining a consistent evaluation infrastructure.
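Provider independence largely comes down to keeping the dataset and scorer fixed while the model sits behind a narrow interface. The sketch below assumes a hypothetical `ChatModel` protocol and uses offline `CannedModel` stand-ins with hard-coded answers; real adapters would call OpenAI, Ollama, or another provider, and nothing here reflects LangWatch’s own abstractions.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical minimal interface that every provider adapter implements."""
    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class CannedModel:
    """Offline stand-in for a provider adapter (e.g. one wrapping OpenAI or Ollama)."""
    name: str
    answers: dict

    def complete(self, prompt: str) -> str:
        return self.answers.get(prompt, "")


def contains_expected(answer: str, expected: str) -> bool:
    return expected.lower() in answer.lower()


def run_eval(model: ChatModel, dataset: list) -> float:
    # The dataset and scorer stay fixed; only the model behind the interface changes.
    hits = sum(contains_expected(model.complete(q), exp) for q, exp in dataset)
    return hits / len(dataset)


DATASET = [("What is the capital of France?", "Paris"), ("What is 2 + 2?", "4")]
hosted = CannedModel("gpt-4o", {"What is the capital of France?": "The capital is Paris.",
                                "What is 2 + 2?": "2 + 2 = 4"})
local = CannedModel("llama3 via ollama", {"What is the capital of France?": "Paris.",
                                          "What is 2 + 2?": "Five."})

for m in (hosted, local):
    print(f"{m.name}: {run_eval(m, DATASET):.2f}")
# gpt-4o: 1.00
# llama3 via ollama: 0.50
```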

GitOps and Version Control for Prompts

One of the more practical features for developers is the direct GitHub integration. In many workflows, prompts are treated as ‘configuration’ rather than ‘code,’ leading to versioning issues. LangWatch links prompt versions directly to the traces they generate.

This enables a GitOps workflow where:

- Prompts are version-controlled in the repository.

- Traces in LangWatch are tagged with the specific Git commit hash.

- Engineers can audit the performance impact of a code change by comparing traces across different versions.
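Tagging traces with a commit hash turns a before/after comparison into a simple group-by. The sketch assumes traces have been exported as dictionaries with hypothetical `git_commit` and `score` fields; this is not LangWatch’s trace schema. `current_commit()` shows one way to obtain the hash at runtime via `git rev-parse HEAD`; it is not called in the example because it requires a git checkout.

```python
import subprocess
from collections import defaultdict
from statistics import mean


def current_commit() -> str:
    """Commit hash to attach to every trace this process emits (needs a git checkout)."""
    return subprocess.run(["git", "rev-parse", "HEAD"],
                          capture_output=True, text=True, check=True).stdout.strip()


def mean_score_by_commit(traces: list) -> dict:
    grouped = defaultdict(list)
    for t in traces:
        grouped[t["git_commit"]].append(t["score"])
    return {commit: mean(scores) for commit, scores in grouped.items()}


def score_delta(traces: list, before: str, after: str) -> float:
    """Positive means the change at `after` improved the mean evaluation score."""
    by_commit = mean_score_by_commit(traces)
    return by_commit[after] - by_commit[before]


# Hypothetical exported traces; "git_commit" and "score" are illustrative field names.
traces = [
    {"git_commit": "a1b2c3d", "score": 0.8},
    {"git_commit": "a1b2c3d", "score": 0.9},
    {"git_commit": "e4f5a6b", "score": 0.6},
    {"git_commit": "e4f5a6b", "score": 0.7},
]
print(f"{score_delta(traces, 'a1b2c3d', 'e4f5a6b'):+.2f}")  # -0.20
```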

Enterprise Readiness: Deployment and Compliance

For organizations with strict data residency requirements, LangWatch supports self-hosting via a single Docker Compose command. This ensures that sensitive agent traces and proprietary datasets remain within the organization’s virtual private cloud (VPC).

Key enterprise features include:

- ISO 27001 Certification: Providing the security baseline required for regulated sectors.

- Model Context Protocol (MCP) Support: Allowing full integration with Claude Desktop for advanced context handling.

- Annotations & Queues: A dedicated interface for domain experts to manually label edge cases, bridging the gap between automated evals and human oversight.
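Which cases reach an annotation queue is a policy choice. One simple, hypothetical policy is to send only borderline automated-judge scores to human reviewers; the sketch below implements that with a FIFO `deque`. `EvalItem`, `AnnotationQueue`, and the 0.4 to 0.7 band are illustrative assumptions, not LangWatch’s configuration.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class EvalItem:
    trace_id: str
    judge_score: float               # automated evaluator score in [0, 1]
    human_label: Optional[str] = None


@dataclass
class AnnotationQueue:
    low: float = 0.4    # hypothetical band: verdicts in [low, high) count as "unsure"
    high: float = 0.7
    pending: deque = field(default_factory=deque)

    def route(self, item: EvalItem) -> bool:
        """Queue borderline items for a human; confident verdicts stay automated."""
        if self.low <= item.judge_score < self.high:
            self.pending.append(item)
            return True
        return False

    def annotate_next(self, label: str) -> EvalItem:
        """A domain expert labels the oldest pending item (FIFO order)."""
        item = self.pending.popleft()
        item.human_label = label
        return item


queue = AnnotationQueue()
items = [EvalItem("t1", 0.95), EvalItem("t2", 0.55), EvalItem("t3", 0.10), EvalItem("t4", 0.42)]
routed = [i.trace_id for i in items if queue.route(i)]
print(routed)  # ['t2', 't4']
labeled = queue.annotate_next("correct")
print(labeled.trace_id, labeled.human_label, len(queue.pending))  # t2 correct 1
```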

Conclusion

The transition from ‘experimental AI’ to ‘production AI’ requires the same level of rigor applied to traditional software engineering. By providing a unified platform for tracing and simulation, LangWatch offers the infrastructure necessary to validate agentic workflows at scale.
