The challenge of wrangling a deep learning model is often understanding why it does what it does: Whether it’s xAI’s repeated struggle sessions to fine-tune Grok’s odd politics, ChatGPT’s struggles with sycophancy, or run-of-the-mill hallucinations, plumbing through a neural network with billions of parameters isn’t easy.

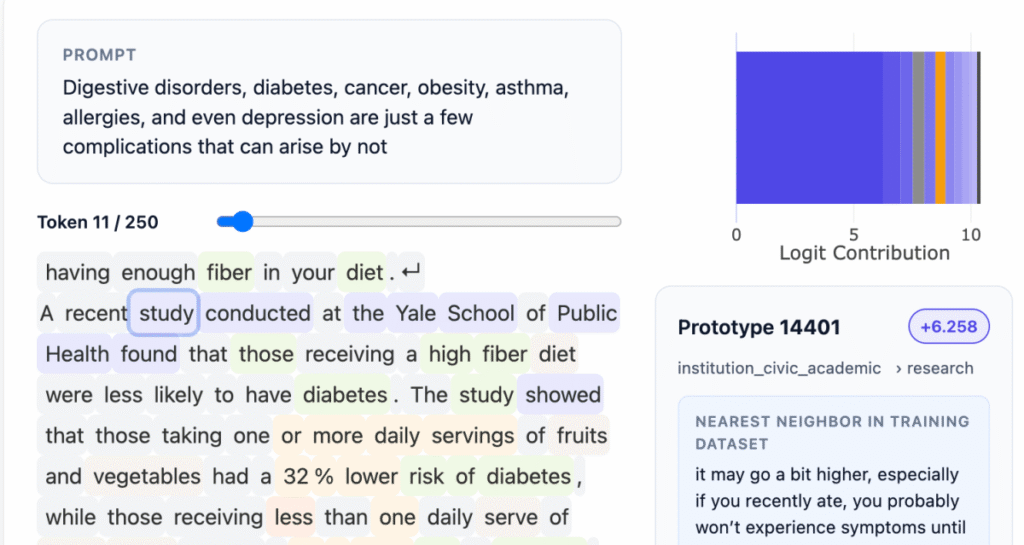

Guide Labs, a San Francisco startup founded by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, is offering an answer to that problem today. On Monday, the company open sourced an 8-billion-parameter LLM, Steerling-8B, trained with a new architecture designed to make its actions easily interpretable: Every token produced by the model can be traced back to its origins in the LLM’s training data.

That can be as simple as determining the reference materials for facts cited by the model, or as complex as understanding the model’s understanding of humor or gender.

“If I have a trillion ways to encode gender, and I encode it in 1 billion of the 1 trillion things that I have, you have to make sure you find all those 1 billion things that I’ve encoded, and then you have to be able to reliably turn that on, turn them off,” Adebayo told TechCrunch. “You can do it with current models, but it’s very fragile … It’s sort of one of the holy grail questions.”

Adebayo began this work while earning his PhD at MIT, co-authoring a widely cited 2018 paper that showed existing methods of understanding deep learning models were not reliable. That work ultimately led to the creation of a new way of building LLMs: Developers insert a concept layer in the model that buckets data into traceable categories. This requires more up-front data annotation, but by using other AI models to help, they were able to train this model as their largest proof of concept yet.

“The kind of interpretability people do is … neuroscience on a model, and we flip that,” Adebayo said. “What we do is actually engineer the model from the ground up so that you don’t need to do neuroscience.”

One concern with this approach is that it might eliminate some of the emergent behaviors that make LLMs so intriguing: Their ability to generalize in new ways about things they haven’t been trained on yet. Adebayo says that still happens in his company’s model: His team tracks what they call “discovered concepts” that the model discovered on its own, like quantum computing.

Adebayo argues this interpretable architecture will be something everyone needs. For consumer-facing LLMs, these techniques should allow model builders to do things like block the use of copyrighted materials, or better control outputs around subjects like violence or drug abuse. Regulated industries will require more controllable LLMs, for example in finance, where a model evaluating loan applicants needs to consider things like financial records but not race. There’s also a need for interpretability in scientific work, another area where Guide Labs has developed technology. Protein folding has been a big success for deep learning models, but scientists need more insight into why their software found promising combinations.

“This model demonstrates that training interpretable models is no longer a sort of science; it’s now an engineering problem,” Adebayo said. “We figured out the science and we can scale them, and there’s no reason why this kind of model wouldn’t match the performance of the frontier level models,” which have many more parameters.

Guide Labs says that Steerling-8B can achieve 90% of the capability of existing models, but uses less training data, thanks to its novel architecture. The next step for the company, which emerged from Y Combinator and raised a $9 million seed round from Initialized Capital in November 2024, is to build a larger model and begin offering API and agentic access to users.

“The way we’re currently training models is super primitive, and so democratizing inherent interpretability is actually going to be a long-term good thing for our role within the human race,” Adebayo told TechCrunch. “As we’re going after these models that are going to be super intelligent, you don’t want something to be making decisions on your behalf that’s sort of mysterious to you.”