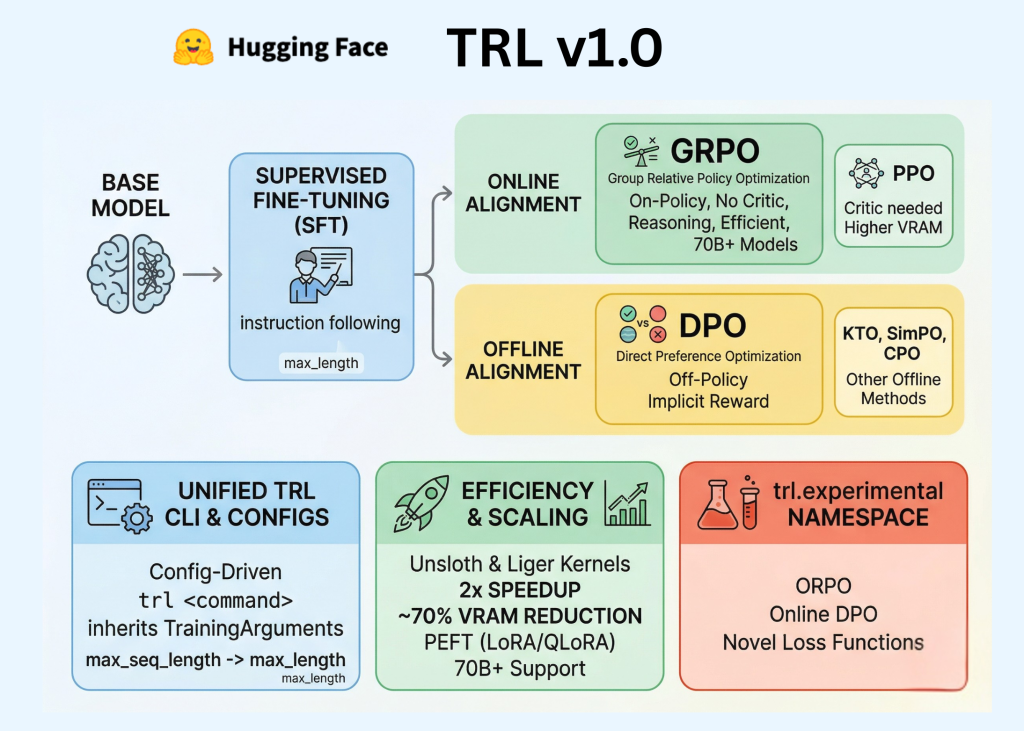

Hugging Face has officially released TRL (Transformer Reinforcement Learning) v1.0, marking a pivotal transition for the library from a research-oriented repository to a stable, production-ready framework. For AI professionals and developers, this release codifies the post-training pipeline into a unified, standardized API: the essential sequence of Supervised Fine-Tuning (SFT), Reward Modeling, and Alignment.

In the early stages of LLM development, post-training was often treated as an experimental ‘dark art.’ TRL v1.0 aims to change that by offering a consistent developer experience built on three core pillars: a dedicated Command Line Interface (CLI), a unified configuration system, and an expanded suite of alignment algorithms including DPO, GRPO, and KTO.

The Unified Post-Training Stack

Post-training is the phase in which a pre-trained base model is refined to follow instructions, adopt a specific tone, or exhibit complex reasoning capabilities. TRL v1.0 organizes this process into distinct, interoperable stages:

- Supervised Fine-Tuning (SFT): The foundational step, in which the model is trained on high-quality instruction-following data to adapt its pre-trained knowledge to a conversational format.

- Reward Modeling: The process of training a separate model to predict human preferences; this model acts as a ‘judge’ that scores different model responses.

- Alignment (Reinforcement Learning): The final refinement, in which the model is optimized to maximize preference scores. This is achieved either through “online” methods that generate text during training or through “offline” methods that learn from static preference datasets.
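The reward-modeling stage is commonly trained with a pairwise (Bradley-Terry) objective: for each prompt, the loss pushes the score of the preferred ("chosen") response above the score of the "rejected" one. Below is a minimal pure-Python sketch of that loss, assuming the reward model has already produced one scalar score per response. The helper names `log_sigmoid` and `pairwise_reward_loss` are hypothetical, and this is not TRL's own trainer code.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def pairwise_reward_loss(r_chosen: list[float], r_rejected: list[float]) -> float:
    """Mean Bradley-Terry loss, -log sigmoid(r_chosen - r_rejected), over a batch of pairs."""
    if len(r_chosen) != len(r_rejected) or not r_chosen:
        raise ValueError("need two equal-length, non-empty lists of scores")
    losses = [-log_sigmoid(c - r) for c, r in zip(r_chosen, r_rejected)]
    return sum(losses) / len(losses)


# Scores a (hypothetical) reward model assigned to two chosen/rejected pairs.
print(pairwise_reward_loss([1.5, 0.2], [0.3, 0.4]))
```

When both responses receive the same score the loss equals log 2 (about 0.693); it shrinks as the chosen response's margin over the rejected one grows, which is what lets the trained model serve as a ‘judge’.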

Standardizing the Developer Experience: The TRL CLI

One of the most significant updates for software engineers is the introduction of a robust TRL CLI. Previously, engineers had to write extensive boilerplate code and custom training loops for every experiment. TRL v1.0 introduces a config-driven approach that uses YAML files or direct command-line arguments to manage the training lifecycle.

The trl Command

The CLI provides standardized entry points for the primary training stages. For instance, an SFT run can now be launched with a single command:

```bash
trl sft --model_name_or_path meta-llama/Llama-3.1-8B --dataset_name openbmb/UltraInteract --output_dir ./sft_results
```

This interface is integrated with Hugging Face Accelerate, which allows the same command to scale across different hardware configurations. Whether running on a single local GPU or on a multi-node cluster using Fully Sharded Data Parallel (FSDP) or DeepSpeed, the CLI manages the underlying distribution logic.
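To make the config-driven idea concrete, here is a minimal sketch of how flags like those in the command above can be mapped onto a typed configuration object. It uses only the standard library (`argparse` and `dataclasses`). `SketchSFTConfig` and `parse_config` are hypothetical names, the field defaults are illustrative, and TRL's actual argument parsing (including its YAML support) is not reproduced here.

```python
import argparse
from dataclasses import MISSING, dataclass, fields


@dataclass
class SketchSFTConfig:
    # Hypothetical, tiny subset of an SFT configuration; real TRL configs have many more fields.
    model_name_or_path: str
    dataset_name: str
    output_dir: str
    learning_rate: float = 2e-5
    num_train_epochs: int = 1


def parse_config(argv: list[str]) -> SketchSFTConfig:
    """Create one --flag per dataclass field, then parse argv into a typed config."""
    parser = argparse.ArgumentParser(prog="sketch-sft")
    for f in fields(SketchSFTConfig):
        if f.default is MISSING:
            # Fields without a default become required flags.
            parser.add_argument(f"--{f.name}", type=f.type, required=True)
        else:
            parser.add_argument(f"--{f.name}", type=f.type, default=f.default)
    return SketchSFTConfig(**vars(parser.parse_args(argv)))


cfg = parse_config([
    "--model_name_or_path", "meta-llama/Llama-3.1-8B",
    "--dataset_name", "openbmb/UltraInteract",
    "--output_dir", "./sft_results",
])
print(cfg)
```

Driving every field from one declarative definition is what lets a single entry point accept the same settings from the command line or from a config file without per-experiment boilerplate.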

TRLConfig and TrainingArguments

Technical parity with the core transformers library is a cornerstone of this release. Each trainer now has a corresponding configuration class, such as SFTConfig, DPOConfig, or GRPOConfig, that inherits directly from transformers.TrainingArguments, so standard options such as learning rate, batch size, and output directory behave the same way across every trainer.
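The inheritance pattern can be sketched with plain dataclasses: a shared base holds the common training fields, and each algorithm's config only adds its own knobs. In this sketch `SketchTrainingArguments` is a hypothetical stand-in for `transformers.TrainingArguments`, the subclasses are hypothetical too, and their field names and defaults are illustrative rather than a guaranteed match for TRL's classes.

```python
from dataclasses import dataclass


@dataclass
class SketchTrainingArguments:
    # Hypothetical stand-in for transformers.TrainingArguments (a tiny subset of its fields).
    output_dir: str = "./out"
    learning_rate: float = 5e-5
    per_device_train_batch_size: int = 8
    gradient_accumulation_steps: int = 1


@dataclass
class SketchDPOConfig(SketchTrainingArguments):
    # Algorithm-specific field layered on top of the shared training fields (illustrative default).
    beta: float = 0.1


@dataclass
class SketchGRPOConfig(SketchTrainingArguments):
    # Number of completions sampled per prompt (illustrative default).
    num_generations: int = 8


def effective_batch_size(args: SketchTrainingArguments, num_devices: int = 1) -> int:
    """Works for every config above because they all inherit the shared base fields."""
    return args.per_device_train_batch_size * args.gradient_accumulation_steps * num_devices


dpo = SketchDPOConfig(learning_rate=5e-7, gradient_accumulation_steps=4)
grpo = SketchGRPOConfig(per_device_train_batch_size=4)
print(effective_batch_size(dpo, num_devices=2), effective_batch_size(grpo))
```

Because every config is also a base-arguments object, generic tooling (here the hypothetical `effective_batch_size`) works unchanged across trainers.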

Alignment Algorithms: Choosing the Right Objective

TRL v1.0 consolidates several reinforcement learning methods, categorizing them by their data requirements and computational overhead.

| Algorithm | Type | Technical Characteristic |
|---|---|---|
| PPO | Online | Requires Policy, Reference, Reward, and Value (Critic) models. Highest VRAM footprint. |
| DPO | Offline | Learns from preference pairs (chosen vs. rejected) without a separate Reward model. |
| GRPO | Online | An on-policy method that removes the Value (Critic) model by using group-relative rewards. |
| KTO | Offline | Learns from binary “thumbs up/down” signals instead of paired preferences. |
| ORPO (Exp.) | Experimental | A one-step method that merges SFT and alignment using an odds-ratio loss. |
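Two rows of the table are easy to see in miniature: DPO's loss compares how much the policy has moved away from a frozen reference model on the chosen versus the rejected response, and GRPO replaces a learned critic with a baseline computed from a group of sampled completions. The pure-Python sketch below follows the published formulations as we understand them; the function names, `beta = 0.1`, and `eps = 1e-4` are illustrative assumptions, and this is not TRL's trainer code.

```python
import math
import statistics


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for one preference pair.

    Inputs are summed log-probabilities of each full response under the trained
    policy and under the frozen reference model; no reward model is involved.
    """
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    return -log_sigmoid(beta * margin)


def group_relative_advantages(rewards: list[float], eps: float = 1e-4) -> list[float]:
    """GRPO-style advantages: score each completion against its own group of samples.

    The group mean plays the role of the baseline a learned critic would otherwise
    provide. This sketch uses the population standard deviation; implementations
    differ on that detail.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


# Four sampled completions for one prompt, rewarded 1.0 (correct) or 0.0 (wrong).
print(group_relative_advantages([1.0, 0.0, 1.0, 0.0]))
print(dpo_loss(-9.0, -13.0, -10.0, -12.0))
```

Completions that beat their group average get positive advantages and the rest get negative ones, with no Value model to train or keep in memory; if every completion in a group earns the same reward, all advantages are zero.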

Efficiency and Performance Scaling

To fit models with billions of parameters on consumer or mid-range enterprise hardware, TRL v1.0 integrates several efficiency-focused technologies:

- PEFT (Parameter-Efficient Fine-Tuning): Native support for LoRA and QLoRA enables fine-tuning by training a small number of adapter parameters while the base model’s weights stay frozen, drastically reducing memory requirements.

- Unsloth Integration: TRL v1.0 leverages specialized kernels from the Unsloth library. For SFT and DPO workflows, this integration can yield a 2x increase in training speed and up to a 70% reduction in memory usage compared with standard implementations.

- Data Packing: The SFTTrainer supports constant-length packing. This technique concatenates multiple short sequences into a single fixed-length block (e.g., 2048 tokens), so that nearly every processed token contributes to the gradient update and little computation is wasted on padding.
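Two of these memory-saving ideas can be sketched in a few lines of pure Python: concatenate-and-chunk packing, and the parameter arithmetic that makes LoRA cheap. Both functions (`pack_constant_length`, `lora_trainable_params`) are hypothetical illustrations, not TRL or PEFT code. The toy token IDs, the 4096 x 4096 matrix size, and rank 16 are assumed example values, and TRL's own packing options may differ from this simple scheme.

```python
from itertools import chain


def pack_constant_length(sequences: list[list[int]], block_size: int, eos_id: int) -> list[list[int]]:
    """Concatenate tokenized sequences (each followed by an EOS token) into one stream,
    then cut the stream into full blocks of block_size tokens.

    Leftover tokens that cannot fill a final block are dropped in this sketch.
    """
    stream = list(chain.from_iterable(seq + [eos_id] for seq in sequences))
    n_blocks = len(stream) // block_size
    return [stream[i * block_size:(i + 1) * block_size] for i in range(n_blocks)]


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA trains B (d_out x rank) and A (rank x d_in) next to a frozen d_out x d_in weight."""
    return rank * (d_out + d_in)


blocks = pack_constant_length([[1, 2, 3], [4, 5], [6, 7, 8, 9]], block_size=4, eos_id=0)
print(blocks)

full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, rank=16)
print(lora, lora / full)
```

For a 4096 x 4096 projection, rank-16 LoRA trains 131,072 parameters instead of 16,777,216, about 0.78% of that matrix. With plain concatenation, attention can also span neighboring sequences inside a block unless the implementation masks those boundaries.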

The trl.experimental Namespace

The Hugging Face team has introduced the trl.experimental namespace to separate production-stable tools from rapidly evolving research. This allows the core library to remain backward-compatible while still hosting cutting-edge developments.

Features currently on the experimental track include:

- ORPO (Odds Ratio Preference Optimization): An emerging method that attempts to skip the SFT phase by applying alignment directly to the base model.

- Online DPO Trainers: Variants of DPO that incorporate real-time generation.

- Novel Loss Functions: Experimental objectives that target specific model behaviors, such as reducing verbosity or improving mathematical reasoning.
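ORPO folds alignment into the SFT loss itself, which is how it can skip a separate SFT phase. The sketch below follows our reading of the ORPO paper's objective: the usual negative log-likelihood on the chosen response plus λ times -log σ of the log odds ratio between the chosen and rejected responses, where odds(y) = p / (1 - p). It assumes length-normalized (per-token average) log-probabilities as inputs; the function names and λ = 0.1 are illustrative assumptions, and this is not TRL's ORPO trainer code.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def log_odds(avg_logp: float) -> float:
    """log(p / (1 - p)) for p = exp(avg_logp), computed in log space."""
    if avg_logp >= 0:
        raise ValueError("average log-probability must be negative (p < 1)")
    return avg_logp - math.log1p(-math.exp(avg_logp))


def orpo_loss(avg_logp_chosen: float, avg_logp_rejected: float, lam: float = 0.1) -> float:
    """SFT negative log-likelihood on the chosen response plus a weighted odds-ratio penalty.

    Both inputs come from the single model being trained; no reference model is needed.
    """
    nll = -avg_logp_chosen
    log_odds_ratio = log_odds(avg_logp_chosen) - log_odds(avg_logp_rejected)
    return nll + lam * -log_sigmoid(log_odds_ratio)


print(orpo_loss(-0.5, -2.0), orpo_loss(-0.5, -0.5))
```

The first term is ordinary supervised fine-tuning. The second term is smallest when the model assigns much higher odds to the chosen response than to the rejected one, so a single training run teaches both the format and the preference.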

Key Takeaways

- TRL v1.0 standardizes LLM post-training with a unified CLI, config system, and trainer workflow.

- The release separates a stable core from experimental methods such as ORPO and KTO.

- GRPO reduces RL training overhead by removing the separate critic model used in PPO.

- TRL integrates PEFT, data packing, and Unsloth to improve training efficiency and memory usage.

- The library makes SFT, reward modeling, and alignment more reproducible for engineering teams.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.