**Designing an Agentic AI Architecture with LangGraph and OpenAI: A Step Towards Autonomy**

In this tutorial, we'll build an agentic AI system that can reason, act, learn, and evolve. We'll create an advanced agent using LangGraph and OpenAI models, going a step beyond simple planner-executor loops. Our goal is to demonstrate how modern agentic systems can move toward autonomy by adapting their reasoning depth, reusing prior knowledge, and encoding lessons as persistent memory.

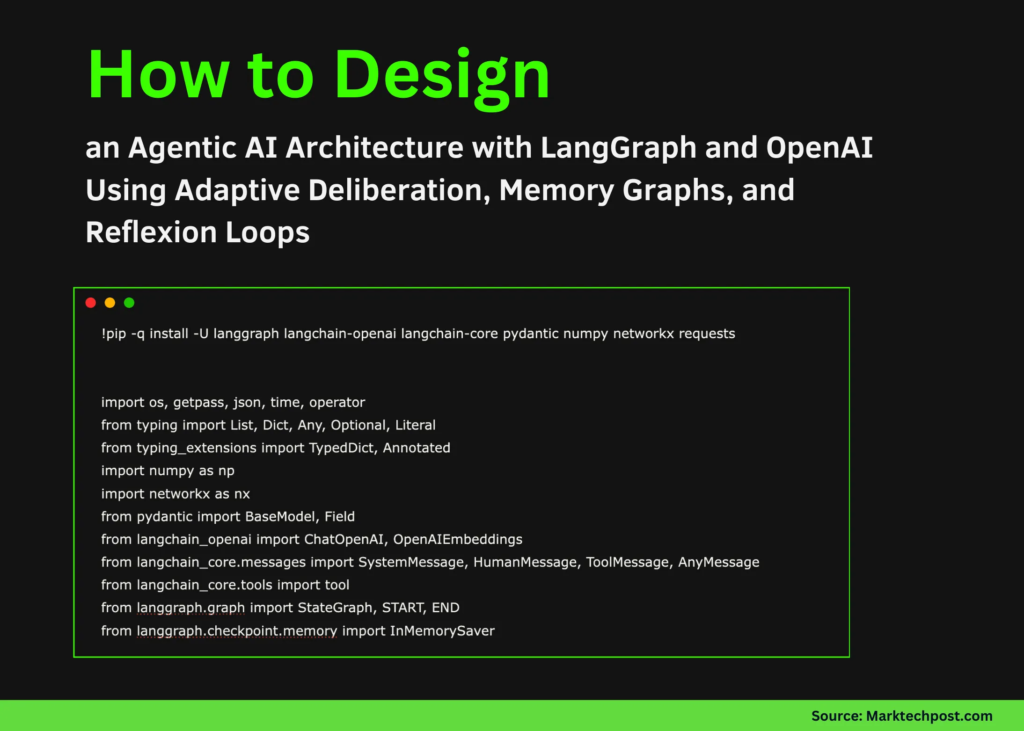

To start, we'll set up the execution environment by installing the required libraries and importing the core modules. We'll bring together LangGraph for orchestration, LangChain for model and tool abstractions, and supporting libraries for memory graphs and numerical operations.

Next, we’ll load the OpenAI API key at runtime and initialize the language models used for quick, deep, and reflective reasoning. We’ll also configure the embedding model that powers semantic similarity in memory. This separation allows us to flexibly switch between reasoning depths while maintaining a shared representation space for memory.
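As a rough illustration of this setup step, the sketch below reads the key from the environment and prompts only when it is missing, then maps each reasoning depth to a model configuration. It uses only the standard library. The helper names (`load_api_key`, `ModelConfig`, `pick_model`) and every model identifier are placeholders introduced here, not values taken from the tutorial.

```python
import os
from dataclasses import dataclass
from getpass import getpass


def load_api_key(env=os.environ, prompt=getpass):
    """Return the OpenAI key from the environment, prompting only if it is missing."""
    key = env.get("OPENAI_API_KEY")
    if not key:
        key = prompt("Enter OPENAI_API_KEY: ").strip()
        if not key:
            raise ValueError("An API key is required")
        env["OPENAI_API_KEY"] = key  # cache it for libraries that read the env var
    return key


@dataclass(frozen=True)
class ModelConfig:
    name: str           # placeholder identifier, not a real model name
    temperature: float


# Hypothetical tier table: one entry per reasoning depth named in the text.
MODEL_TIERS = {
    "quick": ModelConfig("quick-model-placeholder", 0.2),
    "deep": ModelConfig("deep-model-placeholder", 0.2),
    "reflective": ModelConfig("reflective-model-placeholder", 0.0),
}

# One embedding model shared by all tiers keeps memory in a single vector space.
EMBEDDING_MODEL = "embedding-model-placeholder"


def pick_model(mode):
    """Map a reasoning mode to its config, falling back to the quick tier."""
    return MODEL_TIERS.get(mode, MODEL_TIERS["quick"])
```

Keeping the tier table separate from the embedding model reflects the point above: the reasoning depth can change per request, while every note in memory is embedded the same way.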

We’ll create an agentic memory graph inspired by the Zettelkasten technique, where each interaction is stored as an atomic note. We’ll embed each note and link it to semantically related notes using similarity scores.
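A minimal sketch of such a memory graph might look like the following. To stay self-contained it swaps the real embedding model for a toy bag-of-words vector, and the class names, the `link_threshold` value, and the `search` method are assumptions made for illustration.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


def toy_embed(text):
    """Stand-in embedding: word counts (a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


@dataclass
class Note:
    note_id: int
    text: str
    vector: Counter
    links: dict = field(default_factory=dict)  # neighbour id -> similarity score


class MemoryGraph:
    def __init__(self, link_threshold=0.3):
        self.notes = {}
        self.link_threshold = link_threshold

    def add_note(self, text):
        """Store one atomic note and link it to every sufficiently similar note."""
        note = Note(len(self.notes), text, toy_embed(text))
        for other in self.notes.values():
            score = cosine(note.vector, other.vector)
            if score >= self.link_threshold:
                note.links[other.note_id] = score
                other.links[note.note_id] = score
        self.notes[note.note_id] = note
        return note

    def search(self, query, k=3):
        """Return up to k notes with non-zero similarity to the query, best first."""
        q = toy_embed(query)
        scored = [(cosine(q, n.vector), n) for n in self.notes.values()]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [n for score, n in scored[:k] if score > 0]
```

Links are stored on both notes, so following a note's `links` in either direction reaches its related neighbours, which is the property the Zettelkasten analogy relies on.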

We'll also define the external tools the agent can invoke, including web search and memory-based retrieval. We'll register these tools in a structured way, allowing the agent to query previous experiences or fetch new information when needed.
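One way to picture this tool layer is a small registry that maps tool names to callables. The sketch below is an assumption-laden stand-in: the `Tool` and `ToolRegistry` classes are hypothetical, the web search is a stub that performs no network call, and memory retrieval uses keyword overlap instead of embeddings.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Tool:
    name: str
    description: str
    run: Callable[[str], str]


def make_memory_tool(notes):
    """Build a retrieval tool over stored note strings (keyword overlap stands in for embeddings)."""
    def search_memory(query):
        words = set(query.lower().split())
        hits = [n for n in notes if words & set(n.lower().split())]
        return "\n".join(hits) if hits else "no relevant memory"
    return Tool("memory_search", "Look up earlier notes", search_memory)


def web_search_stub(query):
    # Placeholder: a real agent would call a search API here.
    return f"[stub] no live results for: {query}"


class ToolRegistry:
    def __init__(self, tools):
        self._tools: Dict[str, Tool] = {t.name: t for t in tools}

    def describe(self):
        """Text listing of tools, suitable for inclusion in a system prompt."""
        return "\n".join(f"{t.name}: {t.description}" for t in self._tools.values())

    def call(self, name, arg):
        """Dispatch by name; report problems as strings so the agent can react to them."""
        tool = self._tools.get(name)
        if tool is None:
            return f"error: unknown tool '{name}'"
        try:
            return tool.run(arg)
        except Exception as exc:
            return f"error: {exc}"
```

Returning errors as text rather than raising keeps a failed tool call inside the agent loop, where a later reflection step can treat it as an observation.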

**Structured Schemas and System Prompts**

We'll formalize the agent's internal representations using structured schemas for deliberation, execution targets, reflection, and global state. We'll also define system prompts that guide the agent's behavior in quick and deep modes. This keeps the agent's reasoning and decisions consistent, interpretable, and controllable.
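The schemas could be sketched along these lines. The field names, the prompt wording, and the use of standard-library dataclasses are all assumptions made for illustration; the tutorial's own schema definitions are not shown in this section.

```python
from dataclasses import dataclass
from typing import List, Literal, Optional, TypedDict


@dataclass
class Deliberation:
    mode: Literal["quick", "deep"]  # chosen reasoning depth
    plan: List[str]
    rationale: str = ""


@dataclass
class ActionStep:
    tool: Optional[str]  # None means "answer directly without a tool"
    tool_input: str = ""


@dataclass
class Reflection:
    success: bool
    lesson: str          # what to store in long-term memory
    retry: bool = False


class AgentState(TypedDict, total=False):
    """Global state passed between graph nodes; every key is optional."""
    question: str
    deliberation: Deliberation
    observations: List[str]
    answer: str
    reflection: Reflection


# Hypothetical prompts, one per mode.
SYSTEM_PROMPTS = {
    "quick": "Answer concisely. Use a tool only if the answer is not already known.",
    "deep": "Break the task into steps, consult memory first, and justify each step.",
}


def build_messages(state):
    """Select the system prompt that matches the deliberated mode."""
    mode = state["deliberation"].mode
    return [
        {"role": "system", "content": SYSTEM_PROMPTS[mode]},
        {"role": "user", "content": state["question"]},
    ]
```

Because the mode lives in a typed field rather than in free text, the rest of the graph can branch on it without parsing model output.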

**Implementing the Core Behaviors**

We'll implement the core agentic behaviors as LangGraph nodes, including deliberation, action, tool use, and reflection. We'll orchestrate how information flows between these stages and how decisions affect the execution path.
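In LangGraph, a node is a function that receives the current state and returns a partial update, and a routing function decides which node runs next. The sketch below shows that shape in plain Python, with simple heuristics standing in for the model calls. All function names and state keys are hypothetical.

```python
def deliberate(state):
    """Choose a reasoning depth; a word-count heuristic stands in for the model call."""
    question = state["question"]
    mode = "deep" if len(question.split()) > 8 else "quick"
    return {"mode": mode, "needs_tool": "latest" in question.lower()}


def use_tool(state):
    """Run a stubbed tool, record its output, and clear the flag so routing moves on."""
    obs = state.get("observations", []) + [f"stub result for '{state['question']}'"]
    return {"observations": obs, "needs_tool": False}


def act(state):
    """Draft an answer from the question and any tool observations."""
    context = "; ".join(state.get("observations", []))
    return {"answer": f"({state['mode']}) {state['question']} | context: {context or 'none'}"}


def reflect(state):
    """Critique the answer and extract a lesson to store in memory."""
    ok = bool(state.get("answer"))
    return {"lesson": f"mode={state['mode']} worked" if ok else "answer missing", "done": ok}


def route_after_deliberation(state):
    """Conditional edge: return the name of the node that should run next."""
    return "use_tool" if state.get("needs_tool") else "act"


def apply_node(state, node):
    """Merge a node's partial update into the state, as a graph runtime would."""
    return {**state, **node(state)}
```

For example, a question containing "latest" is routed to `use_tool` first, and once the observation is recorded the same router sends the state on to `act`.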

**Assembling the Workflow**

We'll assemble all nodes into a LangGraph workflow and compile it with checkpointed state management. We'll also define a reusable runner function that executes the agent while preserving memory across runs.
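In LangGraph itself this step uses `StateGraph`, `add_node`, `add_conditional_edges`, and `compile(checkpointer=...)`, with a `thread_id` in the run config selecting which checkpoint to resume. The sketch below is not that API. It is a tiny standard-library stand-in (the `MiniGraph` class and `run_agent` helper are hypothetical) meant only to show how per-thread checkpoints let state survive across runs.

```python
END = "__end__"


class MiniGraph:
    """Tiny stand-in for a compiled state graph with per-thread checkpoints."""

    def __init__(self, entry):
        self.entry = entry
        self.nodes = {}
        self.routers = {}
        self.checkpoints = {}  # thread_id -> last saved state

    def add_node(self, name, fn, router):
        self.nodes[name] = fn
        self.routers[name] = router  # router(state) -> next node name or END

    def invoke(self, update, thread_id, max_steps=20):
        # Resume from the thread's checkpoint so earlier runs stay visible.
        state = {**self.checkpoints.get(thread_id, {}), **update}
        current = self.entry
        for _ in range(max_steps):
            state = {**state, **self.nodes[current](state)}
            self.checkpoints[thread_id] = state  # save after every node
            current = self.routers[current](state)
            if current == END:
                return state
        raise RuntimeError("step limit reached; check routing for cycles")


def deliberate(state):
    return {"plan": f"answer: {state['question']}"}


def act(state):
    return {"answer": state["plan"].upper()}


def reflect(state):
    lessons = state.get("lessons", []) + [f"handled '{state['question']}'"]
    return {"lessons": lessons}


graph = MiniGraph(entry="deliberate")
graph.add_node("deliberate", deliberate, lambda s: "act")
graph.add_node("act", act, lambda s: "reflect")
graph.add_node("reflect", reflect, lambda s: END)


def run_agent(question, thread_id="demo"):
    """Reusable runner: reusing a thread_id keeps the lessons list growing."""
    return graph.invoke({"question": question}, thread_id)
```

Calling `run_agent` twice with the same `thread_id` leaves two entries in `lessons`, while a new `thread_id` starts from an empty state.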

**Conclusion**

In conclusion, we've demonstrated how an agent can continually improve its behavior through reflection and memory rather than relying on static prompts or hard-coded logic. We used LangGraph to orchestrate deliberation, execution, tool use, and reflection as a coherent graph, while OpenAI models provide the reasoning and synthesis capabilities at each stage. This approach shows how agentic AI systems can move closer to autonomy by adapting their reasoning depth, reusing prior knowledge, and encoding lessons as persistent memory.

**About the Author**

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is dedicated to harnessing the potential of Artificial Intelligence for social good.