**Building a Production-Grade Agentic AI System: A Comprehensive Guide**

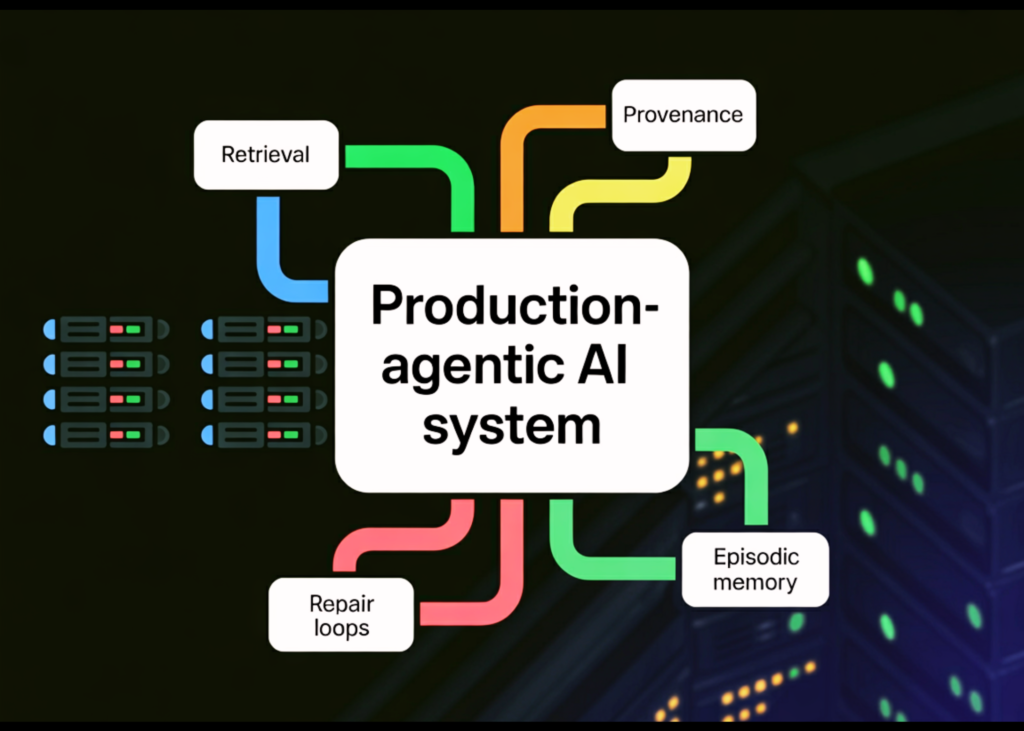

Hey AI enthusiasts! Today, we’re tackling an ambitious project: building an ultra-advanced agentic AI workflow that mirrors a production-grade research and reasoning system. We’ll combine hybrid retrieval, provenance-first citations, repair loops, and episodic memory to create a robust, scalable, and reliable system.

Before we dive in, here’s a high-level overview of what we’ll cover. We’ll set up our environment and core utility functions, then asynchronously fetch web sources, deduplicate content, and convert raw pages into structured text. Next, we’ll define core knowledge models, collect evidence, and assemble proof packs with scores and provenance. Finally, we’ll orchestrate the entire agentic loop so that every answer stays grounded and auditable.

One important note: we’ll be using some advanced AI concepts, so if you’re new to this stuff, you may want to brush up on hybrid retrieval, provenance-first citations, repair loops, and episodic memory first. But don’t worry, we’ll walk through each step together!

**Step 1: Setting up the Environment and Core Utilities**

We’ll start by setting up our environment and initializing the core utilities that everything else relies on. This includes defining functions for hashing, URL normalization, HTML cleaning, and chunking. We’ll also add some deterministic helpers to normalize and inject citations. This is crucial to ensure that our system is always compliant and auditable.
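Here is a minimal, standard-library-only sketch of what these core utilities might look like. The function names (`sha1_hex`, `normalize_url`, `clean_html`, `chunk_text`), the tracking-parameter list, and the default chunk size and overlap are illustrative assumptions, not the exact code from the full implementation. A production system would probably use a real HTML parser rather than regular expressions.

```python
import hashlib
import html
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of tracking parameters to strip; extend it for your own sources.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"fbclid", "gclid"}


def sha1_hex(text: str) -> str:
    """Stable content hash, usable as a document ID or dedup key."""
    return hashlib.sha1(text.encode("utf-8")).hexdigest()


def normalize_url(url: str) -> str:
    """Lowercase scheme/host, drop the fragment and tracking params, sort the query."""
    parts = urlsplit(url.strip())
    query = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if not k.startswith(TRACKING_PREFIXES) and k not in TRACKING_KEYS
    ]
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(sorted(query)), "")
    )


def clean_html(raw: str) -> str:
    """Rough HTML-to-text: drop script/style blocks, strip tags, unescape entities."""
    raw = re.sub(r"(?is)<(script|style)\b.*?</\1\s*>", " ", raw)
    text = re.sub(r"<[^>]+>", " ", raw)
    text = html.unescape(text)
    return re.sub(r"\s+", " ", text).strip()


def chunk_text(text: str, size: int = 800, overlap: int = 120) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap` chars."""
    if not 0 <= overlap < size:
        raise ValueError("need 0 <= overlap < size")
    chunks, start = [], 0
    while start < len(text):
        chunks.append(text[start : start + size])
        if start + size >= len(text):
            break
        start += size - overlap
    return chunks
```

Normalizing URLs before fetching means `https://Example.com/a/?utm_source=x` and `https://example.com/a` collapse to one source, and overlapping chunks make it more likely that a sentence straddling a chunk boundary appears whole in at least one chunk.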

**Step 2: Fetching Web Sources and Deduplicating Content**

Next, we’ll asynchronously fetch multiple web sources in parallel and aggressively deduplicate content to avoid redundant evidence. We’ll convert raw pages into structured text and define the core knowledge models that represent chunks and retrieval hits.
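A sketch of the fetch-and-dedupe stage, assuming a pluggable async `fetch_one(url)` coroutine. In practice you might back it with an async HTTP client such as `aiohttp` or `httpx`; here it is left abstract so the example runs without network access. The `Chunk` and `RetrievalHit` dataclasses, the semaphore cap, and the hash-based dedup key are hypothetical choices for illustration.

```python
import asyncio
import hashlib
from dataclasses import dataclass
from typing import Awaitable, Callable, Optional


@dataclass(frozen=True)
class Chunk:
    chunk_id: str  # stable ID: short hash of the source URL + chunk index
    url: str
    text: str


@dataclass
class RetrievalHit:
    chunk: Chunk
    score: float
    method: str  # e.g. "sparse", "dense" or "fused"


# Hypothetical fetcher signature: takes a URL, returns the raw page body.
Fetcher = Callable[[str], Awaitable[str]]


async def fetch_all(urls: list[str], fetch_one: Fetcher, max_concurrency: int = 8) -> dict[str, str]:
    """Fetch all URLs concurrently (at most `max_concurrency` at once); failures are skipped."""
    sem = asyncio.Semaphore(max_concurrency)

    async def guarded(url: str) -> tuple[str, Optional[str]]:
        async with sem:
            try:
                return url, await fetch_one(url)
            except Exception:  # one bad source should not sink the whole batch
                return url, None

    results = await asyncio.gather(*(guarded(u) for u in urls))
    return {url: body for url, body in results if body is not None}


def dedupe_pages(pages: dict[str, str]) -> dict[str, str]:
    """Keep only the first page for each body that is identical after whitespace/case folding."""
    seen = set()
    unique: dict[str, str] = {}
    for url, body in pages.items():
        key = hashlib.sha1(" ".join(body.split()).lower().encode("utf-8")).hexdigest()
        if key not in seen:
            seen.add(key)
            unique[url] = body
    return unique


def make_chunks(url: str, pieces: list[str]) -> list[Chunk]:
    """Wrap text pieces in Chunk records with deterministic IDs."""
    prefix = hashlib.sha1(url.encode("utf-8")).hexdigest()[:8]
    return [Chunk(chunk_id=f"{prefix}-{i}", url=url, text=p) for i, p in enumerate(pieces)]
```

This only catches exact duplicates after whitespace and case folding; more aggressive near-duplicate detection (for example shingling with MinHash) would also catch lightly edited mirrors of the same page.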

**Step 3: Collecting Evidence and Assembling Proof Packs**

We’ll collect evidence by running multiple focused queries, fusing sparse and dense results, and assembling proof packs with scores and provenance. We’ll define strict schemas for plans and final answers, then normalize and validate citations against retrieved chunk IDs.
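One common way to fuse sparse (for example BM25) and dense rankings is Reciprocal Rank Fusion (RRF), which scores each chunk by summing 1 / (k + rank) over every ranking it appears in. The sketch below assumes RRF with the conventional k = 60 and a hypothetical `Evidence` record for proof-pack entries; the full implementation may use a different fusion rule or different score fields.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Evidence:
    chunk_id: str
    url: str
    score: float  # fused RRF score (higher is better)
    snippet: str


def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Reciprocal Rank Fusion: score(d) = sum over rankings of 1 / (k + rank of d)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] += 1.0 / (k + rank)
    # Sort by score descending; break ties by ID so the output is deterministic.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


def build_proof_pack(
    per_query: dict[str, tuple[list[str], list[str]]],
    chunk_lookup: dict[str, tuple[str, str]],
    top_k: int = 5,
) -> list[Evidence]:
    """Fuse the sparse and dense rankings of every focused query into one scored pack.

    per_query maps each sub-query to (sparse_ranking, dense_ranking) of chunk IDs;
    chunk_lookup maps chunk ID -> (source URL, chunk text) for provenance.
    """
    rankings = [ranking for sparse, dense in per_query.values() for ranking in (sparse, dense)]
    pack = []
    for chunk_id, score in rrf_fuse(rankings)[:top_k]:
        url, text = chunk_lookup[chunk_id]
        pack.append(Evidence(chunk_id=chunk_id, url=url, score=score, snippet=text[:200]))
    return pack
```

Because RRF works on ranks rather than raw scores, it sidesteps the fact that BM25 scores and cosine similarities live on incomparable scales, and chunks that several focused queries agree on rise to the top of the pack.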

**Step 4: Orchestrating the Agentic Loop**

Finally, we’ll orchestrate the entire agentic loop by chaining planning, synthesis, validation, and repair in an async-safe pipeline. Outputs that fail validation are automatically retried and repaired until they pass all constraints, without human intervention.
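The loop can be sketched as a single coroutine that plans, retrieves, synthesizes, validates, and feeds validation errors back into the next synthesis attempt. The `plan`, `retrieve`, and `synthesize` callables (which would wrap LLM and retrieval calls in a real system), the `FinalAnswer` shape, and the `max_attempts` budget are all assumptions for illustration.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Awaitable, Callable


@dataclass
class FinalAnswer:
    summary: str
    citations: list[str] = field(default_factory=list)  # chunk IDs backing the summary


def validate(answer: FinalAnswer, allowed_ids: set[str]) -> list[str]:
    """Return human-readable problems; an empty list means the answer passes."""
    problems = []
    if not answer.summary.strip():
        problems.append("summary is empty")
    if not answer.citations:
        problems.append("no citations")
    unknown = [c for c in answer.citations if c not in allowed_ids]
    if unknown:
        problems.append(f"unknown citation ids: {unknown}")
    return problems


async def run_agent(
    question: str,
    plan: Callable[[str], Awaitable[list[str]]],
    retrieve: Callable[[list[str]], Awaitable[dict[str, str]]],
    synthesize: Callable[[str, dict[str, str], list[str]], Awaitable[FinalAnswer]],
    max_attempts: int = 3,
) -> FinalAnswer:
    """Plan, retrieve, then synthesize/validate/repair with a bounded retry budget."""
    sub_queries = await plan(question)
    evidence = await retrieve(sub_queries)  # chunk_id -> chunk text
    feedback: list[str] = []
    for _ in range(max_attempts):
        answer = await synthesize(question, evidence, feedback)
        # Validation errors become the repair instructions for the next attempt.
        feedback = validate(answer, set(evidence))
        if not feedback:
            return answer
    raise RuntimeError(f"no valid answer after {max_attempts} attempts: {feedback}")


if __name__ == "__main__":
    async def demo() -> None:
        async def plan(q): return [f"{q} definition", f"{q} examples"]
        async def retrieve(qs): return {"c1": "chunk one", "c2": "chunk two"}
        async def synthesize(q, ev, fb):
            # First draft cites a chunk that was never retrieved; the repair pass fixes it.
            return FinalAnswer("draft", ["c9"]) if not fb else FinalAnswer("repaired", ["c1"])
        print(await run_agent("hybrid retrieval", plan, retrieve, synthesize))
        # FinalAnswer(summary='repaired', citations=['c1'])

    asyncio.run(demo())
```

Bounding the number of attempts keeps a stubborn failure from looping forever, and raising once the budget is spent surfaces the problem instead of silently returning an ungrounded answer. Since `run_agent` keeps all of its state in local variables, several questions can be run concurrently with `asyncio.gather`, provided the injected callables are themselves safe to call concurrently.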

**What You’ll Take Away**

By the end of this tutorial, you’ll have a complete agentic pipeline that’s robust against common failure modes, including unstable embedding shapes, citation drift, and missing grounding in executive summaries. You’ll also learn how to validate outputs against allowlisted sources and retrieved chunk IDs, automatically normalize citations, and inject deterministic citations when needed to ensure compliance without sacrificing correctness.
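A minimal sketch of citation normalization and deterministic injection, assuming citations appear inline as square-bracketed chunk IDs such as `[ab12-0]` and that `ranked_ids` holds the proof pack’s chunk IDs in descending score order. The function names, the accepted `chunk:` / `source:` prefixes, and the one-citation minimum are hypothetical.

```python
import re

# Assumption: square brackets in the answer text are reserved for citations.
CITE_GROUP = re.compile(r"\[([^\[\]]+)\]")
ID_PREFIX = re.compile(r"^\s*(?:chunk|source)\s*[:#]?\s*", re.IGNORECASE)


def normalize_citations(text: str, allowed_ids: set[str]) -> tuple[str, list[str]]:
    """Rewrite '[id1, id2]' or '[chunk: id]' groups as '[id1][id2]'.

    IDs not in `allowed_ids` are dropped (this is what catches citation drift);
    a group with no allowed IDs disappears entirely. Returns the new text and
    the distinct IDs kept, in order of first appearance.
    """
    used: list[str] = []

    def rewrite(match: re.Match) -> str:
        kept = []
        for raw in re.split(r"[,;]", match.group(1)):
            cid = ID_PREFIX.sub("", raw).strip()
            if cid in allowed_ids:
                kept.append(cid)
                if cid not in used:
                    used.append(cid)
        return "".join(f"[{cid}]" for cid in kept)

    return CITE_GROUP.sub(rewrite, text), used


def ensure_cited(text: str, ranked_ids: list[str], min_citations: int = 1) -> str:
    """Normalize citations, then deterministically append top-ranked IDs if too few remain."""
    text, used = normalize_citations(text, set(ranked_ids))
    if len(used) < min_citations:
        # Same inputs always yield the same injected citations: highest-ranked, not yet used.
        extra = [cid for cid in ranked_ids if cid not in used][: min_citations - len(used)]
        if extra:
            text = text.rstrip() + " " + "".join(f"[{cid}]" for cid in extra)
    return text
```

Injection is best treated as a last resort inside the repair loop: a citation appended purely by rank is guaranteed to point at retrieved evidence, but not guaranteed to support the specific sentence it follows.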

**Get the Full Code**

Want to dive deeper into the code and explore the full implementation? You can find the complete code on our GitHub repository.

I hope you enjoyed this comprehensive guide to building a production-grade agentic AI system. Let me know if you have any questions or need further clarification on any of the steps. Happy coding!