**The Shocking Truth About Large Language Models: They’re Storing Copies of Training Data – And It’s a Game-Changer**

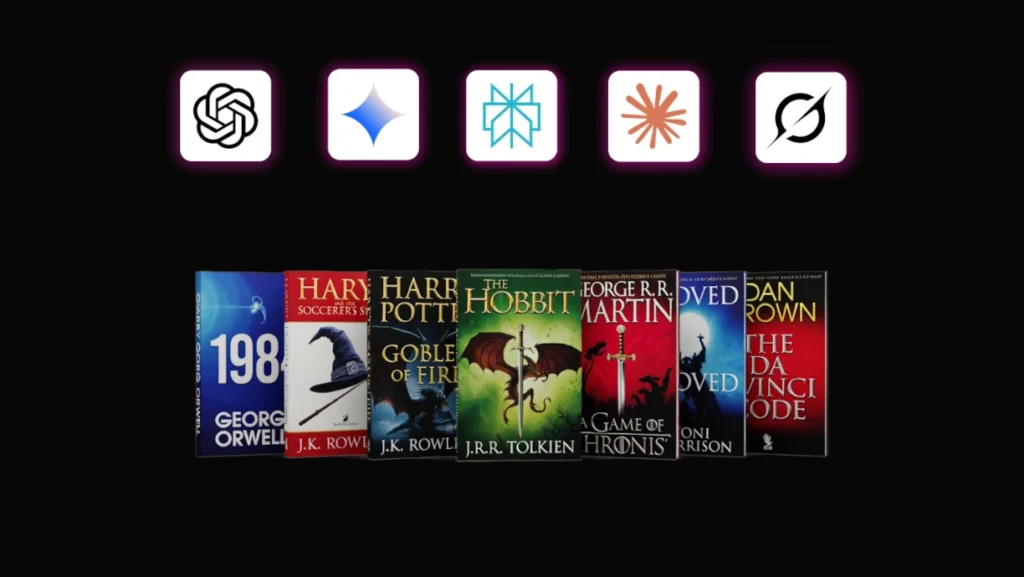

Remember when we were told that large language models like GPT, Claude, Gemini, and Grok were just “learning patterns” and “transforming” their training data? Yeah, so do I. It turns out that story left a few things out. A new study from Stanford and Yale researchers has blown the lid off one of the biggest secrets in the AI world: large language models can hold on to, and reproduce, near-verbatim copies of the data they were trained on.

For years, AI firms like OpenAI, Anthropic, and Google have been adamant that their models don’t keep copies of the training data, that they only “learn patterns” and “transform” what they see. But, it seems, that claim looks more like a clever marketing line than a technical fact.

The researchers behind the study put the language models to the test, using simple prompting techniques to get them to reproduce excerpts from copyrighted books. And, boy, did the models deliver. The researchers were able to extract extensive excerpts from well-known works, like George Orwell’s “1984” and “Harry Potter and the Sorcerer’s Stone”, with impressive accuracy. Claude 3.7 Sonnet, for example, was able to recreate “1984” with 94% accuracy and “Harry Potter” with 96% accuracy.
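If you're wondering how a number like "94% accuracy" could be measured at all, here's a minimal sketch. It is not the study's actual metric, which isn't described here. It just compares a model's output against a reference passage word by word using Python's standard `difflib`. The function names and the two sample strings are made up for illustration.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> list[str]:
    """Lowercase and split into words so case and spacing differences are ignored."""
    return text.lower().split()


def reproduction_score(reference: str, generated: str) -> float:
    """Fraction of reference words that SequenceMatcher aligns, in order, with the generated text."""
    ref_words = normalize(reference)
    gen_words = normalize(generated)
    if not ref_words:
        return 0.0
    # autojunk=False disables the heuristic that ignores very frequent items.
    matcher = SequenceMatcher(None, ref_words, gen_words, autojunk=False)
    matched = sum(block.size for block in matcher.get_matching_blocks())
    return matched / len(ref_words)


# Hypothetical strings, invented for illustration (not from any book or model).
reference = "the quick brown fox jumps over the lazy dog near the quiet river"
generated = "the quick brown fox leaps over the lazy dog near the quiet river"

print(f"{reproduction_score(reference, generated):.0%} of the reference was reproduced")
```

A real evaluation would run something like this over whole chapters and would need to decide how to treat punctuation, paraphrases, and skipped passages, so treat the score here as a rough illustration only.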

The implications of this study are staggering. It suggests that the models do, in fact, contain copies of their training data, which fundamentally challenges the AI firms’ legal arguments about “fair use”. This, in turn, raises serious questions about the ownership and control of intellectual property in the digital age.

To put it simply, this study opens the door to a whole new set of questions and, equally, risks. For those of us who’ve been following the AI world, the finding may not come as a complete surprise. It’s been clear for some time now that these models can repeat text they have seen before. But, still, this is a big deal.

The question on everyone’s mind is: what does this mean for the future of AI? Will we see a shift in the way we think about intellectual property? Will we see a new wave of AI-powered “content creation” that blurs the lines between human and machine? Only time will tell.

For now, though, the study is out, and we’ve got a new appreciation for just how clever – and, let’s be honest, how sneaky – these AI giants can be.

Source link: [insert link here]

Image credit: [insert link here]