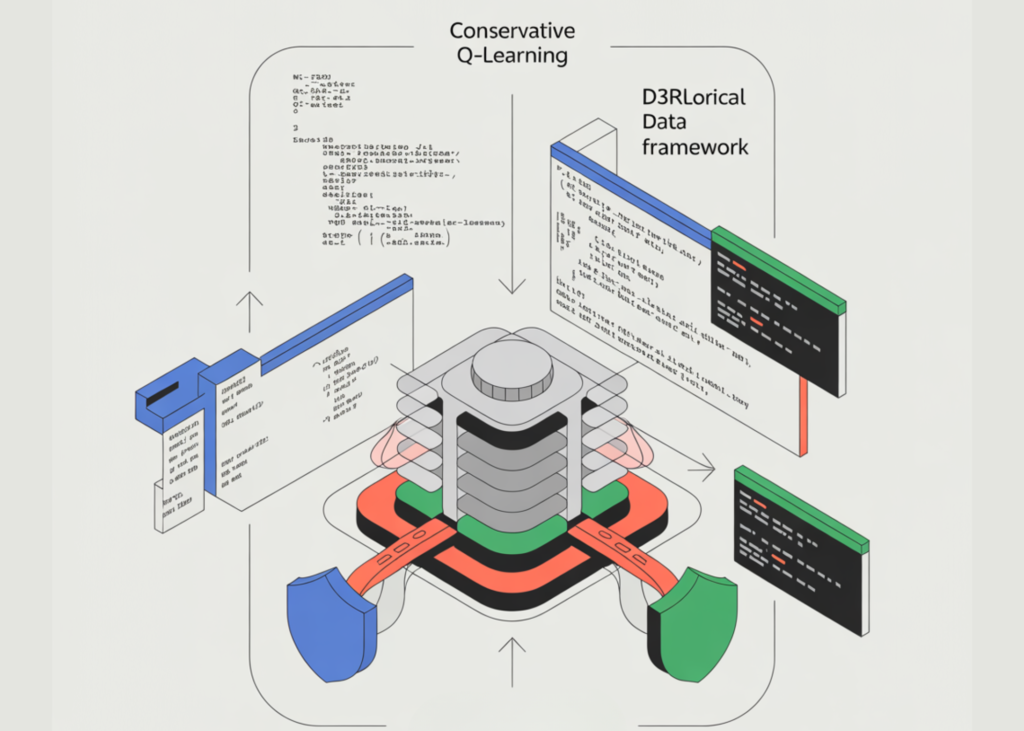

**The Power of Conservative Q-Learning: Building a Safe Reinforcement Learning Pipeline**

Hey there, fellow AI enthusiasts! Today, I’m excited to share a tutorial on building a safety-critical reinforcement learning pipeline that learns entirely from offline data rather than from risky online exploration. This matters most in industries where a single bad action during training can be costly or dangerous.

**Setting the Stage**

Before we dive in, let’s get our dependencies in order and set random seeds for reproducibility. We’ll also configure the compute device (CPU or GPU) so the code runs consistently across different systems. Finally, we’ll write a small utility that builds configuration objects safely across different versions of d3rlpy, since the accepted parameters can change between releases.
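The setup above can be sketched roughly as follows. This is a minimal sketch, not the tutorial's actual code: `set_seed`, `pick_device`, and `make_config` are hypothetical helper names, and the version-tolerant part simply assumes that dropping keyword arguments a config class does not accept is the behavior you want.

```python
import inspect
import random


def set_seed(seed: int) -> None:
    """Seed every random number generator that happens to be installed."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy is optional in this sketch
    try:
        import torch
        torch.manual_seed(seed)
    except ImportError:
        pass  # PyTorch is optional in this sketch


def pick_device() -> str:
    """Return a device string, falling back to CPU when no GPU is usable."""
    try:
        import torch
        return "cuda:0" if torch.cuda.is_available() else "cpu"
    except ImportError:
        return "cpu"


def make_config(config_cls, **kwargs):
    """Build config_cls, silently dropping keyword arguments it does not accept.

    Hypothetical helper: works with any class whose constructor signature
    can be inspected (for example a dataclass).
    """
    params = inspect.signature(config_cls).parameters
    if any(p.kind is inspect.Parameter.VAR_KEYWORD for p in params.values()):
        return config_cls(**kwargs)  # constructor takes **kwargs, pass all
    usable = {k: v for k, v in kwargs.items() if k in params}
    return config_cls(**usable)
```

Silently dropping unknown arguments keeps a notebook running across versions, but it can also hide typos, so logging the dropped keys may be worth adding in real use.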

**Introducing GridWorld: A Safety-Critical Environment**

Next up, we’ll define a challenging GridWorld environment with hazards, terminal states, and stochastic transitions. We’ll assign penalties for entering those pesky hazards and rewards for reaching the goal. The environment is a deliberately simple stand-in for real-world safety constraints, which lets us test our reinforcement learning agents in a setting where mistakes have a clear cost.
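To make the description concrete, here is one way such an environment could look. Everything specific in it is an assumption for illustration: the class name `SafetyGridWorld`, the 5x5 layout, the hazard positions, the reward values, and the 10% slip probability are not taken from the original code.

```python
import random
from dataclasses import dataclass

# Moves as (row delta, column delta): up, right, down, left.
ACTIONS = [(-1, 0), (0, 1), (1, 0), (0, -1)]


@dataclass
class SafetyGridWorld:
    size: int = 5
    hazards: frozenset = frozenset({(1, 1), (2, 3), (3, 1)})  # assumed layout
    goal: tuple = (4, 4)
    slip_prob: float = 0.1   # chance that a random action replaces the chosen one
    max_steps: int = 50
    seed: int = 0

    def __post_init__(self):
        self.rng = random.Random(self.seed)
        self.pos = (0, 0)
        self.steps = 0

    def _obs(self) -> int:
        # Encode the (row, col) position as a single integer state id.
        return self.pos[0] * self.size + self.pos[1]

    def reset(self) -> int:
        self.pos = (0, 0)
        self.steps = 0
        return self._obs()

    def step(self, action: int):
        # Stochastic transition: occasionally the agent slips.
        if self.rng.random() < self.slip_prob:
            action = self.rng.randrange(len(ACTIONS))
        dr, dc = ACTIONS[action]
        row = min(max(self.pos[0] + dr, 0), self.size - 1)  # walls clamp movement
        col = min(max(self.pos[1] + dc, 0), self.size - 1)
        self.pos = (row, col)
        self.steps += 1
        if self.pos in self.hazards:
            return self._obs(), -10.0, True, {"hazard": True, "goal": False}
        if self.pos == self.goal:
            return self._obs(), 10.0, True, {"hazard": False, "goal": True}
        # Small step cost; `done` here also covers the time limit.
        done = self.steps >= self.max_steps
        return self._obs(), -0.1, done, {"hazard": False, "goal": False}
```

Note that this sketch folds the time limit into the single `done` flag. A more careful version would report termination and truncation separately, since they should be treated differently when computing value targets.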

**Offline Episodes: The Safe Way**

Now, we’ll generate offline episodes by running a behavior policy in the GridWorld environment and recording its transitions. We’ll also write a safe evaluation routine that measures policy performance with a fixed number of controlled rollouts instead of open-ended exploration. It computes returns along with safety metrics, including the hazard rate and the goal rate. Finally, we’ll add a diagnostic that quantifies how often a learned policy’s actions deviate from the actions recorded in the dataset.
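The data collection, evaluation, and mismatch diagnostic might be sketched like this. The function names, the `(state, action, reward, next_state, done)` tuple layout, and the tiny `TinyLine` environment are all hypothetical; `TinyLine` exists only so the snippet runs on its own, and any environment with the same `reset`/`step` interface and `hazard`/`goal` info keys would do.

```python
class TinyLine:
    """Stand-in env: start at cell 1; cell 0 is a hazard, cell 3 is the goal."""

    def reset(self):
        self.pos = 1
        return self.pos

    def step(self, action):  # 0 = left, 1 = right
        self.pos += 1 if action == 1 else -1
        hazard, goal = self.pos == 0, self.pos == 3
        reward = -10.0 if hazard else 10.0 if goal else -0.1
        return self.pos, reward, hazard or goal, {"hazard": hazard, "goal": goal}


def run_episode(env, policy, max_steps=200):
    """Roll out one episode; return its transitions and the final info dict."""
    state, transitions, info = env.reset(), [], {}
    for _ in range(max_steps):
        action = policy(state)
        next_state, reward, done, info = env.step(action)
        transitions.append((state, action, reward, next_state, done))
        state = next_state
        if done:
            break
    return transitions, info


def collect_dataset(env, behavior_policy, n_episodes):
    """Flat list of transitions gathered by the behavior policy."""
    data = []
    for _ in range(n_episodes):
        transitions, _ = run_episode(env, behavior_policy)
        data.extend(transitions)
    return data


def evaluate(env, policy, n_episodes=100):
    """Mean return plus hazard and goal rates over a fixed number of rollouts."""
    total_return, hazards, goals = 0.0, 0, 0
    for _ in range(n_episodes):
        transitions, info = run_episode(env, policy)
        total_return += sum(t[2] for t in transitions)
        hazards += bool(info.get("hazard"))
        goals += bool(info.get("goal"))
    return {
        "mean_return": total_return / n_episodes,
        "hazard_rate": hazards / n_episodes,
        "goal_rate": goals / n_episodes,
    }


def action_mismatch_rate(policy, transitions):
    """Fraction of dataset states where the policy picks a different action."""
    if not transitions:
        return 0.0
    differing = sum(policy(s) != a for s, a, *_ in transitions)
    return differing / len(transitions)
```

A high mismatch rate does not prove a policy is unsafe, but it does flag that the policy is acting in ways the dataset cannot vouch for, which is exactly where offline value estimates tend to be least trustworthy.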

**Training Agents: The Conservative Approach**

We’ll train both Behavior Cloning and Conservative Q-Learning (CQL) agents purely from offline data. We’ll evaluate them with controlled rollouts and the diagnostic metrics described above. And, we’ll wrap up the workflow by saving the trained policies and summarizing the safety-aware learning outcomes.
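To show the idea behind the two learners without depending on any particular library version, here is a tabular stand-in. It is not d3rlpy's implementation: the imitation baseline (behavior cloning) is reduced to a majority vote per state, and the conservative learner is plain tabular Q-learning plus a CQL-style penalty that pushes down the log-sum-exp of Q-values in a state while pushing up the action actually seen in the data. All function names and hyperparameter values are assumptions.

```python
import math
from collections import Counter, defaultdict


def fit_behavior_cloning(transitions):
    """Imitation by majority vote: state -> most frequent dataset action."""
    counts = defaultdict(Counter)
    for s, a, *_ in transitions:
        counts[s][a] += 1
    return {s: c.most_common(1)[0][0] for s, c in counts.items()}


def bc_policy(table, default_action=0):
    """Policy that falls back to a default action in states never seen in the data."""
    return lambda s: table.get(s, default_action)


def fit_tabular_cql(transitions, n_states, n_actions,
                    alpha=1.0, gamma=0.99, lr=0.1, epochs=200):
    """Tabular Q-learning with a CQL-style conservative penalty.

    Per-transition loss (assumed form for this sketch):
        alpha * (logsumexp_b Q[s][b] - Q[s][a]) + 0.5 * (Q[s][a] - target)^2
    `done` is treated as termination, so no bootstrapping happens past it.
    """
    q = [[0.0] * n_actions for _ in range(n_states)]
    for _ in range(epochs):
        for s, a, r, s_next, done in transitions:
            target = r if done else r + gamma * max(q[s_next])
            # Gradient of the penalty: softmax(Q[s]) minus one-hot(dataset action).
            peak = max(q[s])
            exps = [math.exp(v - peak) for v in q[s]]
            total = sum(exps)
            for b in range(n_actions):
                one_hot = 1.0 if b == a else 0.0
                q[s][b] -= lr * alpha * (exps[b] / total - one_hot)
            # Ordinary TD step on the action that appears in the dataset.
            q[s][a] -= lr * (q[s][a] - target)
    return q


def greedy_policy(q):
    """Pick the action with the highest Q-value in each state."""
    return lambda s: max(range(len(q[s])), key=q[s].__getitem__)
```

The useful property to notice is that an action which never appears in the dataset only ever receives the downward push from the penalty, so its Q-value drifts negative and the greedy policy avoids it. That is the conservatism the rest of the post relies on, shown here in its simplest form.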

**The Bottom Line**

In the end, we showed that Conservative Q-Learning yields a more reliable policy than simple imitation when learning from historical data in safety-sensitive environments. By comparing offline training outcomes, controlled online evaluations, and action-distribution mismatches, we illustrated how conservatism helps reduce risky, out-of-distribution behavior. So, if you’re ready to build a safety-critical reinforcement learning pipeline, feel free to follow along!
