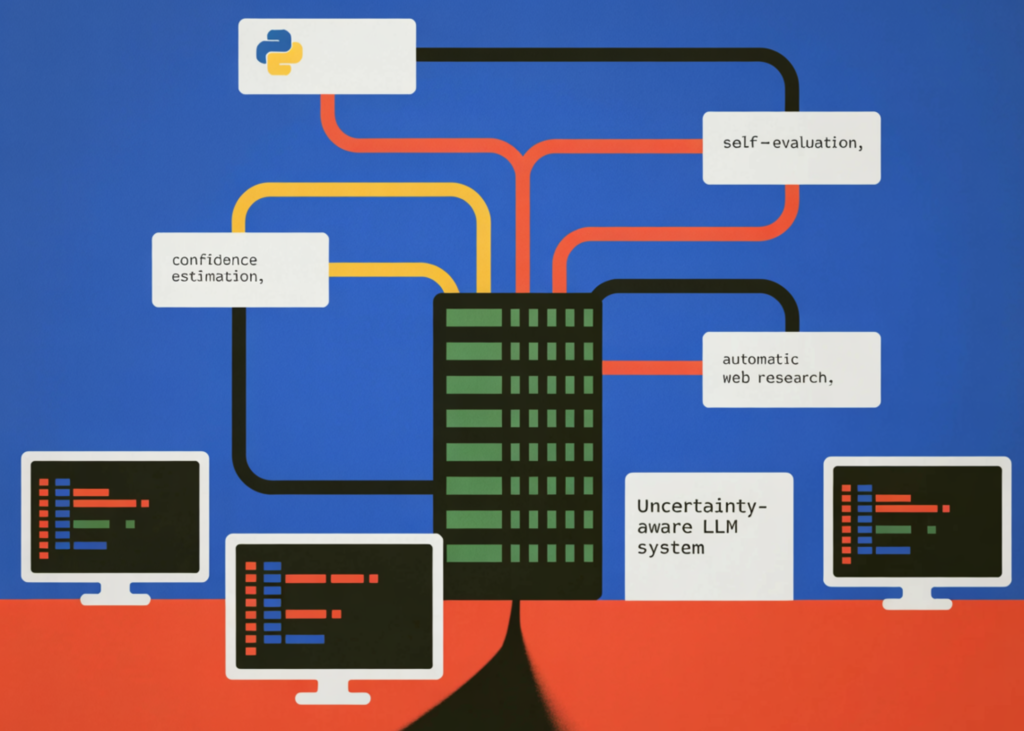

In this tutorial, we build an uncertainty-aware large language model system that not only generates answers but also estimates its confidence in those answers. We implement a three-stage reasoning pipeline in which the model first produces an answer along with a self-reported confidence score and a justification. We then introduce a self-evaluation step that allows the model to critique and refine its own response, simulating a meta-cognitive check. If the model determines that its confidence is low, we automatically trigger a web research phase that retrieves relevant information from live sources and synthesizes a more reliable answer. By combining confidence estimation, self-reflection, and automated research, we create a practical framework for building more trustworthy and transparent AI systems that can recognize uncertainty and actively seek better information.

```python
import os, json, re, textwrap, getpass, sys, warnings
from dataclasses import dataclass, field
from typing import Optional

from openai import OpenAI
from ddgs import DDGS
from rich.console import Console
from rich.table import Table
from rich.panel import Panel
from rich import box

warnings.filterwarnings("ignore", category=DeprecationWarning)


def _get_api_key() -> str:
    # 1) Environment variable
    key = os.environ.get("OPENAI_API_KEY", "").strip()
    if key:
        return key
    # 2) Colab secrets (skipped silently outside Colab)
    try:
        from google.colab import userdata
        key = userdata.get("OPENAI_API_KEY") or ""
        if key.strip():
            return key.strip()
    except Exception:
        pass
    # 3) Hidden terminal prompt
    console = Console()
    console.print(
        "\n[bold cyan]OpenAI API Key required[/bold cyan]\n"
        "[dim]Your key will not be echoed and is not saved to disk.\n"
        "To skip this prompt in future runs, set the environment variable:\n"
        "  export OPENAI_API_KEY=sk-...[/dim]\n"
    )
    key = getpass.getpass("  Enter your OpenAI API key: ").strip()
    if not key:
        Console().print("[bold red]No API key provided — exiting.[/bold red]")
        sys.exit(1)
    return key


OPENAI_API_KEY = _get_api_key()
MODEL = "gpt-4o-mini"
CONFIDENCE_LOW = 0.55
CONFIDENCE_MED = 0.80

client = OpenAI(api_key=OPENAI_API_KEY)
console = Console()


@dataclass
class LLMResponse:
    question: str
    answer: str
    confidence: float
    reasoning: str
    sources: list[str] = field(default_factory=list)
    researched: bool = False
    raw_json: dict = field(default_factory=dict)
```

We import all required libraries and configure the runtime environment for the uncertainty-aware LLM pipeline. We securely retrieve the OpenAI API key using environment variables, Colab secrets, or a hidden terminal prompt. We also define the LLMResponse data structure that stores the question, answer, confidence score, reasoning, and research metadata used throughout the system.
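As a small illustration of why `sources` and `raw_json` are declared with `field(default_factory=...)`, the sketch below repeats the dataclass on its own (so it runs without the OpenAI client) and shows that each instance gets its own list and dict. The sample values and the URL are made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class LLMResponse:
    # Copy of the tutorial's dataclass, repeated so this snippet runs standalone.
    question: str
    answer: str
    confidence: float
    reasoning: str
    sources: list[str] = field(default_factory=list)
    researched: bool = False
    raw_json: dict = field(default_factory=dict)


first = LLMResponse("Q1", "A1", 0.9, "well known")
second = LLMResponse("Q2", "A2", 0.4, "unsure")

# Mutating one response's sources must not leak into the other.
first.sources.append("https://example.com/a")  # hypothetical URL
print(first.sources)
print(second.sources)
```

A plain mutable default such as `sources: list[str] = []` is rejected by `dataclass` at class-definition time, which is why the factory form is used.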

```python
SYSTEM_UNCERTAINTY = """
You are an expert AI assistant that is HONEST about what it knows and doesn't know.
For every question you MUST respond with valid JSON only (no markdown, no prose outside JSON):

{
  "answer": "<your answer>",
  "confidence": <float between 0.0 and 1.0>,
  "reasoning": "<brief explanation of your confidence>"
}

Confidence scale:
  0.90-1.00 → very high: well-established fact, you are certain
  0.75-0.89 → high: strong knowledge, minor uncertainty
  0.55-0.74 → medium: plausible but you may be wrong, could be outdated
  0.30-0.54 → low: significant uncertainty, answer is a best guess
  0.00-0.29 → very low: mostly guessing, minimal reliable knowledge

Be CALIBRATED — do not always give high confidence. Genuinely reflect uncertainty
about recent events (after your knowledge cutoff), niche topics, numerical claims,
and anything that changes over time.
""".strip()

SYSTEM_SYNTHESIS = """
You are a research synthesizer. Given a question, a preliminary answer,
and web-search snippets, produce an improved final answer grounded in the evidence.
Respond in JSON only:

{
  "answer": "<improved answer>",
  "confidence": <float between 0.0 and 1.0>,
  "reasoning": "<how the evidence supports the answer>"
}
""".strip()


def query_llm_with_confidence(question: str) -> LLMResponse:
    # Stage 1: ask for an answer plus self-reported confidence, as a JSON object.
    completion = client.chat.completions.create(
        model=MODEL,
        temperature=0.2,
        response_format={"type": "json_object"},
        messages=[
            {"role": "system", "content": SYSTEM_UNCERTAINTY},
            {"role": "user", "content": question},
        ],
    )
    raw = json.loads(completion.choices[0].message.content)
    return LLMResponse(
        question=question,
        answer=raw.get("answer", ""),
        confidence=float(raw.get("confidence", 0.5)),
        reasoning=raw.get("reasoning", ""),
        raw_json=raw,
    )
```

We define the system prompts that instruct the model to report answers along with calibrated confidence and reasoning. We then implement the query_llm_with_confidence function that performs the first stage of the pipeline. This stage generates the model's answer while forcing the output to be structured JSON containing the answer, confidence score, and explanation.
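The first stage converts the model's `confidence` field with a bare `float(...)`, which raises if the model returns something non-numeric such as "high" and lets out-of-range numbers through. A minimal sketch of a more defensive parser follows. `parse_confidence` is a hypothetical helper, not part of the tutorial's code, and clamping rather than rejecting out-of-range values is an assumption.

```python
import json
import math


def parse_confidence(raw: dict, default: float = 0.5) -> float:
    """Return raw["confidence"] as a float clamped to [0.0, 1.0].

    Falls back to `default` when the value is missing, non-numeric, or NaN.
    """
    value = raw.get("confidence", default)
    try:
        number = float(value)
    except (TypeError, ValueError):
        return default
    if math.isnan(number):
        return default
    return max(0.0, min(1.0, number))


# Simulated model outputs (no API call is made here).
good = json.loads('{"answer": "299,792,458 m/s", "confidence": 0.97, "reasoning": "defined constant"}')
wordy = json.loads('{"answer": "?", "confidence": "high", "reasoning": ""}')
too_big = json.loads('{"answer": "?", "confidence": 1.4, "reasoning": ""}')

print(parse_confidence(good))
print(parse_confidence(wordy))
print(parse_confidence(too_big))
```

Under these assumptions the pipeline would call `parse_confidence(raw)` wherever it currently calls `float(raw.get("confidence", ...))`, so a malformed score degrades to the default instead of aborting the run.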

```python
def self_evaluate(response: LLMResponse) -> LLMResponse:
    # Stage 2: ask the model to critique its own answer and stated confidence.
    critique_prompt = f"""
Review this answer and its stated confidence. Check for:
1. Logical consistency
2. Whether the confidence matches the actual quality of the answer
3. Any factual errors you can spot

Question: {response.question}
Proposed answer: {response.answer}
Stated confidence: {response.confidence}
Stated reasoning: {response.reasoning}

Respond in JSON:
{{
  "revised_confidence": <float between 0.0 and 1.0>,
  "critique": "<brief critique>",
  "revised_answer": "<revised answer, or the original if no change is needed>"
}}
""".strip()
    completion = client.chat.completions.create(
        model=MODEL,
        temperature=0.1,
        response_format={"type": "json_object"},
        messages=[
            {"role": "system", "content": "You are a rigorous self-critic. Respond in JSON only."},
            {"role": "user", "content": critique_prompt},
        ],
    )
    ev = json.loads(completion.choices[0].message.content)
    response.confidence = float(ev.get("revised_confidence", response.confidence))
    response.answer = ev.get("revised_answer", response.answer)
    response.reasoning += f"\n\n[Self-Eval Critique]: {ev.get('critique', '')}"
    return response


def web_search(query: str, max_results: int = 5) -> list[dict]:
    results = DDGS().text(query, max_results=max_results)
    return list(results) if results else []


def research_and_synthesize(response: LLMResponse) -> LLMResponse:
    # Stage 3: fetch web snippets and ask the model to ground its answer in them.
    console.print(f"  [yellow]🔍 Confidence {response.confidence:.0%} is low — triggering auto-research...[/yellow]")
    snippets = web_search(response.question)
    if not snippets:
        console.print("  [red]No search results found.[/red]")
        return response
    formatted = "\n\n".join(
        f"[{i+1}] {s.get('title','')}\n{s.get('body','')}\nURL: {s.get('href','')}"
        for i, s in enumerate(snippets)
    )
    synthesis_prompt = f"""
Question: {response.question}
Preliminary answer (low confidence): {response.answer}

Web search snippets:
{formatted}

Synthesize an improved answer using the evidence above.
""".strip()
    completion = client.chat.completions.create(
        model=MODEL,
        temperature=0.2,
        response_format={"type": "json_object"},
        messages=[
            {"role": "system", "content": SYSTEM_SYNTHESIS},
            {"role": "user", "content": synthesis_prompt},
        ],
    )
    syn = json.loads(completion.choices[0].message.content)
    response.answer = syn.get("answer", response.answer)
    response.confidence = float(syn.get("confidence", response.confidence))
    response.reasoning += f"\n\n[Post-Research]: {syn.get('reasoning', '')}"
    response.sources = [s.get("href", "") for s in snippets if s.get("href")]
    response.researched = True
    return response
```

We implement a self-evaluation stage in which the model critiques its own answer and revises its confidence as needed. We also introduce the web search capability that retrieves live information using DuckDuckGo. If the model's confidence is low, we synthesize the search results with the preliminary answer to produce an improved response grounded in external evidence.
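To make the evidence block concrete, the sketch below builds the same numbered snippet text that `research_and_synthesize` passes to the model, using hand-written results in place of a live search. The dict keys `title`, `body`, and `href` are the ones the tutorial code reads. The function name `format_snippets`, the sample entries, and the `max_chars` truncation are assumptions added for illustration.

```python
def format_snippets(snippets: list[dict], max_chars: int = 300) -> str:
    """Render search results as a numbered evidence block for the synthesis prompt."""
    blocks = []
    for i, s in enumerate(snippets, start=1):
        body = s.get("body", "")
        if len(body) > max_chars:
            # Trim long snippets so the prompt stays small (an added assumption).
            body = body[:max_chars].rstrip() + "..."
        blocks.append(f"[{i}] {s.get('title', '')}\n{body}\nURL: {s.get('href', '')}")
    return "\n\n".join(blocks)


# Hand-written stand-ins for search results; no network call is made.
fake_results = [
    {"title": "Example A", "body": "First snippet text.", "href": "https://example.com/a"},
    {"title": "Example B", "body": "x" * 400, "href": ""},
]

print(format_snippets(fake_results))

# Same filter the tutorial uses to collect source URLs: skip empty hrefs.
sources = [s.get("href", "") for s in fake_results if s.get("href")]
print(sources)
```

Numbering the snippets gives the model a stable way to refer to individual pieces of evidence in its reasoning, and filtering empty `href` values keeps the final source list free of blank entries.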

We assemble the main reasoning pipeline that orchestrates answer generation, self-evaluation, and optional research. We compute visual confidence indicators and implement helper functions to label confidence levels. We also build a formatted display system that presents the final answer, reasoning, confidence meter, and sources in a clean console interface.
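The functions this paragraph describes (`uncertainty_aware_query`, `confidence_label`, `display_response`) are called by the later code but are not defined in the listings shown here. The following is only a sketch of how the orchestration and labeling could look, not the author's implementation. It assumes the thresholds `CONFIDENCE_LOW = 0.55` and `CONFIDENCE_MED = 0.80` from the setup code, assumes `confidence_label` returns an `(emoji, label)` pair because that is how the summary table unpacks it, and takes the three stage functions as parameters so it can run with stubs instead of the OpenAI API. The emoji, label text, `confidence_bar`, and the stub functions are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

CONFIDENCE_LOW = 0.55  # below this, research is triggered (value from the setup code)
CONFIDENCE_MED = 0.80  # at or above this, confidence is labeled high (assumed usage)


@dataclass
class LLMResponse:
    question: str
    answer: str
    confidence: float
    reasoning: str
    sources: list[str] = field(default_factory=list)
    researched: bool = False


def confidence_label(confidence: float) -> tuple[str, str]:
    """Map a score to an (emoji, label) pair; the wording is an assumption."""
    if confidence >= CONFIDENCE_MED:
        return "🟢", "High"
    if confidence >= CONFIDENCE_LOW:
        return "🟡", "Medium"
    return "🔴", "Low"


def confidence_bar(confidence: float, width: int = 20) -> str:
    """Text meter of filled and empty blocks proportional to the score."""
    filled = round(max(0.0, min(1.0, confidence)) * width)
    return "█" * filled + "░" * (width - filled)


Stage = Callable[[LLMResponse], LLMResponse]


def uncertainty_aware_query(
    question: str,
    answer_fn: Callable[[str], LLMResponse],
    evaluate_fn: Stage,
    research_fn: Stage,
) -> LLMResponse:
    """Stage 1: answer. Stage 2: self-evaluate. Stage 3: research only if confidence is low."""
    response = evaluate_fn(answer_fn(question))
    if response.confidence < CONFIDENCE_LOW:
        response = research_fn(response)
    return response


# Stubs standing in for the API-backed stages.
def stub_answer(q: str) -> LLMResponse:
    return LLMResponse(q, "draft answer", 0.70, "initial guess")


def stub_evaluate(r: LLMResponse) -> LLMResponse:
    r.confidence = 0.40  # the critique lowers confidence
    return r


def stub_research(r: LLMResponse) -> LLMResponse:
    r.answer, r.confidence, r.researched = "evidence-backed answer", 0.85, True
    return r


result = uncertainty_aware_query("demo question", stub_answer, stub_evaluate, stub_research)
emoji, label = confidence_label(result.confidence)
print(f"{emoji} {label} {confidence_bar(result.confidence)} researched={result.researched}")
```

In the tutorial's setting the three stage parameters would be bound to `query_llm_with_confidence`, `self_evaluate`, and `research_and_synthesize` (for example with `functools.partial`) to recover the one-argument call `uncertainty_aware_query(q)` used by the batch and interactive code.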

```python
DEMO_QUESTIONS = [
    "What is the speed of light in a vacuum?",
    "What were the main causes of the 2008 global financial crisis?",
    "What is the latest version of Python released in 2025?",
    "What is the current population of Tokyo as of 2025?",
]


def run_comparison_table(questions: list[str]) -> None:
    console.rule("[bold cyan]UNCERTAINTY-AWARE LLM — BATCH RUN[/bold cyan]")
    results = []
    for i, q in enumerate(questions, 1):
        console.print(f"\n[bold]Question {i}/{len(questions)}:[/bold] {q}")
        r = uncertainty_aware_query(q)
        display_response(r)
        results.append(r)

    console.rule("[bold cyan]SUMMARY TABLE[/bold cyan]")
    tbl = Table(box=box.ROUNDED, show_lines=True, highlight=True)
    tbl.add_column("#", style="dim", width=3)
    tbl.add_column("Question", max_width=40)
    tbl.add_column("Confidence", justify="center", width=12)
    tbl.add_column("Level", justify="center", width=10)
    tbl.add_column("Researched", justify="center", width=10)
    for i, r in enumerate(results, 1):
        emoji, label = confidence_label(r.confidence)
        # Color band for the confidence cell
        col = "green" if r.confidence >= 0.75 else "yellow" if r.confidence >= 0.55 else "red"
        tbl.add_row(
            str(i),
            textwrap.shorten(r.question, 55),
            f"[{col}]{r.confidence:.0%}[/{col}]",
            f"{emoji} {label}",
            "✅ Yes" if r.researched else "—",
        )
    console.print(tbl)


def interactive_mode() -> None:
    console.rule("[bold cyan]INTERACTIVE MODE[/bold cyan]")
    console.print("  Type any question. Type [bold]quit[/bold] to exit.\n")
    while True:
        q = console.input("[bold cyan]You ▶[/bold cyan] ").strip()
        if q.lower() in ("quit", "exit", "q"):
            console.print("Goodbye!")
            break
        if not q:
            continue
        resp = uncertainty_aware_query(q)
        display_response(resp)


if __name__ == "__main__":
    console.print(Panel(
        "[bold white]Uncertainty-Aware LLM Tutorial[/bold white]\n"
        "[dim]Confidence Estimation · Self-Evaluation · Auto-Research[/dim]",
        border_style="cyan",
        expand=False,
    ))
    run_comparison_table(DEMO_QUESTIONS)
    console.print("\n")
    interactive_mode()
```

We define demonstration questions and implement a batch pipeline that evaluates the uncertainty-aware system across multiple queries. We generate a summary table that compares confidence levels and whether research was triggered. Finally, we implement an interactive mode that continuously accepts user questions and runs the full uncertainty-aware reasoning workflow.
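The row-building logic in `run_comparison_table` can be isolated as pure functions, which makes the color bands easy to check without rendering a table or calling the API. In this sketch `confidence_color` and `summary_row` are hypothetical names. The 0.75 and 0.55 cut-offs, the 55-character shortening, and the Rich markup format mirror the tutorial's table code, and the label column is left out to keep the example standalone.

```python
import textwrap


def confidence_color(confidence: float) -> str:
    """Color band used for the Confidence column."""
    if confidence >= 0.75:
        return "green"
    if confidence >= 0.55:
        return "yellow"
    return "red"


def summary_row(index: int, question: str, confidence: float, researched: bool) -> tuple[str, str, str, str]:
    """Build the text cells for one summary row (the label column is omitted here)."""
    col = confidence_color(confidence)
    return (
        str(index),
        textwrap.shorten(question, 55),      # collapse whitespace, cut to 55 chars
        f"[{col}]{confidence:.0%}[/{col}]",  # Rich markup, e.g. [green]97%[/green]
        "Yes" if researched else "-",
    )


# Made-up scores for two of the demo questions.
rows = [
    summary_row(1, "What is the speed of light in a vacuum?", 0.97, False),
    summary_row(2, "What is the current population of Tokyo as of 2025?", 0.45, True),
]
for row in rows:
    print(row)
```

Note that these color cut-offs (0.75 and 0.55) are separate from the `CONFIDENCE_MED = 0.80` constant defined in the setup code, so a score of 0.77 is shown in green in the table.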

In conclusion, we designed and implemented a complete uncertainty-aware reasoning pipeline for large language models using Python and the OpenAI API. We demonstrated how models can verbalize confidence, perform internal self-evaluation, and automatically conduct research when uncertainty is detected. This approach improves reliability by enabling the system to acknowledge knowledge gaps and augment its answers with external evidence when needed. By integrating these components into a unified workflow, we showed how developers can build AI systems that are intelligent, calibrated, transparent, and adaptive, making them far more suitable for real-world decision-support applications.


Jean-marc is a successful AI business executive. He leads and accelerates growth for AI-powered solutions and started a computer vision company in 2006. He is a recognized speaker at AI conferences and has an MBA from Stanford.