**Building a Unified Apache Beam Pipeline for Batch and Stream Processing**

Hey there, data enthusiasts! Today, we’re going to build a unified Apache Beam pipeline that runs in both batch and stream-like modes on the DirectRunner. We’ll generate synthetic, event-time-aware data and apply fixed windowing with triggers and allowed lateness to see how Beam handles both on-time and late events.

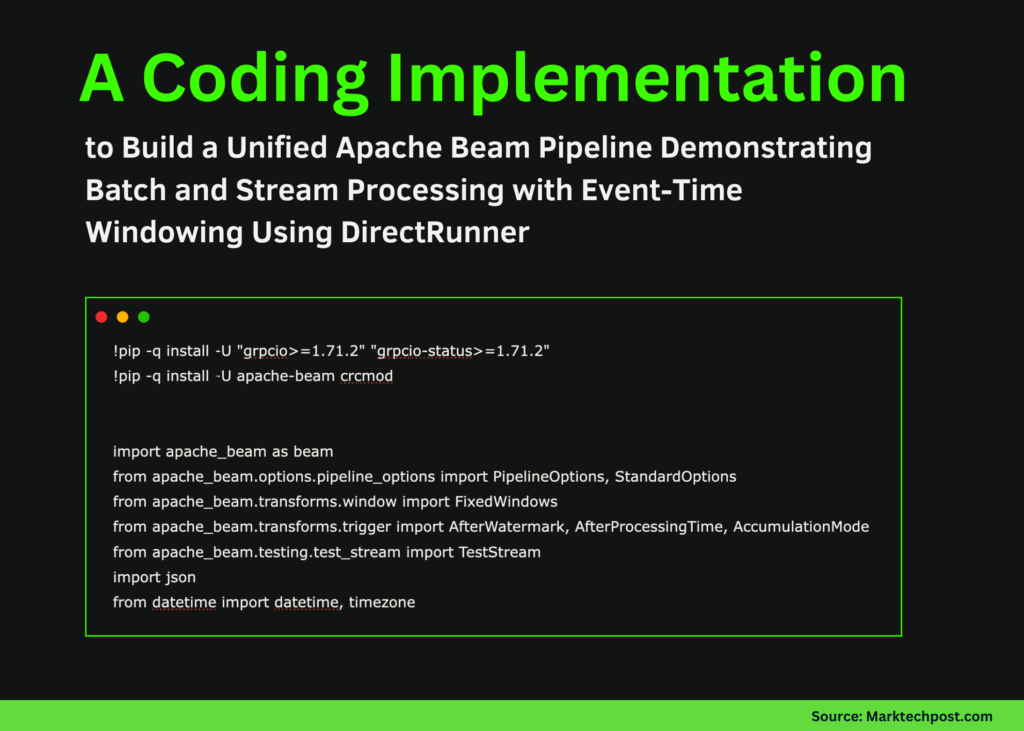

To start, we’ll set up the necessary dependencies and make sure Apache Beam is working correctly. We’ll import the core Beam APIs, including windowing, triggers, and TestStream utilities that we’ll use later in the pipeline. We’ll also bring in some standard Python modules for time handling and JSON formatting.

Next up, we’ll create a small dataset that contains out-of-order and late events to test Beam’s event-time semantics. We’ll define the global configuration that controls window size, allowed lateness, and execution mode. Then, we’ll apply fixed windows, triggers, and an accumulation mode, and group events by user to compute counts and sums.

To make our results more readable, we’ll use the `AddWindowInfo` DoFn to attach window and pane metadata (window timestamps, pane timing, and pane status) to each result. We’ll also convert Beam’s internal timestamps to human-readable UTC times.

We’ll also define a TestStream that simulates real streaming behavior using watermarks, processing-time advances, and late events. And the best part? We can toggle between batch and stream modes by changing a single flag, while reusing the same aggregation transform.

Finally, we’ll wire all the pieces together into executable batch and stream-like pipelines. We’ll run the pipeline and print the windowed results directly, making it easy to examine the execution flow and outputs.

**The Takeaway**

We’ve shown that the same Beam pipeline can process both bounded batch data and unbounded, stream-like data while preserving similar windowing and aggregation semantics. We saw how watermarks, triggers, and accumulation modes affect when results are emitted, and how late events update previously computed windows. Plus, we laid the groundwork for scaling the same design to real streaming runners and production environments.

**Get the Full Code**

Ready to dive in? Check out the full code and examples [here](link to codes).