**Decentralized Federated Learning: The Future of AI – Without the Privacy Risks**

Hey fellow data enthusiasts! Today, I’m excited to dive into the world of decentralized federated learning, a relatively new and powerful approach to training AI models. We’ll explore why it’s becoming increasingly important, and how it’s changing the way we approach data privacy.

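To start, let’s face it: data breaches and hacks are on the rise, so we need a more privacy-focused approach to training our models. Standard federated learning already keeps raw data on each device and shares only model updates, but it still relies on a central server that sees every participant’s updates and is a single point of failure and attack. Decentralized federated learning ditches that server. Instead, it uses a peer-to-peer model in which each node communicates directly with its neighbors. This removes the central target and spreads communication across peers, but it doesn’t make the data secure on its own: the shared updates can still leak information about local data, which is why we pair it with differential privacy.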
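To make the "each node talks only to its neighbors" idea concrete, here’s a minimal sketch of one possible peer-to-peer layout: a ring, where every node links to `k` peers on each side. The post doesn’t say which topology the experiments used, so the ring and the helper name `ring_topology` are assumptions for illustration.

```python
def ring_topology(num_nodes: int, k: int = 1) -> dict[int, list[int]]:
    """Neighbor lists for a ring in which each node links to k peers on each side.

    Illustrative assumption: the post does not specify its topology.
    """
    if num_nodes < 2 * k + 1:
        raise ValueError("need at least 2k + 1 nodes so that all neighbors are distinct")
    neighbors: dict[int, list[int]] = {}
    for i in range(num_nodes):
        # Wrap around with modulo so the last node connects back to the first.
        left = [(i - d) % num_nodes for d in range(1, k + 1)]
        right = [(i + d) % num_nodes for d in range(1, k + 1)]
        neighbors[i] = sorted(left + right)
    return neighbors


if __name__ == "__main__":
    topology = ring_topology(6)
    print(topology[0])  # [1, 5]: node 0 talks only to nodes 1 and 5, never to a server
```

A denser graph (larger `k`) generally mixes information faster, at the cost of more messages per node.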

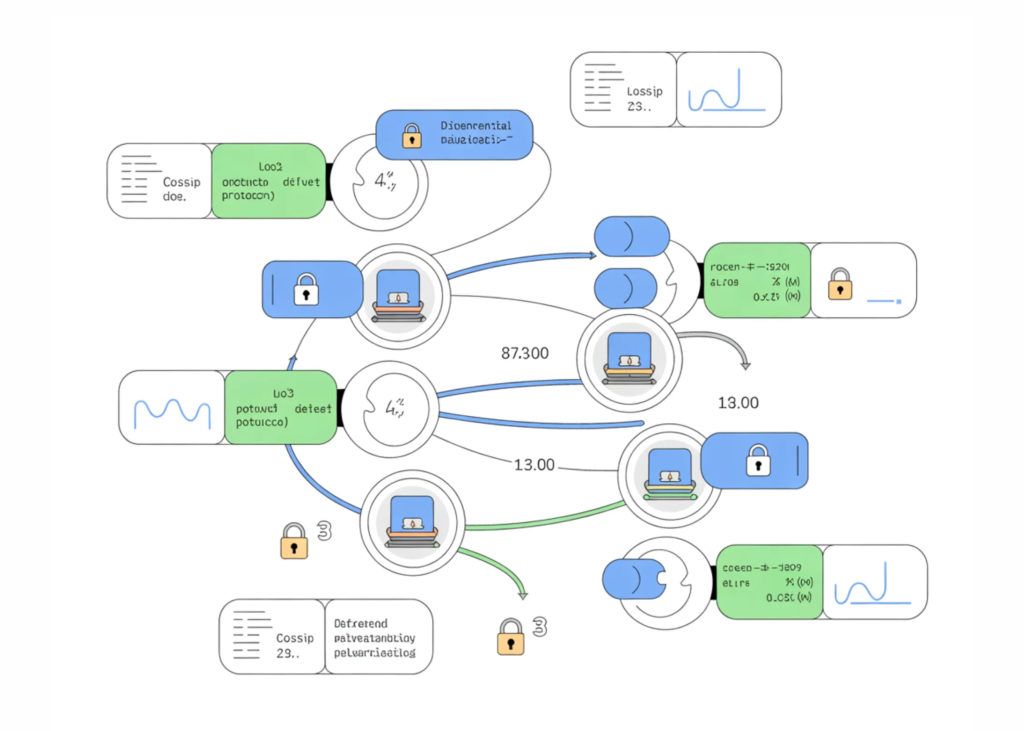

But how do nodes actually learn together without a server? Enter gossip protocols, a family of decentralized algorithms in which nodes repeatedly exchange information with randomly chosen neighbors. In our experiment, we’ll use a gossip protocol to simulate repeated local training and pairwise parameter averaging. This matters because the gossip pattern determines how privacy noise spreads through the network, and understanding that spread helps us design methods that are both more efficient and safer.
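Here’s a minimal sketch of that loop, under simplifying assumptions of our own: each node holds a single scalar parameter, its local loss is the toy objective `0.5 * (w - target) ** 2`, and the network is a ring. The function names are hypothetical, not taken from the post’s code.

```python
import random
import statistics


def local_step(w: float, target: float, lr: float) -> float:
    """One gradient-descent step on the toy local loss 0.5 * (w - target) ** 2."""
    return w - lr * (w - target)


def gossip_exchange(params: list[float], neighbors: dict[int, list[int]], rng: random.Random) -> None:
    """Pairwise averaging: a random node and a random neighbor both adopt their mean (in place)."""
    i = rng.randrange(len(params))
    j = rng.choice(neighbors[i])
    params[i] = params[j] = 0.5 * (params[i] + params[j])


def train_gossip(targets: list[float], rounds: int = 500, lr: float = 0.1,
                 exchanges_per_round: int = 4, seed: int = 0) -> list[float]:
    """Alternate local training on every node with a few random pairwise gossip exchanges."""
    rng = random.Random(seed)
    n = len(targets)
    neighbors = {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}  # ring topology (assumed)
    params = [0.0] * n
    for _ in range(rounds):
        params = [local_step(w, t, lr) for w, t in zip(params, targets)]
        for _ in range(exchanges_per_round):
            gossip_exchange(params, neighbors, rng)
    return params


if __name__ == "__main__":
    targets = [1.0, 2.0, 3.0, 6.0]
    params = train_gossip(targets)
    # Pairwise averaging never changes the network-wide mean, so the mean parameter
    # tracks the mean target even though individual nodes can still disagree.
    print(statistics.fmean(params), statistics.fmean(targets))
```

Averaging only pairs is the key design choice: each exchange touches two nodes, needs no coordinator, and leaves the network-wide average unchanged, which is why the mean of the node models tracks the global optimum of this toy problem even without a server.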

And speaking of safety, differential privacy (DP) protects participants by adding carefully calibrated random noise, typically to the model updates that nodes share, so that anyone observing those updates can’t reliably tell whether a given individual’s record was part of the training data. We’ll use a noise injection mechanism to enforce this guarantee, with the noise level set by the privacy budget: more noise means stronger privacy but noisier learning. It’s a crucial tool in a world where privacy is king.
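The post doesn’t specify its exact mechanism, so here’s a minimal sketch of one common choice: the Gaussian mechanism applied to a clipped update. Clipping bounds how much any single update can change, and the noise scale follows the classic bound σ = Δ·√(2 ln(1.25/δ))/ε, which only holds for 0 < ε < 1. The function names and the conservative sensitivity choice are illustrative assumptions.

```python
import math
import random


def clip_l2(update: list[float], clip_norm: float) -> list[float]:
    """Scale the update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def gaussian_sigma(epsilon: float, delta: float, sensitivity: float) -> float:
    """Noise std of the classic Gaussian mechanism (valid only for 0 < epsilon < 1)."""
    if not (0.0 < epsilon < 1.0 and 0.0 < delta < 1.0):
        raise ValueError("classic Gaussian bound requires 0 < epsilon < 1 and 0 < delta < 1")
    return sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def privatize_update(update: list[float], clip_norm: float, epsilon: float,
                     delta: float, rng: random.Random) -> list[float]:
    """Clip, then add independent Gaussian noise to every coordinate."""
    clipped = clip_l2(update, clip_norm)
    # Any two clipped updates differ by at most 2 * clip_norm in L2 norm,
    # which we use as a conservative sensitivity bound (an assumption of this sketch).
    sigma = gaussian_sigma(epsilon, delta, sensitivity=2.0 * clip_norm)
    return [x + rng.gauss(0.0, sigma) for x in clipped]


if __name__ == "__main__":
    rng = random.Random(0)
    for eps in (0.9, 0.5, 0.1):  # smaller epsilon = stronger privacy = more noise
        print(eps, gaussian_sigma(eps, 1e-5, sensitivity=2.0))
        print(privatize_update([3.0, 4.0], clip_norm=1.0, epsilon=eps, delta=1e-5, rng=rng))
```

Each noisy release spends privacy budget, so a multi-round training run also needs a composition (privacy accounting) step, which this sketch leaves out.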

So, what’s next? We’ll run a series of experiments, each at a different privacy level, and visualize the convergence trends and final accuracy to reveal the trade-offs between privacy guarantees and learning efficiency in decentralized systems. We’ll also compare aggregation schemes, centralized FedAvg versus gossip-based averaging, to see how each responds as privacy constraints tighten.
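Here’s a minimal, self-contained sketch of that kind of sweep on a toy problem of our own choosing: 8 nodes, a scalar model, the toy loss `0.5 * (w - t_i) ** 2` per node, and plain additive Gaussian noise whose standard deviation stands in for the privacy level (it isn’t calibrated to a specific ε). It compares centralized FedAvg against serverless ring gossip by final squared error to the global optimum. The data, helper names, and hyperparameters are assumptions, and none of its numbers are the post’s results.

```python
import random
import statistics

TARGETS = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]  # per-node optima (toy data, assumed)


def run_fedavg(targets: list[float], noise_std: float, rounds: int = 200,
               lr: float = 0.1, seed: int = 0) -> float:
    """Centralized FedAvg; returns squared error of the global model vs. the global optimum."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(rounds):
        # Every client takes one local step from the global model and adds noise before sending.
        local = [w - lr * (w - t) + rng.gauss(0.0, noise_std) for t in targets]
        w = statistics.fmean(local)  # the server averages all noisy client models
    return (w - statistics.fmean(targets)) ** 2


def run_gossip(targets: list[float], noise_std: float, rounds: int = 200, lr: float = 0.1,
               exchanges_per_round: int = 4, seed: int = 0) -> float:
    """Serverless ring gossip; returns mean squared error of the node models vs. the global optimum."""
    rng = random.Random(seed)
    n = len(targets)
    params = [0.0] * n
    for _ in range(rounds):
        # Each node trains locally and perturbs its own model with noise.
        params = [w - lr * (w - t) + rng.gauss(0.0, noise_std) for w, t in zip(params, targets)]
        for _ in range(exchanges_per_round):
            i = rng.randrange(n)
            j = (i + rng.choice((-1, 1))) % n  # one of node i's two ring neighbors
            params[i] = params[j] = 0.5 * (params[i] + params[j])
    optimum = statistics.fmean(targets)
    return statistics.fmean((w - optimum) ** 2 for w in params)


if __name__ == "__main__":
    for noise_std in (0.0, 0.1, 0.5, 1.0):  # larger noise stands in for a stricter privacy level
        fed = run_fedavg(TARGETS, noise_std)
        gos = run_gossip(TARGETS, noise_std)
        print(f"noise_std={noise_std:.1f}  fedavg_err={fed:.4f}  gossip_err={gos:.4f}")
```

Averaging the errors over several seeds and recording them every round, rather than only at the end, would give convergence curves of the kind the post describes.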

The takeaway is that decentralization can strongly affect how differential privacy noise propagates through a federated system. We saw that centralized FedAvg typically converges faster under weak privacy constraints, while gossip-based federated learning is more robust to noisy updates but slower. The lesson? When designing privacy-preserving federated systems, we need to consider how privacy, topology, and communication patterns interact.
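One way to build intuition for why topology shapes noise propagation is to inject a single noise spike at one node and watch where it goes. Under server averaging, every participant instantly receives a 1/n share of it; under pairwise gossip, the spike starts out concentrated near its source and only gradually dilutes toward that same 1/n share. This toy sketch (ring topology, hypothetical helper names) is an intuition aid, not a reproduction of the post’s experiments.

```python
import random


def fedavg_spread(n: int, spike: float) -> list[float]:
    """Server averaging: one client's noise reaches every model at once, scaled by 1/n."""
    return [spike / n] * n


def gossip_spread(n: int, spike: float, steps: int, seed: int = 0) -> list[float]:
    """Pairwise ring gossip: the spike starts at node 0 and diffuses one exchange at a time."""
    rng = random.Random(seed)
    x = [0.0] * n
    x[0] = spike
    for _ in range(steps):
        i = rng.randrange(n)
        j = (i + rng.choice((-1, 1))) % n  # a ring neighbor of node i
        x[i] = x[j] = 0.5 * (x[i] + x[j])  # preserves the total noise, only redistributes it
    return x


if __name__ == "__main__":
    n, spike = 8, 1.0
    print("fedavg:", fedavg_spread(n, spike))
    for steps in (5, 50, 500):
        print(f"gossip after {steps} exchanges:", [round(v, 3) for v in gossip_spread(n, spike, steps)])
```

In both cases the total noise is conserved; what changes is how quickly and how evenly it reaches every model, which is plausibly one reason the two schemes respond differently as privacy noise grows.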

**Get the Full Code (and Join the Conversation!)**

Curious about our full code? Check it out here! We can’t wait to hear your thoughts and feedback!