A team of researchers from China has launched AntAngelMed, a large open-source medical language model that the team describes as the largest and most capable of its kind currently available.

What Is AntAngelMed?

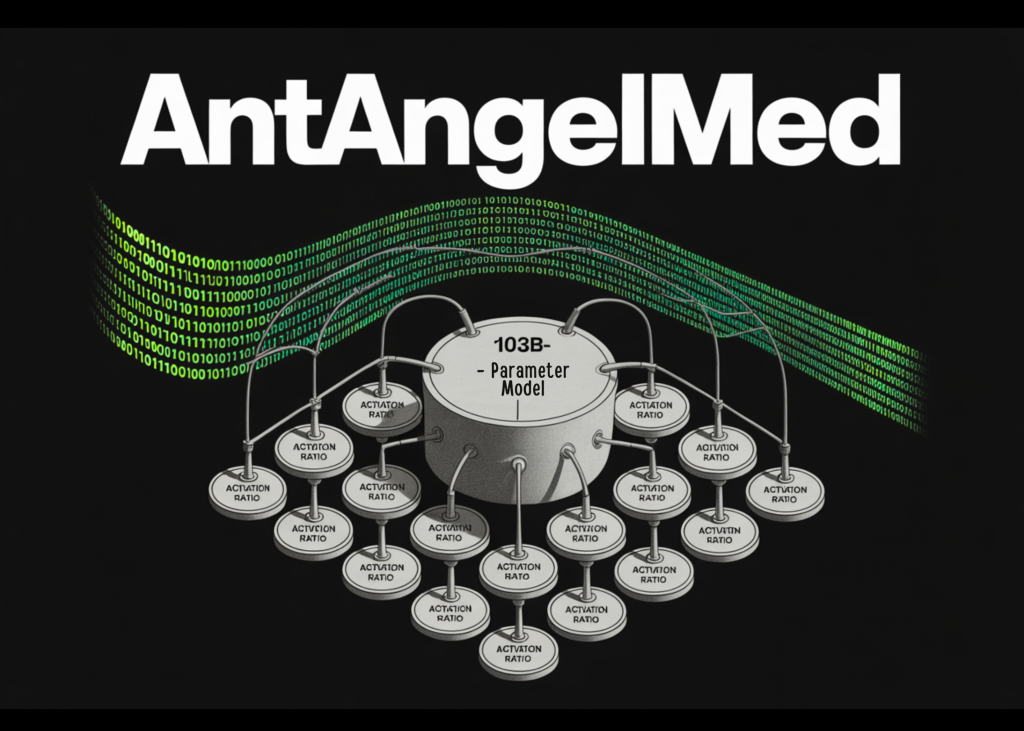

AntAngelMed is a medical-domain language model with 103 billion total parameters, but it does not activate all of those parameters during inference. Instead, it uses a Mixture-of-Experts (MoE) architecture with a 1/32 activation ratio, meaning only 6.1 billion parameters are active at any given time when processing a query.

It helps to understand how MoE architectures work. In a standard dense model, every parameter participates in processing every token. In an MoE model, the network is divided into many "expert" sub-networks, and a routing mechanism selects only a small subset of them to handle each input. This allows a very large total parameter count, which generally correlates with strong knowledge capacity, while keeping the actual compute cost of inference proportional to the smaller active parameter count.
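The routing idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not AntAngelMed's actual implementation: the function names (`top_k_indices`, `moe_forward`), the sigmoid gate scores, the renormalization over the chosen experts, and the tiny scaling "experts" are all hypothetical choices made for clarity.

```python
import math


def top_k_indices(scores, k):
    """Return the indices of the k largest scores, highest first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def moe_forward(x, router_weights, experts, k):
    """Toy MoE layer: score every expert, run only the top-k, mix their outputs."""
    # One router logit per expert (dot product of the router row with the input).
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    # Sigmoid gate scores (an illustrative choice; softmax gating is also common).
    gates = [1.0 / (1.0 + math.exp(-z)) for z in logits]
    chosen = top_k_indices(gates, k)
    gate_sum = sum(gates[i] for i in chosen)
    output = [0.0] * len(x)
    for i in chosen:
        expert_out = experts[i](x)  # only the selected experts do any work
        weight = gates[i] / gate_sum  # renormalize over the chosen experts
        output = [o + weight * y for o, y in zip(output, expert_out)]
    return output, chosen


# Hypothetical setup: 8 experts, where expert i scales its input by (i + 1).
experts = [lambda v, s=i + 1: [s * vi for vi in v] for i in range(8)]
router_weights = [[i - 3.5, 0.0] for i in range(8)]
output, chosen = moe_forward([1.0, 0.0], router_weights, experts, k=2)
print(chosen)  # [7, 6]: only 2 of the 8 experts ran for this input
```

All eight experts contribute to the total parameter count, but the compute for this input is paid only for the two that the router selected.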

AntAngelMed inherits this design from Ling-flash-2.0, a base model developed by inclusionAI and guided by what the team calls Ling Scaling Laws. The specific optimizations layered on top include: refined expert granularity, a tuned shared expert ratio, attention balance mechanisms, sigmoid routing without auxiliary loss, an MTP (Multi-Token Prediction) layer, QK-Norm, and Partial-RoPE (Rotary Position Embedding applied to a subset of attention heads rather than all of them). According to the research team, these design choices together allow small-activation MoE models to deliver up to 7× efficiency compared to similarly sized dense architectures, which means that with only 6.1B activated parameters, AntAngelMed can match the performance of a roughly 40B dense model. Separately, as output length grows during inference, the relative speed advantage can also reach 7× or more over dense models of similar size.
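The "roughly 40B" figure follows from simple arithmetic on the numbers quoted above. The short Python sketch below reproduces it; the function and constant names are hypothetical, and the 7× multiplier is the team's stated upper bound, not a measured value.

```python
def active_fraction(active_b, total_b):
    """Share of total parameters that are active per token."""
    return active_b / total_b


def dense_equivalent_b(active_b, efficiency_multiplier):
    """Dense model size (in billions) matched under a given efficiency multiplier."""
    return active_b * efficiency_multiplier


TOTAL_B = 103.0       # total parameters, in billions (from the article)
ACTIVE_B = 6.1        # activated parameters, in billions (from the article)
MAX_EFFICIENCY = 7.0  # "up to 7x" claim, treated here as an upper bound

print(f"{active_fraction(ACTIVE_B, TOTAL_B):.1%}")             # 5.9%
print(f"{dense_equivalent_b(ACTIVE_B, MAX_EFFICIENCY):.1f}B")  # 42.7B
```

At the 7× upper bound, 6.1B active parameters correspond to about 42.7B dense parameters, consistent with the "roughly 40B" claim. Note that 6.1B of 103B is about 5.9%, which is larger than 1/32 (about 3.1%). One plausible reading, which is an assumption here and not something the source states, is that the 1/32 ratio counts routed experts, while other components stay active for every token.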

Training Pipeline

AntAngelMed uses a three-stage training process designed to layer general language understanding on top of deep medical domain adaptation.

The first stage is continual pre-training on large-scale medical corpora, including encyclopedias, web text, and academic publications. This phase is built on top of the Ling-flash-2.0 checkpoint, giving the model a strong general reasoning foundation before medical specialization begins.

The second stage is Supervised Fine-Tuning (SFT), where the model is trained on a multi-source instruction dataset. This dataset mixes general reasoning tasks (math, programming, logic) to preserve chain-of-thought capabilities, alongside medical scenarios such as doctor–patient Q&A, diagnostic reasoning, and safety and ethics cases.

The third stage is Reinforcement Learning using the GRPO (Group Relative Policy Optimization) algorithm, combined with task-specific reward models. GRPO, originally introduced in the DeepSeekMath paper, is a variant of PPO that estimates baselines from group scores rather than a separate critic model, making it computationally lighter. Here, reward signals are designed to shape model behavior toward empathy, structured clinical responses, safety boundaries, and evidence-based reasoning, all with the goal of reducing hallucinations on medical questions.
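The group-based baseline can be illustrated with a short Python sketch. It covers only the advantage computation described in the DeepSeekMath paper, where each sampled response's reward is normalized by the mean and standard deviation of its group, and not the full policy update. The function name, the example rewards, and the use of the population standard deviation with a small epsilon are illustrative assumptions, not details of AntAngelMed's training code.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against its own group instead of a learned critic.

    `rewards` holds the scores of several responses sampled for the same prompt.
    """
    baseline = mean(rewards)  # the group mean replaces a critic's value estimate
    spread = pstdev(rewards)  # population std; eps guards against a zero spread
    return [(r - baseline) / (spread + eps) for r in rewards]


# Hypothetical rewards for four responses sampled for one medical question.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])  # [1.0, -1.0, -1.0, 1.0]
```

Responses that score above their group's average receive a positive advantage and are reinforced; those below receive a negative one. Because the baseline comes from the group itself, no separate critic model has to be trained or stored.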

Inference Performance

On H20 hardware, AntAngelMed exceeds 200 tokens per second, which the research team reports is roughly 3× faster than a 36 billion parameter dense model. With YaRN (Yet Another RoPE extensioN) extrapolation, it supports a 128K context length, long enough to handle full clinical documents, extended patient histories, or multi-turn medical dialogues.

The research team has also released an FP8 quantized version of the model. When this quantization is combined with EAGLE3 speculative decoding optimization, inference throughput at a concurrency of 32 improves significantly over FP8 alone: 71% on HumanEval, 45% on GSM8K, and 94% on Math-500. These benchmarks measure coding and math reasoning tasks, not medical tasks directly, but serve as proxies for the model's general throughput stability across output types.

Benchmark Results

On HealthBench, the open-source medical evaluation benchmark from OpenAI that uses simulated multi-turn medical dialogues to measure real-world clinical performance, AntAngelMed ranks first among all open-source models and surpasses a range of top proprietary models as well, with a particularly significant advantage on the HealthBench-Hard subset.

On MedAIBench, an evaluation system maintained by China's National Artificial Intelligence Medical Industry Pilot Facility, AntAngelMed ranks at the top level, with particularly strong scores in the medical knowledge Q&A and medical ethics and safety categories.

On MedBench, a benchmark for Chinese healthcare LLMs covering 36 independently curated datasets and roughly 700,000 samples across five dimensions (medical knowledge question answering, medical language understanding, medical language generation, complex medical reasoning, and safety and ethics), AntAngelMed ranks first overall.

Key Takeaways

- AntAngelMed is a 103B-parameter open-source medical LLM that activates only 6.1B parameters at inference time using a 1/32 activation-ratio MoE architecture inherited from Ling-flash-2.0.

- It uses a three-stage training pipeline: continual pre-training on medical corpora, SFT with mixed general and clinical instruction data, and GRPO-based reinforcement learning for safety and diagnostic reasoning.

- On H20 hardware, the model exceeds 200 tokens/s, roughly 3× faster than a comparable 36B dense model, and supports a 128K context length via YaRN extrapolation.

- AntAngelMed ranks first among open-source models on OpenAI's HealthBench, surpasses several proprietary models, and tops both the MedAIBench and MedBench leaderboards.

- The model is available on Hugging Face, ModelScope, and GitHub; model weights are Apache 2.0, code is MIT, and an FP8 quantized version is also released.
