Training frontier AI models isn’t just a compute problem; it’s increasingly a networking problem. And OpenAI just released its solution.

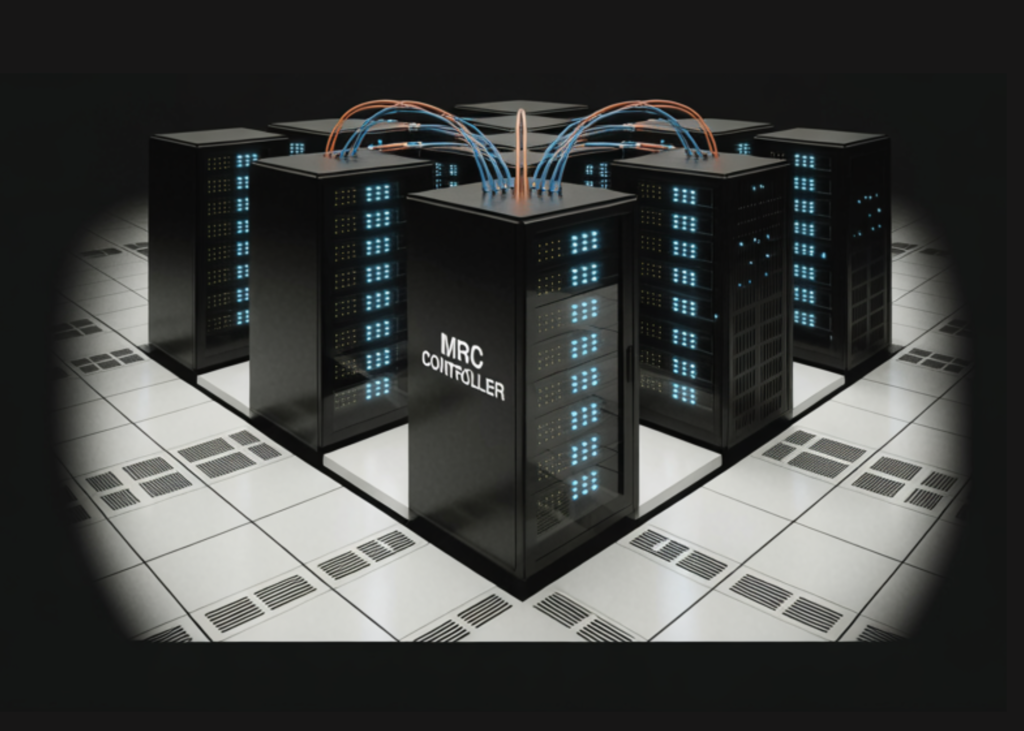

OpenAI announced the release of MRC (Multipath Reliable Connection), a novel networking protocol developed over the past two years in partnership with AMD, Broadcom, Intel, Microsoft, and NVIDIA. The specification was published through the Open Compute Project (OCP), enabling the broader industry to use and build on it.

Why Networking is the Hidden Bottleneck in AI Training

To understand why MRC matters, you need to understand what happens inside a supercomputer during model training. When training large AI models, a single step can involve many millions of data transfers. One transfer arriving late can ripple through the entire job, potentially causing GPUs to sit idle.

Network congestion, link failures, and device failures are the most common sources of delay and jitter in transfers, and these problems get more frequent, and harder to solve, as the size of the cluster increases. This is the compounding infrastructure challenge OpenAI set out to fix.

According to OpenAI, more than 900 million people use ChatGPT every week. Maintaining and improving these models at that scale means every second of GPU idle time represents real cost and capability loss. OpenAI states its goal as “not just to build a fast network, but also to build one that delivers very predictable performance, even in the presence of failures, to keep training jobs moving.”

What MRC Actually Does: Three Core Mechanisms

MRC is not a ground-up invention. It extends RDMA over Converged Ethernet (RoCE), an InfiniBand Trade Association (IBTA) standard that enables hardware-accelerated remote direct memory access among GPUs and CPUs. It draws on techniques developed by the Ultra Ethernet Consortium (UEC) and extends them with SRv6-based source routing to support large-scale AI networking fabrics.

RoCE is a protocol that allows one machine to read or write memory on another machine directly over an Ethernet network, bypassing the CPU for maximum throughput. SRv6 (Segment Routing over IPv6) takes this further: the sending machine encodes the exact route the packet should follow directly inside the packet header, so switches no longer need to run complex routing calculations. This reduces the processing load on switches and saves power, a significant factor at data center scale.
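To make the source-routing idea concrete, here is a minimal Python sketch. It is a toy model, not the SRv6 wire format and not MRC’s implementation: the `Packet` class, the `forward` and `trace` functions, and the switch names are all hypothetical. It only illustrates the division of labor described above, where the sender writes the whole hop list into the packet and each switch simply reads the next hop.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Packet:
    payload: bytes
    segments: List[str]   # hops chosen by the sender, in travel order (toy model)
    next_index: int = 0   # position of the next hop to visit


def forward(packet: Packet) -> Optional[str]:
    """What a switch does in this toy model: read the next hop, advance the pointer.

    No routing-table lookup and no path computation happen here.
    """
    if packet.next_index >= len(packet.segments):
        return None  # route exhausted: the packet has arrived
    hop = packet.segments[packet.next_index]
    packet.next_index += 1
    return hop


def trace(packet: Packet) -> List[str]:
    """Follow the packet until its segment list is exhausted."""
    visited = []
    hop = forward(packet)
    while hop is not None:
        visited.append(hop)
        hop = forward(packet)
    return visited


# The sending NIC picks the whole route up front (all names are made up).
pkt = Packet(payload=b"gradient-shard",
             segments=["leaf-3", "spine-17", "leaf-42", "nic-b"])
path_taken = trace(pkt)
print(path_taken)
```

Because the route lives in the packet, changing paths in this model means the sender writes a different `segments` list; nothing on the switches has to change.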

1. Adaptive Packet Spraying to Eliminate Congestion

Instead of sending each transfer over a single network path, MRC spreads packets across hundreds of paths simultaneously, reducing congestion in the core of the network. With traditional RoCEv2, packets were stuck on a single path from point A to point B, which contributes to congestion. To overcome this, MRC introduced Intelligent Packet-Spray Load Balancing, so that if a packet’s path is unusable, packets can traverse other paths on the network. This enables higher bandwidth utilization, reduced tail latency, and fine-grained load balancing at the packet level.
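The difference between pinning a flow to one path and spraying it can be sketched in a few lines of Python. This is an illustration under simplifying assumptions: `single_path` and `spray` are hypothetical helpers, and plain round-robin stands in for MRC’s adaptive path selection, which the article does not describe in detail.

```python
from collections import Counter
from itertools import cycle


def single_path(num_packets: int, flow_id: int, num_paths: int) -> Counter:
    """Every packet of a flow is pinned to one path derived from the flow id."""
    load = Counter()
    load[flow_id % num_paths] += num_packets
    return load


def spray(num_packets: int, num_paths: int,
          unusable: frozenset = frozenset()) -> Counter:
    """Spread packets round-robin over all usable paths, skipping unusable ones."""
    usable = [p for p in range(num_paths) if p not in unusable]
    if not usable:
        raise ValueError("no usable paths")
    load = Counter()
    for _, path in zip(range(num_packets), cycle(usable)):
        load[path] += 1
    return load


pinned = single_path(num_packets=1024, flow_id=7, num_paths=256)
sprayed = spray(num_packets=1024, num_paths=256)
degraded = spray(num_packets=1024, num_paths=256, unusable=frozenset({3, 9}))

print(max(pinned.values()))   # packets on the busiest path when pinned
print(max(sprayed.values()))  # packets on the busiest path when sprayed
print(len(degraded))          # paths still carrying traffic with two paths down
```

In this toy run the pinned flow puts all 1,024 packets on one path, while spraying puts 4 on each of 256 paths, and losing two paths only shifts a little extra load onto the remaining 254.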

2. Microsecond-Level Failure Recovery via SRv6 Static Source Routing

When network paths, links, or switches fail, MRC can detect the problem and route around it on a microsecond timescale. Conventional network fabrics can take seconds or even tens of seconds to stabilize after failures. A key architectural decision makes this possible: the switches don’t need to recompute routes or do anything other than blindly follow the static routes they were configured with. All routing intelligence lives at the NIC level, not the switch level. This is a deliberately unconventional design: dynamic routing in the switches is disabled entirely to prevent two adaptive mechanisms from interfering with each other.

Before MRC, if a link between a GPU’s network interface and a tier-0 switch failed, the training job would fail. With MRC, the job survives with reasonable performance. If an 8-port network interface loses one port, the maximum rate is reduced by one eighth. MRC detects this, recalculates paths to avoid the failed plane, and immediately tells peers not to use that plane for inbound traffic. Most failed links recover within a minute, at which point MRC brings the plane back into use.
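The one-eighth figure and the avoid-then-resume behavior can be modeled with a small Python sketch. Everything here is hypothetical (the `MultiPlaneNic` class, its method names, and the tuple-based peer notices); it assumes eight planes of 100Gb/s each and does not reflect how MRC actually signals peers.

```python
from dataclasses import dataclass, field


@dataclass
class MultiPlaneNic:
    """Toy model of a NIC whose bandwidth is split across independent planes."""
    num_planes: int = 8
    gbps_per_plane: int = 100
    failed: set = field(default_factory=set)
    peer_notices: list = field(default_factory=list)  # messages "sent" to peers

    def usable_planes(self) -> list:
        return [p for p in range(self.num_planes) if p not in self.failed]

    def max_rate_gbps(self) -> int:
        return len(self.usable_planes()) * self.gbps_per_plane

    def on_link_down(self, plane: int) -> None:
        """Stop using the plane and tell peers to avoid it for inbound traffic."""
        self.failed.add(plane)
        self.peer_notices.append(("avoid", plane))

    def on_link_up(self, plane: int) -> None:
        """Bring a recovered plane back into use."""
        self.failed.discard(plane)
        self.peer_notices.append(("resume", plane))


nic = MultiPlaneNic()
full_rate = nic.max_rate_gbps()

nic.on_link_down(5)
degraded_rate = nic.max_rate_gbps()

nic.on_link_up(5)
recovered_rate = nic.max_rate_gbps()

print(full_rate, degraded_rate, recovered_rate)
```

With one of eight planes down, the modeled NIC drops from 800Gb/s to 700Gb/s, which is the one-eighth reduction described above, and returns to full rate once the plane is reinstated.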

3. Multi-Plane Networks with Fewer Switch Tiers and Lower Cost

This is where MRC changes cluster architecture fundamentally. Instead of treating each network interface as one 800Gb/s link, it is split into multiple smaller links. For example, one interface can connect to eight different switches. A switch that can connect 64 ports at 800Gb/s can instead connect 512 ports at 100Gb/s. This makes it possible to build a network fully connecting about 131,000 GPUs with only two tiers of switches. A conventional 800Gb/s network would require three or four tiers.
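The 131,000 figure falls out of standard folded-Clos arithmetic, sketched below in Python. The formulas assume a textbook full-bisection leaf/spine (fat-tree) layout in which each non-top switch splits its ports evenly between downlinks and uplinks; the article does not state the exact topology, so treat this as a plausibility check. The function names are illustrative.

```python
def two_tier_endpoints(radix: int) -> int:
    """Endpoints in a two-tier full-bisection leaf/spine network.

    Each leaf uses half its ports for endpoints and half for spines, and
    each spine port reaches a distinct leaf, so there are up to `radix` leaves.
    """
    return radix * (radix // 2)


def three_tier_endpoints(radix: int) -> int:
    """Endpoints in a three-tier full-bisection fat tree: radix**3 / 4."""
    return radix ** 3 // 4


ports_800g = 64                    # switch radix at 800Gb/s per port
split = 8                          # each 800Gb/s port split into 8 x 100Gb/s
ports_100g = ports_800g * split    # radix at 100Gb/s per port

# Each GPU has one 100Gb/s port in each of the eight planes, and every
# plane is its own two-tier network, so the per-plane endpoint count is
# also the GPU count.
gpus_multi_plane = two_tier_endpoints(ports_100g)
gpus_two_tier_800g = two_tier_endpoints(ports_800g)
gpus_three_tier_800g = three_tier_endpoints(ports_800g)

print(ports_100g)             # 512
print(gpus_multi_plane)       # 131072
print(gpus_two_tier_800g)     # 2048
print(gpus_three_tier_800g)   # 65536
```

Under these assumptions, two tiers of 64-port 800Gb/s switches reach only 2,048 GPUs and three tiers reach 65,536, so connecting roughly 131,000 GPUs at full bisection bandwidth would need a fourth tier (or oversubscription), which is consistent with the "three or four tiers" stated above.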

The savings compound further: the research team quantifies that for full bisection bandwidth, the two-tier multi-plane design requires 2/3 of the optics and 3/5 the number of switches compared to a three-tier network. Fewer switch tiers also means lower latency (the longest path traverses only three switches rather than five or seven) and a smaller blast radius when any individual component fails.
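One way to see where 2/3 and 3/5 could come from is to count, per unit of endpoint bandwidth, how many link layers and how many switch ports a full-bisection folded-Clos network consumes. The Python sketch below is a reconstruction under stated assumptions, not the paper’s own derivation: it assumes every link layer uses optics and that switch count is proportional to total switch port bandwidth (the same switch silicon in both designs).

```python
from fractions import Fraction


def link_layers(tiers: int) -> int:
    """Link layers crossed per unit of endpoint bandwidth.

    One endpoint-to-switch layer plus one layer per boundary between tiers.
    """
    return tiers


def switch_ports_per_endpoint(tiers: int) -> int:
    """Switch ports consumed per unit of endpoint bandwidth.

    Every tier except the top spends a down port and an up port;
    the top tier spends only a down port.
    """
    return 2 * tiers - 1


optics_ratio = Fraction(link_layers(2), link_layers(3))
switch_ratio = Fraction(switch_ports_per_endpoint(2),
                        switch_ports_per_endpoint(3))

print(optics_ratio, switch_ratio)  # 2/3 3/5
```

The same counting also gives the path lengths quoted above: the longest path through a T-tier network crosses 2T - 1 switches, which is three for two tiers, five for three tiers, and seven for four.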

Hardware: Which NICs and Switches Run MRC

According to the research paper, MRC is already running in production on specific, named hardware. It’s implemented across 400 and 800Gb/s RDMA NICs, including NVIDIA ConnectX-8, AMD Pollara, AMD Vulcano, and Broadcom Thor Ultra, with SRv6 switch support on NVIDIA Spectrum-4 and Spectrum-5 (running Cumulus and SONiC) and Broadcom Tomahawk 5 via Arista EOS. On the protocol side, AMD contributed the NSCC congestion control algorithm, now part of the UEC Congestion Control specification, along with IB/RDMA transport semantic layer extensions that allow MRC to integrate with existing RDMA programming models while adding the multipath capabilities that set it apart from traditional transports.

Already in Production: From Stargate to Fairwater

MRC isn’t just a prototype. It’s already deployed across all of OpenAI’s largest NVIDIA GB200 supercomputers used to train frontier models, including the site with Oracle Cloud Infrastructure (OCI) in Abilene, Texas, and Microsoft’s Fairwater supercomputers in Atlanta and Wisconsin. MRC has been used to train multiple OpenAI models, leveraging hardware from NVIDIA and Broadcom.

MRC has been used specifically to train frontier large language models for ChatGPT and Codex. During the training of a recent frontier model, OpenAI had to reboot four tier-1 switches. With MRC, the company didn’t need to coordinate the reboot with the teams running training jobs in the cluster.

Key Takeaways

- OpenAI Introduces MRC: OpenAI partnered with AMD, Broadcom, Intel, Microsoft, and NVIDIA to release MRC (Multipath Reliable Connection) through the Open Compute Project (OCP).

- Packet Spraying Cuts Congestion: MRC spreads packets across hundreds of paths simultaneously, reducing core congestion and tail latency during large-scale GPU training.

- Microsecond Failure Recovery: MRC detects link and switch failures and reroutes traffic in microseconds, keeping training jobs alive through failures that would previously have caused full job termination.

- Two-Tier Topology for About 131,000 GPUs: By splitting 800Gb/s interfaces into eight 100Gb/s planes, MRC supports supercomputers with over 100,000 GPUs using only two tiers of switches instead of three or four.

- Already Used for ChatGPT and Codex: MRC is already deployed across OpenAI’s largest NVIDIA GB200 supercomputers and has been used to train frontier large language models for ChatGPT and Codex.

Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.