If you have been running reinforcement learning (RL) post-training on a language model for math reasoning, code generation, or any verifiable task, you have almost certainly stared at a progress bar while your GPU cluster burns through rollout generation. A team of researchers from NVIDIA proposes a precise fix: integrate speculative decoding into the RL training loop itself, and do it in a way that preserves the target model's exact output distribution.

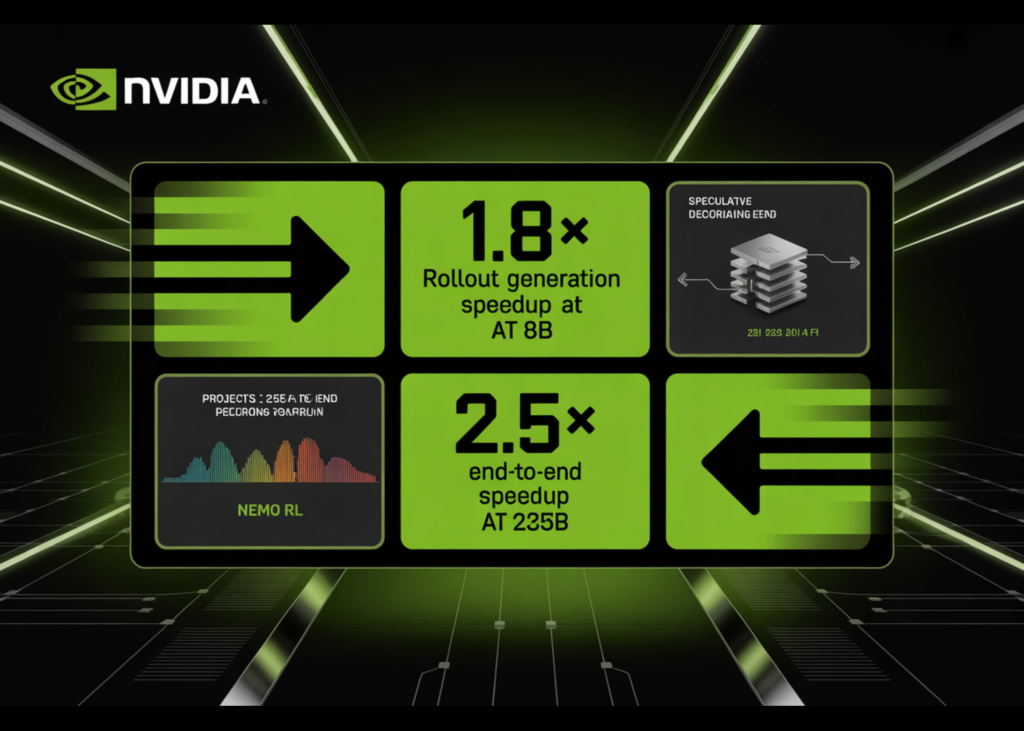

The research team integrated speculative decoding directly into NeMo RL v0.6.0 with a vLLM backend, delivering lossless rollout acceleration at the 8B scale (measured) and the 235B scale (projected). The latest NeMo RL v0.6.0 release officially ships speculative decoding as a supported feature, alongside the SGLang backend, the Muon optimizer, and YaRN long-context training.

Why Rollout Generation is the Bottleneck

To understand the problem, it helps to see how a synchronous RL training step breaks down. In NeMo RL, each step consists of five stages: data loading, weight synchronization and backend preparation (prepare), rollout generation (gen), log-probability recomputation (logprob), and policy optimization (train).

The research team measured this breakdown on Qwen3-8B under two workloads: RL-Think, which continues training a reasoning-capable model, and RL-Zero, which starts from a base model and learns reasoning from scratch. In both cases, rollout generation accounts for 65–72% of total step time, while log-probability recomputation and training together take only about 27–33%. This makes generation the one stage worth targeting for acceleration, and its share of the step sets the ceiling for any rollout-side optimization.
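The "ceiling" here is Amdahl's law: if generation is a fraction f of the step and becomes S times faster, the step speeds up by 1 / ((1 − f) + f / S), and can never exceed 1 / (1 − f). Below is a minimal sketch. It assumes the non-generation stages keep exactly the same wall-clock time when generation gets faster. The function names are illustrative and are not NeMo RL code.

```python
def end_to_end_speedup(gen_fraction: float, gen_speedup: float) -> float:
    """Amdahl's law: only the generation share of a step is accelerated."""
    if not 0.0 <= gen_fraction <= 1.0:
        raise ValueError("gen_fraction must lie in [0, 1]")
    if gen_speedup <= 0.0:
        raise ValueError("gen_speedup must be positive")
    # New step time as a fraction of the old one: untouched part + shrunk part.
    return 1.0 / ((1.0 - gen_fraction) + gen_fraction / gen_speedup)


def speedup_ceiling(gen_fraction: float) -> float:
    """Limit of end_to_end_speedup as gen_speedup grows without bound."""
    if gen_fraction >= 1.0:
        return float("inf")
    return 1.0 / (1.0 - gen_fraction)


if __name__ == "__main__":
    for share in (0.65, 0.72):
        print(
            f"generation share {share:.0%}: "
            f"1.8x faster generation -> {end_to_end_speedup(share, 1.8):.2f}x per step, "
            f"ceiling {speedup_ceiling(share):.2f}x"
        )
```

With a 65% generation share, a 1.8× faster generation stage gives about 1.41× per step. Even infinitely fast generation could not exceed about 2.86×. At a 72% share the ceiling rises to about 3.57×.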

What Speculative Decoding Actually Does

Speculative decoding is a technique in which a smaller, faster draft model proposes several tokens ahead, and the larger target model (the one you are actually training) verifies them using a rejection sampling procedure. The key property, and the reason it matters for RL, is that the rejection procedure is mathematically guaranteed to produce the same output distribution as if the target model had generated those tokens autoregressively. There is no distribution mismatch, no need for off-policy corrections, and no change to the training signal.
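The acceptance rule can be checked on a toy vocabulary. This is a minimal single-position sketch of the standard speculative sampling rule. A drafted token x is accepted with probability min(1, p(x)/q(x)); otherwise a replacement is resampled from the normalized residual max(0, p − q). The sketch assumes the draft distribution q and the target distribution p are given as small dictionaries. Real engines apply the rule to every drafted position inside a batched GPU kernel. `output_distribution` computes the exact law of the emitted token analytically, and `speculative_step` samples it. All names are hypothetical.

```python
import random
from typing import Dict, Hashable, Tuple

Dist = Dict[Hashable, float]  # token -> probability


def residual(p: Dist, q: Dist) -> Dist:
    """Normalized max(0, p - q): the distribution used after a rejection."""
    diff = {x: max(0.0, p[x] - q.get(x, 0.0)) for x in p}
    total = sum(diff.values())
    return {x: v / total for x, v in diff.items()}


def speculative_step(p: Dist, q: Dist, rng: random.Random) -> Tuple[Hashable, bool]:
    """Draft one token from q, then accept it or replace it so the result follows p."""
    draft_tokens = list(q)
    x = rng.choices(draft_tokens, weights=[q[t] for t in draft_tokens])[0]
    # Accept with probability min(1, p(x) / q(x)); q(x) > 0 because x was sampled from q.
    if rng.random() < min(1.0, p.get(x, 0.0) / q[x]):
        return x, True
    r = residual(p, q)
    fix_tokens = list(r)
    return rng.choices(fix_tokens, weights=[r[t] for t in fix_tokens])[0], False


def output_distribution(p: Dist, q: Dist) -> Dist:
    """Exact law of the emitted token: min(p, q)(x) + P(reject) * residual(x)."""
    accepted = {x: min(p[x], q.get(x, 0.0)) for x in p}
    reject_prob = 1.0 - sum(accepted.values())
    if reject_prob <= 1e-15:
        return accepted  # draft already matches the target
    r = residual(p, q)
    return {x: accepted[x] + reject_prob * r[x] for x in p}


if __name__ == "__main__":
    target = {"a": 0.5, "b": 0.3, "c": 0.2}  # toy target-model distribution p
    draft = {"a": 0.2, "b": 0.5, "c": 0.3}   # toy draft-model distribution q
    print(output_distribution(target, draft))
    rng = random.Random(0)
    counts = {t: 0 for t in target}
    n = 100_000
    for _ in range(n):
        token, _accepted = speculative_step(target, draft, rng)
        counts[token] += 1
    print({t: c / n for t, c in counts.items()})
```

The analytic and sampled paths agree. The accepted mass min(p, q) plus the rejected mass redistributed along the residual adds back up to p exactly. The empirical frequencies therefore converge to the target distribution, not to the draft.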

This matters because in RL post-training, the training reward depends on the policy's own samples. Techniques such as asynchronous execution, off-policy replay, or low-precision rollouts all trade some amount of training fidelity for throughput. Speculative decoding trades nothing: the rollouts are identical in distribution to what the target model would have generated on its own, just produced faster.

The System Integration Problem

Adding a draft model to a serving backend is straightforward. Adding one to an RL training loop is not. Every time the policy updates, the rollout engine must receive new weights, and the draft model must stay aligned with the evolving policy. Log-probabilities, KL penalties, and the GRPO policy loss must all be computed against the target (verifier) policy, not the draft; otherwise the optimization objective is silently corrupted.
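The sketch below makes the "against the target policy" requirement concrete. It is a minimal per-token version of a GRPO-style objective: a PPO-style clipped importance ratio with group-standardized advantages, plus the k3 KL estimator. The function names, the clip and KL coefficients, and the use of the population standard deviation are assumptions for illustration, not NeMo RL's implementation. Every log-probability argument comes from the target policy (current, rollout-time, or reference). No draft-model probability appears anywhere in the loss.

```python
import math
from statistics import mean, pstdev
from typing import List, Sequence


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Group-relative advantages: standardize rewards among samples of one prompt."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def grpo_token_loss(
    logp_new: float,   # log pi_theta(token): recomputed by the target policy being trained
    logp_old: float,   # log pi_old(token): target policy at rollout time, NOT the draft
    logp_ref: float,   # log pi_ref(token): frozen reference policy for the KL penalty
    advantage: float,
    clip_eps: float = 0.2,
    kl_beta: float = 0.01,
) -> float:
    """Per-token clipped surrogate plus KL penalty (hypothetical coefficients)."""
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    surrogate = min(ratio * advantage, clipped_ratio * advantage)
    # "k3" estimator of KL(pi_theta || pi_ref): exp(d) - d - 1 with d = logp_ref - logp_new; always >= 0.
    d = logp_ref - logp_new
    kl = math.exp(d) - d - 1.0
    return -(surrogate - kl_beta * kl)


if __name__ == "__main__":
    advantages = group_advantages([1.0, 0.0, 0.0, 1.0])  # e.g. pass/fail rewards for 4 rollouts
    print([round(a, 3) for a in advantages])
    print(grpo_token_loss(logp_new=-0.9, logp_old=-1.0, logp_ref=-1.1, advantage=advantages[0]))
```

Speculative decoding only changes how the sampled tokens are produced. The lossless guarantee means those tokens are distributed as the target would have sampled them, so this loss needs no correction term for the draft.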

The NVIDIA research team handles this in NeMo RL with a two-path architecture. The general path uses EAGLE-3, a drafting framework that works with any pretrained model without requiring native multi-token prediction (MTP) support. A native path is also available for models that ship with built-in MTP heads. When online draft adaptation is enabled, the hidden states and log-probabilities from the MegatronLM verifier forward pass are cached and reused to supervise the draft head through a gradient-detached pathway, so draft training never interferes with the policy gradient signal.
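A toy model illustrates how a draft can be supervised from cached verifier outputs without touching the policy gradient. This minimal sketch assumes a single linear softmax draft head trained by soft-label cross-entropy against the verifier's cached next-token probabilities. EAGLE-3's real draft head consumes features from several verifier layers and is trained in a deep-learning framework. There, `detach()` or `stop_gradient` plays the role that "read-only constant" plays here. All names and values are hypothetical.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def softmax(logits: Vector) -> Vector:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def draft_head_step(W: Matrix, hidden: Vector, target_probs: Vector, lr: float) -> float:
    """One SGD step on a toy linear draft head, using one cached verifier record.

    `hidden` (verifier hidden state) and `target_probs` (verifier next-token
    probabilities) are read-only constants here: gradients are taken only with
    respect to W, mirroring a detached cache that cannot feed back into the policy.
    Returns the soft-label cross-entropy measured before the update.
    """
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in W]
    q = softmax(logits)
    loss = -sum(p * math.log(qi) for p, qi in zip(target_probs, q) if p > 0.0)
    # For softmax + cross-entropy, d(loss)/d(logit_i) = q_i - p_i.
    for i, row in enumerate(W):
        g = q[i] - target_probs[i]
        for j in range(len(row)):
            row[j] -= lr * g * hidden[j]
    return loss


if __name__ == "__main__":
    W = [[0.0, 0.0] for _ in range(3)]   # 3-token vocabulary, 2-dim hidden state
    cached_hidden = [1.0, 0.5]           # hypothetical cached verifier hidden state
    cached_probs = [0.7, 0.2, 0.1]       # hypothetical cached verifier distribution
    losses = [draft_head_step(W, cached_hidden, cached_probs, lr=0.5) for _ in range(200)]
    print(f"loss {losses[0]:.3f} -> {losses[-1]:.3f}")
```

The loss falls toward the entropy of the verifier distribution, which is the lowest value cross-entropy can reach. Only `W` changes. The cached inputs come out of the loop unmodified, which is the property the gradient-detached pathway is meant to guarantee.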

Measured Outcomes at 8B Scale

On 32 GB200 GPUs (8 GB200 NVL72 nodes, 4 GPUs per node), EAGLE-3 reduces generation latency from 100 seconds to 56.6 seconds on RL-Zero, a 1.8× generation speedup. On RL-Think, it drops from 133.6 seconds to 87.0 seconds, a 1.54× speedup. Because log-probability recomputation and training are unchanged, these generation-side gains translate to overall step speedups of 1.41× on RL-Zero and 1.35× on RL-Think. Validation accuracy on AIME-2024 evolves identically under autoregressive and speculative decoding throughout training, confirming that the lossless guarantee holds in practice.

The research team also tests n-gram drafting as a model-free speculative baseline. Despite reaching acceptance lengths of 2.47 on RL-Zero and 2.05 on RL-Think, n-gram drafting is slower than the autoregressive baseline in both settings (0.7× and 0.5×, respectively). This is a critical finding for practitioners: a good acceptance length is necessary but not sufficient. If the verification overhead is high enough, speculation makes things worse.
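For readers unfamiliar with model-free drafting, here is a minimal "prompt lookup" style n-gram drafter. It assumes the drafter simply finds the most recent earlier occurrence of the trailing n-gram in the context and copies what followed it. This is broadly the idea behind the n-gram speculation method offered by vLLM, but the function and its defaults here are hypothetical.

```python
from typing import List, Sequence


def ngram_draft(tokens: Sequence[int], n: int = 3, k: int = 3) -> List[int]:
    """Propose up to k draft tokens by looking up the trailing n-gram in the context.

    Finds the most recent earlier occurrence of the last n tokens and copies
    the tokens that followed it. An empty list means "no proposal": the engine
    would fall back to ordinary one-token decoding for this step.
    """
    if n <= 0 or k <= 0 or len(tokens) <= n:
        return []
    suffix = list(tokens[-n:])
    # Scan from the most recent candidate backwards; start = len(tokens) - n
    # would be the suffix itself, so the last candidate start is one earlier.
    for start in range(len(tokens) - n - 1, -1, -1):
        if list(tokens[start:start + n]) == suffix:
            return list(tokens[start + n:start + n + k])
    return []


if __name__ == "__main__":
    context = [5, 8, 13, 21, 34, 2, 5, 8, 13]
    print(ngram_draft(context))
```

Drafting this way costs almost nothing. Every proposed token, however, still has to be verified in the target model's forward pass. That is how a respectable acceptance length can still lose to plain decoding once the verification overhead dominates.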

Three Configuration Choices That Determine Realized Speedup

The research team isolates three operational choices that practitioners must get right.

Draft initialization matters more than generic drafting ability. An EAGLE-3 draft initialized on the DAPO post-training dataset achieves a 1.77× generation speedup on RL-Zero, while a draft initialized on the general-purpose UltraChat and Magpie datasets achieves only 1.51× at the same draft length. The draft must be aligned with the actual rollout distribution encountered during RL, not just a broad chat distribution.

Draft length has a non-obvious optimum. At draft length k=3, RL-Zero achieves a 1.77× speedup and RL-Think achieves 1.53×. Increasing to k=5 raises the acceptance length but drops the speedup to 1.44× on RL-Zero and 0.84× on RL-Think, the latter already slower than autoregressive decoding. At k=7, RL-Zero drops further to 1.21× and RL-Think to 0.71×. The contrast matters. RL-Zero's rollouts come from a base model that starts with short outputs, which makes them easier for the draft to predict even at high k. RL-Think's fully developed reasoning traces are harder to speculate over, so the overhead of longer drafts erases the benefit sooner. More speculative work per step can cancel out the benefit of higher acceptance alone, especially in harder generation regimes.
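A standard back-of-the-envelope model from the speculative decoding literature shows why a longer draft can hurt. Suppose each drafted token is accepted independently with probability α. A verify step with k drafts then emits (1 − α^(k+1)) / (1 − α) tokens on average, roughly what "acceptance length" measures, while its cost keeps growing with k. The sketch below makes two simplifying assumptions: i.i.d. acceptance and a single lumped per-draft-token overhead. Batched RL rollouts satisfy neither exactly. The parameter values are illustrative, not measured.

```python
from typing import Dict


def expected_tokens_per_step(alpha: float, k: int) -> float:
    """Mean tokens emitted per verify step: (1 - alpha^(k+1)) / (1 - alpha).

    Counts accepted draft tokens plus the one token the target always
    contributes (a correction on rejection, a bonus token if all k pass).
    """
    if alpha >= 1.0:
        return float(k + 1)
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def modeled_speedup(alpha: float, k: int, overhead_per_draft_token: float) -> float:
    """Tokens per step divided by step cost, in units of one plain decode step.

    overhead_per_draft_token lumps drafting and extra verification work; it is
    a hypothetical knob, not a measured quantity.
    """
    return expected_tokens_per_step(alpha, k) / (1.0 + k * overhead_per_draft_token)


def best_draft_length(alpha: float, overhead_per_draft_token: float, max_k: int = 8) -> int:
    scores: Dict[int, float] = {
        k: modeled_speedup(alpha, k, overhead_per_draft_token) for k in range(1, max_k + 1)
    }
    return max(scores, key=scores.__getitem__)


if __name__ == "__main__":
    for alpha in (0.7, 0.4):  # "easier" vs "harder" toy regimes
        row = ", ".join(f"k={k}: {modeled_speedup(alpha, k, 0.25):.2f}x" for k in (3, 5, 7))
        print(f"alpha={alpha}: {row}")
```

With these made-up values, the easier regime stays above 1× through k=7 (about 1.45×, 1.31×, and 1.14× at k=3, 5, and 7). The harder regime is already below 1× at k=3 (about 0.93×, 0.74×, and 0.61×). Tokens per step rise with k in both cases; the cost simply rises faster. The qualitative shape mirrors the RL-Zero vs. RL-Think contrast above, though the model is not fitted to those measurements.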

Online draft adaptation, which updates the draft during RL using rollouts generated by the current policy, helps most when the draft is weakly initialized. For a DAPO-initialized draft, offline and online configurations perform almost identically (1.77× vs. 1.78× on RL-Zero). For an UltraChat-initialized draft, online updating improves the speedup from 1.51× to 1.63× on RL-Zero.

Interaction with asynchronous execution was also tested directly at 8B scale, not just in simulation. The research team ran RL-Think at policy lag 1 in a 16-node non-colocated configuration, with 12 nodes dedicated to generation and 4 to training. In asynchronous mode, most of rollout generation is already hidden behind log-probability recomputation and policy updates. The relevant quantity is therefore the exposed generation time that remains on the critical path. Speculative decoding reduces that exposed generation time from 10.4 seconds to 0.6 seconds per step and lowers the effective step time from 75.0 seconds to 60.5 seconds (1.24×). The gain is smaller than in synchronous RL, as expected, since asynchronous overlap already hides much of the rollout cost. It nonetheless confirms that the two mechanisms are genuinely complementary rather than redundant.
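The complementarity argument can be captured in a toy pipeline model. This minimal sketch assumes generation for the next step overlaps perfectly with log-probability recomputation and training (lumped together as `train`). It ignores weight synchronization and other fixed costs unless they are passed in as `other`. The function names and numbers are hypothetical and are not fitted to the measured 75.0 s and 60.5 s.

```python
def sync_step_time(gen: float, train: float, other: float = 0.0) -> float:
    """Synchronous RL: generation, then logprob recomputation + training, in sequence."""
    return other + gen + train


def exposed_generation(gen: float, train: float) -> float:
    """Generation time that one-step-lag overlap cannot hide behind training."""
    return max(0.0, gen - train)


def async_step_time(gen: float, train: float, other: float = 0.0) -> float:
    """Idealized policy-lag-1 pipeline: rollouts for step t+1 overlap training on step t."""
    return other + train + exposed_generation(gen, train)


if __name__ == "__main__":
    gen, train, spec = 70.0, 60.0, 1.5  # hypothetical seconds and generation speedup
    for name, step in (("sync", sync_step_time), ("async", async_step_time)):
        before, after = step(gen, train), step(gen / spec, train)
        print(f"{name}: {before:.1f}s -> {after:.1f}s ({before / after:.2f}x)")
```

With these made-up numbers, a 1.5× faster generation stage shortens the synchronous step from 130.0 s to about 106.7 s (about 1.22×). The asynchronous step only drops from 70.0 s to 60.0 s (about 1.17×), because overlap had already hidden most of the rollout. Once generation fits entirely inside the training window, further speculative gains stop showing up in this model.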

Projected Gains at 235B Scale

Using a proprietary GPU performance simulator calibrated to device-level compute, memory, and interconnect characteristics, the research team projected speculative decoding gains at larger scales. For Qwen3-235B-A22B running synchronous RL on 512 GB200 GPUs, draft length k=3 with an acceptance length of 3 tokens yields a 2.72× rollout speedup and a 1.70× end-to-end speedup.

At the most favorable simulated operating point (Qwen3-235B-A22B on 2048 GB200 GPUs with asynchronous RL at policy lag 2), the rollout speedup reaches roughly 3.5×, translating to a projected 2.5× end-to-end training speedup. Speculative decoding and asynchronous execution are described as complementary: speculation reduces the cost of each individual rollout, while asynchronous overlap hides the remaining generation time behind training and log-probability computation.

Key Takeaways

- Rollout generation is the dominant bottleneck in RL post-training, accounting for 65–72% of total step time in synchronous RL workloads. That makes it the one stage where acceleration has a meaningful effect on end-to-end training speed.

- Speculative decoding via EAGLE-3 delivers lossless rollout acceleration, reaching a 1.8× generation speedup at 8B scale (1.41× overall step speedup) without changing the target model's output distribution. By contrast, asynchronous execution, off-policy replay, and low-precision rollouts all trade training fidelity for throughput.

- Draft initialization quality matters more than draft length: in-domain (DAPO-trained) drafts outperform general chat-domain drafts by a meaningful margin. Longer draft lengths (k≥5) consistently backfire in harder reasoning workloads, making k=3 the reliable default.

- Simulator projections show gains scaling up substantially, reaching ~3.5× rollout speedup and a projected ~2.5× end-to-end training speedup at 235B scale on 2048 GB200 GPUs. The method is already available in NeMo RL v0.6.0 under Apache 2.0.

Check out the Full Paper and NeMo RL Repo.