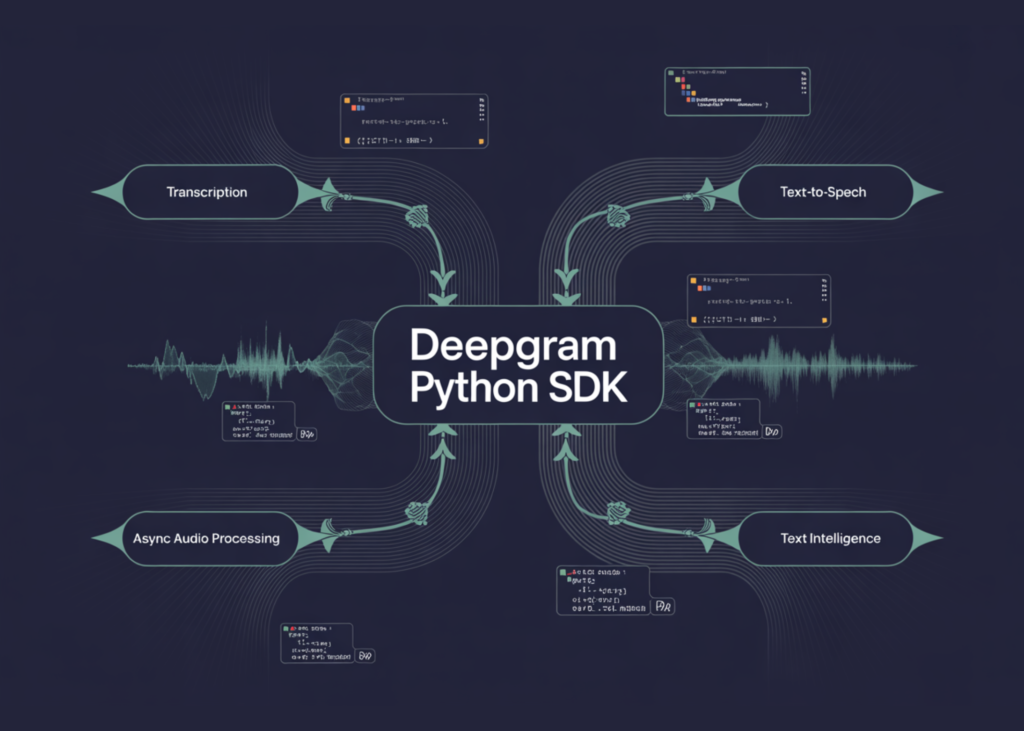

In this tutorial, we build an advanced, hands-on workflow with the Deepgram Python SDK and explore how modern voice AI capabilities come together in a single Python environment. We set up authentication, connect both synchronous and asynchronous Deepgram clients, and work directly with real audio data to understand how the SDK handles transcription, speech generation, and text analysis in practice. We transcribe audio from both a URL and a local file, inspect confidence scores, word-level timestamps, speaker diarization, paragraph formatting, and AI-generated summaries, and then extend the pipeline to async processing for faster, more scalable execution. We also generate speech with multiple TTS voices, analyze text for sentiment, topics, and intents, and examine advanced transcription controls such as keyword search, replacement, boosting, raw response access, and structured error handling. Through this process, we create a practical, end-to-end Deepgram voice AI workflow that is both technically detailed and easy to adapt for real-world applications.

!pip install deepgram-sdk httpx --quiet

import os, asyncio, textwrap, urllib.request
from getpass import getpass

from deepgram import DeepgramClient, AsyncDeepgramClient
from deepgram.core.api_error import ApiError
from IPython.display import Audio, display

# Prompt for the key so it never appears in the notebook source.
DEEPGRAM_API_KEY = getpass("🔑 Enter your Deepgram API key: ")
os.environ["DEEPGRAM_API_KEY"] = DEEPGRAM_API_KEY

client = DeepgramClient(api_key=DEEPGRAM_API_KEY)
async_client = AsyncDeepgramClient(api_key=DEEPGRAM_API_KEY)

AUDIO_URL = "https://dpgr.am/spacewalk.wav"
AUDIO_PATH = "/tmp/sample.wav"
urllib.request.urlretrieve(AUDIO_URL, AUDIO_PATH)

def read_audio(path=AUDIO_PATH):
    with open(path, "rb") as f:
        return f.read()

def _get(obj, key, default=None):
    """Get a field from either a dict or an object; v6 returns both."""
    if isinstance(obj, dict):
        return obj.get(key, default)
    return getattr(obj, key, default)

def get_model_name(meta):
    mi = _get(meta, "model_info")
    if mi is None:
        return "n/a"
    return _get(mi, "name", "n/a")

def tts_to_bytes(response) -> bytes:
    """v6 generate() returns a generator of chunks or an object with .stream."""
    if hasattr(response, "stream"):
        return response.stream.getvalue()
    return b"".join(chunk for chunk in response if isinstance(chunk, bytes))

def save_tts(response, path: str) -> str:
    with open(path, "wb") as f:
        f.write(tts_to_bytes(response))
    return path

print("✅ Deepgram client ready | sample audio downloaded")

print("\n" + "="*60)
print("📼 SECTION 2: Pre-Recorded Transcription from URL")
print("="*60)

response = client.listen.v1.media.transcribe_url(
    url=AUDIO_URL,
    model="nova-3",
    smart_format=True,
    diarize=True,
    language="en",
    utterances=True,
    filler_words=True,
)

transcript = response.results.channels[0].alternatives[0].transcript
print(f"\n📝 Full Transcript:\n{textwrap.fill(transcript, 80)}")

confidence = response.results.channels[0].alternatives[0].confidence
print(f"\n🎯 Confidence: {confidence:.2%}")

words = response.results.channels[0].alternatives[0].words
print(f"\n🔤 First 5 words with timing:")
for w in words[:5]:
    print(f"  '{w.word}' start={w.start:.2f}s end={w.end:.2f}s conf={w.confidence:.2f}")

print(f"\n👥 Speaker Diarization (first 5 words):")
for w in words[:5]:
    speaker = getattr(w, "speaker", None)
    if speaker is not None:
        print(f"  Speaker {int(speaker)}: '{w.word}'")

meta = response.metadata
print(f"\n📊 Metadata: duration={meta.duration:.2f}s channels={int(meta.channels)} model={get_model_name(meta)}")

We install the Deepgram SDK and its dependencies, then securely set up authentication using our API key. We initialize both synchronous and asynchronous Deepgram clients, download a sample audio file, and define helper functions that make it easier to work with mixed response objects, audio bytes, model metadata, and streamed TTS outputs. We then run our first pre-recorded transcription from a URL and inspect the transcript, confidence score, word-level timestamps, speaker diarization, and metadata to understand the structure and richness of the response.
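Word-level timestamps and speaker labels become easier to read once consecutive words from the same speaker are merged into turns. The following is a minimal sketch of that idea, not part of the SDK: `Turn` and `group_speaker_turns` are hypothetical names, and the words are plain dicts written by hand whose keys (`word`, `start`, `end`, `speaker`) mirror the attributes inspected above. With real response objects you would read the same fields through attribute access instead.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Turn:
    speaker: int
    start: float
    end: float
    text: str


def group_speaker_turns(words: Iterable[dict]) -> List[Turn]:
    """Merge consecutive words from the same speaker into one turn."""
    turns: List[Turn] = []
    for w in words:
        speaker = int(w.get("speaker", 0))
        if turns and turns[-1].speaker == speaker:
            # Same speaker keeps talking: extend the current turn.
            last = turns[-1]
            last.end = w["end"]
            last.text += " " + w["word"]
        else:
            # Speaker changed (or first word): start a new turn.
            turns.append(Turn(speaker, w["start"], w["end"], w["word"]))
    return turns


# Hand-written sample data (an assumption for illustration, not real API output).
sample_words = [
    {"word": "hello", "start": 0.0, "end": 0.4, "speaker": 0},
    {"word": "there", "start": 0.4, "end": 0.8, "speaker": 0},
    {"word": "hi", "start": 1.0, "end": 1.2, "speaker": 1},
    {"word": "again", "start": 1.5, "end": 1.9, "speaker": 0},
]

for turn in group_speaker_turns(sample_words):
    print(f"Speaker {turn.speaker} [{turn.start:.1f}s-{turn.end:.1f}s]: {turn.text}")
```

Because a turn closes only when the speaker label changes, a speaker who returns later gets a new turn rather than being merged with their earlier one.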

print("\n" + "="*60)
print("📂 SECTION 3: Pre-Recorded Transcription from File")
print("="*60)

file_response = client.listen.v1.media.transcribe_file(
    request=read_audio(),
    model="nova-3",
    smart_format=True,
    diarize=True,
    paragraphs=True,
    summarize="v2",
)

alt = file_response.results.channels[0].alternatives[0]
paragraphs = getattr(alt, "paragraphs", None)

if paragraphs and _get(paragraphs, "paragraphs"):
    print("\n📄 Paragraph-Formatted Transcript:")
    for para in _get(paragraphs, "paragraphs")[:2]:
        sentences = " ".join(_get(s, "text", "") for s in (_get(para, "sentences") or []))
        print(f"  [Speaker {int(_get(para,'speaker',0))}, "
              f"{_get(para,'start',0):.1f}s–{_get(para,'end',0):.1f}s] {sentences[:120]}...")
else:
    print(f"\n📝 Transcript: {alt.transcript[:200]}...")

if getattr(file_response.results, "summary", None):
    short = _get(file_response.results.summary, "short", "")
    if short:
        print(f"\n📌 AI Summary: {short}")

print(f"\n🎯 Confidence: {alt.confidence:.2%}")
print(f"🔤 Word count  : {len(alt.words)}")

print("\n" + "="*60)
print("⚡ SECTION 4: Async Parallel Transcription")
print("="*60)

async def transcribe_async():
    audio_bytes = read_audio()

    async def from_url(label):
        r = await async_client.listen.v1.media.transcribe_url(
            url=AUDIO_URL, model="nova-3", smart_format=True,
        )
        print(f"  [{label}] {r.results.channels[0].alternatives[0].transcript[:100]}...")

    async def from_file(label):
        r = await async_client.listen.v1.media.transcribe_file(
            request=audio_bytes, model="nova-3", smart_format=True,
        )
        print(f"  [{label}] {r.results.channels[0].alternatives[0].transcript[:100]}...")

    # Run both requests concurrently.
    await asyncio.gather(from_url("From URL"), from_file("From File"))

# Top-level await works in notebooks; in a plain script use asyncio.run(transcribe_async()).
await transcribe_async()

We move from URL-based to file-based transcription by sending raw audio bytes directly to the Deepgram API, enabling richer options such as paragraphs and summarization. We inspect the returned paragraph structure, speaker segmentation, summary output, confidence score, and word count to see how the SDK supports more readable and analysis-friendly transcription results. We also introduce asynchronous processing and run URL-based and file-based transcription concurrently, which shows how to build faster, more scalable voice AI pipelines.
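When more than a couple of requests run concurrently, it usually helps to cap how many are in flight and to collect failures instead of letting the first one abort the batch. The sketch below illustrates that pattern with the standard library only. `fake_transcribe` and `run_all` are hypothetical stand-ins that sleep rather than call any API, and the limit of two is an arbitrary assumption.

```python
import asyncio
import time


async def fake_transcribe(label: str, delay: float, limit: asyncio.Semaphore) -> str:
    """Stand-in for a network call; sleeps instead of contacting any API."""
    async with limit:  # wait here if too many calls are already running
        await asyncio.sleep(delay)
        return f"{label}: done"


async def run_all() -> list:
    limit = asyncio.Semaphore(2)  # at most two simulated requests in flight
    jobs = [
        fake_transcribe("url", 0.2, limit),
        fake_transcribe("file", 0.2, limit),
    ]
    # With return_exceptions=True, exceptions are returned in the result list
    # instead of being raised, so every job's outcome can be inspected.
    return await asyncio.gather(*jobs, return_exceptions=True)


started = time.perf_counter()
results = asyncio.run(run_all())
elapsed = time.perf_counter() - started
print(results, f"{elapsed:.2f}s")
```

`asyncio.gather` returns results in the order the awaitables were passed, regardless of which finishes first. Inside a notebook, where an event loop is already running, `await run_all()` replaces the `asyncio.run(...)` call.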

print("\n" + "="*60)
print("🔊 SECTION 5: Text-to-Speech")
print("="*60)

sample_text = (
    "Welcome to the Deepgram advanced tutorial. "
    "This SDK lets you transcribe audio, generate speech, "
    "and analyse text, all with a simple Python interface."
)

tts_path = save_tts(
    client.speak.v1.audio.generate(text=sample_text, model="aura-2-asteria-en"),
    "/tmp/tts_output.mp3",
)

size_kb = os.path.getsize(tts_path) / 1024
print(f"✅ TTS audio saved → {tts_path} ({size_kb:.1f} KB)")
display(Audio(tts_path))

print("\n" + "="*60)
print("🎭 SECTION 6: Multiple TTS Voices Comparison")
print("="*60)

voices = {
    "aura-2-asteria-en": "Asteria (female, warm)",
    "aura-2-orion-en": "Orion (male, deep)",
    "aura-2-luna-en": "Luna (female, bright)",
}

for model_id, label in voices.items():
    try:
        path = save_tts(
            client.speak.v1.audio.generate(text="Hello! I'm a Deepgram voice model.", model=model_id),
            f"/tmp/tts_{model_id}.mp3",
        )
        print(f"  ✅ {label}")
        display(Audio(path))
    except Exception as e:
        print(f"  ⚠️ {label}: {e}")

print("\n" + "="*60)
print("🧠 SECTION 7: Text Intelligence: Sentiment, Topics, Intents")
print("="*60)

review_text = (
    "I absolutely love this product! It arrived quickly, the quality is "
    "excellent, and customer support was extremely helpful when I had "
    "a question. I would definitely recommend it to anyone looking for "
    "a reliable solution. 5 stars!"
)

read_response = client.read.v1.text.analyze(
    request={"text": review_text},
    language="en",
    sentiment=True,
    topics=True,
    intents=True,
    summarize=True,
)

results = read_response.results

We focus on speech generation by converting text to audio using Deepgram's text-to-speech API and saving the resulting audio as an MP3 file. We then compare multiple TTS voices to hear how different voice models behave and how easily we can switch between them while keeping the same code pattern. After that, we begin working with the Read API by passing the review text into Deepgram's text intelligence system to analyze language beyond simple transcription.
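The `save_tts` helper joins every chunk in memory before writing. For long passages, an alternative is to write each chunk to disk as it arrives. The sketch below shows that variant under stated assumptions: `write_chunks` and `fake_audio_stream` are hypothetical names, and the generator yields placeholder bytes rather than real encoded audio.

```python
import os
import tempfile
from typing import Iterable


def write_chunks(chunks: Iterable[object], path: str) -> int:
    """Write byte chunks to disk one at a time and return the bytes written."""
    total = 0
    with open(path, "wb") as f:
        for chunk in chunks:
            if not isinstance(chunk, (bytes, bytearray)):
                continue  # skip anything that is not raw bytes
            f.write(chunk)
            total += len(chunk)
    return total


def fake_audio_stream():
    """Stand-in generator; a real TTS call would yield encoded audio."""
    yield b"ID3"
    yield b"\x00" * 5
    yield None  # non-bytes item, skipped by write_chunks
    yield b"end"


out_path = os.path.join(tempfile.gettempdir(), "chunk_demo.bin")
written = write_chunks(fake_audio_stream(), out_path)
print(written, os.path.getsize(out_path))
```

Writing incrementally keeps memory use bounded by the chunk size, at the cost of leaving a partial file behind if the stream fails midway.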

if getattr(results, "sentiments", None):
    overall = results.sentiments.average
    print(f"😊 Sentiment: {_get(overall,'sentiment','?').upper()} "
          f"(score={_get(overall,'sentiment_score',0):.3f})")
    for seg in (_get(results.sentiments, "segments") or [])[:2]:
        print(f"  • \"{_get(seg,'text','')[:60]}\" → {_get(seg,'sentiment','?')}")

if getattr(results, "topics", None):
    print(f"\n🏷️ Topics Detected:")
    for seg in (_get(results.topics, "segments") or [])[:3]:
        for t in (_get(seg, "topics") or []):
            print(f"  • {_get(t,'topic','?')} (conf={_get(t,'confidence_score',0):.2f})")

if getattr(results, "intents", None):
    print(f"\n🎯 Intents Detected:")
    for seg in (_get(results.intents, "segments") or [])[:3]:
        for intent in (_get(seg, "intents") or []):
            print(f"  • {_get(intent,'intent','?')} (conf={_get(intent,'confidence_score',0):.2f})")

if getattr(results, "summary", None):
    text = _get(results.summary, "text", "")
    if text:
        print(f"\n📌 Summary: {text}")

print("\n" + "="*60)
print("⚙️ SECTION 8: Advanced Options: Search, Replace, Boost")
print("="*60)

search_response = client.listen.v1.media.transcribe_url(
    url=AUDIO_URL,
    model="nova-3",
    smart_format=True,
    punctuate=True,
    search=["spacewalk", "mission", "astronaut"],
    replace=[{"find": "um", "replace": "[hesitation]"}],
    keyterm=["spacewalk", "NASA"],
)

ch = search_response.results.channels[0]
if getattr(ch, "search", None):
    print("🔍 Keyword Search Hits:")
    for hit_group in ch.search:
        hits = _get(hit_group, "hits") or []
        print(f"  '{_get(hit_group,'query','?')}': {len(hits)} hit(s)")
        for h in hits[:2]:
            print(f"    at {_get(h,'start',0):.2f}s–{_get(h,'end',0):.2f}s "
                  f"conf={_get(h,'confidence',0):.2f}")

print(f"\n📝 Transcript:\n{textwrap.fill(ch.alternatives[0].transcript, 80)}")

print("\n" + "="*60)
print("🔩 SECTION 9: Raw HTTP Response Access")
print("="*60)

raw = client.listen.v1.media.with_raw_response.transcribe_url(
    url=AUDIO_URL, model="nova-3",
)

print(f"Response type : {type(raw.data).__name__}")
request_id = raw.headers.get("dg-request-id", raw.headers.get("x-dg-request-id", "n/a"))
print(f"Request ID    : {request_id}")

We continue with text intelligence and inspect sentiment, topics, intents, and summary outputs from the analyzed text to understand how Deepgram structures higher-level language insights. We then explore advanced transcription options, such as search terms, word replacement, and keyterm boosting, to make transcription more targeted and useful for domain-specific applications. Finally, we access the raw HTTP response and its headers, including the request ID, which gives a lower-level view of the API interaction and makes debugging and observability easier.
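Search results come back grouped by query, each with a list of hits carrying a confidence and a time range. A common follow-up is to keep only the strongest hit per term. Here is a small sketch of that step on hand-written, dict-shaped data that mirrors the fields printed in Section 8 (`query`, `hits`, `confidence`, `start`, `end`). `best_hits` and the 0.5 threshold are assumptions for illustration, not SDK features.

```python
from typing import Dict, List, Optional


def best_hits(search_groups: List[dict], min_conf: float = 0.5) -> Dict[str, Optional[dict]]:
    """Map each query to its highest-confidence hit at or above min_conf, else None."""
    best: Dict[str, Optional[dict]] = {}
    for group in search_groups:
        # Drop hits below the confidence threshold before choosing the best one.
        hits = [h for h in group.get("hits", []) if h.get("confidence", 0.0) >= min_conf]
        best[group.get("query", "?")] = max(hits, key=lambda h: h["confidence"], default=None)
    return best


# Hand-written sample data (not real API output).
sample_search = [
    {"query": "spacewalk", "hits": [
        {"confidence": 0.62, "start": 3.1, "end": 3.7},
        {"confidence": 0.91, "start": 12.4, "end": 13.0},
    ]},
    {"query": "astronaut", "hits": [
        {"confidence": 0.31, "start": 20.0, "end": 20.6},
    ]},
]

top = best_hits(sample_search)
for query, hit in top.items():
    if hit is None:
        print(f"{query}: no confident match")
    else:
        print(f"{query}: {hit['start']:.1f}s-{hit['end']:.1f}s (conf={hit['confidence']:.2f})")
```

Returning `None` for terms without a confident match keeps every query in the result, so callers can tell "searched but not found" apart from "never searched".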

print("\n" + "="*60)
print("🛡️ SECTION 10: Error Handling")
print("="*60)

def safe_transcribe(url: str, model: str = "nova-3"):
    try:
        r = client.listen.v1.media.transcribe_url(
            url=url, model=model,
            request_options={"timeout_in_seconds": 30, "max_retries": 2},
        )
        return r.results.channels[0].alternatives[0].transcript
    except ApiError as e:
        print(f"  ❌ ApiError {e.status_code}: {e.body}")
        return None
    except Exception as e:
        print(f"  ❌ {type(e).__name__}: {e}")
        return None

t = safe_transcribe(AUDIO_URL)
if t is not None:  # safe_transcribe returns None on failure
    print(f"✅ Valid URL → '{t[:60]}...'")

t_bad = safe_transcribe("https://example.com/nonexistent_audio.wav")
if t_bad is None:
    print("✅ Invalid URL → error caught gracefully")

print("\n" + "="*60)
print("🎉 Tutorial complete! Sections covered:")
for s in [
    "2. transcribe_url(url=...) + diarization + word timing",
    "3. transcribe_file(request=bytes) + paragraphs + summarize",
    "4. Async parallel transcription",
    "5. Text-to-Speech: generator-safe via save_tts()",
    "6. Multi-voice TTS comparison",
    "7. Text Intelligence: sentiment, topics, intents (dict-safe)",
    "8. Advanced options: keyword search, word replacement, boosting",
    "9. Raw HTTP response & request ID",
    "10. Error handling with ApiError + retries",
]:
    print(f"  ✅ {s}")
print("="*60)

We build a safe transcription wrapper that adds timeout and retry controls while gracefully handling API-specific and general exceptions. We test the function with both a valid and an invalid audio URL to confirm that our workflow behaves reliably even when requests fail. We end the tutorial by printing a complete summary of all covered sections, which helps us review the full Deepgram pipeline from transcription and TTS to text intelligence, advanced options, raw responses, and error handling.
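The wrapper above delegates retries to the SDK through `request_options`. When retry policy needs to live in application code instead (for example, to wrap several calls in one policy), exponential backoff with jitter is a common choice. The following is a generic sketch, not Deepgram-specific: `TransientError`, `with_retries`, and `flaky` are hypothetical names, and deciding which real exceptions count as transient is left as an assumption.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. timeouts, HTTP 429/5xx)."""


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying TransientError with exponential backoff plus jitter."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            delay = base_delay * (2 ** attempt)  # 0.5s, 1s, 2s, ...
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries
    raise ValueError("attempts must be at least 1")


calls = {"n": 0}


def flaky() -> str:
    """Fails twice, then succeeds; simulates a briefly unavailable service."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("temporary failure")
    return "transcript"


delays = []
outcome = with_retries(flaky, sleep=delays.append)  # record delays instead of sleeping
print(outcome, calls["n"], len(delays))
```

Passing `sleep` as a parameter keeps the helper testable: the demo records the computed delays rather than actually waiting. Non-transient exceptions propagate immediately, which matches the tutorial's split between `ApiError` and general exceptions.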

In conclusion, we established a complete and practical understanding of how to use the Deepgram Python SDK for advanced voice and language workflows. We performed high-quality transcription and text-to-speech generation, and we also learned to extract deeper value from audio and text through metadata inspection, summarization, sentiment analysis, topic detection, intent recognition, async execution, and request-level debugging. This makes the tutorial more than a basic SDK walkthrough, because we connected multiple capabilities into a unified pipeline that reflects how production-ready voice AI systems are often built. We also saw how the SDK supports both ease of use and advanced control, enabling us to move from simple examples to richer, more resilient implementations. In the end, we came away with a strong foundation for building transcription tools, speech interfaces, audio intelligence systems, and other real-world applications powered by Deepgram.
