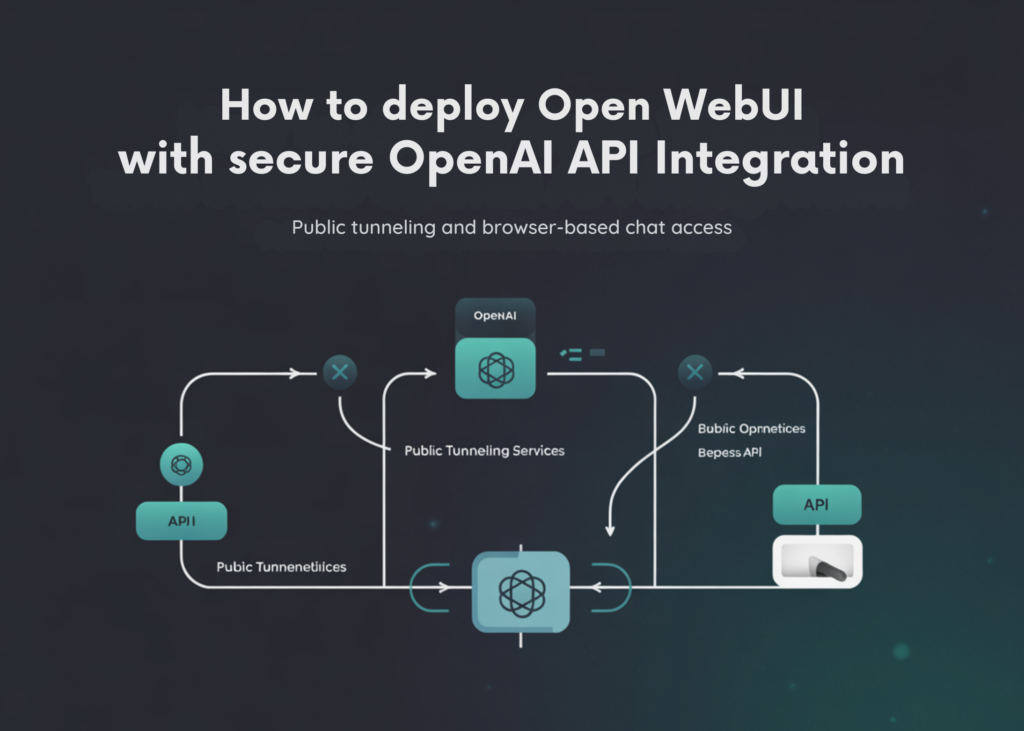

In this tutorial, we build a complete Open WebUI setup in Colab in a practical, hands-on way, using Python. We begin by installing the required dependencies, then securely provide our OpenAI API key through terminal-based secret input so that sensitive credentials are not exposed directly in the notebook. From there, we configure the environment variables needed for Open WebUI to communicate with the OpenAI API, define a default model, prepare a data directory for runtime storage, and launch the Open WebUI server inside the Colab environment. To make the interface accessible outside the notebook, we also create a public tunnel and capture a shareable URL that lets us open and use the application directly in the browser. Through this process, we get Open WebUI running end-to-end and understand how the key pieces of deployment, configuration, access, and runtime management fit together in a Colab-based workflow.

    import os
    import re
    import time
    import json
    import shutil
    import signal
    import secrets
    import subprocess
    import urllib.request
    from getpass import getpass
    from pathlib import Path

    # Install Open WebUI and the helper packages used later in the notebook.
    print("Installing Open WebUI and helper packages...")
    subprocess.check_call([
        "python", "-m", "pip", "install", "-q",
        "open-webui",
        "requests",
        "nest_asyncio"
    ])

    # getpass hides the key while it is typed, so it does not appear in the cell output.
    print("\nEnter your OpenAI API key securely.")
    openai_api_key = getpass("OpenAI API Key: ").strip()
    if not openai_api_key:
        raise ValueError("OpenAI API key cannot be empty.")

    # Pressing Enter without typing anything selects the default model.
    default_model = input("Default model to use inside Open WebUI [gpt-4o-mini]: ").strip()
    if not default_model:
        default_model = "gpt-4o-mini"

We begin by importing all the required Python modules for managing system operations, securing input, handling file paths, running subprocesses, and accessing the network. We then install Open WebUI and the supporting packages needed to run the application smoothly inside Google Colab. After that, we securely enter our OpenAI API key through terminal input and define the default model that we want Open WebUI to use.
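The secure-input step can be made a little more flexible. The sketch below is a variation that is not part of the original notebook: a hypothetical helper, `resolve_api_key`, first looks for an `OPENAI_API_KEY` environment variable and falls back to a hidden prompt only when none is set. A second hypothetical helper, `mask_secret`, shows one way to confirm which key was entered without printing it in full. The `env` and `prompt` parameters are assumptions added so the function can be exercised without a terminal.

```python
import os
from getpass import getpass
from typing import Callable, Mapping, Optional


def resolve_api_key(
    env: Optional[Mapping[str, str]] = None,
    prompt: Callable[[str], str] = getpass,
    var_name: str = "OPENAI_API_KEY",
) -> str:
    """Return an API key from the environment, or prompt for one (hypothetical helper)."""
    env = os.environ if env is None else env
    key = env.get(var_name, "").strip()
    if not key:
        # Fall back to hidden input so the key never appears in the notebook output.
        key = prompt("OpenAI API Key: ").strip()
    if not key:
        raise ValueError("OpenAI API key cannot be empty.")
    return key


def mask_secret(value: str, visible: int = 4) -> str:
    """Show only the last few characters of a secret for safe logging."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]
```

Calling `print(mask_secret(resolve_api_key()))` would then show only the last four characters of whatever key was supplied.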

    # Connection settings that Open WebUI reads at startup.
    os.environ["ENABLE_OPENAI_API"] = "True"
    os.environ["OPENAI_API_KEY"] = openai_api_key
    os.environ["OPENAI_API_BASE_URL"] = "https://api.openai.com/v1"

    # A fresh random secret for this session, plus the display name and default model.
    os.environ["WEBUI_SECRET_KEY"] = secrets.token_hex(32)
    os.environ["WEBUI_NAME"] = "Open WebUI on Colab"
    os.environ["DEFAULT_MODELS"] = default_model

    # Runtime data lives under /content, which lasts only as long as the Colab runtime.
    data_dir = Path("/content/open-webui-data")
    data_dir.mkdir(parents=True, exist_ok=True)
    os.environ["DATA_DIR"] = str(data_dir)

We configure the environment variables that allow Open WebUI to connect properly with the OpenAI API. We store the API key, define the OpenAI base endpoint, generate a secret key for the web interface, and assign a default model and interface name for the session. We also create a dedicated data directory in the Colab environment so that Open WebUI has a structured location to store its runtime data.
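As an alternative to mutating `os.environ` in place, the same settings can be collected into a separate dictionary and handed to the server process through the `env` argument of `subprocess.Popen`. The sketch below assumes the variable names used in this tutorial; the helper name `build_webui_env` and its parameters are hypothetical.

```python
import secrets
from pathlib import Path
from typing import Dict, Mapping


def build_webui_env(
    base_env: Mapping[str, str],
    api_key: str,
    default_model: str,
    data_dir: Path,
) -> Dict[str, str]:
    """Return a copy of base_env with this tutorial's Open WebUI settings added."""
    data_dir.mkdir(parents=True, exist_ok=True)
    env = dict(base_env)  # copy so the caller's environment is not mutated
    env.update({
        "ENABLE_OPENAI_API": "True",
        "OPENAI_API_KEY": api_key,
        "OPENAI_API_BASE_URL": "https://api.openai.com/v1",
        "WEBUI_SECRET_KEY": secrets.token_hex(32),  # 32 random bytes, 64 hex characters
        "WEBUI_NAME": "Open WebUI on Colab",
        "DEFAULT_MODELS": default_model,
        "DATA_DIR": str(data_dir),
    })
    return env
```

One possible benefit of this design is that the API key stays scoped to the child process instead of remaining in the notebook's own environment for every later cell.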

    # Download the cloudflared binary once and make it executable.
    cloudflared_path = Path("/content/cloudflared")
    if not cloudflared_path.exists():
        print("\nDownloading cloudflared...")
        url = "https://github.com/cloudflare/cloudflared/releases/latest/download/cloudflared-linux-amd64"
        urllib.request.urlretrieve(url, cloudflared_path)
        cloudflared_path.chmod(0o755)

    # Start the server in the background and send all of its output to a log file.
    print("\nStarting Open WebUI server...")
    server_log = open("/content/open-webui-server.log", "w")
    server_proc = subprocess.Popen(
        ["open-webui", "serve"],
        stdout=server_log,
        stderr=subprocess.STDOUT,
        env=os.environ.copy()
    )

We prepare the tunnel component by downloading the cloudflared binary if it is not already available in the Colab environment. Once that is ready, we start the Open WebUI server and direct its output into a log file so that we can inspect its behavior if needed. This part of the tutorial sets up the core application process that powers the browser-based interface.
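The pattern of launching a background process with its output redirected to a log file can be tried in isolation. The following is a minimal sketch: `start_logged_process` and `run_demo` are hypothetical names, and a short-lived Python command stands in for `open-webui serve` so the example finishes quickly.

```python
import subprocess
import sys
from pathlib import Path
from typing import IO, List, Tuple


def start_logged_process(cmd: List[str], log_path: Path) -> Tuple[subprocess.Popen, IO[str]]:
    """Start cmd in the background, sending stdout and stderr to log_path."""
    log_file = open(log_path, "w")
    try:
        proc = subprocess.Popen(cmd, stdout=log_file, stderr=subprocess.STDOUT)
    except Exception:
        log_file.close()  # do not leak the handle if the command cannot be started
        raise
    return proc, log_file


def run_demo(log_path: Path) -> str:
    """Run a short-lived stand-in command and return what it logged."""
    cmd = [sys.executable, "-c", "import sys; print('out'); print('err', file=sys.stderr)"]
    proc, log_file = start_logged_process(cmd, log_path)
    proc.wait()
    log_file.close()
    return log_path.read_text()
```

Because `stderr=subprocess.STDOUT` merges both streams, error messages and regular output end up in the same file, which is what makes the log useful for diagnosing a failed start.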

    local_url = "http://127.0.0.1:8080"
    ready = False

    # Poll the local port for up to 120 attempts, two seconds apart.
    for _ in range(120):
        try:
            import requests
            r = requests.get(local_url, timeout=2)
            if r.status_code < 500:
                ready = True
                break
        except Exception:
            # Connection errors are expected while the server is still starting.
            pass
        time.sleep(2)

    if not ready:
        server_log.close()
        with open("/content/open-webui-server.log", "r") as f:
            logs = f.read()[-4000:]
        raise RuntimeError(
            "Open WebUI did not start successfully.\n\n"
            "Recent logs:\n"
            f"{logs}"
        )

    print("Open WebUI is running locally at:", local_url)

    # Expose the local server through a cloudflared tunnel.
    print("\nCreating public tunnel...")
    tunnel_proc = subprocess.Popen(
        [str(cloudflared_path), "tunnel", "--url", local_url, "--no-autoupdate"],
        stdout=subprocess.PIPE,
        stderr=subprocess.STDOUT,
        text=True
    )

We repeatedly check whether the Open WebUI server has started successfully on the local Colab port, polling up to 120 times at two-second intervals. If the server does not start properly, we read the recent logs and raise a clear error so that we can understand what went wrong. Once the server is confirmed to be running, we create a public tunnel to make the local interface accessible from outside Colab.
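The readiness check follows a general poll-until-ready pattern that can be factored into a small reusable function. The sketch below is one possible shape for it, not the notebook's own code: `wait_until_ready` is a hypothetical helper, and the injectable `sleep` parameter is an assumption added so the loop can be exercised without real delays.

```python
import time
from typing import Callable


def wait_until_ready(
    check: Callable[[], bool],
    attempts: int = 120,
    delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call check() up to `attempts` times; return True as soon as it succeeds."""
    for attempt in range(attempts):
        try:
            if check():
                return True
        except Exception:
            # Failures are expected while the service is still starting.
            pass
        if attempt < attempts - 1:
            sleep(delay)  # no need to wait after the final attempt
    return False
```

In this tutorial's setting, `check` could be something like `lambda: requests.get(local_url, timeout=2).status_code < 500`, which mirrors the condition used in the loop above.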

    public_url = None
    start_time = time.time()

    # Read the tunnel output line by line until a trycloudflare.com URL appears.
    while time.time() - start_time < 90:
        line = tunnel_proc.stdout.readline()
        if not line:
            time.sleep(1)
            continue
        match = re.search(r"https://[-a-zA-Z0-9]+\.trycloudflare\.com", line)
        if match:
            public_url = match.group(0)
            break

    if not public_url:
        with open("/content/open-webui-server.log", "r") as f:
            server_logs = f.read()[-3000:]
        raise RuntimeError(
            "Tunnel started but no public URL was captured.\n\n"
            "Open WebUI server logs:\n"
            f"{server_logs}"
        )

    print("\n" + "=" * 80)
    print("Open WebUI is ready.")
    print("Public URL:", public_url)
    print("Local URL :", local_url)
    print("=" * 80)

    print("\nWhat to do next:")
    print("1. Open the Public URL.")
    print("2. Create your admin account the first time you open it.")
    print("3. Go to the model selector and choose:", default_model)
    print("4. Start chatting with OpenAI through Open WebUI.")

    print("\nUseful notes:")
    print("- Your OpenAI API key was passed through environment variables.")
    print("- Data persists only for the current Colab runtime unless you mount Drive.")
    print("- If the tunnel stops, rerun the cell.")

    def tail_open_webui_logs(lines=80):
        """Print the last `lines` lines of the server log."""
        log_path = "/content/open-webui-server.log"
        if not os.path.exists(log_path):
            print("No server log found.")
            return
        with open(log_path, "r") as f:
            content = f.readlines()
        print("".join(content[-lines:]))

    def stop_open_webui():
        """Terminate the tunnel and the server, then close the log file."""
        global server_proc, tunnel_proc, server_log
        for proc in [tunnel_proc, server_proc]:
            try:
                if proc and proc.poll() is None:
                    proc.terminate()
            except Exception:
                pass
        try:
            server_log.close()
        except Exception:
            pass
        print("Stopped Open WebUI and tunnel.")

    print("\nHelpers available:")
    print("- tail_open_webui_logs()")
    print("- stop_open_webui()")

We capture the public tunnel URL and print the final access details so that we can open Open WebUI directly in the browser. We also display the next steps for using the interface, including creating an admin account and selecting the configured model. In addition, we define helper functions for checking logs and stopping the running processes, which makes the overall setup easier for us to manage and reuse.
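The URL capture and the log tail are both small text-processing steps that can be separated from the running processes and tried on plain strings. The sketch below assumes the same `trycloudflare.com` pattern the tutorial searches for; `find_tunnel_url` and `tail` are hypothetical helper names.

```python
import re
from collections import deque
from typing import Iterable, List, Optional

# Escaping the dots keeps "." from matching arbitrary characters.
TUNNEL_URL_RE = re.compile(r"https://[-a-zA-Z0-9]+\.trycloudflare\.com")


def find_tunnel_url(lines: Iterable[str]) -> Optional[str]:
    """Return the first trycloudflare.com URL found in lines, or None."""
    for line in lines:
        match = TUNNEL_URL_RE.search(line)
        if match:
            return match.group(0)
    return None


def tail(lines: Iterable[str], count: int = 80) -> List[str]:
    """Keep only the last `count` lines without holding the whole input in memory."""
    return list(deque(lines, maxlen=count))
```

Passing an open file object to `tail` works because files iterate line by line, so a long log does not have to be read in full the way `readlines()` requires.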

In conclusion, we created a fully functional Open WebUI deployment on Colab and connected it to OpenAI in a secure, structured manner. We installed the application and its supporting packages, provided authentication details via protected input, configured the backend connection to the OpenAI API, and started the local web server powering the interface. We then exposed that server through a public tunnel, making the application usable through a browser without requiring local installation on our machine. In addition, we included helper functions for viewing logs and stopping the running services, which makes the setup easier to manage and troubleshoot during experimentation. Overall, we established a reusable, practical workflow that helps us quickly spin up Open WebUI in Colab, test OpenAI-powered chat interfaces, and reuse the same foundation for future prototyping, demos, and interface-driven AI projects.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.