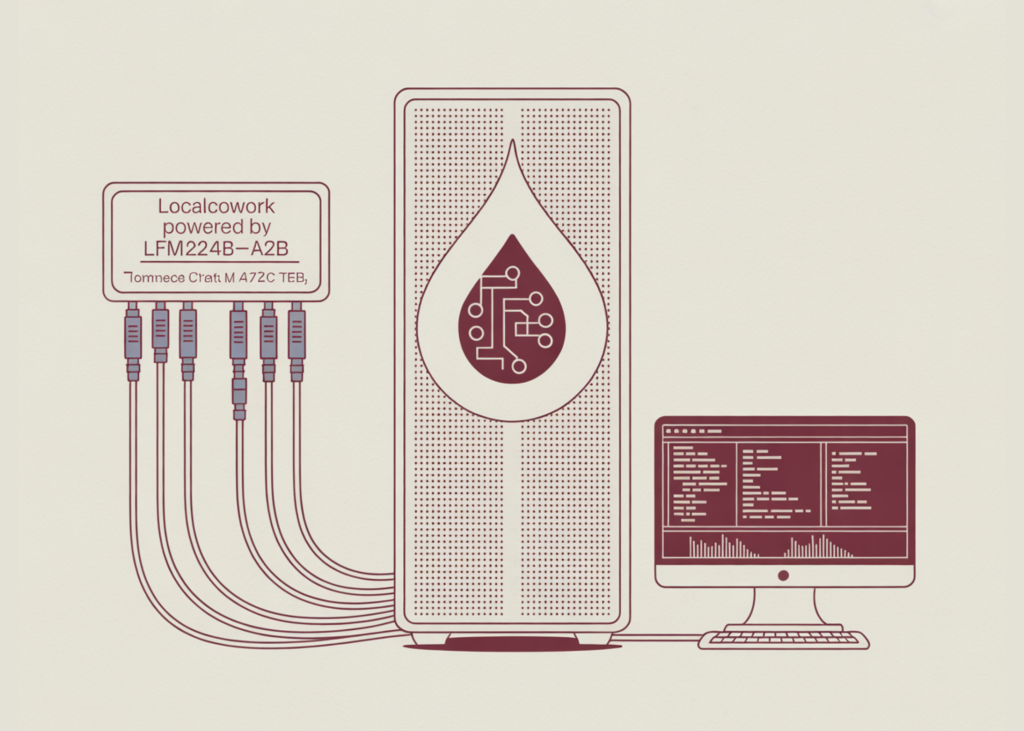

Liquid AI has released LFM2-24B-A2B, a model optimized for local, low-latency tool dispatch, alongside LocalCowork, an open-source desktop agent application available in their Liquid4All GitHub Cookbook. The release provides a deployable architecture for running enterprise workflows entirely on-device, eliminating API calls and data egress for privacy-sensitive environments.

Architecture and Serving Configuration

To achieve low-latency execution on consumer hardware, LFM2-24B-A2B uses a Sparse Mixture-of-Experts (MoE) architecture. While the model contains 24 billion parameters in total, it activates only roughly 2 billion parameters per token during inference.
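The ratio between active and total parameters can be made concrete with a short calculation. The sketch below uses the 24 billion and 2 billion figures from the article; the bits-per-weight value is an assumption (a rough figure for 4-bit K-quant GGUF files, which varies by model), included only to illustrate that sparse activation reduces per-token compute while the full set of weights still has to sit in memory.

```python
# Back-of-the-envelope numbers for a sparse MoE model.
TOTAL_PARAMS = 24e9    # total parameters (from the article)
ACTIVE_PARAMS = 2e9    # parameters activated per token (approximate, from the article)

# ASSUMPTION: average bits per weight for a 4-bit K-quant file; real files vary.
ASSUMED_BITS_PER_WEIGHT = 4.85


def active_fraction(total: float, active: float) -> float:
    """Share of the weights that participate in one token's forward pass."""
    return active / total


def weight_memory_gb(total: float, bits_per_weight: float) -> float:
    """Approximate size of the stored weights in decimal gigabytes."""
    return total * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    print(f"active fraction: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.1%}")
    print(f"weights: ~{weight_memory_gb(TOTAL_PARAMS, ASSUMED_BITS_PER_WEIGHT):.2f} GB")
```

Under these assumptions, roughly one twelfth of the weights are used per token, and the stored weights alone come to about 14.5 GB. Actual memory use also depends on context length and runtime buffers, which this sketch ignores.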

This design allows the model to maintain a broad knowledge base while significantly reducing the computational overhead required for each generation step. Liquid AI stress-tested the model using the following hardware and software stack:

- Hardware: Apple M4 Max, 36 GB unified memory, 32 GPU cores.

- Serving Engine: `llama-server` with flash attention enabled.

- Quantization: `Q4_K_M` GGUF format.

- Memory Footprint: ~14.5 GB of RAM.

- Hyperparameters: Temperature set to 0.1, top_p to 0.1, and max_tokens to 512 (optimized for deterministic, strict outputs).
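As an illustration of how the sampling settings above might be passed to a running server, here is a minimal sketch that builds a request for the OpenAI-compatible chat endpoint that `llama-server` exposes. The host, port, model label, and example prompt are assumptions for illustration, not values from the article, and this is not necessarily how LocalCowork itself talks to the model.

```python
import json
import urllib.request

# ASSUMPTION: llama-server is already running locally; host, port, and the
# model label below are placeholders, not values from the article.
SERVER_URL = "http://127.0.0.1:8080/v1/chat/completions"


def build_payload(user_prompt: str) -> dict:
    """Build a chat-completion request body with the reported sampling settings."""
    return {
        "model": "local-model",  # placeholder label
        "messages": [{"role": "user", "content": user_prompt}],
        "temperature": 0.1,
        "top_p": 0.1,
        "max_tokens": 512,
    }


def send(payload: dict, url: str = SERVER_URL, timeout: float = 30.0) -> dict:
    """POST the payload as JSON and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Only prints the request body; call send() once a server is listening.
    print(json.dumps(build_payload("List the PDF files in ~/Documents"), indent=2))
```

Low temperature and top_p values narrow sampling toward the most likely tokens, which suits tool selection, where the same prompt should map to the same tool call.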

LocalCowork Tool Integration

LocalCowork is a fully offline desktop AI agent that uses the Model Context Protocol (MCP) to execute pre-built tools without relying on cloud APIs or compromising data privacy, logging every action to a local audit trail. The system includes 75 tools across 14 MCP servers capable of handling tasks like filesystem operations, OCR, and security scanning. However, the provided demo focuses on a highly reliable, curated subset of 20 tools across 6 servers, each rigorously tested to achieve over 80% single-step accuracy and verified multi-step chain participation.

LocalCowork acts as the practical implementation of this model. It operates completely offline and comes pre-configured with a suite of enterprise-grade tools:

- File Operations: Listing, reading, and searching across the host filesystem.

- Security Scanning: Identifying leaked API keys and personally identifiable information (PII) within local directories.

- Document Processing: Executing Optical Character Recognition (OCR), parsing text, diffing contracts, and generating PDFs.

- Audit Logging: Recording every tool call locally for compliance tracking.
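To show roughly what a tool invocation and its audit record could look like, the sketch below builds an MCP `tools/call` request (MCP messages follow JSON-RPC 2.0) and appends a matching entry to a local JSON Lines file. The tool name, arguments, log file name, and record layout are hypothetical; LocalCowork's actual tool names and log format may differ.

```python
import json
import time
from pathlib import Path


def make_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request (MCP messages use JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def append_audit_record(log_path: Path, request: dict) -> dict:
    """Append one JSON line describing the tool call to a local log file."""
    record = {
        "timestamp": time.time(),
        "tool": request["params"]["name"],
        "arguments": request["params"]["arguments"],
    }
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    # HYPOTHETICAL tool name and arguments, for illustration only.
    call = make_tool_call(1, "list_directory", {"path": "."})
    append_audit_record(Path("audit.jsonl"), call)
    print(json.dumps(call))
```

An append-only, one-record-per-line file is a simple choice for a local audit trail because each tool call can be written immediately and later records never rewrite earlier ones.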

Performance Benchmarks

The Liquid AI team evaluated the model against a workload of 100 single-step tool selection prompts and 50 multi-step chains (requiring 3 to 6 discrete tool executions, such as searching a folder, running OCR, parsing data, deduplicating, and exporting).

Latency

The model averaged ~385 ms per tool-selection response. This sub-second dispatch time is well suited to interactive, human-in-the-loop applications where immediate feedback is important.

Accuracy

- Single-Step Executions: 80% accuracy.

- Multi-Step Chains: 26% end-to-end completion rate.
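One way to build intuition for the gap between these two numbers is to look at how per-step errors compound across a chain. The sketch below assumes every step succeeds independently with the same probability, which is a simplification and not an analysis taken from the benchmark itself.

```python
def chain_success(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming independence."""
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Chain lengths of 3 to 6 steps, matching the benchmark description.
    for steps in range(3, 7):
        print(f"{steps} steps: {chain_success(0.80, steps):.1%}")
```

With 80% per-step accuracy, this simple model gives about 51% for a 3-step chain and about 26% for a 6-step chain, so a large drop in end-to-end completion is expected even when individual steps look reliable.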

Key Takeaways

- Privacy-First Local Execution: LocalCowork operates entirely on-device without cloud API dependencies or data egress, making it well suited to regulated enterprise environments requiring strict data privacy.

- Efficient MoE Architecture: LFM2-24B-A2B uses a Sparse Mixture-of-Experts (MoE) design, activating only ~2 billion of its 24 billion parameters per token, allowing it to fit comfortably within a ~14.5 GB RAM footprint using `Q4_K_M` GGUF quantization.

- Sub-Second Latency on Consumer Hardware: When benchmarked on an Apple M4 Max laptop, the model achieves an average latency of ~385 ms for tool-selection dispatch, enabling highly interactive, real-time workflows.

- Standardized MCP Tool Integration: The agent uses the Model Context Protocol (MCP) to connect with local tools, including filesystem operations, OCR, and security scanning, while automatically logging all actions to a local audit trail.

- Strong Single-Step Accuracy with Multi-Step Limits: The model achieves 80% accuracy on single-step tool execution but drops to a 26% success rate on multi-step chains due to 'sibling confusion' (selecting a similar but incorrect tool), indicating it currently works best in a guided, human-in-the-loop workflow rather than as a fully autonomous agent.
