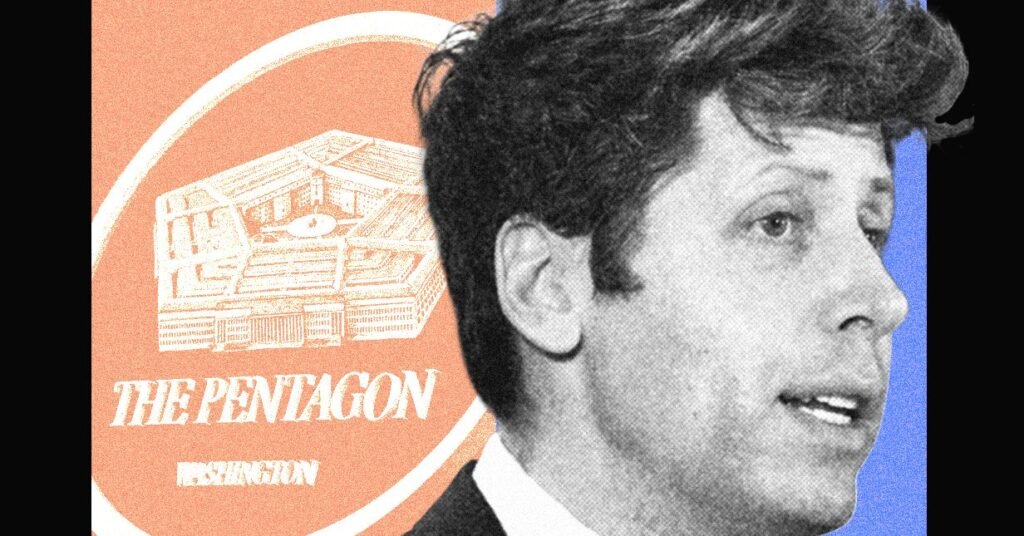

OpenAI CEO Sam Altman is still in the hot seat this week after his company signed a deal with the US military. OpenAI employees have criticized the move, which came after Anthropic’s roughly $200 million contract with the Pentagon imploded, and asked Altman to release more information about the agreement. Altman admitted it looked “sloppy” in a social media post.

While this incident has become a major news story, it may just be the latest and most public example of OpenAI creating vague policies around how the US military can access its AI.

In 2023, OpenAI’s usage policy explicitly banned the military from accessing its AI models. But some OpenAI employees discovered the Pentagon had already started experimenting with Azure OpenAI, a version of OpenAI’s models offered by Microsoft, two sources familiar with the matter said. At the time, Microsoft had been contracting with the Department of Defense for decades. It was also OpenAI’s largest investor, and had broad license to commercialize the startup’s technology.

That same year, OpenAI employees saw Pentagon officials walking through the company’s San Francisco offices, the sources said. They spoke on the condition of anonymity as they aren’t authorized to comment on private company matters.

Some OpenAI employees were wary about associating with the Pentagon, while others were simply confused about what OpenAI’s usage policies meant. Did the policy apply to Microsoft? While sources tell WIRED it was not clear to most employees at the time, spokespeople from OpenAI and Microsoft say Azure OpenAI products are not, and were not, subject to OpenAI’s policies.

“Microsoft has a product called the Azure OpenAI Service that became available to the US Government in 2023 and is subject to Microsoft terms of service,” said spokesperson Frank Shaw in a statement to WIRED. Microsoft declined to comment specifically on when it made Azure OpenAI available to the Pentagon, but notes the service was not approved for “top secret” government workloads until 2025.

“AI is already playing a significant role in national security and we believe it’s important to have a seat at the table to help ensure it’s deployed safely and responsibly,” OpenAI spokesperson Liz Bourgeois said in a statement. “We have been clear with our employees as we’ve approached this work, providing regular updates and dedicated channels where teams can ask questions and engage directly with our national security team.”

The Department of Defense didn’t respond to WIRED’s request for comment.

By January 2024, OpenAI updated its policies to remove the blanket ban on military use. Several OpenAI employees found out about the policy update through an article in The Intercept, sources say. Company leaders later addressed the change at an all-hands meeting, explaining how the company would tread carefully in this area moving forward.

In December 2024, OpenAI announced a partnership with Anduril to develop and deploy AI systems for “national security missions.” Ahead of the announcement, OpenAI told employees that the partnership was narrow in scope and would only deal with unclassified workloads, the same sources said. This stood in contrast to a deal Anthropic had signed with Palantir, which would see Anthropic’s AI used for classified military work.

Palantir approached OpenAI in the fall of 2024 to discuss participating in its “FedStart” program, an OpenAI spokesperson confirmed to WIRED. OpenAI ultimately turned it down, and told employees it would’ve been too high-risk, two sources familiar with the matter tell WIRED. However, OpenAI now works with Palantir in other ways.

Around the time the Anduril deal was announced, a few dozen OpenAI employees joined a public Slack channel to discuss their concerns about the company’s military partnerships, sources say and a spokesperson confirmed. Some believed the company’s models were too unreliable to handle a user’s credit card information, let alone assist Americans on the battlefield.