**The Ultimate Guide to Securing Your LLM: Building a Layered Defense Against Sneaky Attacks**

As AI models get smarter, the bad guys are getting smarter too – and that’s a problem! In this tutorial, I’m going to show you how to build a multi-layered security system that can detect and stop those pesky adaptive, paraphrased, and even adversarial attacks. We’ll use a combination of techniques that’ll make your LLM virtually unhackable.

**Getting Started**

Before we dive into the juicy stuff, let’s set up our Colab environment and install the necessary libraries. We’ll also secure our OpenAI API key using Colab Secrets, because you never know who might be snooping around.

**Building the Security Filter**

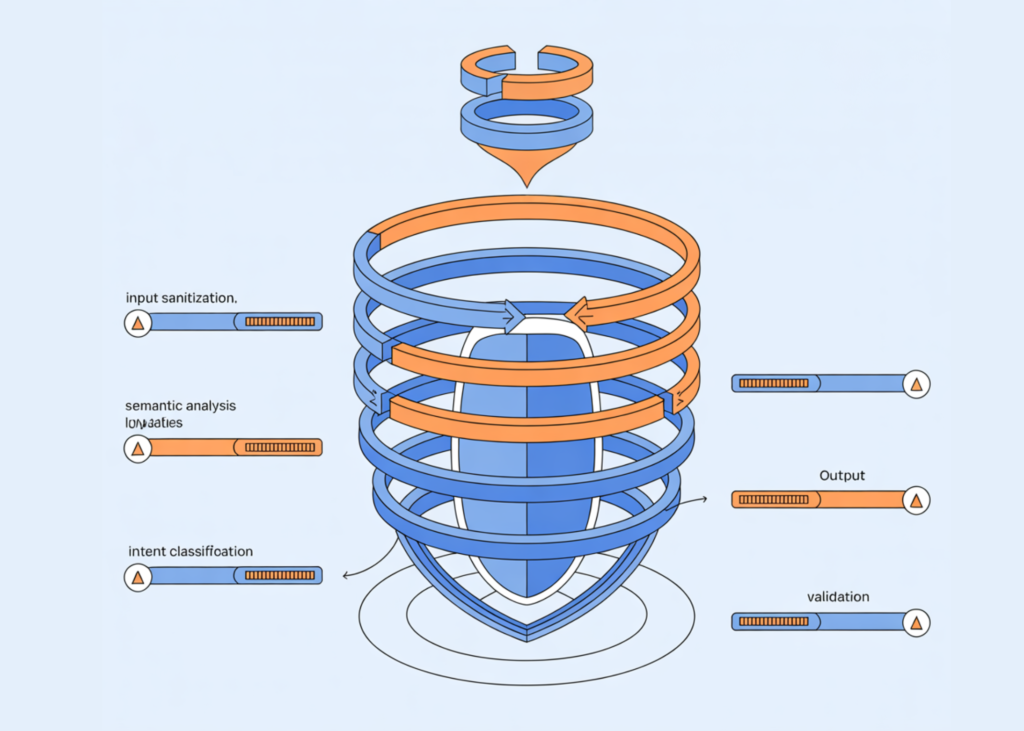

Once we have our environment set up, we’ll create a core security filter class and initialize our multi-layer protection structure. We’ll load sentence embeddings and prepare semantic representations of malicious intent patterns. And, we’ll configure our anomaly detector to learn what benign behavior looks like.

**The Power of Combination**

The real magic happens when we combine all these detection layers into a single scoring and decision-making pipeline. We’ll compute a unified risk score by combining semantic, heuristic, LLM-based, and anomaly alerts. And, we’ll provide clear, interpretable output that explains why an input is allowed or blocked.

**Putting it to the Test**

But don’t just take my word for it! We’ll show you how our security filter performs in real-world scenarios. We’ll generate benign training data, run comprehensive test cases, and demonstrate the entire system in action. You’ll see how it responds to direct attacks, paraphrased prompts, and even social engineering attempts.

**Beyond the Basics**

In addition to our multi-layered defense, we’ll explore some additional strategies to make our system even more robust. These include input sanitization, rate limiting, context awareness, ensemble methods, and continuous learning.

**The Bottom Line**

In conclusion, we’ve seen that effective LLM security requires a layered approach. By combining semantic understanding, heuristic rules, LLM reasoning, and anomaly detection, we can create a resilient security framework that adapts to evolving attacks. This tutorial has shown us how to move beyond brittle filters and build real-world LLM protection systems that can actually keep up with the bad guys.

**Get the Code and Join the Conversation**

Check out the complete code and resources used in this tutorial on our GitHub repository: [link]. Join us on Twitter [link] and our 100k+ ML SubReddit [link] for more updates on AI and machine learning. Let’s chat about LLM security and share our knowledge with the community!