**NVIDIA Revolutionizes AI with Nemotron-3-Nano-30B: The Ultra-Efficient 30B Parameter Model**

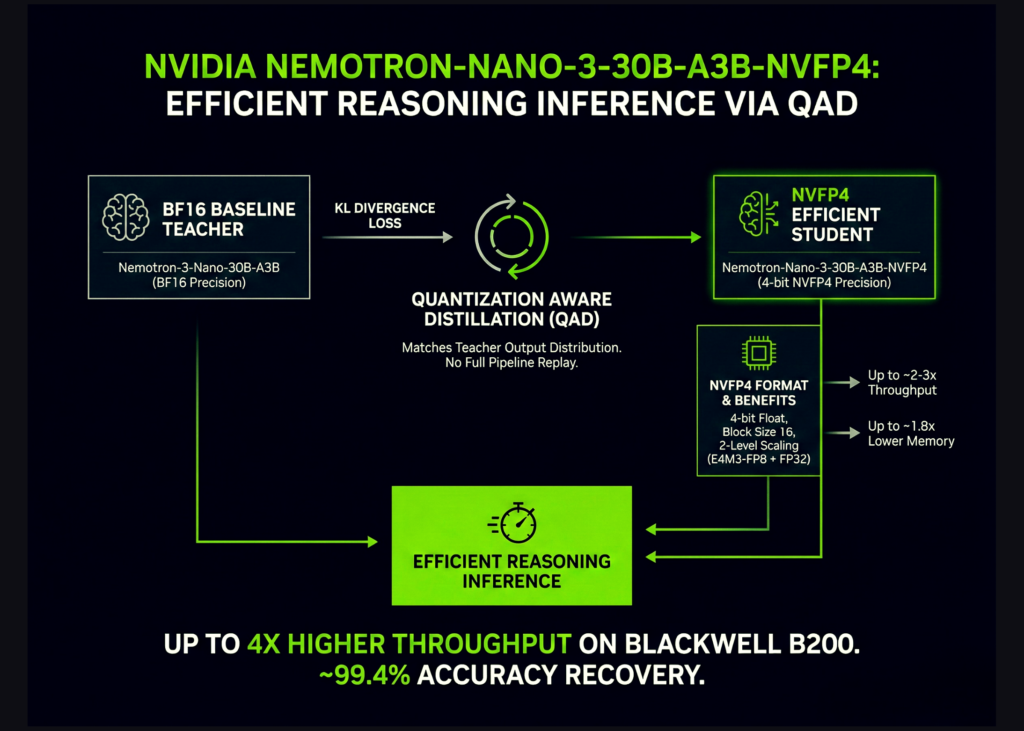

NVIDIA has just dropped a bombshell in the AI community with the announcement of Nemotron-3-Nano-30B-A3B-NVFP4, a 30B parameter reasoning model that redefines efficiency and accuracy. The headline number: running in 4-bit NVFP4 precision, it retains 99.4% of the accuracy of its BF16 baseline.

**What makes Nemotron-3-Nano-30B-A3B-NVFP4 so special?**

Nemotron-3-Nano-30B-A3B-NVFP4 is a hybrid Mamba2-Transformer MoE model that was trained entirely from scratch by NVIDIA’s team. It’s a big beast of a model, 52 layers deep, with 23 Mamba2 layers, 23 MoE layers, and 6 grouped-query attention layers with 2 groups. Despite that depth, it is designed to be fast, intelligent, and lightweight while still delivering strong results.

**Meet NVFP4: The Hero of Efficiency**

NVFP4 is the unsung hero behind Nemotron-3-Nano-30B’s success. This 4-bit floating-point format is a game changer for training and inference on NVIDIA GPUs, offering:

* 2-3 times higher arithmetic throughput compared to FP8

* 1.8 times lower memory usage for weights and activations

* E4M3 FP8 scales per block, plus an FP32 scale per tensor
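
To make the two-level scaling concrete, here is a minimal pure-Python sketch of quantizing a list of values to 4-bit floats and back. It is an illustration, not NVIDIA's implementation. The block size of 16, the E2M1 value grid, and the function names `fake_quantize` and `bits_per_value` are assumptions that the text above does not state. The per-block scale is also kept as a plain Python float here instead of being rounded to FP8.

```python
# Magnitudes representable by a 4-bit E2M1 float (1 sign, 2 exponent, 1 mantissa bit).
E2M1_VALUES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]
E2M1_MAX = 6.0
E4M3_MAX = 448.0  # largest finite FP8 E4M3 value
BLOCK_SIZE = 16   # assumption: the block size is not given in the article


def round_to_e2m1(x):
    """Round x to the closest E2M1 value (ties go to the smaller magnitude)."""
    mag = min(E2M1_VALUES, key=lambda v: abs(v - abs(x)))
    return -mag if x < 0 else mag


def fake_quantize(values, block_size=BLOCK_SIZE):
    """Quantize then dequantize a list of floats using two-level scaling."""
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return list(values)
    # Per-tensor scale, chosen so that every block scale fits the E4M3 range.
    tensor_scale = amax / (E2M1_MAX * E4M3_MAX)
    out = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        block_amax = max(abs(v) for v in block)
        # Per-block scale; a real kernel would store this as FP8 E4M3.
        block_scale = block_amax / E2M1_MAX / tensor_scale
        step = block_scale * tensor_scale
        if step == 0.0:
            out.extend(0.0 for _ in block)
            continue
        out.extend(round_to_e2m1(v / step) * step for v in block)
    return out


def bits_per_value(block_size=BLOCK_SIZE):
    """Storage cost: 4 bits per value plus one 8-bit scale shared by a block."""
    return 4 + 8 / block_size
```

Under these assumptions, `bits_per_value()` gives 4.5 bits per value, and 8 / 4.5 is roughly 1.78. That is in line with the 1.8 times memory saving over FP8 listed above, though this is back-of-the-envelope arithmetic rather than a figure from NVIDIA's report.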

**QAD: The Key to Unlocking NVFP4’s Potential**

Traditional Quantization-Aware Training (QAT) has its limitations, but NVIDIA’s Quantization-Aware Distillation (QAD) is a game changer. QAD uses a frozen BF16 model as the teacher and the NVFP4 model as the student, minimizing the KL divergence between their output token distributions. This approach restores accuracy to levels approaching BF16, without the need for SFT, RL, or model merging.
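
The distillation objective described above can be sketched in a few lines of plain Python. This is a toy illustration, not NVIDIA's training code. The function names are hypothetical, the logits are ordinary lists rather than model outputs, and the direction KL(teacher || student) is an assumption, since the text only says the KL divergence between the two distributions is minimized.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one vocabulary-sized list."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def kl_divergence(teacher_logits, student_logits):
    """KL(teacher || student) for a single token position."""
    t_logp = log_softmax(teacher_logits)
    s_logp = log_softmax(student_logits)
    return sum(math.exp(t) * (t - s) for t, s in zip(t_logp, s_logp))


def qad_loss(teacher_batch, student_batch):
    """Mean per-token KL across a sequence of token positions."""
    per_token = [kl_divergence(t, s)
                 for t, s in zip(teacher_batch, student_batch)]
    return sum(per_token) / len(per_token)


# Teacher logits would come from the frozen BF16 model, student logits
# from the NVFP4 model; both are made-up numbers here.
teacher = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]]
student = [[1.8, 0.7, -0.9], [0.0, 0.4, 2.5]]
print(qad_loss(teacher, student))
```

In an actual training loop, only the student's weights would receive gradients from this loss, while the teacher stays fixed. The loss is zero when the two distributions agree exactly and grows as the quantized model drifts from the BF16 one.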

**Benchmarked Effectiveness: Nemotron-3-Nano-30B Takes the Lead**

The benchmarks are in, and Nemotron-3-Nano-30B-A3B-NVFP4 holds up well. With QAD, this model essentially matches the accuracy of its BF16 baseline and even beats it on some metrics. On AA-LCR, AIME25, GPQA-D, LiveCodeBench, and SciCode, NVFP4-QAD recovers performance to near-BF16 levels.

**The End Game**

Nemotron-3-Nano-30B-A3B-NVFP4 is a game changer, pushing the boundaries of AI efficiency and accuracy. NVFP4 is the 4-bit floating-point format behind that efficiency, and it is set to revolutionize low-power architectures. QAD is what makes it all work. Check out the [paper](https://research.nvidia.com/labs/nemotron/files/NVFP4-QAD-Report.pdf) and the [model weights](https://huggingface.co/nvidia/NVIDIA-Nemotron-3-Nano-30B-A3B-NVFP4) to learn more.