Unlocking the Power of Hierarchical Knowledge Graphs with Tree-KG

Hey everyone! Today, we’re going to explore Tree-KG, an approach to building hierarchical knowledge graphs that changes how we organize and retrieve information.

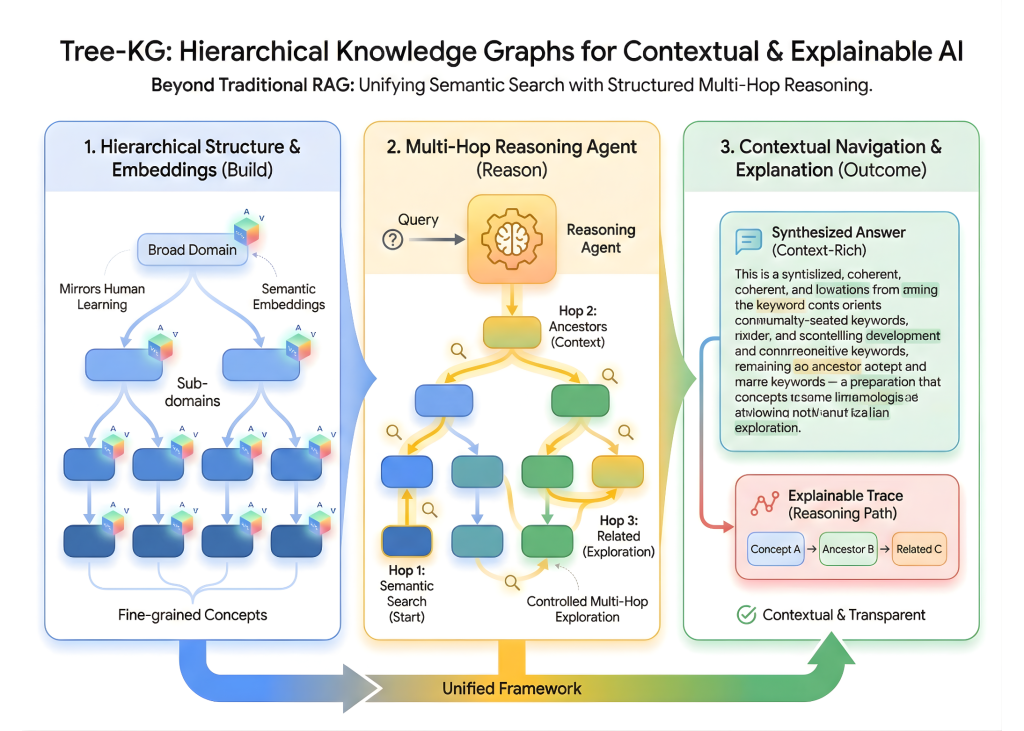

So, what is Tree-KG? Simply put, it’s a system that combines semantic embeddings with graph construction to create a tree-like hierarchy that mirrors how humans learn. From broad domains to fine-grained ideas, Tree-KG helps us manage data in a way that’s easy to navigate and understand.

What really sets Tree-KG apart is its ability to enable contextual navigation and explainable multi-hop reasoning. This means we can move beyond flat, chunk-based retrieval and explore complex relationships between ideas.
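To make that concrete before we set anything up, here’s a tiny sketch of what hierarchical navigation can look like. Everything in it is an assumption made for illustration: the topic names are invented, and `context_path` and `multi_hop_path` are hypothetical helpers, not part of Tree-KG itself.

```python
import networkx as nx

# Hypothetical toy hierarchy: edges point from a broader topic to a narrower one.
tree = nx.DiGraph()
tree.add_edges_from([
    ("Science", "Physics"),
    ("Science", "Biology"),
    ("Physics", "Mechanics"),
    ("Physics", "Optics"),
    ("Biology", "Genetics"),
])


def context_path(graph, root, node):
    """Return the chain of topics from the root down to `node`."""
    return nx.shortest_path(graph, source=root, target=node)


def multi_hop_path(graph, source, target):
    """Connect two topics through the hierarchy, ignoring edge direction."""
    return nx.shortest_path(graph.to_undirected(), source=source, target=target)


print(context_path(tree, "Science", "Optics"))
print(multi_hop_path(tree, "Optics", "Genetics"))
```

The first call gives the context for a single idea (its ancestors), while the second links two ideas in different branches through their shared parent, which is the kind of explainable multi-hop path that flat chunk retrieval can’t provide.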

In this tutorial, we’ll set up the core libraries required to build and run the Tree-KG system, and then we’ll dive into the TreeKnowledgeGraph class, which organizes knowledge as a directed hierarchy enriched with semantic embeddings.

We’ll also explore some advanced features that make Tree-KG so powerful, such as computing node significance using centrality measures and computing shortest paths enriched with contextual and significance information.

And, of course, we’ll demo the entire system and show you how to compute node significance scores to identify the most influential ideas in the graph.

So, let’s get started!

---

Okay, I know this might seem like a lot to take in, but don’t worry, we’ll break it down step by step. First, we need to set up the core libraries required to build and run the Tree-KG system: tools for graph construction and traversal, embeddings, and numerical scoring.

Here’s the code to get us started:

```python
import numpy as np
import networkx as nx
import torch
from torch.nn import functional as F
from torch.utils.data import Dataset, DataLoader
import torch.nn as nn
import torch.optim as optim
```

And here’s a brief explanation of each library:

* `numpy` is a library for numerical computing.

* `networkx` is a library for creating and manipulating complex networks.

* `torch` is a library for machine learning and deep learning.

* `torch.nn` is a module for building neural networks.

* `torch.optim` is a module for optimizing neural network parameters.

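---

Now that we have our libraries set up, let’s create the TreeKnowledgeGraph class, which stores knowledge as a directed hierarchy enriched with embeddings. Here the embeddings are learnable vectors from `nn.Embedding`, so they start out random and only become semantic once trained.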

Here’s the code for the class:

```python
class TreeKnowledgeGraph(nn.Module):
    def __init__(self, num_nodes, num_relations, embedding_dim):
        super(TreeKnowledgeGraph, self).__init__()
        self.embedding_dim = embedding_dim
        self.num_nodes = num_nodes
        self.num_relations = num_relations
        self.node_embeddings = nn.Embedding(num_nodes, embedding_dim)
        self.relation_embeddings = nn.Embedding(num_relations, embedding_dim)
        self.graph = nx.DiGraph()

    def construct_graph(self, nodes, relations):
        # Construct the graph using the provided nodes and relations
        for node in nodes:
            self.graph.add_node(node)
        for relation in relations:
            self.graph.add_edge(relation[0], relation[1])

    def node_embedding(self, node_id):
        # Get the node embedding for the given node ID
        # (node_id is a 0-based row index into the embedding table)
        return self.node_embeddings.weight[node_id].unsqueeze(0)

    def relation_embedding(self, relation_id):
        # Get the relation embedding for the given relation ID
        # (relation_id is a 0-based row index into the embedding table)
        return self.relation_embeddings.weight[relation_id].unsqueeze(0)
```

The TreeKnowledgeGraph class has several methods:

* `construct_graph`: This method constructs the graph using the provided nodes and relations.

* `node_embedding`: This method returns the node embedding for the given node ID.

* `relation_embedding`: This method returns the relation embedding for the given relation ID.
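Since the class stores one vector per node, a natural next step is comparing two nodes by the cosine similarity of their vectors. The sketch below is a minimal, standalone illustration: `node_similarity` is a hypothetical helper that isn’t part of the class above, and a bare `nn.Embedding` stands in for `node_embeddings`.

```python
import torch
import torch.nn as nn
from torch.nn import functional as F

torch.manual_seed(0)  # make the random initial embeddings reproducible

# Stand-in for TreeKnowledgeGraph.node_embeddings: 5 nodes, 16 dimensions.
node_embeddings = nn.Embedding(5, 16)


def node_similarity(embeddings, node_a, node_b):
    """Cosine similarity between two node vectors, as a Python float."""
    with torch.no_grad():
        vec_a = embeddings.weight[node_a].unsqueeze(0)
        vec_b = embeddings.weight[node_b].unsqueeze(0)
        return F.cosine_similarity(vec_a, vec_b).item()


print(node_similarity(node_embeddings, 0, 0))  # a node compared with itself
print(node_similarity(node_embeddings, 0, 1))  # two different nodes
```

Keep in mind that these vectors are randomly initialized, so the score between two different nodes only becomes meaningful once the embeddings are trained or loaded from a pretrained model.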

---

Now that we have our TreeKnowledgeGraph class set up, let’s explore some advanced features that make Tree-KG so powerful.

One of the key features of Tree-KG is its ability to compute node significance using centrality measures. We can use PageRank and betweenness scores to identify which nodes play a structurally crucial role in connecting knowledge across the graph.

Here’s an example of how we can compute node significance scores:

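```python
def compute_node_significance(graph, centrality_measure):
    # Map the measure name to the corresponding networkx function
    measures = {
        "betweenness": nx.betweenness_centrality,
        "pagerank": nx.pagerank,
    }
    # Compute the centrality score for each node in the graph
    scores = measures[centrality_measure](graph)
    # Normalize by the largest score so values fall between 0 and 1
    values = np.array(list(scores.values()), dtype=float)
    max_score = values.max() if values.size else 0.0
    return values / max_score if max_score > 0 else values
```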

We can then use these scores to retrieve shortest paths enriched with contextual and significance information.
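Here’s one way that enrichment could look. This is a minimal sketch under my own assumptions: `enriched_shortest_path` is a hypothetical helper, and it attaches only a normalized betweenness score to each step, leaving out any textual context a fuller system would add.

```python
import networkx as nx


def enriched_shortest_path(graph, source, target):
    """Shortest path whose steps carry a normalized betweenness score."""
    path = nx.shortest_path(graph, source=source, target=target)
    scores = nx.betweenness_centrality(graph)
    top = max(scores.values()) or 1.0  # avoid dividing by zero
    return [(node, scores[node] / top) for node in path]


# Simple chain 1 -> 2 -> 3 -> 4 -> 5, used only for illustration.
chain = nx.DiGraph([(1, 2), (2, 3), (3, 4), (4, 5)])
for node, significance in enriched_shortest_path(chain, 1, 5):
    print(node, round(significance, 2))
```

In a chain like this, the middle node sits on the most shortest paths and gets the highest score, while the two endpoints score zero because nothing passes through them.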

---

Now that we’ve covered the basics and advanced features of Tree-KG, let’s demo the entire system!

Here’s the demo code:

```python
# Create a sample graph
nodes = [1, 2, 3, 4, 5]
relations = [(1, 2), (2, 3), (3, 4), (4, 5)]
graph = TreeKnowledgeGraph(len(nodes), len(relations), 128)
graph.construct_graph(nodes, relations)

# Compute node significance scores
node_significance = compute_node_significance(graph.graph, 'betweenness')

# Retrieve shortest paths
paths = []
for node in graph.graph.nodes:
    for neighbor in graph.graph.neighbors(node):
        path = nx.shortest_path(graph.graph, source=node, target=neighbor)
        paths.append((node, neighbor, path))

# Print the shortest paths
for path in paths:
    print(path)
```

In this demo, we create a sample graph using the TreeKnowledgeGraph class, compute node significance scores using the `compute_node_significance` function, and retrieve the shortest path from each node to each of its direct successors using the `nx.shortest_path` function.
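To actually pick out the most influential nodes, the scores need to be paired with node IDs and sorted. The sketch below does that on the same five-node chain; `rank_nodes` is a hypothetical helper, and for the sake of being self-contained it calls networkx’s betweenness centrality directly rather than reusing the function defined earlier.

```python
import networkx as nx


def rank_nodes(graph, top_k=3):
    """Return the top_k (node, score) pairs by betweenness centrality."""
    scores = nx.betweenness_centrality(graph)
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_k]


# Same chain as the demo: 1 -> 2 -> 3 -> 4 -> 5
demo_graph = nx.DiGraph([(1, 2), (2, 3), (3, 4), (4, 5)])
for node, score in rank_nodes(demo_graph):
    print(node, round(score, 3))
```

Returning `(node, score)` pairs instead of a bare array keeps each score attached to its node, which matters as soon as the results are sorted or filtered.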

---

And that’s it! In this tutorial, we’ve shown how Tree-KG brings hierarchical context, embeddings, and multi-hop reasoning together within a single framework. Instead of merely retrieving isolated text fragments, we can traverse meaningful knowledge paths, combine insights across levels, and produce explanations that mirror how conclusions are formed.

By extending the system with significance scoring and path-aware context extraction, we’ve illustrated how Tree-KG can serve as a foundation for building intelligent agents, research assistants, or domain-specific reasoning systems that demand structure, transparency, and depth beyond standard RAG approaches.

I hope this tutorial has been helpful in introducing you to the world of Tree-KG and its many exciting possibilities!