**DSGym: A Reusable Container-Based Substrate for Building and Benchmarking Data Science Agents**

As data scientists, we know that the quality of our work hinges on the quality of our datasets, well-designed workflows, and verifiable answers. But what about the quality of the AI agents that help us with these tasks? DSGym, a framework developed by researchers from Stanford University, Together AI, Duke University, and Harvard University, aims to evaluate and train these agents on more than 1,000 data science challenges with curated real-world datasets and a consistent post-training pipeline.

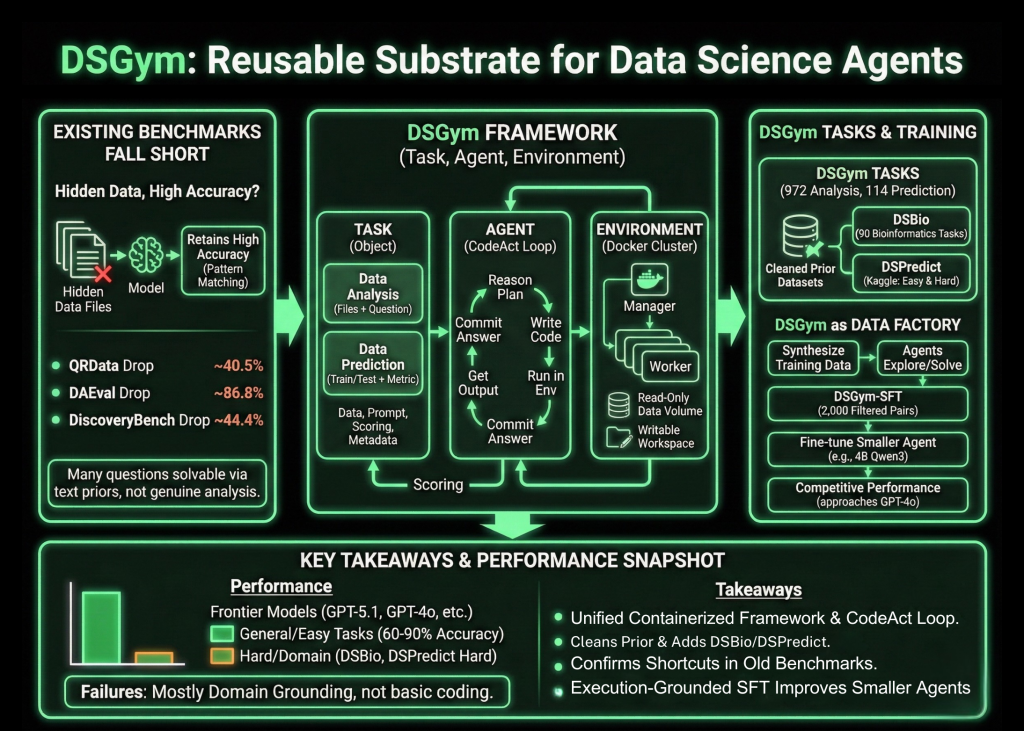

**Why Current Benchmarks Fall Short**

The researchers probed existing benchmarks that claim to test data-aware agents. They found that on many of these benchmarks, models retain high accuracy even when the data files are hidden. On QRData, for example, the average drop is 40.5%; on DAEval, it is 86.8%; and on DiscoveryBench, it is 44.4%. Many questions can be solved from priors and pattern matching on the text alone rather than through genuine data analysis, and the researchers also found annotation errors and inconsistent numerical tolerances.
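The shortcut check itself is simple to express. The sketch below assumes you have per-question correctness flags from two runs of the same agent over the same questions: one run with the data files available and one with them hidden. It reports accuracy for each run along with the relative drop. The function and field names are hypothetical, and the paper may define its drop metric differently.

```python
from dataclasses import dataclass


@dataclass
class ShortcutResult:
    acc_with_data: float
    acc_without_data: float

    @property
    def relative_drop(self) -> float:
        """Fraction of the with-data accuracy that is lost when the data files are hidden."""
        if self.acc_with_data == 0:
            return 0.0
        return (self.acc_with_data - self.acc_without_data) / self.acc_with_data


def accuracy(correct: list[bool]) -> float:
    return sum(correct) / len(correct) if correct else 0.0


def shortcut_check(with_data: list[bool], without_data: list[bool]) -> ShortcutResult:
    # Both lists hold per-question correctness flags for the same question set,
    # once with the data mounted and once with it hidden.
    if len(with_data) != len(without_data):
        raise ValueError("both runs must cover the same questions")
    return ShortcutResult(accuracy(with_data), accuracy(without_data))
```

A small relative drop means that many answers did not depend on the data, which is exactly the failure mode the researchers flag.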

**Task, Agent, and Environment**

DSGym standardizes evaluation into three objects: Task, Agent, and Environment. Tasks are either Data Analysis or Data Prediction tasks. Data Analysis tasks provide one or more data files along with a natural-language question that must be answered through code. Data Prediction tasks provide train and test splits along with an explicit metric, and they require the agent to build a modeling pipeline and output predictions.
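To make the two task types concrete, here is a minimal sketch of how a task might be represented. It uses the fields the article lists for each task: data files, a query prompt, a scoring function, and metadata. The class names, enum values, and `exact_match` helper are hypothetical illustrations, not DSGym's actual API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable


class TaskType(Enum):
    DATA_ANALYSIS = "data_analysis"      # answer a question about the data with code
    DATA_PREDICTION = "data_prediction"  # fit on the train split, predict the test split


@dataclass
class Task:
    task_id: str
    task_type: TaskType
    data_files: list[str]          # mounted read-only inside the environment
    query: str                     # natural-language question or modeling brief
    score: Callable[[Any], float]  # maps the agent's final answer to a score
    metadata: dict[str, Any] = field(default_factory=dict)


def exact_match(expected: str) -> Callable[[Any], float]:
    """Scorer for an analysis task with one reference answer (case- and space-insensitive)."""
    def _score(answer: Any) -> float:
        return float(str(answer).strip().lower() == expected.strip().lower())
    return _score


# Usage with made-up values: a single analysis task over one CSV file.
task = Task(
    task_id="demo-001",
    task_type=TaskType.DATA_ANALYSIS,
    data_files=["sales.csv"],
    query="Which region had the highest total revenue?",
    score=exact_match("West"),
)
```

A prediction task would use the same structure, but with a scorer that applies the competition metric (for example RMSE or AUC) to the submitted predictions.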

Each task is packaged into a Task Object that holds the data files, query prompt, scoring function, and metadata. Agents interact through a CodeAct-style loop. At each turn, the agent writes a reasoning block that describes its plan, a code block that runs inside the environment, and an answer block when it is ready to commit. The Environment is implemented as a manager-worker cluster of Docker containers. Each worker mounts the data as read-only volumes, exposes a writable workspace, and ships with domain-specific Python libraries.
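The turn structure is easiest to see in code. The following is a minimal sketch of a CodeAct-style loop under two assumptions. First, the `<reasoning>`, `<code>`, and `<answer>` tag syntax is hypothetical, since the article describes the blocks but not their markup. Second, a plain in-process `exec` stands in for the Docker worker. That stand-in is not sandboxed and is only meant to show the control flow.

```python
import contextlib
import io
import re
from typing import Callable, Optional

# Hypothetical tag format: the article only says each turn holds reasoning, code, and answer blocks.
BLOCK_RE = re.compile(r"<(reasoning|code|answer)>(.*?)</\1>", re.DOTALL)


def parse_blocks(message: str) -> dict[str, str]:
    """Extract the reasoning, code, and answer blocks from one agent turn."""
    return {tag: body.strip() for tag, body in BLOCK_RE.findall(message)}


def run_code(code: str, namespace: dict) -> str:
    """Run code in a namespace that persists across turns and capture its stdout.

    Stand-in for a Docker worker: this is NOT sandboxed.
    """
    buf = io.StringIO()
    try:
        with contextlib.redirect_stdout(buf):
            exec(code, namespace)
    except Exception as exc:
        # Errors are returned as observations so the agent can repair its code.
        buf.write(f"{type(exc).__name__}: {exc}")
    return buf.getvalue()


def codeact_loop(policy: Callable[[list[str]], str], query: str, max_turns: int = 10) -> Optional[str]:
    history = [query]
    namespace: dict = {}
    for _ in range(max_turns):
        message = policy(history)
        history.append(message)
        blocks = parse_blocks(message)
        if "answer" in blocks:  # the agent commits to a final answer
            return blocks["answer"]
        if "code" in blocks:    # execute and feed the output back as an observation
            history.append(run_code(blocks["code"], namespace))
        else:
            history.append("No code or answer block found.")
    return None  # turn budget exhausted without an answer


def scripted_policy(history: list[str]) -> str:
    """Toy two-turn 'agent': compute something, then answer with the observed output."""
    if len(history) == 1:
        return "<reasoning>Sum the values.</reasoning><code>print(sum([1, 2, 3]))</code>"
    return f"<answer>{history[-1].strip()}</answer>"
```

With a real model, `policy` would wrap an LLM call, and `run_code` would forward the code to a worker container rather than executing it locally.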

**DSGym-Tasks, DSBio, and DSPredict**

On top of this runtime, DSGym-Tasks aggregates and refines existing datasets and adds new ones. The researchers also introduce DSBio, a set of 90 bioinformatics tasks derived from peer-reviewed papers and open-source datasets. The tasks cover single-cell analysis, spatial and multi-omics, and human genetics, with deterministic numerical or categorical answers backed by expert reference notebooks.
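Deterministic answers make scoring mechanical, and an explicit tolerance avoids the inconsistent numerical tolerances the researchers found in older benchmarks. The scorer below is a minimal sketch of that idea. It compares numeric answers within a relative tolerance and falls back to a normalized string match for categorical answers. The tolerance defaults are illustrative assumptions, not DSBio's actual settings.

```python
import math


def score_answer(predicted: str, reference: str, rel_tol: float = 1e-2, abs_tol: float = 1e-6) -> bool:
    """Score a deterministic answer: numeric within tolerance, else normalized string match.

    The tolerance values are illustrative assumptions, not DSBio's settings.
    """
    try:
        return math.isclose(float(predicted), float(reference), rel_tol=rel_tol, abs_tol=abs_tol)
    except ValueError:
        # Non-numeric answer: treat it as categorical and compare case-insensitively.
        return predicted.strip().casefold() == reference.strip().casefold()
```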

DSPredict targets modeling on real Kaggle competitions. A crawler collects recent competitions that accept CSV submissions and satisfy size and readability rules. After preprocessing, the suite is split into DSPredict-Easy, with 38 playground-style and introductory competitions, and DSPredict-Hard, with 54 high-complexity challenges. In total, DSGym-Tasks contains 972 data analysis tasks and 114 prediction tasks.
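The crawler's filter-then-split step can be sketched as follows. Everything concrete here is an assumption, because the article does not give the actual rules. That includes the `Competition` fields, the size cap, the readability flag, and the category labels used to separate easy from hard competitions.

```python
from dataclasses import dataclass

MAX_DATA_MB = 10_000  # hypothetical size cap; the article does not state the threshold


@dataclass
class Competition:
    slug: str
    submission_format: str  # e.g. "csv"
    data_size_mb: float
    readable: bool          # passed a (hypothetical) readability check on the data files
    category: str           # hypothetical labels: "playground", "getting_started", "featured", ...


def keep(c: Competition) -> bool:
    """Crawler filter: CSV submissions plus size and readability rules."""
    return c.submission_format.lower() == "csv" and c.data_size_mb <= MAX_DATA_MB and c.readable


def split(competitions: list[Competition]) -> dict[str, list[str]]:
    """Assign the kept competitions to an Easy or a Hard suite by category."""
    suites: dict[str, list[str]] = {"DSPredict-Easy": [], "DSPredict-Hard": []}
    for c in filter(keep, competitions):
        easy = c.category in {"playground", "getting_started"}
        suites["DSPredict-Easy" if easy else "DSPredict-Hard"].append(c.slug)
    return suites
```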

**What Current Agents Can and Can't Do**

The evaluation covers closed-source models such as GPT-5.1, GPT-5, and GPT-4o; open-weight models such as Qwen3-Coder-480B, Qwen3-235B-Instruct, and GPT-OSS-120B; and smaller models such as Qwen2.5-7B-Instruct and Qwen3-4B-Instruct. All are run with the same CodeAct agent, at temperature 0, with tools disabled.

On cleaned general analysis benchmarks, such as QRData Verified, DAEval Verified, and the easy split of DABStep, top models achieve between 60% and 90% exact-match accuracy. On the hard split of DABStep, accuracy drops for every model, which shows that multi-step quantitative reasoning over financial tables is still brittle.

DSBio exposes a more severe weakness. Kimi-K2-Instruct achieves the highest overall accuracy, at 43.33%. For all models, between 85% and 96% of inspected failures on DSBio are domain grounding errors, such as misuse of specialized libraries and incorrect biological interpretations, rather than basic coding errors.

On MLEBench Lite and DSPredict-Easy, most frontier models achieve a high Valid Submission Rate, above 80%. On DSPredict-Hard, valid submissions rarely exceed 70%, and medal rates on Kaggle leaderboards are near 0%. This pattern supports the research team's observation of a simplicity bias: agents stop after a baseline solution instead of exploring more competitive models and hyperparameters.
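The two metrics quoted here are easy to pin down in code. In the sketch below, a submission counts as valid if it has the same header and row count as the competition's sample submission. This is a simplifying assumption, since real Kaggle validation also checks IDs and value types. Medal outcomes are taken as given inputs, because medal cutoffs depend on each leaderboard's size. The function names are hypothetical.

```python
import csv
import io


def is_valid_submission(submission_csv: str, sample_csv: str) -> bool:
    """Hypothetical validity check: same header and same number of rows as the sample file."""
    sub = list(csv.reader(io.StringIO(submission_csv)))
    ref = list(csv.reader(io.StringIO(sample_csv)))
    return bool(sub) and bool(ref) and sub[0] == ref[0] and len(sub) == len(ref)


def suite_rates(results: list[dict]) -> dict[str, float]:
    """Each result holds a 'valid' flag and a 'medal' flag for one competition."""
    n = len(results)
    return {
        "valid_submission_rate": sum(r["valid"] for r in results) / n,
        # A medal only counts if the submission was valid in the first place.
        "medal_rate": sum(r["valid"] and r["medal"] for r in results) / n,
    }
```

Because both rates share the same denominator, a suite can show a high valid submission rate and still have a medal rate near zero, which is the pattern reported for DSPredict-Hard.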

**DSGym as a Data Factory and Training Ground**

The same environment can also synthesize training data. Starting from a subset of QRData and DABStep, the research team asks agents to explore datasets, propose questions, solve them with code, and record their trajectories, which yields 3,700 synthetic queries. A judge model filters these down to 2,000 high-quality query-plus-trajectory pairs called DSGym-SFT. Fine-tuning a 4B Qwen3-based model on DSGym-SFT produces an agent whose performance is competitive with GPT-4o on standardized analysis benchmarks, despite having far fewer parameters.
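The filtering stage amounts to "score every candidate, keep the best." The sketch below assumes a judge that returns a numeric quality score per trajectory. The `Trajectory` fields, the `min_score` cutoff, and the `keep_top` cap are hypothetical knobs. The article states only that a judge model reduced roughly 3,700 queries to 2,000 query-trajectory pairs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Trajectory:
    query: str
    turns: list[str]  # the recorded reasoning, code, and observation turns
    final_answer: str


def build_sft_set(candidates: list[Trajectory],
                  judge: Callable[[Trajectory], float],
                  keep_top: int,
                  min_score: float = 0.5) -> list[Trajectory]:
    """Score every synthetic trajectory with a judge and keep the best ones.

    `judge`, `keep_top`, and `min_score` are hypothetical knobs, not DSGym settings.
    """
    scored = [(judge(t), t) for t in candidates]
    scored = [(s, t) for s, t in scored if s >= min_score]
    # Sort on the score only, so Trajectory objects are never compared with each other.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [t for _, t in scored[:keep_top]]
```

In practice the judge would be an LLM call that grades each trajectory's question quality and answer correctness. The fixed cap mirrors the article's target of 2,000 pairs.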

**Key Takeaways**

DSGym offers a unified Task, Agent, and Environment framework, with containerized execution and a CodeAct-style loop, to evaluate data science agents on real code-based workflows instead of static prompts.

The benchmark suite, DSGym-Tasks, consolidates and cleans prior datasets and adds DSBio and DSPredict, reaching 972 data analysis tasks and 114 prediction tasks across domains such as finance, bioinformatics, and earth science.

Shortcut analysis on existing benchmarks shows that removing data access only moderately reduces accuracy in many cases, which confirms that prior evaluations often measure pattern matching on text rather than genuine data analysis.

Frontier models perform strongly on cleaned general analysis tasks and on easier prediction tasks, but they perform poorly on DSBio and DSPredict-Hard, where most errors come from domain grounding issues and conservative, under-tuned modeling pipelines.

The DSGym-SFT dataset, built from 2,000 filtered synthetic trajectories, enables a 4B Qwen3-based agent to approach GPT-4o-level accuracy on several analysis benchmarks. This shows that execution-grounded supervision on structured tasks is an effective way to improve data science agents.
