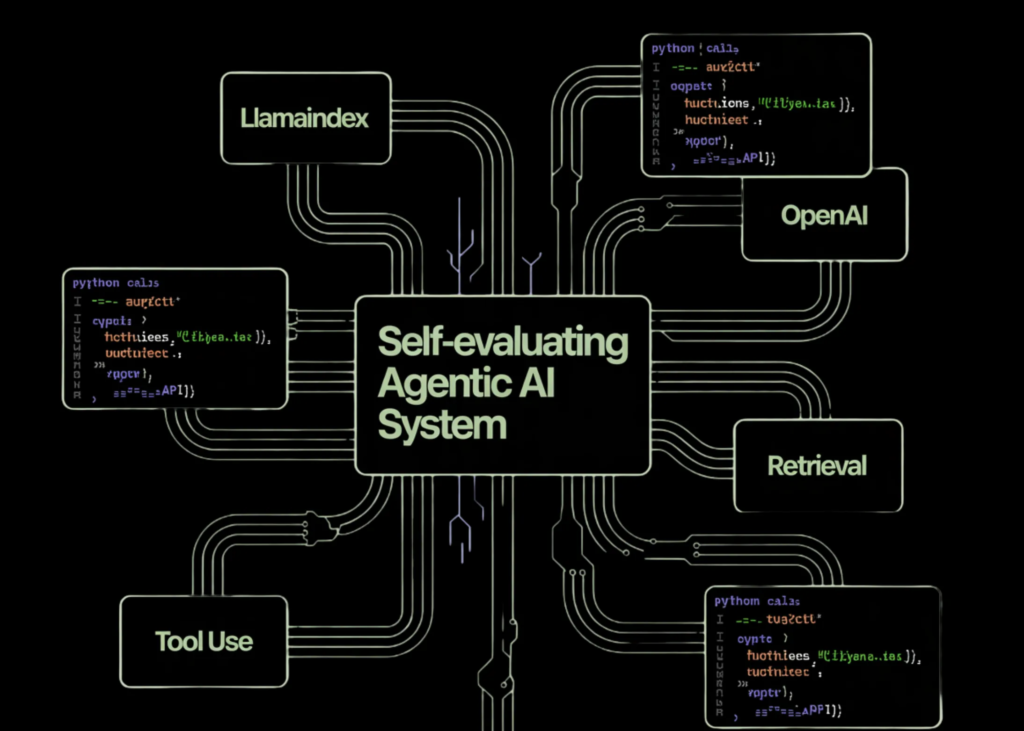

**Building a Self-Evaluating Agentic AI System with LlamaIndex and OpenAI: A Comprehensive Guide**

Hey, developers! As we strive to create more reliable and controllable AI systems, we often need a system that can not only retrieve relevant information but also evaluate its own responses. In this tutorial, we'll build a self-evaluating agentic AI system using LlamaIndex and OpenAI, with a focus on retrieval-augmented generation (RAG) agents.

**Getting Started**

Before we dive into the code, let’s set up our environment. We’ll need to install the required dependencies, including LlamaIndex and OpenAI. Here’s the code snippet to get us started:

```
!pip -q install llama-index llama-index-llms-openai llama-index-embeddings-openai nest_asyncio

import os
import asyncio
from getpass import getpass

import nest_asyncio

# Allow nested event loops so async code can run inside a notebook
nest_asyncio.apply()

# Prompt for the API key only if it is not already set
if not os.environ.get("OPENAI_API_KEY"):
    os.environ["OPENAI_API_KEY"] = getpass("Enter OPENAI_API_KEY: ")
```

In this code, we're installing the necessary dependencies using pip, importing the required modules, and applying `nest_asyncio` so that async code can run inside a notebook's existing event loop. If `OPENAI_API_KEY` is not already set, we prompt for it with `getpass` (so the key is not echoed) and store it as an environment variable.
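The key-handling step can be factored into a small helper so the same logic works in scripts and in tests. The sketch below is illustrative only: `ensure_api_key` and its `env` and `prompt` parameters are hypothetical names, not part of LlamaIndex or the OpenAI SDK.

```python
import os
from getpass import getpass
from typing import Callable, MutableMapping


def ensure_api_key(
    name: str = "OPENAI_API_KEY",
    env: MutableMapping[str, str] = os.environ,
    prompt: Callable[[str], str] = getpass,
) -> str:
    """Return the key stored under `name`, prompting only when it is missing."""
    value = env.get(name, "").strip()
    if not value:
        # Hypothetical policy: reject empty input instead of storing it
        value = prompt(f"Enter {name}: ").strip()
        if not value:
            raise ValueError(f"{name} must not be empty")
        env[name] = value
    return value
```

Passing `env` and `prompt` explicitly makes it possible to exercise the helper without touching the real environment or blocking on input.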

**Configuring the Agent**

Now that we have our environment set up, let’s configure our agent. We’ll define the core components of our RAG agent, including document retrieval, answer synthesis, and self-evaluation. Here’s the code snippet:

```
from llama_index.core import Document, VectorStoreIndex, Settings
from llama_index.llms.openai import OpenAI
from llama_index.embeddings.openai import OpenAIEmbedding

# Global defaults used by the index, query engine, and evaluators
Settings.llm = OpenAI(model="gpt-4o-mini", temperature=0.2)
Settings.embed_model = OpenAIEmbedding(model="text-embedding-3-small")

# A tiny in-memory knowledge base
texts = [
    "Reliable RAG systems separate retrieval, synthesis, and verification. Common failures include hallucination and shallow retrieval.",
    "RAG evaluation focuses on faithfulness, answer relevancy, and retrieval quality.",
    "Tool-using agents require constrained tools, validation, and self-review loops.",
    "A robust workflow follows retrieve, answer, evaluate, and revise steps.",
]

docs = [Document(text=t) for t in texts]
index = VectorStoreIndex.from_documents(docs)

# Retrieve up to 4 chunks per query
query_engine = index.as_query_engine(similarity_top_k=4)
```

In this code, we're setting the default LLM (with a low temperature of 0.2 for more consistent output) and the embedding model, creating a vector store index from our text data, and defining a query engine that retrieves up to four chunks per query.
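To make `similarity_top_k` concrete, here is a rough, dependency-free sketch of what top-k retrieval does conceptually: embed the query, score every document by cosine similarity, and keep the k best. It assumes toy word-count vectors in place of `text-embedding-3-small`, and the helper names (`embed`, `cosine`, `top_k`) are hypothetical. LlamaIndex's actual implementation differs.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, docs: list[str], k: int) -> list[tuple[float, str]]:
    """Return the k documents most similar to the query, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(d)), d) for d in docs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


docs = [
    "RAG evaluation focuses on faithfulness, answer relevancy, and retrieval quality.",
    "Tool-using agents require constrained tools, validation, and self-review loops.",
]
hits = top_k("how do we measure faithfulness and relevancy", docs, k=1)
```

Note that with four documents and `similarity_top_k=4`, every document is returned for each query, so in this tutorial the ranking matters more than the cut-off.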

**Implementing the Agent's Tools**

Now that we have our agent configured, let's implement its tools. We'll define two key functions: `retrieve_evidence` and `score_answer`. Here's the code snippet:

```
from llama_index.core.evaluation import FaithfulnessEvaluator, RelevancyEvaluator

faith_eval = FaithfulnessEvaluator(llm=Settings.llm)
rel_eval = RelevancyEvaluator(llm=Settings.llm)

def retrieve_evidence(q: str) -> str:
    """Return numbered snippets of the source nodes retrieved for a query."""
    r = query_engine.query(q)
    out = []
    for i, n in enumerate(r.source_nodes or []):
        out.append(f"[{i+1}] {n.node.get_content()[:300]}")
    return "\n".join(out)

def score_answer(q: str, a: str) -> str:
    """Score an answer for faithfulness and relevancy against retrieved context."""
    resp = query_engine.query(q)
    ctx = [n.node.get_content() for n in resp.source_nodes or []]
    f = faith_eval.evaluate(query=q, response=a, contexts=ctx)
    r = rel_eval.evaluate(query=q, response=a, contexts=ctx)
    return f"Faithfulness: {f.score}\nRelevancy: {r.score}"
```

In this code, we're implementing our `retrieve_evidence` function, which returns numbered snippets (truncated to 300 characters) of the passages retrieved for a query, and our `score_answer` function, which re-runs retrieval for the question and then evaluates the faithfulness and relevancy of a candidate answer against that context. Both evaluators use the configured OpenAI model as the judge.
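One way to act on these scores outside the agent is to parse the report and apply a cut-off. The sketch below assumes the report contains lines of the form `Faithfulness: <number>` and `Relevancy: <number>`. The helper names `parse_scores` and `needs_revision` and the 0.5 threshold are hypothetical choices for illustration, not part of LlamaIndex.

```python
import re


def parse_scores(report: str) -> dict[str, float]:
    """Extract `Name: number` pairs from a score report, keyed by lowercase name."""
    pairs = re.findall(r"(\w+):\s*([0-9]*\.?[0-9]+)", report)
    return {name.lower(): float(value) for name, value in pairs}


def needs_revision(report: str, threshold: float = 0.5) -> bool:
    """True if either metric is missing or below the (assumed) threshold."""
    scores = parse_scores(report)
    return any(
        scores.get(metric, 0.0) < threshold
        for metric in ("faithfulness", "relevancy")
    )
```

Treating a missing or non-numeric score as a failure is a conservative design choice: an answer that could not be scored is revised rather than silently accepted.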

**Creating the Agent and Running the Workflow**

Now that we have our agent's tools defined, let's create the agent and run the workflow. Here's the code snippet:

```
from llama_index.core.agent.workflow import ReActAgent
from llama_index.core.workflow import Context

agent = ReActAgent(
    tools=[retrieve_evidence, score_answer],
    llm=Settings.llm,
    system_prompt="""
Always retrieve evidence first.
Produce a structured answer.
Evaluate the answer and revise once if scores are low.
""",
    verbose=True
)

# Context carries agent state across runs
ctx = Context(agent)

async def run_brief(topic: str):
    q = f"Design a reliable RAG + tool-using agent workflow and how to evaluate it. Topic: {topic}"
    handler = agent.run(q, ctx=ctx)
    # Stream partial output as it arrives; events without a delta print nothing
    async for ev in handler.stream_events():
        print(getattr(ev, "delta", ""), end="")
    res = await handler
    return str(res)

topic = "RAG agent reliability and evaluation"
loop = asyncio.get_event_loop()
result = loop.run_until_complete(run_brief(topic))

print("\n\nFINAL OUTPUT\n")
print(result)
```

In this code, we’re creating our ReAct agent and defining its workflow. We’re also implementing a `run_brief` function, which executes the agent’s workflow for a given query.
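In the tutorial, the ReAct agent itself decides when to call each tool, guided by the system prompt. For intuition, the retrieve, answer, evaluate, and revise-once policy can also be written as an explicit loop. The following is a minimal sketch under stated assumptions: `answer_with_review` and its callable parameters are hypothetical stand-ins for the retriever, the LLM, and an evaluator that returns a single numeric score.

```python
from typing import Callable


def answer_with_review(
    question: str,
    retrieve: Callable[[str], str],
    answer: Callable[[str, str], str],
    score: Callable[[str, str], float],
    threshold: float = 0.5,
    max_revisions: int = 1,
) -> tuple[str, float, int]:
    """Retrieve evidence, draft an answer, score it, and revise while the score is low.

    Returns (final answer, final score, number of revisions performed).
    """
    evidence = retrieve(question)
    draft = answer(question, evidence)
    current = score(question, draft)
    revisions = 0
    while current < threshold and revisions < max_revisions:
        # Feed the low score back so the next draft can address it
        feedback = f"{evidence}\n\nPrevious draft scored {current:.2f}; revise it."
        draft = answer(question, feedback)
        current = score(question, draft)
        revisions += 1
    return draft, current, revisions
```

Capping `max_revisions` at 1 mirrors the "revise once" instruction in the system prompt and keeps the loop from running indefinitely when the score never improves.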

**Conclusion**

In conclusion, we've demonstrated how to build a self-evaluating agentic AI system using LlamaIndex and OpenAI, with a focus on retrieval-augmented generation (RAG) agents. We've shown how to configure the agent, implement its tools, and run the workflow. Having the agent retrieve evidence first, score its own answer for faithfulness and relevancy, and revise when the scores are low is one way to make RAG systems more reliable.

**Author**: Asif Razzaq, CEO of Marktechpost Media Inc.