In this tutorial, we implement an agentic AI pattern using LangGraph that treats reasoning and action as a transactional workflow rather than a single-shot decision. We model a two-phase commit system in which an agent stages reversible changes, validates strict invariants, pauses for human approval via graph interrupts, and only then commits or rolls back. With this, we show how agentic systems can be designed with safety, auditability, and controllability at their core, moving beyond reactive chat agents toward structured, governance-aware AI workflows that run reliably in Google Colab using OpenAI models. Check out the Full Codes here.

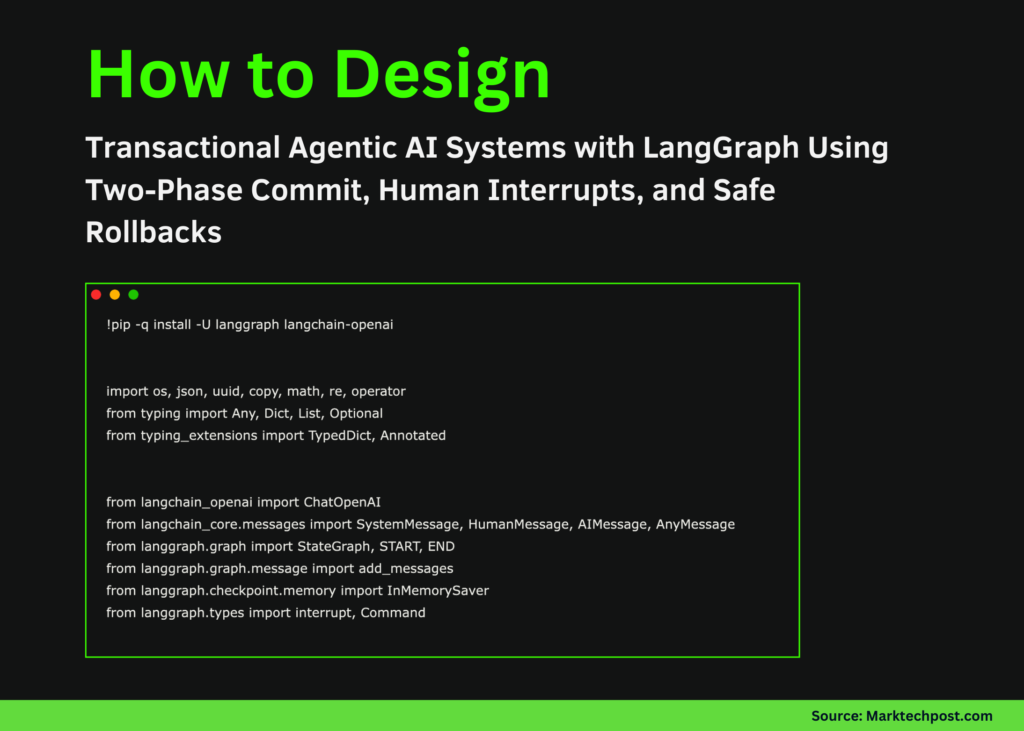

    !pip -q install -U langgraph langchain-openai

    import os, json, uuid, copy, math, re, operator
    from typing import Any, Dict, List, Optional
    from typing_extensions import TypedDict, Annotated

    from langchain_openai import ChatOpenAI
    from langchain_core.messages import SystemMessage, HumanMessage, AIMessage, AnyMessage
    from langgraph.graph import StateGraph, START, END
    from langgraph.graph.message import add_messages
    from langgraph.checkpoint.memory import InMemorySaver
    from langgraph.types import interrupt, Command

    def _set_env_openai():
        # Prefer a key that is already set in the environment.
        if os.environ.get("OPENAI_API_KEY"):
            return
        # In Colab, fall back to the notebook's secret store.
        try:
            from google.colab import userdata
            k = userdata.get("OPENAI_API_KEY")
            if k:
                os.environ["OPENAI_API_KEY"] = k
                return
        except Exception:
            pass
        # Otherwise prompt for the key without echoing it.
        import getpass
        os.environ["OPENAI_API_KEY"] = getpass.getpass("Enter OPENAI_API_KEY: ")

    _set_env_openai()
    MODEL = os.environ.get("OPENAI_MODEL", "gpt-4o-mini")
    llm = ChatOpenAI(model=MODEL, temperature=0)

We set up the execution environment by installing LangGraph and initializing the OpenAI model. We load the API key without hard-coding it and set the LLM temperature to 0, so that downstream agent behavior stays as reproducible and controlled as possible.
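The key lookup order described above (environment variable first, then a notebook secret store, then an interactive prompt) can be sketched as a pure function that is easy to test. This is only an illustration: `resolve_api_key`, `secret_lookup`, and `prompt` are hypothetical names, not part of LangGraph, LangChain, or Colab, and the lookups are injected as callables so the sketch runs without any of those installed.

```python
from typing import Callable, Mapping, Optional


def resolve_api_key(
    env: Mapping[str, str],
    secret_lookup: Callable[[str], Optional[str]],
    prompt: Callable[[str], str],
    name: str = "OPENAI_API_KEY",
) -> str:
    """Return the first non-empty key found: env, then secret store, then prompt."""
    value = env.get(name)
    if value:
        return value
    try:
        # Hypothetical hook, e.g. a wrapper around a notebook secret store.
        value = secret_lookup(name)
    except Exception:
        # A missing or locked secret store should not be fatal.
        value = None
    if value:
        return value
    return prompt(f"Enter {name}: ")


def _no_store(name: str) -> Optional[str]:
    """Stand-in for an environment where no secret store is available."""
    raise RuntimeError("secret store unavailable")


from_env = resolve_api_key({"OPENAI_API_KEY": "env-key"}, _no_store, lambda msg: "typed-key")
from_prompt = resolve_api_key({}, _no_store, lambda msg: "typed-key")
print(from_env, from_prompt)
```

Injecting the three sources keeps the precedence logic separate from Colab-specific imports, which is one way to exercise it outside a notebook.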

    SAMPLE_LEDGER = [
        {"txn_id": "T001", "name": "Asha", "email": "[email protected]", "amount": "1,250.50", "date": "12/01/2025", "note": "Membership renewal"},
        {"txn_id": "T002", "name": "Ravi", "email": "[email protected]", "amount": "-500", "date": "2025-12-02", "note": "Chargeback?"},
        {"txn_id": "T003", "name": "Sara", "email": "[email protected]", "amount": "700", "date": "02-12-2025", "note": "Late fee waived"},
        {"txn_id": "T003", "name": "Sara", "email": "[email protected]", "amount": "700", "date": "02-12-2025", "note": "Duplicate row"},
        {"txn_id": "T004", "name": "Lee", "email": "[email protected]", "amount": "NaN", "date": "2025/12/03", "note": "Bad amount"},
    ]

    ALLOWED_OPS = {"replace", "remove", "add"}

    def _parse_amount(x):
        # Return a finite float, or None if the value is not a usable amount.
        if isinstance(x, (int, float)):
            v = float(x)
        elif isinstance(x, str):
            try:
                v = float(x.replace(",", ""))
            except ValueError:
                return None
        else:
            return None
        # float("NaN") parses successfully, so reject non-finite values explicitly.
        return v if math.isfinite(v) else None

    def _iso_date(d):
        # Accept YYYY-MM-DD as is; treat DD-MM-YYYY as day-first and reorder it.
        if not isinstance(d, str):
            return None
        d = d.replace("/", "-")
        p = d.split("-")
        if len(p) == 3 and len(p[0]) == 4:
            return d
        if len(p) == 3 and len(p[2]) == 4:
            return f"{p[2]}-{p[1]}-{p[0]}"
        return None

    def profile_ledger(rows):
        # Flag rows with unparseable amounts or a txn_id that was already seen.
        seen, anomalies = {}, []
        for i, r in enumerate(rows):
            if _parse_amount(r.get("amount")) is None:
                anomalies.append(i)
            if r.get("txn_id") in seen:
                anomalies.append(i)
            seen[r.get("txn_id")] = i
        return {"rows": len(rows), "anomalies": anomalies}

    def apply_patch(rows, patch):
        # Work on a deep copy so the original rows stay untouched.
        out = copy.deepcopy(rows)
        # Apply field edits first, while indices still match the original rows.
        for op in patch:
            if op["op"] in {"add", "replace"}:
                out[op["idx"]][op["field"]] = op["value"]
        # Then remove rows from the highest index down so earlier pops do not shift later ones.
        for op in sorted([p for p in patch if p["op"] == "remove"], key=lambda x: x["idx"], reverse=True):
            out.pop(op["idx"])
        return out

    def validate(rows):
        # Collect the index of every row that breaks an invariant.
        issues = []
        for i, r in enumerate(rows):
            if _parse_amount(r.get("amount")) is None:
                issues.append(i)
            if _iso_date(r.get("date")) is None:
                issues.append(i)
        return {"ok": len(issues) == 0, "issues": issues}

We define the core ledger abstraction along with the patching, normalization, and validation logic. We treat data transformations as reversible operations: patches are applied to a copy of the ledger, which lets the agent reason about changes safely before committing them.
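To make the idea of reversible staging concrete, here is a minimal standard-library sketch, assuming that patch indices refer to positions in the original rows. The helper names `parse_amount`, `stage`, and `bad_rows`, the trimmed ledger, and the replacement value `"0.00"` are illustrative assumptions rather than part of the tutorial's code.

```python
import copy
import math
from typing import Any, Dict, List, Optional


def parse_amount(x: Any) -> Optional[float]:
    """Return a finite float, or None when the value is not a usable amount."""
    try:
        value = float(str(x).replace(",", ""))
    except ValueError:
        return None
    # float("NaN") succeeds, so non-finite values are rejected explicitly.
    return value if math.isfinite(value) else None


def stage(rows: List[Dict[str, Any]], patch: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Apply a patch to a deep copy, leaving the input rows unchanged."""
    staged = copy.deepcopy(rows)
    # Field edits first, while indices still match the original rows.
    for op in patch:
        if op["op"] == "replace":
            staged[op["idx"]][op["field"]] = op["value"]
    # Removals last, highest index first, so pops do not shift each other.
    removals = sorted((p["idx"] for p in patch if p["op"] == "remove"), reverse=True)
    for idx in removals:
        staged.pop(idx)
    return staged


def bad_rows(rows: List[Dict[str, Any]]) -> List[int]:
    """Indices of rows whose amount cannot be parsed."""
    return [i for i, r in enumerate(rows) if parse_amount(r.get("amount")) is None]


ledger = [
    {"txn_id": "T001", "amount": "1,250.50"},
    {"txn_id": "T003", "amount": "700"},
    {"txn_id": "T003", "amount": "700"},  # duplicate row
    {"txn_id": "T004", "amount": "NaN"},  # unusable amount
]
patch = [
    {"op": "remove", "idx": 2},
    {"op": "replace", "idx": 3, "field": "amount", "value": "0.00"},  # hypothetical fix
]

staged = stage(ledger, patch)
print("before:", bad_rows(ledger), "after:", bad_rows(staged))
print("original untouched:", ledger[3]["amount"])
```

Because the staged rows are a separate copy, a rollback in this sketch amounts to discarding `staged`, while a commit would replace the ledger with it.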

    class TxnState(TypedDict):
        messages: Annotated[List[AnyMessage], add_messages]
        raw_rows: List[Dict[str, Any]]
        sandbox_rows: List[Dict[str, Any]]
        patch: List[Dict[str, Any]]
        validation: Dict[str, Any]
        approved: Optional[bool]

    def node_profile(state):
        # Summarize anomalies in the raw ledger and record them in the message history.
        p = profile_ledger(state["raw_rows"])
        return {"messages": [AIMessage(content=json.dumps(p))]}

    def node_patch(state):
        # Ask the model for a patch, then extract the JSON array from its reply.
        sys = SystemMessage(content="Return a JSON patch list fixing amounts, dates, emails, duplicates")
        usr = HumanMessage(content=json.dumps(state["raw_rows"]))
        r = llm.invoke([sys, usr])
        patch = json.loads(re.search(r"\[.*\]", r.content, re.S).group())
        return {"patch": patch, "messages": [AIMessage(content=json.dumps(patch))]}

    def node_apply(state):
        # Stage the patch on a sandbox copy; raw_rows is never modified.
        return {"sandbox_rows": apply_patch(state["raw_rows"], state["patch"])}

    def node_validate(state):
        v = validate(state["sandbox_rows"])
        return {"validation": v, "messages": [AIMessage(content=json.dumps(v))]}

    def node_approve(state):
        # Pause the graph here; the value passed on resume becomes the decision.
        decision = interrupt({"validation": state["validation"]})
        return {"approved": decision == "approve"}

    def node_commit(state):
        return {"messages": [AIMessage(content="COMMITTED")]}

    def node_rollback(state):
        return {"messages": [AIMessage(content="ROLLED BACK")]}

We model the agent's internal state and define each node in the LangGraph workflow. We express agent behavior as discrete, inspectable steps: each node returns only the state keys it changes, and the `add_messages` reducer appends new messages so the history is preserved.
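The way nodes return partial updates that are merged into shared state can be approximated with plain Python. The sketch below is only an analogy under simplifying assumptions: `merge_update` and `REDUCERS` are hypothetical names, messages are plain strings, and list concatenation stands in for `add_messages`, which in LangGraph also handles message IDs and type coercion.

```python
import operator
from typing import Any, Callable, Dict

# Keys listed here are combined with a reducer; every other key is overwritten.
REDUCERS: Dict[str, Callable[[Any, Any], Any]] = {"messages": operator.add}


def merge_update(state: Dict[str, Any], update: Dict[str, Any]) -> Dict[str, Any]:
    """Return a new state with a node's partial update merged in."""
    merged = dict(state)
    for key, value in update.items():
        if key in REDUCERS and key in merged:
            merged[key] = REDUCERS[key](merged[key], value)
        else:
            merged[key] = value
    return merged


def node_validate(state: Dict[str, Any]) -> Dict[str, Any]:
    """Toy validation node: returns only the keys it changes."""
    ok = len(state["sandbox_rows"]) > 0
    return {"validation": {"ok": ok}, "messages": [f"validated: ok={ok}"]}


state = {
    "messages": ["profiled"],
    "sandbox_rows": [{"txn_id": "T001"}],
    "validation": {},
}
state = merge_update(state, node_validate(state))
print(state["messages"])
print(state["validation"])
```

The message list grows while `validation` is replaced and `sandbox_rows` is left alone, which mirrors why the tutorial's nodes can stay small and still leave a readable history.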

    builder = StateGraph(TxnState)

    builder.add_node("profile", node_profile)
    builder.add_node("patch", node_patch)
    builder.add_node("apply", node_apply)
    builder.add_node("validate", node_validate)
    builder.add_node("approve", node_approve)
    builder.add_node("commit", node_commit)
    builder.add_node("rollback", node_rollback)

    builder.add_edge(START, "profile")
    builder.add_edge("profile", "patch")
    builder.add_edge("patch", "apply")
    builder.add_edge("apply", "validate")

    # Gate 1: only validated sandboxes reach the human approval step.
    builder.add_conditional_edges(
        "validate",
        lambda s: "approve" if s["validation"]["ok"] else "rollback",
        {"approve": "approve", "rollback": "rollback"}
    )

    # Gate 2: commit only on explicit approval; anything else rolls back.
    builder.add_conditional_edges(
        "approve",
        lambda s: "commit" if s["approved"] else "rollback",
        {"commit": "commit", "rollback": "rollback"}
    )

    builder.add_edge("commit", END)
    builder.add_edge("rollback", END)

    # A checkpointer is required so the graph can pause at the interrupt and resume later.
    app = builder.compile(checkpointer=InMemorySaver())

We assemble the LangGraph state machine and explicitly encode the control flow between profiling, patching, validation, approval, and finalization. We use conditional edges to enforce governance rules rather than rely on implicit model decisions.
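The two routing rules can also be written as named functions, which makes the governance gates testable without building a graph or calling a model. This is a sketch; the function names `route_after_validate` and `route_after_approve` are illustrative and do not appear in the tutorial's code.

```python
from typing import Any, Dict


def route_after_validate(state: Dict[str, Any]) -> str:
    """Send validated sandboxes to approval; everything else rolls back."""
    validation = state.get("validation") or {}
    return "approve" if validation.get("ok") is True else "rollback"


def route_after_approve(state: Dict[str, Any]) -> str:
    """Commit only on an explicit True; None or False rolls back."""
    return "commit" if state.get("approved") is True else "rollback"


print(route_after_validate({"validation": {"ok": True, "issues": []}}))
print(route_after_validate({"validation": {"ok": False, "issues": [4]}}))
print(route_after_approve({"approved": None}))
```

Functions like these could be passed to `builder.add_conditional_edges` in place of the lambdas. Defaulting to `"rollback"` when a key is missing is a deliberate fail-closed choice in this sketch.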

    def run():
        state = {
            "messages": [],
            "raw_rows": SAMPLE_LEDGER,
            "sandbox_rows": [],
            "patch": [],
            "validation": {},
            "approved": None,
        }
        # The thread_id ties the paused run to its checkpoint so it can be resumed.
        cfg = {"configurable": {"thread_id": "txn-demo"}}
        out = app.invoke(state, config=cfg)
        if "__interrupt__" in out:
            # Interrupt objects are not JSON-serializable, so print their payloads.
            print(json.dumps([i.value for i in out["__interrupt__"]], indent=2))
            decision = input("approve / reject: ").strip()
            out = app.invoke(Command(resume=decision), config=cfg)
        print(out["messages"][-1].content)

    run()

We run the transactional agent and handle human-in-the-loop approval through graph interrupts. We resume execution from the saved checkpoint with the human's decision, demonstrating how agentic workflows can pause, accept external input, and safely conclude with either a commit or a rollback.
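The pause-and-resume flow depends on saved state being looked up by thread ID. The following standard-library sketch imitates that idea under heavy simplification. It is not how LangGraph implements checkpointing or interrupts, and `PendingStore`, `start`, and `resume` are hypothetical names introduced only for illustration.

```python
from typing import Any, Dict


class PendingStore:
    """Hypothetical in-memory store of paused runs, keyed by thread ID."""

    def __init__(self) -> None:
        self._pending: Dict[str, Dict[str, Any]] = {}

    def save(self, thread_id: str, state: Dict[str, Any]) -> None:
        self._pending[thread_id] = state

    def take(self, thread_id: str) -> Dict[str, Any]:
        # Raises KeyError if nothing is paused under this thread ID.
        return self._pending.pop(thread_id)


def start(store: PendingStore, thread_id: str, state: Dict[str, Any]) -> Dict[str, Any]:
    """Phase 1: roll back on failed validation, otherwise pause for approval."""
    if not state["validation"]["ok"]:
        return {"status": "ROLLED BACK"}
    store.save(thread_id, state)
    return {"status": "PAUSED", "payload": {"validation": state["validation"]}}


def resume(store: PendingStore, thread_id: str, decision: str) -> Dict[str, Any]:
    """Phase 2: load the paused state and commit only on explicit approval."""
    state = store.take(thread_id)
    if decision == "approve":
        return {"status": "COMMITTED", "rows": state["sandbox_rows"]}
    return {"status": "ROLLED BACK"}


store = PendingStore()
staged_state = {"validation": {"ok": True, "issues": []}, "sandbox_rows": [{"txn_id": "T001"}]}

first = start(store, "txn-demo", staged_state)
second = resume(store, "txn-demo", "approve")
print(first["status"], "->", second["status"])
```

Resuming with a different thread ID fails in this sketch because no paused state exists under that key, which illustrates why the same `thread_id` is passed to both `invoke` calls in `run()`.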

In conclusion, we showed how LangGraph enables us to build agents that reason over state, enforce validation gates, and collaborate with humans at precisely defined control points. We treated the agent not as an oracle, but as a transaction coordinator that can stage, inspect, and reverse its own actions while maintaining a full audit trail. This approach highlights how agentic AI can be applied to real-world systems that require trust, compliance, and recoverability, and it provides a practical foundation for building production-grade autonomous workflows that remain safe, transparent, and human-supervised.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of an Artificial Intelligence Media Platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform boasts over 2 million monthly views, illustrating its popularity among audiences.