The AI coding landscape just received a massive shake-up. If you’ve been relying on Claude 3.5 Sonnet or GPT-4o in your dev workflows, you know the pain: great performance often comes with a bill that makes your wallet weep, or latency that breaks your flow. This article provides a technical overview of MiniMax-M2, focusing on its core design choices and capabilities, and how it changes the price-to-performance baseline for agentic coding workflows.

Branded as ‘Mini Price, Max Performance,’ MiniMax-M2 targets agentic coding workloads with around 2x the speed of leading competitors at roughly 8% of their price. The key change is not only cost efficiency, but a different computational and reasoning pattern in how the model structures and executes its “thinking” during complex tool and code workflows.

The Secret Sauce: Interleaved Thinking

The standout feature of MiniMax-M2 is its native mastery of Interleaved Thinking.

But what does that actually mean?

Most LLMs operate in a linear “Chain of Thought” (CoT) where they do all their planning upfront and then fire off a series of tool calls (like running code or searching the web). The problem? If the first tool call returns unexpected data, the initial plan becomes stale, leading to “state drift” where the model keeps hallucinating a path that no longer exists.

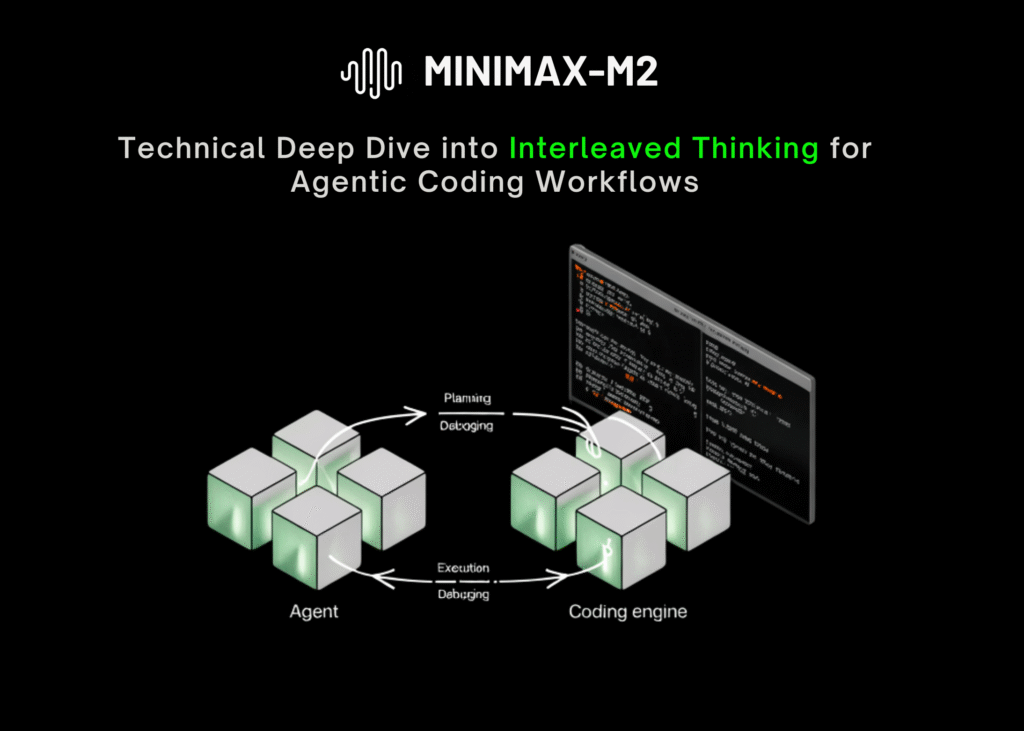

Interleaved Thinking changes the game by creating a dynamic Plan -> Act -> Reflect loop.

Instead of front-loading all the logic, MiniMax-M2 alternates between explicit reasoning and tool use. It reasons, executes a tool, reads the output, and then reasons again based on that fresh evidence. This allows the model to:

- Self-Correct: If a shell command fails, it reads the error and adjusts its next move immediately.

- Preserve State: It carries forward hypotheses and constraints between steps, preventing the “memory loss” common in long coding tasks.

- Handle Long Horizons: This approach is essential for complex agentic workflows (like building an entire app feature) where the path isn’t clear from step one.

Benchmarks show the impact is real: enabling Interleaved Thinking boosted MiniMax-M2’s score on SWE-Bench Verified by over 3% and on BrowseComp by a massive 40%.
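To make the Plan -> Act -> Reflect loop concrete, here is a minimal Python sketch. It is an illustration only, not MiniMax's implementation or API: `Step`, `run_interleaved`, `toy_policy`, and `make_toy_shell` are hypothetical names, the "policy" is a hand-written stand-in for the model, and the shell tool is faked. The point it shows is that each new thought is produced after the previous tool result has been added to the history, so a failed command can change the next action.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Step:
    thought: str                       # reasoning produced before acting
    tool: Optional[str]                # tool to call, or None when finished
    tool_input: Optional[str]
    observation: Optional[str] = None  # filled in after the tool runs


# Hypothetical stand-in for the model: maps the full history to the next step.
Policy = Callable[[List[Step]], Step]


def run_interleaved(policy: Policy,
                    tools: Dict[str, Callable[[str], str]],
                    max_steps: int = 8) -> List[Step]:
    """Alternate reasoning and tool use, feeding every result back in."""
    history: List[Step] = []
    for _ in range(max_steps):
        step = policy(history)         # reason over all earlier thoughts and results
        if step.tool is None:          # no tool requested: the task is considered done
            history.append(step)
            break
        try:
            step.observation = tools[step.tool](step.tool_input or "")
        except Exception as exc:       # errors become evidence for the next thought
            step.observation = f"ERROR: {exc}"
        history.append(step)
    return history


def make_toy_shell() -> Callable[[str], str]:
    """Fake shell tool (assumption): tests fail until dependencies are installed."""
    state = {"deps_installed": False}

    def shell(command: str) -> str:
        if command == "install-deps":
            state["deps_installed"] = True
            return "dependencies installed"
        if command == "run-tests":
            if not state["deps_installed"]:
                raise RuntimeError("ModuleNotFoundError: requests")
            return "3 passed"
        raise ValueError(f"unknown command: {command}")

    return shell


def toy_policy(history: List[Step]) -> Step:
    """Hand-written rules standing in for the model's reasoning."""
    if not history:
        return Step("Run the test suite first.", "shell", "run-tests")
    last = history[-1].observation or ""
    if last.startswith("ERROR") and "ModuleNotFoundError" in last:
        return Step("A module is missing; install dependencies.", "shell", "install-deps")
    if "installed" in last:
        return Step("Dependencies are in place; rerun the tests.", "shell", "run-tests")
    return Step("Tests pass; nothing left to do.", None, None)


trace = run_interleaved(toy_policy, {"shell": make_toy_shell()})
for s in trace:
    print(s.thought, "|", s.tool_input, "|", s.observation)
```

A plan-everything-upfront agent would have queued `run-tests` and moved on; here the failure is read back and the second step becomes `install-deps`, which is the self-correction behavior described above.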

Powered by Mixture of Experts (MoE): Speed Meets Smarts

How does MiniMax-M2 achieve low latency while being smart enough to replace a senior dev? The answer lies in its Mixture of Experts (MoE) architecture.

MiniMax-M2 is a massive model with 230 billion total parameters, but it uses a “sparse” activation technique. For any given token generation, it only activates 10 billion parameters.

This design delivers the best of both worlds:

- Massive Knowledge Base: You get the deep world knowledge and reasoning capacity of a 200B+ model.

- Blazing Speed: Inference runs with the lightness of a 10B model, enabling high throughput and low latency.

For interactive agents like Claude Code, Cursor, or Cline, this speed is non-negotiable. You need the model to think, code, and debug in real time without the “thinking…” spinner of death.
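The sparse activation idea can be sketched with a toy top-k router in plain Python. This shows the general MoE technique, not MiniMax-M2's actual router: the article does not state the number of experts or how many are selected per token, so the four scalar "experts" and `k=2` below are assumptions chosen for readability. Only the 230B total and 10B active figures come from the text.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)                                  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x: float,
                experts: Sequence[Callable[[float], float]],
                router_logits: Sequence[float],
                k: int) -> float:
    """Run only the selected experts; the others cost no compute for this token."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))


# Toy setup (assumption): four experts that just scale their input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, -1.0, 2.0]                   # router scores for one token

print(top_k_route(logits, k=2))                  # experts 1 and 3 are selected
print(moe_forward(1.0, experts, logits, k=2))

# Figures from the article: share of parameters touched per token.
TOTAL_PARAMS = 230e9
ACTIVE_PARAMS = 10e9
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"{active_fraction:.1%} of parameters active per token")
```

With the article's numbers, roughly 4% of the parameters participate in any single token, which is where the per-token compute savings come from.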

Agent & Code Native

MiniMax-M2 wasn’t just trained on text; it was developed for end-to-end developer workflows. It excels at handling robust toolchains including MCP (Model Context Protocol), shell execution, browser retrieval, and complex codebases.

It’s already being integrated into the heavy hitters of the AI coding world:

- Claude Code

- Cursor

- Cline

- Kilo Code

- Droid

The Economics: 90% Cheaper than the Competition

The pricing structure is perhaps the most aggressive we’ve seen for a model of this caliber. MiniMax is practically giving away “intelligence” compared to the current market leaders.

API Pricing (vs Claude 3.5 Sonnet):

- Input Tokens: $0.3 / Million (10% of Sonnet’s price)

- Cache Hits: $0.03 / Million (10% of Sonnet’s price)

- Output Tokens: $1.2 / Million (8% of Sonnet’s price)
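To see what these rates mean for a real bill, the sketch below prices a made-up agent workload. The M2 rates are the ones listed above; the comparison rates are not quoted from any price list but back-calculated from the article's "10%" and "8%" figures, and the token counts are hypothetical. `Pricing` and `IMPLIED_SONNET` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float      # USD per million uncached input tokens
    cache_hit_per_m: float  # USD per million cached input tokens
    output_per_m: float     # USD per million output tokens

    def cost(self, input_tokens: int, cached_tokens: int, output_tokens: int) -> float:
        """Total USD cost for the given token counts."""
        return (input_tokens * self.input_per_m
                + cached_tokens * self.cache_hit_per_m
                + output_tokens * self.output_per_m) / 1_000_000


# Rates listed in the article.
M2 = Pricing(input_per_m=0.3, cache_hit_per_m=0.03, output_per_m=1.2)

# Assumption: derived from the article's stated percentages, not a published price sheet.
IMPLIED_SONNET = Pricing(input_per_m=0.3 / 0.10,
                         cache_hit_per_m=0.03 / 0.10,
                         output_per_m=1.2 / 0.08)

# Hypothetical agent session: heavy context reuse, moderate output.
workload = dict(input_tokens=2_000_000, cached_tokens=8_000_000, output_tokens=500_000)

m2_cost = M2.cost(**workload)
sonnet_cost = IMPLIED_SONNET.cost(**workload)
print(f"M2: ${m2_cost:.2f}  implied Sonnet: ${sonnet_cost:.2f}  "
      f"ratio: {m2_cost / sonnet_cost:.1%}")
```

For this particular mix the M2 bill comes to about 9% of the implied comparison bill; the exact ratio shifts between 8% and 10% depending on how output-heavy the workload is.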

For individual developers, they offer tiered Coding Plans that undercut the market significantly:

- Starter: $10/month (Includes a $2 first-month promo).

- Pro: $20/month.

- Max: $50/month (Up to 5x the usage limit of Claude Code Max).

As if that were not enough, MiniMax recently launched a Global Developer Ambassador Program, a global initiative designed to empower independent ML and LLM developers. The program invites developers to collaborate directly with the MiniMax R&D team to shape the future.

The company is looking for developers with proven open-source experience who are already familiar with MiniMax models and active on platforms like GitHub and Hugging Face.

Key Program Highlights:

- The Incentives: Ambassadors receive complimentary access to the MiniMax-M2 Max Coding Plan, early access to unreleased video and audio models, direct feedback channels with product leads, and potential full-time career opportunities.

- The Role: Participants are expected to build public demos, create open-source tools, and provide critical feedback on APIs before public launches.

You can sign up here.

Editorial Notes

MiniMax-M2 challenges the idea that “smarter” must mean “slower” or “more expensive.” By leveraging MoE efficiency and Interleaved Thinking, it offers a compelling alternative for developers who want to run autonomous agents without bankrupting their API budget.

As we move toward a world where AI agents don’t just write code but architect entire systems, the ability to “think, act, and reflect” continuously, at a price that allows for thousands of iterations, might just make M2 the new standard for AI engineering.

Thanks to the MINIMAX AI team for the thought leadership and resources for this article. The MINIMAX AI team has supported this content.

Jean-marc is a successful AI business executive. He leads and accelerates growth for AI-powered solutions and started a computer vision company in 2006. He’s a recognized speaker at AI conferences and has an MBA from Stanford.